Quantum Mixed-State Self-Attention Network

Mar 05, 2024

Fu Chen, Qinglin Zhao, Li Feng, Chuangtao Chen, Yangbin Lin, Jianhong Lin

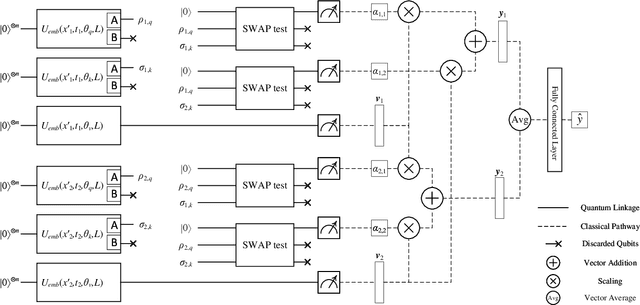

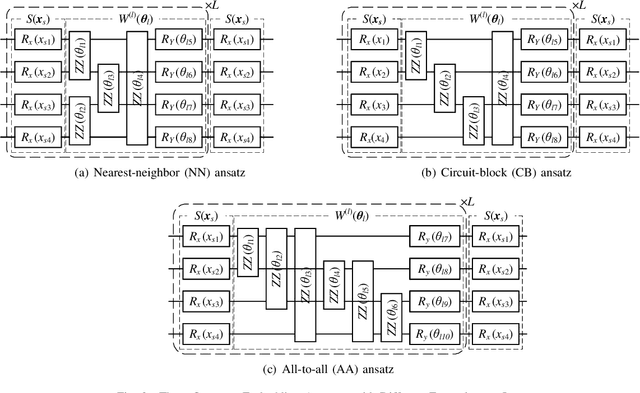

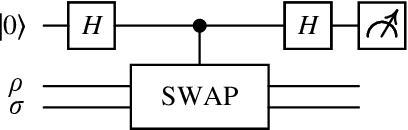

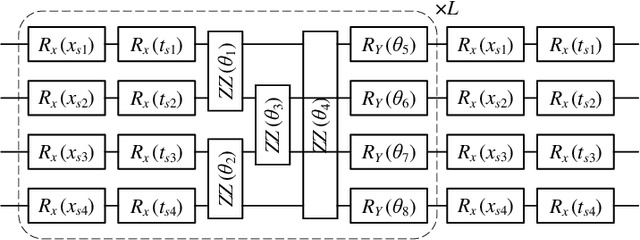

The rapid advancement of quantum computing has increasingly highlighted its potential for machine learning, particularly for natural language processing (NLP) tasks. Quantum machine learning (QML) leverages the unique capabilities of quantum computing to offer novel perspectives and methodologies for complex data processing and pattern recognition challenges. This paper introduces a novel Quantum Mixed-State Attention Network (QMSAN), which integrates the principles of quantum computing with classical machine learning algorithms, especially self-attention networks, to handle NLP tasks more efficiently and effectively. The QMSAN model employs a quantum attention mechanism based on mixed states, enabling efficient direct estimation of the similarity between queries and keys within the quantum domain and leading to more effective attention weight acquisition. Additionally, we propose an innovative quantum positional encoding scheme, implemented through fixed quantum gates within the quantum circuit, to enhance the model's accuracy. Experimental validation on various datasets demonstrates that the QMSAN model outperforms existing quantum and classical models in text classification. The QMSAN model not only significantly reduces the number of parameters but also exceeds classical self-attention networks in performance, showcasing its strong capability in data representation and information extraction. Furthermore, our study investigates the model's robustness in different quantum noise environments, showing that QMSAN remains robust under low noise levels.
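As a rough illustration of what estimating query-key similarity on mixed states could look like, the NumPy sketch below treats each query and key as a density matrix and uses the state overlap Tr(ρσ) as the attention score. The abstract does not specify the similarity measure, so the overlap, the simple row normalization, and all function names here are assumptions made for illustration. The code is also a classical simulation, not a quantum circuit, and should not be read as the authors' implementation.

```python
import numpy as np

def random_density_matrix(dim, rng):
    """Sample a random mixed state: Hermitian, positive semidefinite, trace 1."""
    a = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    rho = a @ a.conj().T               # Hermitian and positive semidefinite
    return rho / np.trace(rho).real    # normalize to unit trace

def overlap(rho, sigma):
    """Overlap Tr(rho sigma); real and non-negative for density matrices.

    Assumed similarity measure, chosen only for this sketch.
    """
    return np.trace(rho @ sigma).real

def mixed_state_attention(queries, keys, values):
    """Attention weights from pairwise overlaps of query and key states.

    queries, keys: lists of density matrices (hypothetical encodings).
    values: classical array of shape (len(keys), d).
    Rows are normalized to sum to 1; this assumes no row of overlaps is
    entirely zero, which holds for full-rank states.
    """
    scores = np.array([[overlap(q, k) for k in keys] for q in queries])
    weights = scores / scores.sum(axis=1, keepdims=True)
    return weights, weights @ values

rng = np.random.default_rng(0)
queries = [random_density_matrix(2, rng) for _ in range(3)]
keys = [random_density_matrix(2, rng) for _ in range(3)]
values = rng.normal(size=(3, 4))
weights, output = mixed_state_attention(queries, keys, values)
print(weights.round(3))
```

Because the overlap of two density matrices is never negative, the scores can be normalized directly without a softmax; a softmax would work equally well in this sketch.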

Improvements on Recommender System based on Mathematical Principles

Apr 26, 2023

Fu Chen, Junkang Zou, Lingfeng Zhou, Zekai Xu, Zhenyu Wu

In this article, we study how recommender systems are implemented: how they work and which algorithms they use. We explain these algorithms in terms of their underlying mathematical principles and identify feasible methods for improving them. Probability-based algorithms play a significant role in recommender systems, and we describe how they help increase the accuracy and speed of recommendation. We also illustrate in detail the strengths and weaknesses of two mathematical distance measures used to describe similarity.

Explainable Enterprise Credit Rating via Deep Feature Crossing Network

May 22, 2021

Weiyu Guo, Zhijiang Yang, Shu Wu, Fu Chen

Owing to their powerful ability to learn high-rank and non-linear features, deep neural networks (DNNs) are being applied to data mining and machine learning in various fields, where they exhibit higher discrimination performance than conventional methods. However, DNN-based applications are rare in enterprise credit rating tasks because most DNNs employ the "end-to-end" learning paradigm, which outputs high-rank representations of objects and predictive results without any explanation. As a result, users in the financial industry cannot understand how these high-rank representations are generated, what they mean, or how they relate to the raw inputs. Users therefore cannot determine whether the predictions provided by DNNs are reliable, and they do not trust the predictions provided by such "black box" models. In this paper, we propose a novel network that explicitly models the enterprise credit rating problem using DNNs and attention mechanisms. The proposed model realizes explainable enterprise credit ratings. Experimental results obtained on real-world enterprise datasets verify that the proposed approach achieves higher performance than conventional methods and provides insights into individual rating results and the reliability of model training.
