Neural Plasticity-Inspired Foundation Model for Observing the Earth Crossing Modalities

Mar 22, 2024 · Zhitong Xiong, Yi Wang, Fahong Zhang, Adam J. Stewart, Joëlle Hanna, Damian Borth, Ioannis Papoutsis, Bertrand Le Saux, Gustau Camps-Valls, Xiao Xiang Zhu

The development of foundation models has revolutionized our ability to interpret the Earth's surface using satellite observational data. Traditional models have been siloed, tailored to specific sensors or data types like optical, radar, and hyperspectral, each with its own unique characteristics. This specialization hinders holistic analysis that could benefit from the combined strengths of these diverse data sources. Our novel approach introduces the Dynamic One-For-All (DOFA) model, which leverages the concept of neural plasticity in brain science to adaptively integrate various data modalities into a single framework. This dynamic hypernetwork, which adjusts to different wavelengths, enables a single versatile Transformer jointly trained on data from five sensors to excel across 12 distinct Earth observation tasks, including sensors never seen during pretraining. DOFA's innovative design offers a promising leap towards more accurate, efficient, and unified Earth observation analysis, showcasing remarkable adaptability and performance in harnessing the potential of multimodal Earth observation data.
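
A minimal sketch of the wavelength-conditioned idea, in plain Python: a hypothetical hypernetwork (`WavelengthHypernet`, not DOFA's code) maps each band's central wavelength to the weights that embed that band's patch, so one set of parameters serves sensors with different band counts. The sinusoidal encoding, the single linear layer, the random parameters, and summing per-band projections into one token are all illustrative assumptions, not the published architecture.

```python
# Hypothetical illustration of a wavelength-conditioned patch embedding;
# not the DOFA implementation.
import math
import random


def wavelength_encoding(wavelength_um, dim=8):
    """Sinusoidal encoding of a band's central wavelength (assumed scheme)."""
    nm = wavelength_um * 1000.0  # micrometres -> nanometres
    enc = []
    for i in range(dim // 2):
        freq = 1.0 / (10000 ** (2 * i / dim))
        enc.append(math.sin(nm * freq))
        enc.append(math.cos(nm * freq))
    return enc


class WavelengthHypernet:
    """Hypothetical hypernetwork: one linear layer that turns a wavelength
    encoding into the weights projecting that band's patch to embed_dim."""

    def __init__(self, patch_pixels, embed_dim, enc_dim=8, seed=0):
        rng = random.Random(seed)
        self.patch_pixels = patch_pixels
        self.embed_dim = embed_dim
        self.enc_dim = enc_dim
        # Hypernetwork parameters (random here, learned in a real model):
        # (patch_pixels * embed_dim) rows, enc_dim columns.
        self.h = [[rng.gauss(0.0, 0.1) for _ in range(enc_dim)]
                  for _ in range(patch_pixels * embed_dim)]

    def band_weights(self, wavelength_um):
        """Generate an embed_dim x patch_pixels weight matrix for one band."""
        enc = wavelength_encoding(wavelength_um, self.enc_dim)
        flat = [sum(w * e for w, e in zip(row, enc)) for row in self.h]
        p = self.patch_pixels
        return [flat[k * p:(k + 1) * p] for k in range(self.embed_dim)]

    def embed_patch(self, bands, wavelengths_um):
        """Sum per-band projections into one token, for any number of bands."""
        token = [0.0] * self.embed_dim
        for patch, wl in zip(bands, wavelengths_um):
            weights = self.band_weights(wl)
            for k in range(self.embed_dim):
                token[k] += sum(w * x for w, x in zip(weights[k], patch))
        return token


# Same network, different sensors: 3 optical bands vs 4 (adds near-infrared).
net = WavelengthHypernet(patch_pixels=4, embed_dim=6)
rgb_token = net.embed_patch([[0.1] * 4, [0.2] * 4, [0.3] * 4],
                            [0.665, 0.560, 0.490])
ms_token = net.embed_patch([[0.1] * 4] * 4, [0.665, 0.560, 0.490, 0.842])
print(len(rgb_token), len(ms_token))  # 6 6
```

Because the band weights are generated rather than stored, a band at a new wavelength (here 0.842 µm) needs no new parameters in this sketch; the output token size stays fixed regardless of how many bands the sensor provides.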

Recovering Latent Confounders from High-dimensional Proxy Variables

Mar 21, 2024 · Nathan Mankovich, Homer Durand, Emiliano Diaz, Gherardo Varando, Gustau Camps-Valls

Detecting latent confounders from proxy variables is an essential problem in causal effect estimation. Previous approaches are limited to low-dimensional proxies, sorted proxies, and binary treatments. We remove these assumptions and present a novel Proxy Confounder Factorization (PCF) framework for continuous treatment effect estimation when latent confounders manifest through high-dimensional, mixed proxy variables. In the high-sample-size regime, both our two-step PCF implementation, using Independent Component Analysis (ICA-PCF), and our end-to-end implementation, using Gradient Descent (GD-PCF), achieve high correlation with the latent confounder and low absolute error in causal effect estimation on synthetic datasets. On climate data, ICA-PCF recovers four components that explain $75.9\%$ of the variance in the North Atlantic Oscillation, a known confounder of precipitation patterns in Europe. Code for our PCF implementations and experiments can be found here: https://github.com/IPL-UV/confound_it. The proposed methodology is a stepping stone towards discovering latent confounders and can be applied to many problems in disciplines dealing with high-dimensional observed proxies, e.g., spatiotemporal fields.

Causal Graph Neural Networks for Wildfire Danger Prediction

Mar 13, 2024 · Shan Zhao, Ioannis Prapas, Ilektra Karasante, Zhitong Xiong, Ioannis Papoutsis, Gustau Camps-Valls, Xiao Xiang Zhu

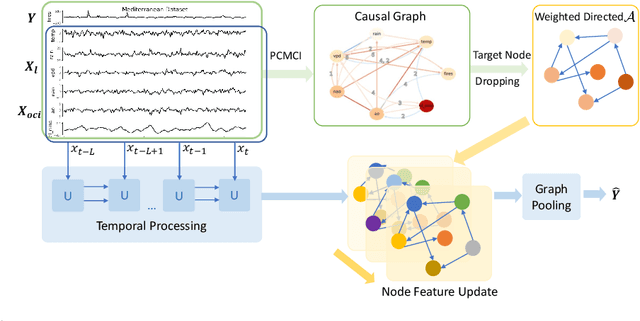

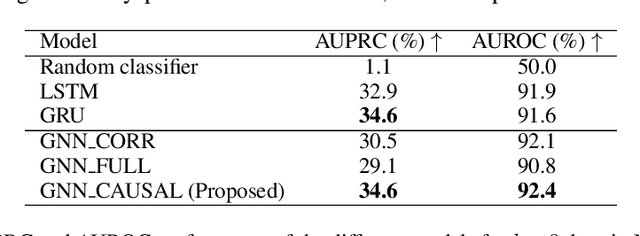

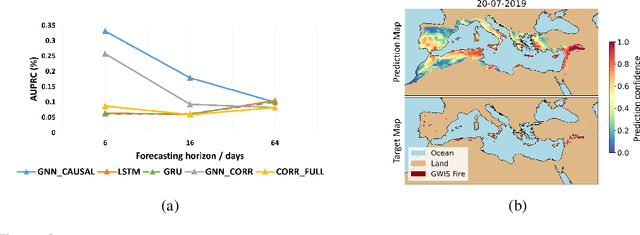

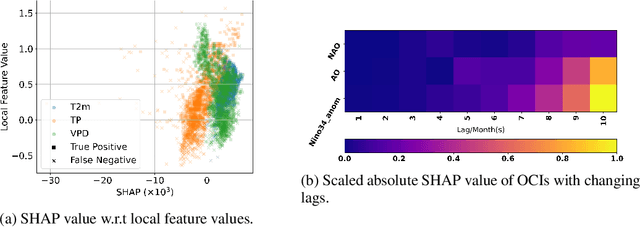

Wildfire forecasting is notoriously hard due to the complex interplay of different factors such as weather conditions, vegetation types and human activities. Deep learning models show promise in dealing with this complexity by learning directly from data. However, to inform critical decision making, we argue that we need models that are right for the right reasons; that is, the implicit rules learned should be grounded in the underlying processes driving wildfires. In that direction, we propose integrating causality with Graph Neural Networks (GNNs) that explicitly model the causal mechanism among complex variables via graph learning. The causal adjacency matrix considers the synergistic effect among variables and removes spurious links arising from highly correlated impacts. Our methodology's effectiveness is demonstrated through superior performance in forecasting wildfire patterns in the European boreal and Mediterranean biomes. The gain is especially prominent on a highly imbalanced dataset, showcasing the model's enhanced robustness in adapting to regime shifts in functional relationships. Furthermore, SHAP values from our trained model enhance our understanding of the model's inner workings.

Improving generalisation via anchor multivariate analysis

Mar 11, 2024 · Homer Durand, Gherardo Varando, Nathan Mankovich, Gustau Camps-Valls

We introduce a causal regularisation extension to anchor regression (AR) for improved out-of-distribution (OOD) generalisation. We present anchor-compatible losses, aligning with the anchor framework to ensure robustness against distribution shifts. Various multivariate analysis (MVA) algorithms, such as (Orthonormalized) PLS, RRR, and MLR, fall within the anchor framework. We observe that simple regularisation enhances robustness in OOD settings. Estimators for selected algorithms are provided, showcasing consistency and efficacy in synthetic and real-world climate science problems. The empirical validation highlights the versatility of anchor regularisation, emphasizing its compatibility with MVA approaches and its role in enhancing replicability while guarding against distribution shifts. The extended AR framework advances causal inference methodologies, addressing the need for reliable OOD generalisation.
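
For orientation, the standard anchor regression that this work extends can be sketched in a few lines. With anchor variables $A$ and the projection $P_A$ onto their span, it minimises $\|(I - P_A)(y - Xb)\|^2 + \gamma \|P_A (y - Xb)\|^2$, which equals ordinary least squares after transforming both $X$ and $y$ with $I - (1 - \sqrt{\gamma}) P_A$. The sketch below assumes a single centred predictor and a single anchor, uses simulated data, and does not cover the paper's anchor-compatible MVA losses (PLS, RRR, MLR).

```python
import random


def project(a, v):
    """P_A v: orthogonal projection of v onto the span of the anchor a."""
    scale = sum(ai * vi for ai, vi in zip(a, v)) / sum(ai * ai for ai in a)
    return [scale * ai for ai in a]


def anchor_transform(a, v, gamma):
    """Apply W = I - (1 - sqrt(gamma)) P_A, i.e. (I - P_A) v + sqrt(gamma) P_A v."""
    c = 1.0 - gamma ** 0.5
    return [vi - c * pvi for vi, pvi in zip(v, project(a, v))]


def anchor_slope(a, x, y, gamma):
    """Anchor-regression slope for one centred predictor (no intercept)."""
    xt = anchor_transform(a, x, gamma)
    yt = anchor_transform(a, y, gamma)
    return sum(u * w for u, w in zip(xt, yt)) / sum(u * u for u in xt)


# Simulated data: anchor a shifts x; hidden h confounds x and y; true effect = 1.
rng = random.Random(0)
n = 5000
a = [rng.gauss(0, 1) for _ in range(n)]
h = [rng.gauss(0, 1) for _ in range(n)]
x = [ai + hi + rng.gauss(0, 1) for ai, hi in zip(a, h)]
y = [xi + 2 * hi + rng.gauss(0, 1) for xi, hi in zip(x, h)]

for gamma in (0.0, 1.0, 100.0):
    print(gamma, round(anchor_slope(a, x, y, gamma), 2))
```

Setting $\gamma = 1$ recovers OLS (about $5/3$ on this simulated data, inflated by the hidden confounder), while large $\gamma$ pushes the slope towards the anchor-based instrumental-variable value of 1; intermediate values trade in-distribution fit against robustness to anchor-driven shifts.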

Double machine learning for causal hybrid modeling -- applications in the Earth sciences

Feb 20, 2024 · Kai-Hendrik Cohrs, Gherardo Varando, Nuno Carvalhais, Markus Reichstein, Gustau Camps-Valls

Hybrid modeling integrates machine learning with scientific knowledge with the goal of enhancing interpretability, generalization, and adherence to natural laws. However, equifinality and regularization biases make these goals difficult to achieve in hybrid modeling. This paper introduces a novel approach to estimating hybrid models via a causal inference framework, specifically employing Double Machine Learning (DML) to estimate causal effects. We showcase its use for the Earth sciences on two problems related to carbon dioxide fluxes. In the $Q_{10}$ model, we demonstrate that DML-based hybrid modeling outperforms end-to-end deep neural network (DNN) approaches in estimating causal parameters, proving efficient, robust to bias from regularization methods, and able to circumvent equifinality. Our approach, applied to carbon flux partitioning, exhibits flexibility in accommodating heterogeneous causal effects. The study emphasizes the necessity of explicitly defining causal graphs and relationships, advocating for this as a general best practice. We encourage the continued exploration of causality in hybrid models for more interpretable and trustworthy results in knowledge-guided machine learning.
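
The partialling-out idea behind DML can be sketched for a partially linear model $y = \theta t + g(x) + \varepsilon$: fit $E[t \mid x]$ and $E[y \mid x]$ on other folds, residualise the held-out fold, and regress the outcome residuals on the treatment residuals. This plain-Python sketch assumes a scalar treatment and covariate, simulated data, and one-dimensional linear regression as the nuisance model; in practice DML nuisance models are usually flexible machine-learning regressors, and this is not the paper's $Q_{10}$ setup.

```python
import random


def ols_1d(xs, ys):
    """Least-squares intercept and slope for y ~ b0 + b1 * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((xi - mx) ** 2 for xi in xs)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(xs, ys))
    b1 = sxy / sxx
    return my - b1 * mx, b1


def dml_theta(x, t, y, n_folds=2, seed=0):
    """Cross-fitted partialling-out estimate of theta in y = theta*t + g(x) + e."""
    idx = list(range(len(x)))
    random.Random(seed).shuffle(idx)
    folds = [idx[k::n_folds] for k in range(n_folds)]
    num = den = 0.0
    for k, held_out in enumerate(folds):
        train = [i for j, f in enumerate(folds) if j != k for i in f]
        # Nuisance models fitted on the other folds only: E[T|X] and E[Y|X].
        m0, m1 = ols_1d([x[i] for i in train], [t[i] for i in train])
        l0, l1 = ols_1d([x[i] for i in train], [y[i] for i in train])
        for i in held_out:
            v = t[i] - (m0 + m1 * x[i])  # treatment residual
            u = y[i] - (l0 + l1 * x[i])  # outcome residual
            num += v * u
            den += v * v
    # Final stage: regress outcome residuals on treatment residuals.
    return num / den


# Simulated data: x confounds t and y; the true causal effect of t on y is 2.
rng = random.Random(1)
n = 4000
x = [rng.gauss(0, 1) for _ in range(n)]
t = [0.8 * xi + rng.gauss(0, 1) for xi in x]
y = [2.0 * ti + 1.5 * xi + rng.gauss(0, 1) for ti, xi in zip(t, x)]

theta = dml_theta(x, t, y)
_, naive = ols_1d(t, y)  # ignores the confounder x
print(round(theta, 2), round(naive, 2))
```

On this simulated data the naive regression of $y$ on $t$ is biased upward by the confounder $x$ (population slope about 2.73), while the cross-fitted estimate lands close to the true $\theta = 2$.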

Fun with Flags: Robust Principal Directions via Flag Manifolds

Jan 08, 2024 · Nathan Mankovich, Gustau Camps-Valls, Tolga Birdal

Principal component analysis (PCA), along with its extensions to manifolds and outlier-contaminated data, has been indispensable in computer vision and machine learning. In this work, we present a unifying formalism for PCA and its variants, and introduce a framework based on the flags of linear subspaces, i.e., a hierarchy of nested linear subspaces of increasing dimension, which not only allows for a common implementation but also yields novel variants not explored previously. We begin by generalizing traditional PCA methods that either maximize variance or minimize reconstruction error. We expand these interpretations to develop a wide array of new dimensionality reduction algorithms by accounting for outliers and the data manifold. To devise a common computational approach, we recast robust and dual forms of PCA as optimization problems on flag manifolds. We then integrate tangent space approximations of principal geodesic analysis (tangent-PCA) into this flag-based framework, creating novel robust and dual geodesic PCA variations. The remarkable flexibility offered by the 'flagification' introduced here enables even more algorithmic variants identified by specific flag types. Last but not least, we propose an effective convergent solver for these flag-formulations employing the Stiefel manifold. Our empirical results on both real-world and synthetic scenarios demonstrate the superiority of our novel algorithms, especially in terms of robustness to outliers on manifolds.
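
A flag is easiest to see in ordinary PCA: the leading principal directions $v_1, v_2, \dots$ give nested subspaces $\mathrm{span}\{v_1\} \subset \mathrm{span}\{v_1, v_2\} \subset \cdots$. The sketch below computes them with power iteration and deflation in plain Python. It illustrates only the standard variance-maximising case on synthetic data; it is not the robust, dual, or geodesic flag formulations or the Stiefel-manifold solver described above.

```python
import random


def covariance(data):
    """Sample covariance matrix of a list of d-dimensional points."""
    n, d = len(data), len(data[0])
    mean = [sum(row[j] for row in data) / n for j in range(d)]
    cov = [[0.0] * d for _ in range(d)]
    for row in data:
        z = [row[j] - mean[j] for j in range(d)]
        for i in range(d):
            for j in range(d):
                cov[i][j] += z[i] * z[j] / (n - 1)
    return cov


def top_eigenpair(m, iters=500, seed=0):
    """Largest eigenvalue/eigenvector of a symmetric PSD matrix by power iteration."""
    d = len(m)
    rng = random.Random(seed)
    v = [rng.gauss(0, 1) for _ in range(d)]
    for _ in range(iters):
        w = [sum(m[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sum(wi * wi for wi in w) ** 0.5
        v = [wi / norm for wi in w]
    lam = sum(v[i] * m[i][j] * v[j] for i in range(d) for j in range(d))
    return lam, v


def pca_flag(data, k):
    """First k principal directions v1..vk; span{v1} < span{v1, v2} < ... is a flag."""
    m = covariance(data)
    d = len(m)
    basis = []
    for _ in range(k):
        lam, v = top_eigenpair(m)
        basis.append(v)
        # Deflate so the next power iteration finds the next direction.
        m = [[m[i][j] - lam * v[i] * v[j] for j in range(d)] for i in range(d)]
    return basis


# Synthetic 3-D data with axis variances of roughly 9, 4 and 1.
rng = random.Random(2)
data = [[3 * rng.gauss(0, 1), 2 * rng.gauss(0, 1), rng.gauss(0, 1)]
        for _ in range(2000)]
v1, v2 = pca_flag(data, 2)
print([round(c, 2) for c in v1], [round(c, 2) for c in v2])
```

Here $v_1$ aligns with the first axis and $v_2$ with the second (up to sign), and the two are orthogonal, so their spans form a two-step flag in $\mathbb{R}^3$.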

Causality and Explainability for Trustworthy Integrated Pest Management

Dec 07, 2023 · Ilias Tsoumas, Vasileios Sitokonstantinou, Georgios Giannarakis, Evagelia Lampiri, Christos Athanassiou, Gustau Camps-Valls, Charalampos Kontoes, Ioannis Athanasiadis

Pesticides serve as a common tool in agricultural pest control but significantly contribute to the climate crisis. To combat this, Integrated Pest Management (IPM) stands as a climate-smart alternative. Despite its potential, IPM faces low adoption rates due to farmers' skepticism about its effectiveness. To address this challenge, we introduce an advanced data analysis framework tailored to enhance IPM adoption. Our framework provides i) robust pest population predictions across diverse environments with invariant and causal learning, ii) interpretable pest presence predictions using transparent models, iii) actionable advice through counterfactual explanations for in-season IPM interventions, iv) field-specific treatment effect estimations, and v) assessments of the effectiveness of our advice using causal inference. By incorporating these features, our framework aims to alleviate skepticism and encourage wider adoption of IPM practices among farmers.

Evaluating the Impact of Humanitarian Aid on Food Security

Oct 17, 2023 · Jordi Cerdà-Bautista, José María Tárraga, Vasileios Sitokonstantinou, Gustau Camps-Valls

In the face of climate change-induced droughts, vulnerable regions encounter severe threats to food security, demanding urgent humanitarian assistance. This paper introduces a causal inference framework for the Horn of Africa, aiming to assess the impact of cash-based interventions on food crises. Our contributions encompass identifying causal relationships within the food security system, harmonizing a comprehensive database, and estimating the causal effect of humanitarian interventions on malnutrition. Our results revealed no significant effects, likely due to limited sample size, suboptimal data quality, and an imperfect causal graph resulting from our limited understanding of multidisciplinary systems like food security. This underscores the need to enhance data collection and refine causal models with domain experts for more effective future interventions and policies, improving transparency and accountability in humanitarian aid.

Graphs in State-Space Models for Granger Causality in Climate Science

Jul 20, 2023 · Víctor Elvira, Émilie Chouzenoux, Jordi Cerdà, Gustau Camps-Valls

Granger causality (GC) is often not considered an actual form of causality. Still, it is arguably the most widely used method to assess the predictability of one time series from another. Granger causality has been widely used in many applied disciplines, from neuroscience and econometrics to Earth sciences. We revisit GC under a graphical perspective of state-space models. For that, we use GraphEM, a recently presented expectation-maximisation algorithm for estimating the linear matrix operator in the state equation of a linear-Gaussian state-space model. Lasso regularisation is included in the M-step, which is solved using a proximal splitting Douglas-Rachford algorithm. Experiments in toy examples and challenging climate problems illustrate the benefits of the proposed model and inference technique over standard Granger causality methods.
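
GraphEM itself (EM over a linear-Gaussian state-space model with a lasso-penalised M-step) is beyond a short example, but the standard pairwise Granger test it is compared against fits in a few lines: $x$ Granger-causes $y$ if adding lagged $x$ to an autoregression of $y$ significantly reduces the residual sum of squares. This sketch assumes one lag, zero-mean series with no intercept, and simulated data; it is not the paper's method.

```python
import random


def rss(cols, target):
    """Residual sum of squares of a no-intercept least-squares fit on 1 or 2 regressors."""
    if len(cols) == 1:
        (a,) = cols
        b = sum(ai * ti for ai, ti in zip(a, target)) / sum(ai * ai for ai in a)
        return sum((ti - b * ai) ** 2 for ai, ti in zip(a, target))
    a, c = cols
    saa = sum(ai * ai for ai in a)
    scc = sum(ci * ci for ci in c)
    sac = sum(ai * ci for ai, ci in zip(a, c))
    sat = sum(ai * ti for ai, ti in zip(a, target))
    sct = sum(ci * ti for ci, ti in zip(c, target))
    det = saa * scc - sac * sac
    # Solve the 2x2 normal equations by Cramer's rule.
    b1 = (sat * scc - sac * sct) / det
    b2 = (saa * sct - sac * sat) / det
    return sum((ti - b1 * ai - b2 * ci) ** 2 for ai, ci, ti in zip(a, c, target))


def granger_f(x, y):
    """F statistic for 'x Granger-causes y' with one lag of each series."""
    target, y_lag, x_lag = y[1:], y[:-1], x[:-1]
    rss_restricted = rss([y_lag], target)   # y_t ~ y_{t-1}
    rss_full = rss([y_lag, x_lag], target)  # y_t ~ y_{t-1} + x_{t-1}
    n_obs, n_params = len(target), 2
    # One restriction (q = 1), so the numerator is just the RSS reduction.
    return (rss_restricted - rss_full) / (rss_full / (n_obs - n_params))


# Simulated pair in which x drives y with a one-step delay, but not vice versa.
rng = random.Random(3)
x, y = [0.0], [0.0]
for _ in range(1000):
    x.append(0.5 * x[-1] + rng.gauss(0, 1))
    y.append(0.5 * y[-1] + 0.8 * x[-2] + rng.gauss(0, 1))
print(round(granger_f(x, y), 1), round(granger_f(y, x), 1))
```

The first statistic (x to y) should be large, while the second (y to x) should be of order 1, as expected under the null hypothesis of an $F(1, n-2)$ distribution.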

TeleViT: Teleconnection-driven Transformers Improve Subseasonal to Seasonal Wildfire Forecasting

Jun 19, 2023 · Ioannis Prapas, Nikolaos Ioannis Bountos, Spyros Kondylatos, Dimitrios Michail, Gustau Camps-Valls, Ioannis Papoutsis

Wildfires are increasingly exacerbated as a result of climate change, necessitating advanced proactive measures for effective mitigation. It is important to forecast wildfires weeks and months in advance to plan forest fuel management, resource procurement and allocation. To achieve such accurate long-term forecasts at a global scale, it is crucial to employ models that account for the Earth system's inherent spatio-temporal interactions, such as memory effects and teleconnections. We propose a teleconnection-driven vision transformer (TeleViT), capable of treating the Earth as one interconnected system, integrating fine-grained local-scale inputs with global-scale inputs, such as climate indices and coarse-grained global variables. Through comprehensive experimentation, we demonstrate the superiority of TeleViT in accurately predicting global burned area patterns for various forecasting windows, up to four months in advance. The gain is especially pronounced in larger forecasting windows, demonstrating the improved ability of deep learning models that exploit teleconnections to capture Earth system dynamics. Code available at https://github.com/Orion-Ai-Lab/TeleViT.
