Hsueh-Ti Derek Liu

Learning Smooth Neural Functions via Lipschitz Regularization

Feb 16, 2022

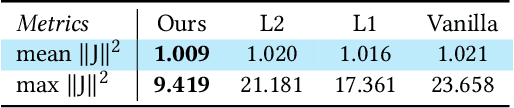

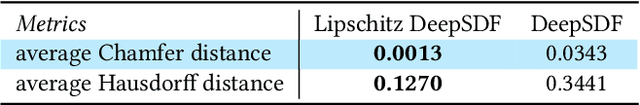

Neural implicit fields have recently emerged as a useful representation for 3D shapes. These fields are commonly represented as neural networks which map latent descriptors and 3D coordinates to implicit function values. The latent descriptor of a neural field acts as a deformation handle for the 3D shape it represents. Thus, smoothness with respect to this descriptor is paramount for performing shape-editing operations. In this work, we introduce a novel regularization designed to encourage smooth latent spaces in neural fields by penalizing the upper bound on the field's Lipschitz constant. Compared with prior Lipschitz regularized networks, ours is computationally fast, can be implemented in four lines of code, and requires minimal hyperparameter tuning for geometric applications. We demonstrate the effectiveness of our approach on shape interpolation and extrapolation as well as partial shape reconstruction from 3D point clouds, showing both qualitative and quantitative improvements over existing state-of-the-art and non-regularized baselines.

Neural Subdivision

May 04, 2020

This paper introduces Neural Subdivision, a novel framework for data-driven coarse-to-fine geometry modeling. During inference, our method takes a coarse triangle mesh as input and recursively subdivides it to a finer geometry by applying the fixed topological updates of Loop Subdivision, but predicting vertex positions using a neural network conditioned on the local geometry of a patch. This approach enables us to learn complex non-linear subdivision schemes, beyond simple linear averaging used in classical techniques. One of our key contributions is a novel self-supervised training setup that only requires a set of high-resolution meshes for learning network weights. For any training shape, we stochastically generate diverse low-resolution discretizations of coarse counterparts, while maintaining a bijective mapping that prescribes the exact target position of every new vertex during the subdivision process. This leads to a very efficient and accurate loss function for conditional mesh generation, and enables us to train a method that generalizes across discretizations and favors preserving the manifold structure of the output. During training we optimize for the same set of network weights across all local mesh patches, thus providing an architecture that is not constrained to a specific input mesh, fixed genus, or category. Our network encodes patch geometry in a local frame in a rotation- and translation-invariant manner. Jointly, these design choices enable our method to generalize well, and we demonstrate that even when trained on a single high-resolution mesh our method generates reasonable subdivisions for novel shapes.

* 16 pages

Adversarial Geometry and Lighting using a Differentiable Renderer

Aug 08, 2018

Many machine learning classifiers are vulnerable to adversarial attacks, inputs with perturbations designed to intentionally trigger misclassification. Modern adversarial methods either directly alter pixel colors, or "paint" colors onto a 3D shapes. We propose novel adversarial attacks that directly alter the geometry of 3D objects and/or manipulate the lighting in a virtual scene. We leverage a novel differentiable renderer that is efficient to evaluate and analytically differentiate. Our renderer generates images realistic enough for correct classification by common pre-trained models, and we use it to design physical adversarial examples that consistently fool these models. We conduct qualitative and quantitate experiments to validate our adversarial geometry and adversarial lighting attack capabilities.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge