Nonparametric Variational Regularisation of Pretrained Transformers

Dec 01, 2023Fabio Fehr, James Henderson

The current paradigm of large-scale pre-training and fine-tuning Transformer large language models has lead to significant improvements across the board in natural language processing. However, such large models are susceptible to overfitting to their training data, and as a result the models perform poorly when the domain changes. Also, due to the model's scale, the cost of fine-tuning the model to the new domain is large. Nonparametric Variational Information Bottleneck (NVIB) has been proposed as a regulariser for training cross-attention in Transformers, potentially addressing the overfitting problem. We extend the NVIB framework to replace all types of attention functions in Transformers, and show that existing pretrained Transformers can be reinterpreted as Nonparametric Variational (NV) models using a proposed identity initialisation. We then show that changing the initialisation introduces a novel, information-theoretic post-training regularisation in the attention mechanism, which improves out-of-domain generalisation without any training. This success supports the hypothesis that pretrained Transformers are implicitly NV Bayesian models.

Transformers as Graph-to-Graph Models

Oct 27, 2023James Henderson, Alireza Mohammadshahi, Andrei C. Coman, Lesly Miculicich

We argue that Transformers are essentially graph-to-graph models, with sequences just being a special case. Attention weights are functionally equivalent to graph edges. Our Graph-to-Graph Transformer architecture makes this ability explicit, by inputting graph edges into the attention weight computations and predicting graph edges with attention-like functions, thereby integrating explicit graphs into the latent graphs learned by pretrained Transformers. Adding iterative graph refinement provides a joint embedding of input, output, and latent graphs, allowing non-autoregressive graph prediction to optimise the complete graph without any bespoke pipeline or decoding strategy. Empirical results show that this architecture achieves state-of-the-art accuracies for modelling a variety of linguistic structures, integrating very effectively with the latent linguistic representations learned by pretraining.

Learning to Abstract with Nonparametric Variational Information Bottleneck

Oct 26, 2023Melika Behjati, Fabio Fehr, James Henderson

Learned representations at the level of characters, sub-words, words and sentences, have each contributed to advances in understanding different NLP tasks and linguistic phenomena. However, learning textual embeddings is costly as they are tokenization specific and require different models to be trained for each level of abstraction. We introduce a novel language representation model which can learn to compress to different levels of abstraction at different layers of the same model. We apply Nonparametric Variational Information Bottleneck (NVIB) to stacked Transformer self-attention layers in the encoder, which encourages an information-theoretic compression of the representations through the model. We find that the layers within the model correspond to increasing levels of abstraction and that their representations are more linguistically informed. Finally, we show that NVIB compression results in a model which is more robust to adversarial perturbations.

GADePo: Graph-Assisted Declarative Pooling Transformers for Document-Level Relation Extraction

Aug 28, 2023Andrei C. Coman, Christos Theodoropoulos, Marie-Francine Moens, James Henderson

Document-level relation extraction aims to identify relationships between entities within a document. Current methods rely on text-based encoders and employ various hand-coded pooling heuristics to aggregate information from entity mentions and associated contexts. In this paper, we replace these rigid pooling functions with explicit graph relations by leveraging the intrinsic graph processing capabilities of the Transformer model. We propose a joint text-graph Transformer model, and a graph-assisted declarative pooling (GADePo) specification of the input which provides explicit and high-level instructions for information aggregation. This allows the pooling process to be guided by domain-specific knowledge or desired outcomes but still learned by the Transformer, leading to more flexible and customizable pooling strategies. We extensively evaluate our method across diverse datasets and models, and show that our approach yields promising results that are comparable to those achieved by the hand-coded pooling functions.

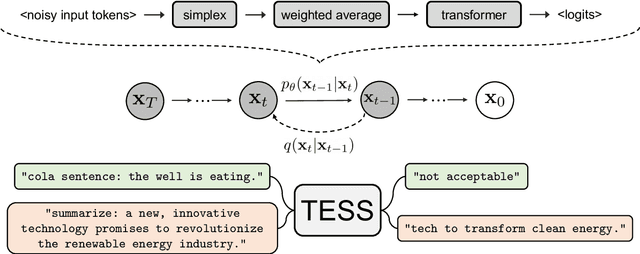

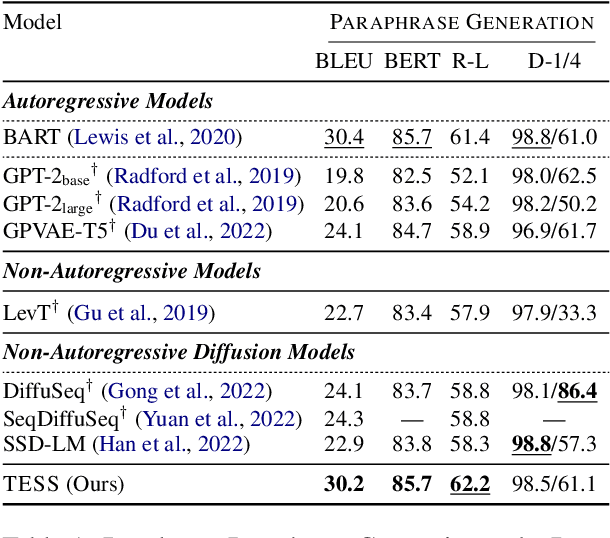

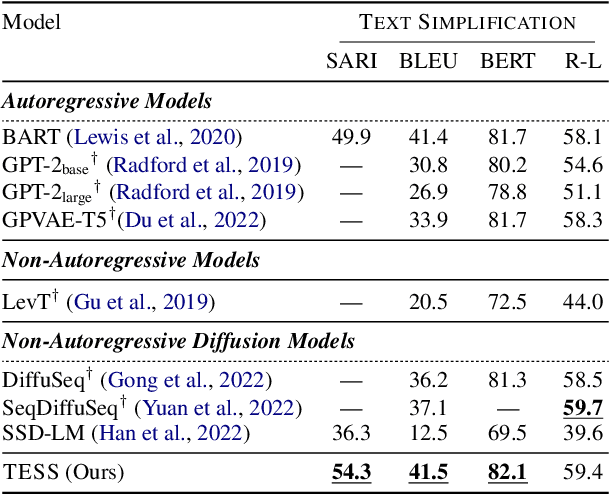

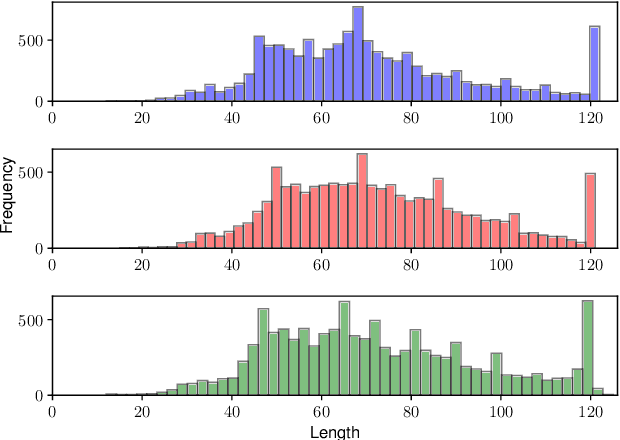

TESS: Text-to-Text Self-Conditioned Simplex Diffusion

May 15, 2023Rabeeh Karimi Mahabadi, Jaesung Tae, Hamish Ivison, James Henderson, Iz Beltagy, Matthew E. Peters, Arman Cohan

Diffusion models have emerged as a powerful paradigm for generation, obtaining strong performance in various domains with continuous-valued inputs. Despite the promises of fully non-autoregressive text generation, applying diffusion models to natural language remains challenging due to its discrete nature. In this work, we propose Text-to-text Self-conditioned Simplex Diffusion (TESS), a text diffusion model that is fully non-autoregressive, employs a new form of self-conditioning, and applies the diffusion process on the logit simplex space rather than the typical learned embedding space. Through extensive experiments on natural language understanding and generation tasks including summarization, text simplification, paraphrase generation, and question generation, we demonstrate that TESS outperforms state-of-the-art non-autoregressive models and is competitive with pretrained autoregressive sequence-to-sequence models.

RQUGE: Reference-Free Metric for Evaluating Question Generation by Answering the Question

Nov 09, 2022Alireza Mohammadshahi, Thomas Scialom, Majid Yazdani, Pouya Yanki, Angela Fan, James Henderson, Marzieh Saeidi

Existing metrics for evaluating the quality of automatically generated questions such as BLEU, ROUGE, BERTScore, and BLEURT compare the reference and predicted questions, providing a high score when there is a considerable lexical overlap or semantic similarity between the candidate and the reference questions. This approach has two major shortcomings. First, we need expensive human-provided reference questions. Second, it penalises valid questions that may not have high lexical or semantic similarity to the reference questions. In this paper, we propose a new metric, RQUGE, based on the answerability of the candidate question given the context. The metric consists of a question-answering and a span scorer module, in which we use pre-trained models from the existing literature, and therefore, our metric can be used without further training. We show that RQUGE has a higher correlation with human judgment without relying on the reference question. RQUGE is shown to be significantly more robust to several adversarial corruptions. Additionally, we illustrate that we can significantly improve the performance of QA models on out-of-domain datasets by fine-tuning on the synthetic data generated by a question generation model and re-ranked by RQUGE.

A Variational AutoEncoder for Transformers with Nonparametric Variational Information Bottleneck

Aug 12, 2022James Henderson, Fabio Fehr

We propose a VAE for Transformers by developing a variational information bottleneck regulariser for Transformer embeddings. We formalise the embedding space of Transformer encoders as mixture probability distributions, and use Bayesian nonparametrics to derive a nonparametric variational information bottleneck (NVIB) for such attention-based embeddings. The variable number of mixture components supported by nonparametric methods captures the variable number of vectors supported by attention, and the exchangeability of our nonparametric distributions captures the permutation invariance of attention. This allows NVIB to regularise the number of vectors accessible with attention, as well as the amount of information in individual vectors. By regularising the cross-attention of a Transformer encoder-decoder with NVIB, we propose a nonparametric variational autoencoder (NVAE). Initial experiments on training a NVAE on natural language text show that the induced embedding space has the desired properties of a VAE for Transformers.

Multilingual Extraction and Categorization of Lexical Collocations with Graph-aware Transformers

May 23, 2022Luis Espinosa-Anke, Alexander Shvets, Alireza Mohammadshahi, James Henderson, Leo Wanner

Recognizing and categorizing lexical collocations in context is useful for language learning, dictionary compilation and downstream NLP. However, it is a challenging task due to the varying degrees of frozenness lexical collocations exhibit. In this paper, we put forward a sequence tagging BERT-based model enhanced with a graph-aware transformer architecture, which we evaluate on the task of collocation recognition in context. Our results suggest that explicitly encoding syntactic dependencies in the model architecture is helpful, and provide insights on differences in collocation typification in English, Spanish and French.

What Do Compressed Multilingual Machine Translation Models Forget?

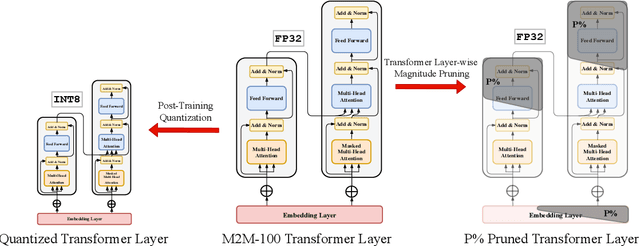

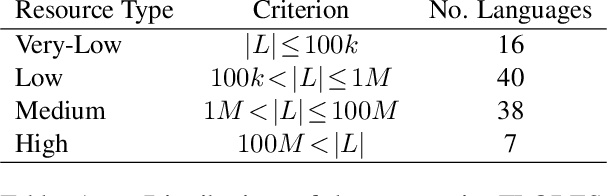

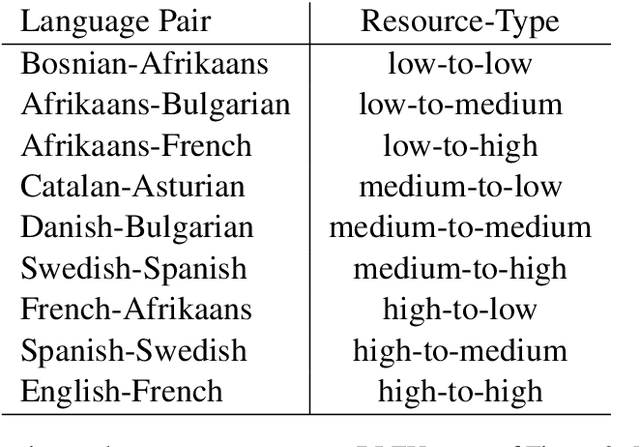

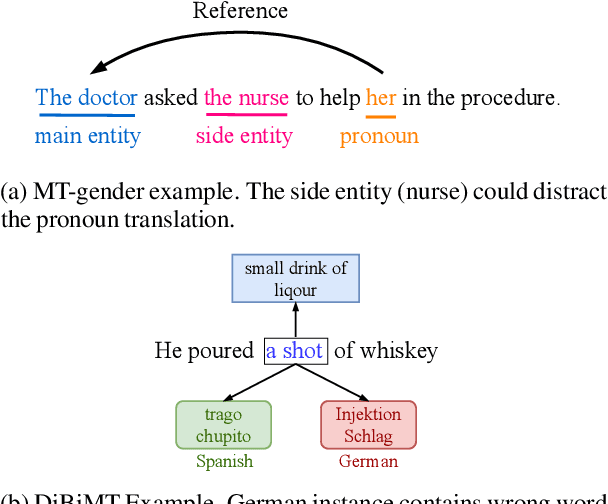

May 22, 2022Alireza Mohammadshahi, Vassilina Nikoulina, Alexandre Berard, Caroline Brun, James Henderson, Laurent Besacier

Recently, very large pre-trained models achieve state-of-the-art results in various natural language processing (NLP) tasks, but their size makes it more challenging to apply them in resource-constrained environments. Compression techniques allow to drastically reduce the size of the model and therefore its inference time with negligible impact on top-tier metrics. However, the general performance hides a drastic performance drop on under-represented features, which could result in the amplification of biases encoded by the model. In this work, we analyze the impacts of compression methods on Multilingual Neural Machine Translation models (MNMT) for various language groups and semantic features by extensive analysis of compressed models on different NMT benchmarks, e.g. FLORES-101, MT-Gender, and DiBiMT. Our experiments show that the performance of under-represented languages drops significantly, while the average BLEU metric slightly decreases. Interestingly, the removal of noisy memorization with the compression leads to a significant improvement for some medium-resource languages. Finally, we demonstrate that the compression amplifies intrinsic gender and semantic biases, even in high-resource languages.

PERFECT: Prompt-free and Efficient Few-shot Learning with Language Models

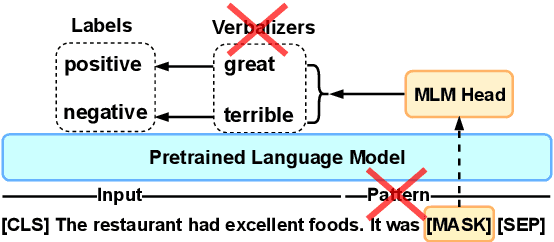

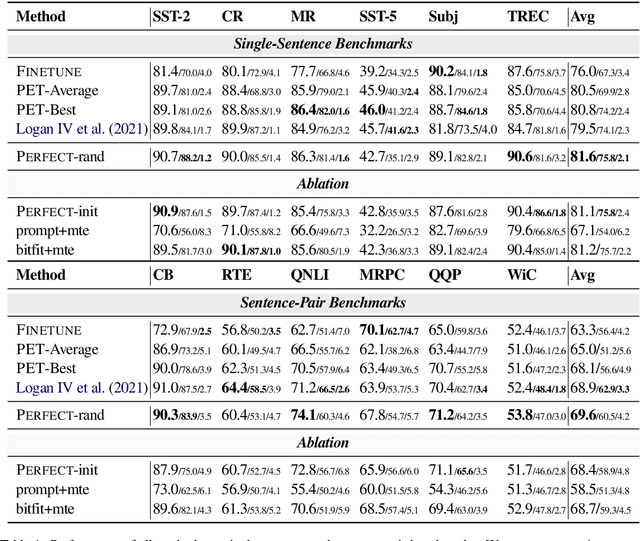

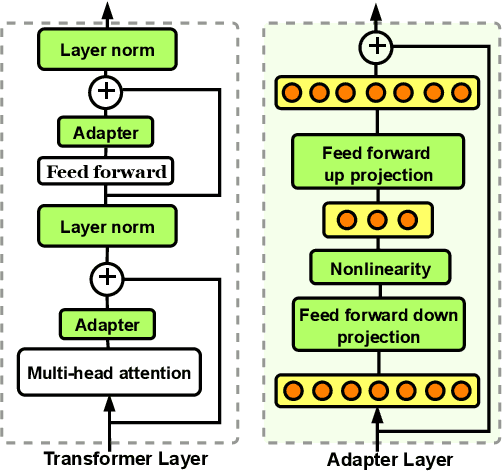

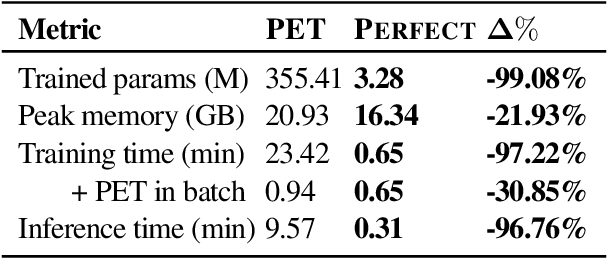

Apr 03, 2022Rabeeh Karimi Mahabadi, Luke Zettlemoyer, James Henderson, Marzieh Saeidi, Lambert Mathias, Veselin Stoyanov, Majid Yazdani

Current methods for few-shot fine-tuning of pretrained masked language models (PLMs) require carefully engineered prompts and verbalizers for each new task to convert examples into a cloze-format that the PLM can score. In this work, we propose PERFECT, a simple and efficient method for few-shot fine-tuning of PLMs without relying on any such handcrafting, which is highly effective given as few as 32 data points. PERFECT makes two key design choices: First, we show that manually engineered task prompts can be replaced with task-specific adapters that enable sample-efficient fine-tuning and reduce memory and storage costs by roughly factors of 5 and 100, respectively. Second, instead of using handcrafted verbalizers, we learn new multi-token label embeddings during fine-tuning, which are not tied to the model vocabulary and which allow us to avoid complex auto-regressive decoding. These embeddings are not only learnable from limited data but also enable nearly 100x faster training and inference. Experiments on a wide range of few-shot NLP tasks demonstrate that PERFECT, while being simple and efficient, also outperforms existing state-of-the-art few-shot learning methods. Our code is publicly available at https://github.com/rabeehk/perfect.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge