ELITR-Bench: A Meeting Assistant Benchmark for Long-Context Language Models

Mar 29, 2024 · Thibaut Thonet, Jos Rozen, Laurent Besacier

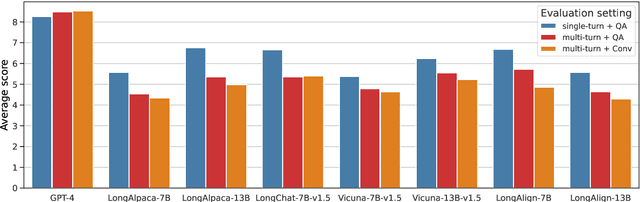

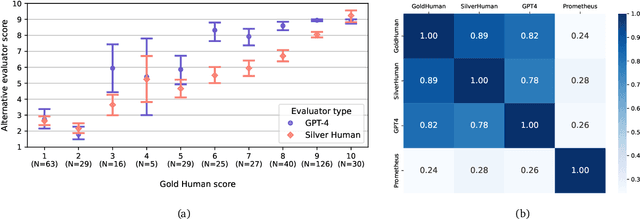

Research on Large Language Models (LLMs) has recently seen increasing interest in extending models' context size to better capture dependencies within long documents. While benchmarks have been proposed to assess long-range abilities, existing efforts have primarily considered generic tasks that are not necessarily aligned with real-world applications. In contrast, our work proposes a new benchmark for long-context LLMs focused on a practical meeting assistant scenario. In this scenario, the long contexts consist of transcripts obtained by automatic speech recognition, presenting unique challenges for LLMs due to the inherent noisiness and oral nature of such data. Our benchmark, named ELITR-Bench, augments the existing ELITR corpus' transcripts with 271 manually crafted questions and their ground-truth answers. Our experiments with recent long-context LLMs on ELITR-Bench highlight a gap between open-source and proprietary models, especially when questions are asked sequentially within a conversation. We also provide a thorough analysis of our GPT-4-based evaluation method, including insights from a crowdsourcing study. Our findings suggest that while GPT-4's evaluation scores are correlated with those of human judges, its ability to differentiate among more than three score levels may be limited.

LeBenchmark 2.0: a Standardized, Replicable and Enhanced Framework for Self-supervised Representations of French Speech

Sep 11, 2023 · Titouan Parcollet, Ha Nguyen, Solène Evain, Marcely Zanon Boito, Adrien Pupier, Salima Mdhaffar, Hang Le, Sina Alisamir, Natalia Tomashenko, Marco Dinarelli, Shucong Zhang, Alexandre Allauzen, Maximin Coavoux, Yannick Estève, Mickael Rouvier, Jérôme Goulian, Benjamin Lecouteux, François Portet, Solange Rossato, Fabien Ringeval, Didier Schwab, Laurent Besacier

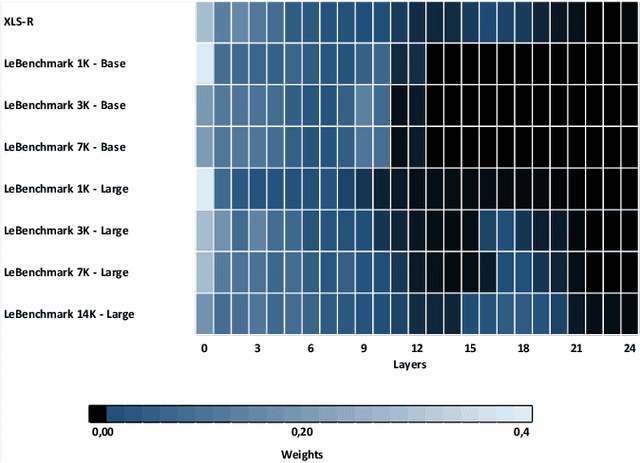

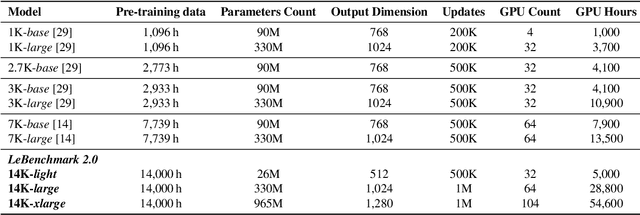

Self-supervised learning (SSL) is at the origin of unprecedented improvements in many different domains, including computer vision and natural language processing. Speech processing has benefited greatly from SSL, as most current tasks in the domain are now approached with pre-trained models. This work introduces LeBenchmark 2.0, an open-source framework for assessing and building SSL-equipped French speech technologies. It includes documented, large-scale and heterogeneous corpora with up to 14,000 hours of speech, ten pre-trained SSL wav2vec 2.0 models containing from 26 million to one billion learnable parameters shared with the community, and an evaluation protocol made of six downstream tasks to complement existing benchmarks. LeBenchmark 2.0 also presents unique perspectives on pre-trained SSL models for speech, investigating frozen versus fine-tuned downstream models and task-agnostic versus task-specific pre-trained models, as well as discussing the carbon footprint of large-scale model training.

Encoding Sentence Position in Context-Aware Neural Machine Translation with Concatenation

Feb 13, 2023 · Lorenzo Lupo, Marco Dinarelli, Laurent Besacier

Context-aware translation can be achieved by processing a concatenation of consecutive sentences with the standard translation approach. This paper investigates the intuitive idea of adopting segment embeddings for this task to help the Transformer discern the position of each sentence in the concatenation sequence. We compare various segment embeddings and propose novel methods to encode sentence position into token representations, showing that they do not benefit the vanilla concatenation approach except in a specific setting.
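The segment-embedding idea can be illustrated with a minimal sketch. It assumes (our assumption, not necessarily the paper's exact scheme) that each token receives the index of its sentence counted backwards from the current sentence, and that a learned embedding for that index is added element-wise to the token embedding. The function names and the toy embedding table are hypothetical.

```python
from typing import List

Vector = List[float]


def segment_ids(sentences: List[List[str]]) -> List[int]:
    """Give each token the position of its sentence, counted backwards so
    the current (last) sentence is 0, its predecessor 1, and so on."""
    n = len(sentences)
    ids: List[int] = []
    for i, sentence in enumerate(sentences):
        ids.extend([n - 1 - i] * len(sentence))
    return ids


def add_segment_embeddings(
    token_vecs: List[Vector], seg_ids: List[int], table: List[Vector]
) -> List[Vector]:
    """Add the segment embedding table[s] element-wise to each token vector."""
    return [
        [t + e for t, e in zip(vec, table[s])]
        for vec, s in zip(token_vecs, seg_ids)
    ]


# Two context sentences followed by the current sentence.
window = [["hello", "there"], ["how", "are", "you"], ["fine"]]
ids = segment_ids(window)
print(ids)  # [2, 2, 1, 1, 1, 0]

# Hypothetical 2-dimensional token embeddings and segment table.
tokens = [[0.0, 1.0]] * len(ids)
table = [[0.5, 0.0], [0.25, 0.0], [0.125, 0.0]]
print(add_segment_embeddings(tokens, ids, table)[0])  # [0.125, 1.0]
```

In a real Transformer the window would be subword-tokenized, typically with separator tokens between sentences, and the table would be a trained parameter; the sketch only shows the mechanism, which, per the abstract, does not by itself benefit vanilla concatenation outside a specific setting.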

Focused Concatenation for Context-Aware Neural Machine Translation

Oct 24, 2022 · Lorenzo Lupo, Marco Dinarelli, Laurent Besacier

A straightforward approach to context-aware neural machine translation consists of feeding the standard encoder-decoder architecture with a window of consecutive sentences, formed by the current sentence and a number of sentences from its context concatenated to it. In this work, we propose an improved concatenation approach that encourages the model to focus on the translation of the current sentence by discounting the loss generated by the target context. We also propose an additional improvement that strengthens the notion of sentence boundaries and of relative sentence distance, facilitating model compliance with the context-discounted objective. We evaluate our approach with both average translation quality metrics and contrastive test sets for the translation of inter-sentential discourse phenomena, showing its superiority over the vanilla concatenation approach and other sophisticated context-aware systems.
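The context-discounted objective can be sketched as a weighted sum of per-token losses. This assumes a single discount factor, here called `beta` (a hypothetical name), applied to tokens of the target context, while tokens of the current sentence keep weight 1; setting `beta = 1` recovers the vanilla concatenation loss. The paper's exact weighting and normalization may differ.

```python
from typing import List


def context_discounted_loss(
    token_losses: List[float], is_current: List[bool], beta: float = 0.5
) -> float:
    """Sum per-token losses, scaling target-context tokens by beta so that
    the current sentence dominates the training signal."""
    if len(token_losses) != len(is_current):
        raise ValueError("token_losses and is_current must have the same length")
    if not 0.0 <= beta <= 1.0:
        raise ValueError("beta must lie in [0, 1]")
    current = sum(l for l, c in zip(token_losses, is_current) if c)
    context = sum(l for l, c in zip(token_losses, is_current) if not c)
    return current + beta * context


# Two context tokens (losses 1.0 and 2.0) and one current-sentence token (4.0).
losses = [1.0, 2.0, 4.0]
mask = [False, False, True]
print(context_discounted_loss(losses, mask, beta=0.5))  # 5.5
print(context_discounted_loss(losses, mask, beta=1.0))  # 7.0 (vanilla loss)
```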

ASR-Generated Text for Language Model Pre-training Applied to Speech Tasks

Jul 05, 2022 · Valentin Pelloin, Franck Dary, Nicolas Herve, Benoit Favre, Nathalie Camelin, Antoine Laurent, Laurent Besacier

We aim to improve spoken language modeling (LM) using a very large amount of automatically transcribed speech. We leverage the INA (French National Audiovisual Institute) collection and obtain 19GB of text after applying ASR to 350,000 hours of diverse TV shows. From this, spoken language models are trained either by fine-tuning an existing LM (FlauBERT) or by training an LM from scratch. The new models (FlauBERT-Oral) are shared with the community and evaluated on three downstream tasks: spoken language understanding, classification of TV shows, and speech syntactic parsing. Results show that FlauBERT-Oral can be beneficial compared to its initial FlauBERT version, demonstrating that, despite its inherently noisy nature, ASR-generated text can be used to build spoken language models.

BERT, can HE predict contrastive focus? Predicting and controlling prominence in neural TTS using a language model

Jul 04, 2022 · Brooke Stephenson, Laurent Besacier, Laurent Girin, Thomas Hueber

Several recent studies have tested the use of transformer language model representations to infer prosodic features for text-to-speech synthesis (TTS). While these studies have explored prosody in general, in this work we look specifically at the prediction of contrastive focus on personal pronouns. This is a particularly challenging task, as it often requires semantic, discursive and/or pragmatic knowledge to predict correctly. We collect a corpus of utterances containing contrastive focus and evaluate the accuracy, on these samples, of a BERT model fine-tuned to predict quantized acoustic prominence features. We also investigate how past utterances can provide relevant information for this prediction. Furthermore, we evaluate the controllability of pronoun prominence in a TTS model conditioned on acoustic prominence features.

What Do Compressed Multilingual Machine Translation Models Forget?

May 22, 2022 · Alireza Mohammadshahi, Vassilina Nikoulina, Alexandre Bérard, Caroline Brun, James Henderson, Laurent Besacier

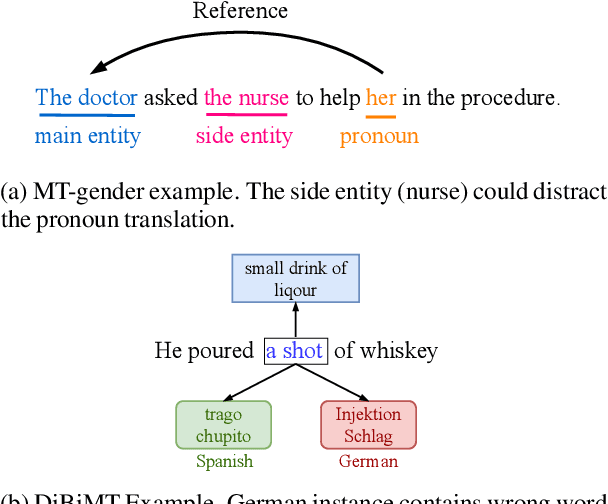

Recently, very large pre-trained models have achieved state-of-the-art results in various natural language processing (NLP) tasks, but their size makes them more challenging to deploy in resource-constrained environments. Compression techniques can drastically reduce the size of a model, and therefore its inference time, with negligible impact on top-tier metrics. However, this general performance hides a drastic performance drop on under-represented features, which could result in the amplification of biases encoded by the model. In this work, we analyze the impact of compression methods on Multilingual Neural Machine Translation (MNMT) models for various language groups and semantic features through an extensive analysis of compressed models on different NMT benchmarks, e.g. FLORES-101, MT-Gender, and DiBiMT. Our experiments show that the performance of under-represented languages drops significantly, while the average BLEU score decreases only slightly. Interestingly, the removal of noisy memorization through compression leads to a significant improvement for some medium-resource languages. Finally, we demonstrate that compression amplifies intrinsic gender and semantic biases, even in high-resource languages.

A Study of Gender Impact in Self-supervised Models for Speech-to-Text Systems

Apr 04, 2022 · Marcely Zanon Boito, Laurent Besacier, Natalia Tomashenko, Yannick Estève

Self-supervised models for speech processing have recently emerged as popular building blocks in speech processing pipelines. These models are pre-trained on unlabeled audio data and then used in downstream speech processing tasks such as automatic speech recognition (ASR) or speech translation (ST). Since these models are now used in both research and industrial systems, it becomes necessary to understand the impact of factors such as the gender distribution within the pre-training data. Using French as our investigation language, we train and compare gender-specific wav2vec 2.0 models against models containing different degrees of gender balance in their pre-training data. The comparison is performed by applying these models to two speech-to-text downstream tasks: ASR and ST. Our results show that the type of downstream integration matters. We observe lower overall performance using gender-specific pre-training before fine-tuning an end-to-end ASR system. However, when self-supervised models are used as feature extractors, the overall ASR and ST results follow more complex patterns, in which the balanced pre-trained model is not necessarily the best option. Lastly, our crude 'fairness' metric, the relative performance difference measured between female and male test sets, does not display a strong variation from balanced to gender-specific pre-trained wav2vec 2.0 models.
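The crude fairness metric, a relative performance difference between female and male test sets, fits in a one-line function. Which score is compared (for instance WER for ASR or BLEU for ST) and which test set serves as the denominator are assumptions here, since the abstract does not specify them; the function name is hypothetical.

```python
def relative_difference(female_score: float, male_score: float) -> float:
    """Relative difference (in %) of the female-test-set score with respect
    to the male-test-set score; 0 means identical performance."""
    if male_score == 0:
        raise ValueError("male_score must be non-zero")
    return 100.0 * (female_score - male_score) / male_score


# Hypothetical WERs: 12.0 on the female test set, 10.0 on the male test set.
print(relative_difference(12.0, 10.0))  # 20.0
```

With an error rate such as WER, a positive value means the system does worse on the female test set; with a quality score such as BLEU, the sign reads the other way.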

Multilingual Unsupervised Neural Machine Translation with Denoising Adapters

Oct 20, 2021 · Ahmet Üstün, Alexandre Bérard, Laurent Besacier, Matthias Gallé

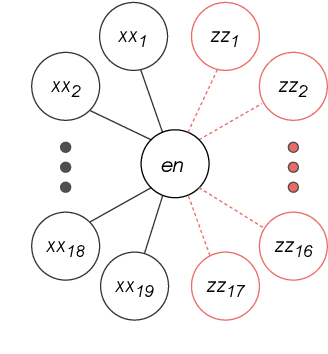

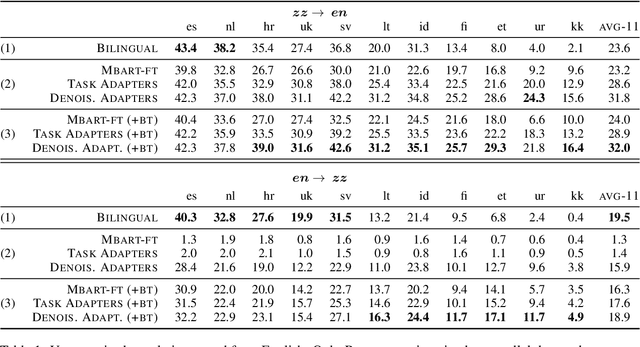

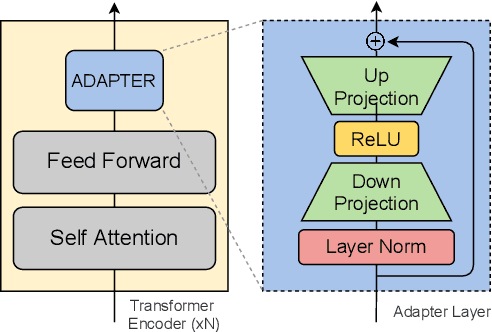

We consider the problem of multilingual unsupervised machine translation: translating to and from languages that only have monolingual data, by using auxiliary parallel language pairs. For this problem, the standard procedure so far to leverage the monolingual data is back-translation, which is computationally costly and hard to tune. In this paper, we propose instead to use denoising adapters, i.e. adapter layers with a denoising objective, on top of pre-trained mBART-50. In addition to the modularity and flexibility of such an approach, we show that the resulting translations are on par with back-translation as measured by BLEU, and furthermore that it allows unseen languages to be added incrementally.
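Architecturally, an adapter is a small bottleneck module inserted into each layer of a frozen pre-trained model; only its weights are trained, here with a denoising objective on monolingual data. The sketch below shows a generic residual bottleneck adapter in plain Python under that assumption. Layer normalization and bias terms, which adapter implementations commonly include, are omitted, and the function names are hypothetical.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # row-major: one inner list per output dimension


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m (rows x cols) by vector v (length cols)."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def adapter(h: Vector, w_down: Matrix, w_up: Matrix) -> Vector:
    """Residual bottleneck adapter: project the hidden state from d to r < d
    dimensions, apply ReLU, project back to d and add the input."""
    z = [max(0.0, x) for x in matvec(w_down, h)]  # r-dimensional bottleneck
    return [hi + u for hi, u in zip(h, matvec(w_up, z))]


# Toy example with d = 2 and r = 1.
h = [1.0, 2.0]
w_down = [[1.0, 1.0]]  # 1 x 2
w_up = [[0.5], [0.0]]  # 2 x 1
print(adapter(h, w_down, w_up))  # [2.5, 2.0]
```

If `w_up` is all zeros the adapter reduces to the identity, so the pre-trained model's behavior is untouched until the adapter is trained. Keeping one such adapter per language is what makes this kind of design modular: a new language can get its own adapter without retraining the others.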

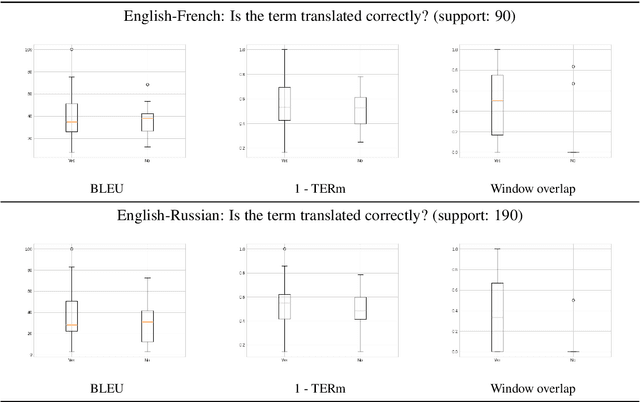

On the Evaluation of Machine Translation for Terminology Consistency

Jun 24, 2021 · Md Mahfuz ibn Alam, Antonios Anastasopoulos, Laurent Besacier, James Cross, Matthias Gallé, Philipp Koehn, Vassilina Nikoulina

As neural machine translation (NMT) systems become an important part of professional translator pipelines, a growing body of work focuses on combining NMT with terminologies. In many scenarios, and particularly in cases of domain adaptation, one expects the MT output to adhere to the constraints provided by a terminology. In this work, we propose metrics to measure the consistency of MT output with regard to a domain terminology. We perform studies in the COVID-19 domain across five languages, including a terminology-targeted human evaluation. We open-source the code for computing all proposed metrics: https://github.com/mahfuzibnalam/terminology_evaluation
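To make terminology consistency concrete, here is a simplified exact-match sketch: for every terminology entry whose source term occurs in a source sentence, it checks whether the prescribed target term occurs in the MT output. This is an illustrative assumption, not the set of metrics proposed in the paper (those are in the linked repository); the function name and toy data are hypothetical, and plain substring matching ignores inflection and tokenization.

```python
from typing import Dict, List


def term_exact_match(
    sources: List[str], hypotheses: List[str], terminology: Dict[str, str]
) -> float:
    """Fraction of source-side terminology hits whose prescribed target term
    appears (case-insensitively) in the corresponding MT hypothesis.
    Returns 0.0 when no source term is found at all."""
    hits = matched = 0
    for src, hyp in zip(sources, hypotheses):
        src_l, hyp_l = src.lower(), hyp.lower()
        for src_term, tgt_term in terminology.items():
            if src_term.lower() in src_l:
                hits += 1
                if tgt_term.lower() in hyp_l:
                    matched += 1
    return matched / hits if hits else 0.0


# Hypothetical English-French terminology and MT outputs.
terms = {"lockdown": "confinement", "face mask": "masque"}
srcs = ["The lockdown was extended.", "Wear a face mask."]
hyps = ["Le confinement a ete prolonge.", "Portez un couvre-visage."]
print(term_exact_match(srcs, hyps, terms))  # 0.5
```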