Machine Vision Based Assessment of Fall Color Changes in Apple Trees: Exploring Relationship with Leaf Nitrogen Concentration

Apr 23, 2024 · Achyut Paudel, Jostan Brown, Priyanka Upadhyaya, Atif Bilal Asad, Safal Kshetri, Manoj Karkee, Joseph R. Davidson, Cindy Grimm, Ashley Thompson

Apple trees, being deciduous, shed their leaves each year. Leaf fall is preceded by a change in leaf color from green to yellow (also known as senescence) during the fall season. The rate and timing of the color change are affected by a number of factors, including nitrogen (N) deficiencies. The green color of leaves is highly dependent on chlorophyll content, which in turn depends on the nitrogen concentration in the leaves. Assessing leaf color can therefore give vital information on the nutrient status of the tree, and a machine vision based system that captures and quantifies the timing and extent of these color changes can be a valuable tool for that purpose. This study is based on data collected over five weeks during the fall of 2021 and 2023 at a commercial orchard using a ground-based stereo-vision sensor. The point cloud obtained from the sensor was segmented to isolate the foreground tree from the natural background. Leaf color was then quantified using a custom-defined metric, the *yellowness index*, which varies from $-1$ to $+1$ ($-1$ being completely green and $+1$ being completely yellow) and reflects the proportion of yellow leaves on a tree. The performance of a K-means-based algorithm and a gradient boosting algorithm was compared for *yellowness index* calculation. The segmentation method proposed in the study was able to estimate the *yellowness index* of the trees with $R^2 = 0.72$. The results showed that the metric captured the gradual color transition from green to yellow over the study duration. It was also observed that trees with lower nitrogen transitioned to yellow earlier than trees with higher nitrogen. The onset of color transition in both years aligned with the 29th week post-full bloom.
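The abstract does not give the formula behind the yellowness index, so the following is only a minimal sketch of one way a metric bounded by $-1$ and $+1$ could be computed from segmented tree pixels. The hue thresholds, the function names, and the normalized-difference form `(yellow - green) / (yellow + green)` are all assumptions made for illustration, not the authors' definition (the study itself compares K-means and gradient boosting for this step).

```python
import colorsys

# Hue ranges in degrees. These thresholds are illustrative assumptions,
# not values taken from the study.
YELLOW_HUE = (40.0, 70.0)
GREEN_HUE = (70.0, 170.0)


def classify_pixel(r, g, b):
    """Label an 8-bit RGB pixel as 'yellow', 'green', or None."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    hue = h * 360.0
    if s < 0.2 or v < 0.2:
        return None  # too gray or too dark to assign a leaf color
    if YELLOW_HUE[0] <= hue < YELLOW_HUE[1]:
        return "yellow"
    if GREEN_HUE[0] <= hue < GREEN_HUE[1]:
        return "green"
    return None


def yellowness_index(pixels):
    """Hypothetical index: -1 if all leaf pixels are green, +1 if all are yellow."""
    labels = [classify_pixel(*p) for p in pixels]
    n_yellow = labels.count("yellow")
    n_green = labels.count("green")
    total = n_yellow + n_green
    if total == 0:
        raise ValueError("no green or yellow pixels found")
    return (n_yellow - n_green) / total


# Example: three yellow pixels and one green pixel from a segmented tree.
tree_pixels = [(255, 255, 0)] * 3 + [(0, 200, 0)]
print(yellowness_index(tree_pixels))
```

A normalized difference of this kind is symmetric around zero, so an index of 0 would correspond to equal numbers of green and yellow pixels under these assumptions.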

Comparing YOLOv8 and Mask RCNN for object segmentation in complex orchard environments

Dec 27, 2023 · Ranjan Sapkota, Dawood Ahmed, Manoj Karkee

Instance segmentation, an important image processing operation for automation in agriculture, is used to precisely delineate individual objects of interest within images, which provides foundational information for various automated or robotic tasks such as selective harvesting and precision pruning. This study compares the one-stage YOLOv8 and the two-stage Mask R-CNN machine learning models for instance segmentation under varying orchard conditions across two datasets. Dataset 1, collected in the dormant season, includes images of dormant apple trees, which were used to train multi-object segmentation models delineating tree branches and trunks. Dataset 2, collected in the early growing season, includes images of apple tree canopies with green foliage and immature (green) apples (also called fruitlets), which were used to train single-object segmentation models delineating only immature green apples. The results showed that YOLOv8 performed better than Mask R-CNN, achieving good precision and near-perfect recall across both datasets at a confidence threshold of 0.5. Specifically, for Dataset 1, YOLOv8 achieved a precision of 0.90 and a recall of 0.95 for all classes. In comparison, Mask R-CNN demonstrated a precision of 0.81 and a recall of 0.81 for the same dataset. With Dataset 2, YOLOv8 achieved a precision of 0.93 and a recall of 0.97. Mask R-CNN, in this single-class scenario, achieved a precision of 0.85 and a recall of 0.88. Additionally, the inference times for YOLOv8 were 10.9 ms for multi-class segmentation (Dataset 1) and 7.8 ms for single-class segmentation (Dataset 2), compared to 15.6 ms and 12.8 ms, respectively, for Mask R-CNN.
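To compare the two models on a single number, the reported precision and recall can be combined into an F1-score, and the inference times converted to an approximate throughput. Neither quantity is reported in the abstract; both are derived here from the stated figures, and the throughput assumes inference time is the only cost per image (no pre- or post-processing).

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, inference time in ms) as stated in the abstract.
reported = {
    ("YOLOv8", "Dataset 1"): (0.90, 0.95, 10.9),
    ("Mask R-CNN", "Dataset 1"): (0.81, 0.81, 15.6),
    ("YOLOv8", "Dataset 2"): (0.93, 0.97, 7.8),
    ("Mask R-CNN", "Dataset 2"): (0.85, 0.88, 12.8),
}

for (model, dataset), (p, r, ms) in reported.items():
    f1 = f1_score(p, r)
    images_per_second = 1000.0 / ms  # derived, ignores non-inference overhead
    print(f"{model:10s} {dataset}: F1 = {f1:.3f}, about {images_per_second:.0f} images/s")
```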

Robotic Pollination of Apples in Commercial Orchards

Nov 21, 2023 · Ranjan Sapkota, Dawood Ahmed, Salik Khanal, Uddhav Bhattarai, Changki Mo, Matthew D. Whiting, Manoj Karkee

This research presents a novel robotic pollination system designed for targeted pollination of apple flowers in modern fruiting wall orchards. Developed in response to the challenges of global colony collapse disorder, climate change, and the need for sustainable alternatives to traditional pollinators, the system utilizes a commercial manipulator, a vision system, and a spray nozzle for pollen application. In initial tests in April 2022, 56% of the target flower clusters were pollinated and set at least one fruit, with a cycle time of 6.5 s. Significant improvements were made in 2023, with the system accurately detecting 91% of available flowers and pollinating 84% of target flowers with a reduced cycle time of 4.8 s. This system showed potential for precision artificial pollination that can also minimize the need for labor-intensive field operations such as flower and fruitlet thinning.

Design, Modeling, and Control of a Low-Cost and Rapid Response Soft-Growing Manipulator for Orchard Operations

Nov 01, 2023 · Ryan Dorosh, Justin Allen, Zixuan He, Christopher Ninatanta, Jack Coleman, Jack Spieker, Ethan Tuck, Jordan Kurtz, Qin Zhang, Matthew D. Whiting, Jiecai Luo, Manoj Karkee, Ming Luo

Tree fruit growers around the world are facing labor shortages for critical operations, including harvest and pruning. There is great interest in developing robotic solutions for these labor-intensive tasks, but current efforts have been prohibitively costly or slow, or have required a reconfiguration of the orchard in order to function. In this paper, we introduce an alternative approach to robotics using a novel and low-cost soft-growing robotic platform. Our platform features the ability to extend up to 1.2 m linearly at a maximum speed of 0.27 m/s. The soft-growing robotic arm can operate with a terminal payload of up to 1.4 kg (4.4 N), more than sufficient for carrying an apple. This platform decouples linear and steering motions to simplify path planning and the controller design for targeting. We anticipate our platform being relatively simple to maintain compared to rigid robotic arms. Herein we also describe and experimentally verify the platform's kinematic model, including the prediction of the relationship between the steering angle and the angular positions of the three steering motors. Information from the model enables the position controller to guide the end effector to the targeted positions faster and with higher stability than without this information. Overall, our research shows promise for using soft-growing robotic platforms in orchard operations.

Real-time Strawberry Detection Based on Improved YOLOv5s Architecture for Robotic Harvesting in open-field environment

Sep 01, 2023 · Zixuan He, Salik Ram Khanal, Xin Zhang, Manoj Karkee, Qin Zhang

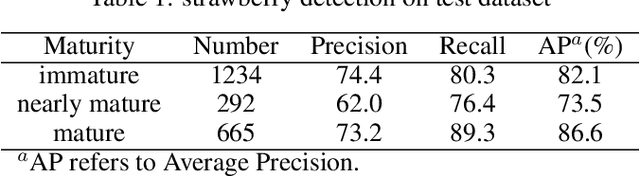

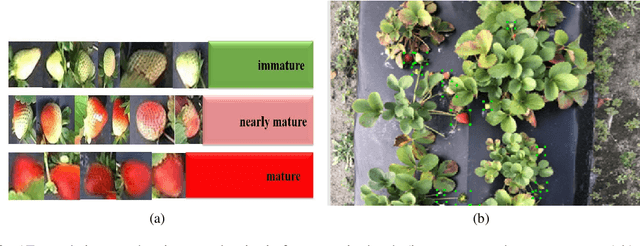

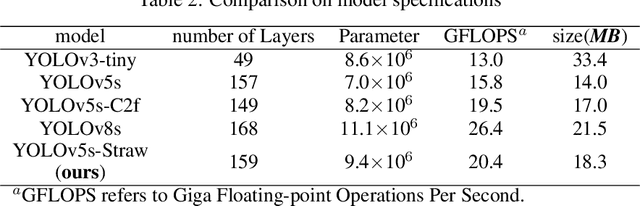

This study proposed a YOLOv5-based custom object detection model to detect strawberries in an outdoor environment. First, the original YOLOv5s architecture was modified by replacing the C3 module with the C2f module in the backbone network, which provided better feature gradient flow. Second, the Spatial Pyramid Pooling Fast module in the final layer of the YOLOv5s backbone network was combined with Cross Stage Partial Net to improve the generalization ability over the strawberry dataset in this study. The proposed architecture was named YOLOv5s-Straw. An RGB image dataset of the strawberry canopy with three maturity classes (immature, nearly mature, and mature) was collected in an open-field environment and augmented through a series of operations including brightness reduction, brightness increase, and noise addition. To verify the superiority of the proposed method for strawberry detection in an open-field environment, four competitive detection models (YOLOv3-tiny, YOLOv5s, YOLOv5s-C2f, and YOLOv8s) were trained and tested under the same computational environment and compared with YOLOv5s-Straw. The results showed that the proposed architecture achieved the highest mean average precision of 80.3%, whereas YOLOv3-tiny, YOLOv5s, YOLOv5s-C2f, and YOLOv8s achieved 73.4%, 77.8%, 79.8%, and 79.3%, respectively. Specifically, the average precision of YOLOv5s-Straw was 82.1% in the immature class, 73.5% in the nearly mature class, and 86.6% in the mature class, which were 2.3% and 3.7%, respectively, higher than that of the latest YOLOv8s. The model included $8.6 \times 10^6$ network parameters with an inference speed of 18 ms per image, while YOLOv8s had a slower inference speed of 21.0 ms and a heavier parameter count of $11.1 \times 10^6$, which indicates that the proposed model is fast enough for real-time strawberry detection and localization for robotic picking.
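The three augmentation operations named in the abstract (brightness reduction, brightness increase, and noise addition) can be sketched as follows. This is a minimal pure-Python illustration on a nested-list RGB image, not the authors' pipeline: the brightness factors (0.7 and 1.3), the Gaussian noise model, and its standard deviation are assumptions chosen for the example.

```python
import random


def clamp(value):
    """Round to the nearest integer and keep it in the 8-bit range."""
    return max(0, min(255, int(round(value))))


def adjust_brightness(image, factor):
    """Scale every channel; factor < 1 darkens, factor > 1 brightens."""
    return [[tuple(clamp(c * factor) for c in px) for px in row] for row in image]


def add_gaussian_noise(image, sigma, seed=None):
    """Add zero-mean Gaussian noise to every channel (assumed noise model)."""
    rng = random.Random(seed)
    return [
        [tuple(clamp(c + rng.gauss(0.0, sigma)) for c in px) for px in row]
        for row in image
    ]


def augment(image, seed=0):
    """Return one darker, one brighter, and one noisy copy of the image."""
    return {
        "darker": adjust_brightness(image, 0.7),      # assumed factor
        "brighter": adjust_brightness(image, 1.3),    # assumed factor
        "noisy": add_gaussian_noise(image, sigma=10.0, seed=seed),
    }


# A 1x2 RGB image as rows of (r, g, b) tuples.
image = [[(100, 150, 200), (10, 20, 30)]]
for name, variant in augment(image).items():
    print(name, variant)
```

Because all three operations change only pixel values and not geometry, bounding-box labels for the original image can be reused unchanged for each augmented copy.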

Machine Vision-Based Crop-Load Estimation Using YOLOv8

Apr 26, 2023 · Dawood Ahmed, Ranjan Sapkota, Martin Churuvija, Manoj Karkee

Labor shortages in fruit crop production have prompted the development of mechanized and automated machines as alternatives to labor-intensive orchard operations such as harvesting, pruning, and thinning. Agricultural robots capable of identifying tree canopy parts and estimating geometric and topological parameters, such as branch diameter, length, and angles, can optimize crop yields through automated pruning and thinning platforms. In this study, we proposed a machine vision system to estimate canopy parameters in apple orchards and determine an optimal number of fruit for individual branches, providing a foundation for robotic pruning, flower thinning, and fruitlet thinning to achieve desired yield and quality. Using color and depth information from an RGB-D sensor (Microsoft Azure Kinect DK), a YOLOv8-based instance segmentation technique was developed to identify trunks and branches of apple trees during the dormant season. Principal Component Analysis was applied to estimate branch diameter and orientation. The estimated branch diameter was used to calculate limb cross-sectional area (LCSA), which served as an input for crop-load estimation, with larger LCSA values indicating a higher potential fruit-bearing capacity. The RMSE was 2.08 mm for branch diameter estimation and 3.95 for crop-load estimation. Based on commercial apple orchard management practices, the target crop-load (number of fruit) for each segmented branch was estimated with a mean absolute error (MAE) of 2.99 (ground truth crop-load was 6 apples per LCSA). This study demonstrated a promising workflow with high performance in identifying trunks and branches of apple trees in dynamic commercial orchard environments and integrating farm management practices into automated decision-making.
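The step from branch diameter to target crop-load can be illustrated with a short calculation. This sketch assumes a circular branch cross-section and interprets the stated rate of 6 apples per LCSA as 6 fruit per cm² of LCSA; the unit and the function names are assumptions, since the abstract does not spell them out.

```python
import math


def lcsa_cm2(diameter_mm):
    """Limb cross-sectional area in cm^2, assuming a circular cross-section."""
    radius_cm = diameter_mm / 10.0 / 2.0
    return math.pi * radius_cm ** 2


def target_crop_load(diameter_mm, fruit_per_cm2=6.0):
    """Target number of fruit for a branch (rate per cm^2 is an assumption)."""
    return round(lcsa_cm2(diameter_mm) * fruit_per_cm2)


# Example: a 20 mm branch, and the same branch over-estimated by 2.08 mm
# (the diameter RMSE reported in the abstract).
for diameter in (20.0, 22.08):
    print(f"{diameter:.2f} mm -> LCSA {lcsa_cm2(diameter):.2f} cm^2, "
          f"target {target_crop_load(diameter)} fruit")
```

Because area grows with the square of the diameter, a diameter error of a couple of millimeters can shift the target by several fruit, which is why diameter accuracy matters for the crop-load estimate.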

Machine Vision System for Early-stage Apple Flowers and Flower Clusters Detection for Precision Thinning and Pollination

Apr 19, 2023 · Salik Ram Khanal, Ranjan Sapkota, Dawood Ahmed, Uddhav Bhattarai, Manoj Karkee

Early-stage identification of fruit flowers, both opened and unopened, in an orchard environment provides important information for crop load management operations such as flower thinning and pollination using automated and robotic platforms. These operations are important in tree-fruit agriculture to enhance fruit quality, manage crop load, and increase overall profit. Recent developments in agricultural automation suggest that this can be done using robotics that includes machine vision technology. In this article, we proposed a vision system that detects early-stage flowers in an unstructured orchard environment using the YOLOv5 object detection algorithm. For robotic implementation, the position of each flower cluster is needed to navigate the robot and the end effector. The centroids of individual flowers (both open and unopened) were identified and associated with flower clusters via K-means clustering. Detection of opened and unopened flowers achieved a mAP of up to 81.9% on commercial orchard images.
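The grouping of detected flower centroids into clusters can be sketched with a small K-means (Lloyd's algorithm) implementation. This is an illustration only: the abstract does not say how the number of clusters `k` is chosen or how the algorithm is initialized, so the fixed `k`, the farthest-point initialization, and the pixel coordinates below are all assumptions.

```python
import math


def kmeans(points, k, iterations=50):
    """Lloyd's algorithm on 2D points; returns (centers, labels)."""
    if not 1 <= k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    # Deterministic farthest-point initialization (an illustrative choice).
    centers = [points[0]]
    while len(centers) < k:
        centers.append(
            max(points, key=lambda p: min(math.dist(p, c) for c in centers))
        )
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each flower centroid to its nearest center.
        labels = [
            min(range(k), key=lambda j: math.dist(p, centers[j])) for p in points
        ]
        # Update step: move each center to the mean of its members.
        new_centers = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                new_centers.append((
                    sum(x for x, _ in members) / len(members),
                    sum(y for _, y in members) / len(members),
                ))
            else:
                new_centers.append(centers[j])  # keep an empty cluster's center
        if new_centers == centers:
            break  # converged
        centers = new_centers
    return centers, labels


# Hypothetical bounding-box centroids (pixels) of six detected flowers.
flowers = [(100, 120), (110, 125), (105, 115), (400, 300), (410, 310), (395, 305)]
centers, labels = kmeans(flowers, k=2)
print(centers)
print(labels)
```

Each returned center is a candidate target position for a flower cluster, which is the quantity the robot and end effector would navigate to.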

Design, Integration, and Field Evaluation of a Robotic Blossom Thinning System for Tree Fruit Crops

Apr 11, 2023 · Uddhav Bhattarai, Qin Zhang, Manoj Karkee

The US apple industry relies heavily on a semi-skilled manual labor force for essential field operations such as training, pruning, blossom and green fruit thinning, and harvesting. Blossom thinning is one of the crucial crop load management practices to achieve desired crop load, fruit quality, and return bloom. While several techniques such as chemical and mechanical thinning are available for large-scale blossom thinning, such approaches often yield unpredictable thinning results and may damage the canopy, spurs, and leaf tissue. Hence, growers still depend on labor-intensive and expensive hand blossom thinning for desired thinning outcomes. This research presents a robotic solution for blossom thinning in apple orchards using a computer vision system with artificial intelligence, a six degrees of freedom robotic manipulator, and an electrically actuated miniature end-effector. The integrated robotic system was evaluated in a commercial apple orchard and showed promising results for targeted and selective blossom thinning. Two thinning approaches, center and boundary thinning, were investigated to evaluate the system's ability to remove varying proportions of flowers from apple flower clusters. During boundary thinning, the end-effector was actuated around the cluster boundary, while center thinning involved end-effector actuation only at the cluster centroid for a fixed duration of 2 seconds. The boundary thinning approach thinned 67.2% of flowers from the targeted clusters with a cycle time of 9.0 seconds per cluster, whereas the center thinning approach thinned 59.4% of flowers with a cycle time of 7.2 seconds per cluster. When commercially adopted, the proposed system could help address problems faced by apple growers with current hand, chemical, and mechanical blossom thinning approaches.

Swin-transformer-yolov5 For Real-time Wine Grape Bunch Detection

Aug 30, 2022 · Shenglian Lu, Xiaoyu Liu, Zixuan He, Manoj Karkee, Xin Zhang

In this research, an integrated detection model, Swin-transformer-YOLOv5 or Swin-T-YOLOv5, was proposed for real-time wine grape bunch detection to inherit the advantages of both YOLOv5 and Swin-transformer. The research was conducted on two grape varieties, Chardonnay (always white berry skin) and Merlot (white or white-red mix berry skin when immature; red when mature), from July to September 2019. To verify the superiority of Swin-T-YOLOv5, its performance was compared against several commonly used, competitive object detectors, including Faster R-CNN, YOLOv3, YOLOv4, and YOLOv5. All models were assessed under different test conditions, including two weather conditions (sunny and cloudy), two berry maturity stages (immature and mature), and three sunlight directions/intensities (morning, noon, and afternoon) for a comprehensive comparison. Additionally, the number of grape bunches predicted by Swin-T-YOLOv5 was compared with ground truth values, including both in-field manual counting and manual labeling during the annotation process. Results showed that the proposed Swin-T-YOLOv5 outperformed all other studied models for grape bunch detection, with up to 97% mean Average Precision (mAP) and an F1-score of 0.89 when the weather was cloudy. This mAP was approximately 44%, 18%, 14%, and 4% greater than that of Faster R-CNN, YOLOv3, YOLOv4, and YOLOv5, respectively. Swin-T-YOLOv5 achieved its lowest mAP (90%) and F1-score (0.82) when detecting immature berries, where the mAP was approximately 40%, 5%, 3%, and 1% greater than that of the same four models. Furthermore, Swin-T-YOLOv5 performed better on the Chardonnay variety, achieving an $R^2$ of up to 0.91 and a root mean square error (RMSE) of 2.36 when comparing the predictions with ground truth. However, it underperformed on the Merlot variety, achieving an $R^2$ of only up to 0.70 and an RMSE of 3.30.
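The count comparison against ground truth is summarized by R2 and RMSE. The sketch below shows how these two metrics could be computed for predicted versus manually counted bunches. It assumes the coefficient-of-determination form of R2 (one minus residual over total sum of squares); the abstract does not state which definition was used, and the counts in the example are made up for illustration.

```python
import math


def rmse(predicted, observed):
    """Root mean square error between predicted and observed counts."""
    if len(predicted) != len(observed) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


def r_squared(predicted, observed):
    """Coefficient of determination: 1 - SS_res / SS_tot (assumed definition)."""
    if len(predicted) != len(observed) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for p, o in zip(predicted, observed))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    if ss_tot == 0:
        raise ValueError("observed values have zero variance")
    return 1.0 - ss_res / ss_tot


# Hypothetical bunch counts per image: manual count vs. model prediction.
manual_counts = [10, 12, 15, 20]
model_counts = [11, 12, 14, 19]
print(f"R2 = {r_squared(model_counts, manual_counts):.3f}")
print(f"RMSE = {rmse(model_counts, manual_counts):.3f} bunches")
```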

An autonomous robot for pruning modern, planar fruit trees

Jun 14, 2022 · Alexander You, Nidhi Parayil, Josyula Gopala Krishna, Uddhav Bhattarai, Ranjan Sapkota, Dawood Ahmed, Matthew Whiting, Manoj Karkee, Cindy M. Grimm, Joseph R. Davidson

Dormant pruning of fruit trees is an important task for maintaining tree health and ensuring high-quality fruit. Due to decreasing labor availability, pruning is a prime candidate for robotic automation. However, pruning also represents a uniquely difficult problem for robots, requiring robust systems for perception, pruning point determination, and manipulation that must operate under variable lighting conditions and in complex, highly unstructured environments. In this paper, we introduce a system for pruning sweet cherry trees (in a planar tree architecture called an upright fruiting offshoot configuration) that integrates various subsystems from our previous work on perception and manipulation. The resulting system is capable of operating completely autonomously and requires minimal control of the environment. We validate the performance of our system through field trials in a sweet cherry orchard, ultimately achieving a cutting success rate of 58%. Though not fully robust and requiring improvements in throughput, our system is the first to operate on fruit trees and represents a useful base platform to be improved in the future.
