Multi-Objective Hyperparameter Optimization -- An Overview

Jun 15, 2022Florian Karl, Tobias Pielok, Julia Moosbauer, Florian Pfisterer, Stefan Coors, Martin Binder, Lennart Schneider, Janek Thomas, Jakob Richter, Michel Lang, Eduardo C. Garrido-Merchán, Juergen Branke, Bernd Bischl

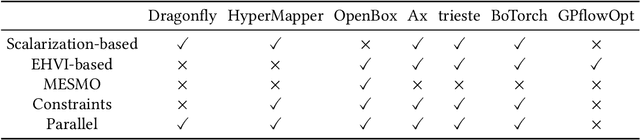

Hyperparameter optimization constitutes a large part of typical modern machine learning workflows. This arises from the fact that machine learning methods and corresponding preprocessing steps often only yield optimal performance when hyperparameters are properly tuned. But in many applications, we are not only interested in optimizing ML pipelines solely for predictive accuracy; additional metrics or constraints must be considered when determining an optimal configuration, resulting in a multi-objective optimization problem. This is often neglected in practice, due to a lack of knowledge and readily available software implementations for multi-objective hyperparameter optimization. In this work, we introduce the reader to the basics of multi- objective hyperparameter optimization and motivate its usefulness in applied ML. Furthermore, we provide an extensive survey of existing optimization strategies, both from the domain of evolutionary algorithms and Bayesian optimization. We illustrate the utility of MOO in several specific ML applications, considering objectives such as operating conditions, prediction time, sparseness, fairness, interpretability and robustness.

Automated Benchmark-Driven Design and Explanation of Hyperparameter Optimizers

Nov 29, 2021Julia Moosbauer, Martin Binder, Lennart Schneider, Florian Pfisterer, Marc Becker, Michel Lang, Lars Kotthoff, Bernd Bischl

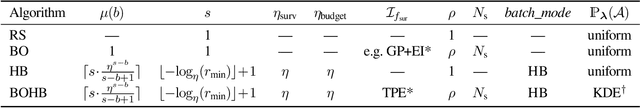

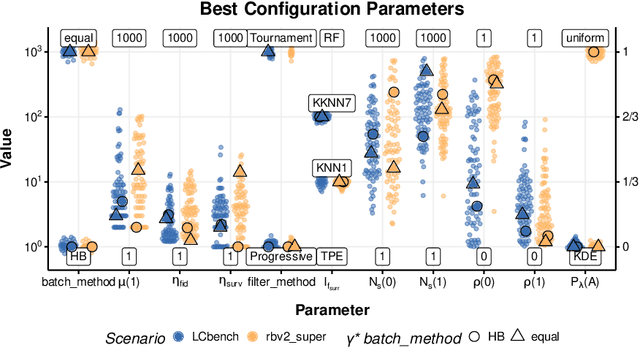

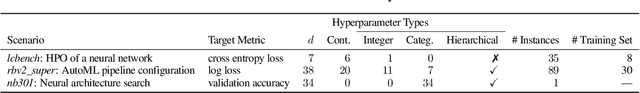

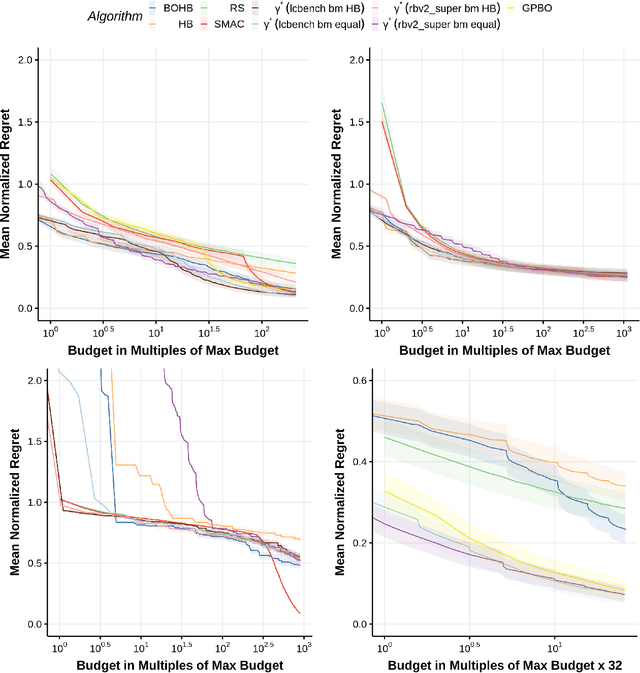

Automated hyperparameter optimization (HPO) has gained great popularity and is an important ingredient of most automated machine learning frameworks. The process of designing HPO algorithms, however, is still an unsystematic and manual process: Limitations of prior work are identified and the improvements proposed are -- even though guided by expert knowledge -- still somewhat arbitrary. This rarely allows for gaining a holistic understanding of which algorithmic components are driving performance, and carries the risk of overlooking good algorithmic design choices. We present a principled approach to automated benchmark-driven algorithm design applied to multifidelity HPO (MF-HPO): First, we formalize a rich space of MF-HPO candidates that includes, but is not limited to common HPO algorithms, and then present a configurable framework covering this space. To find the best candidate automatically and systematically, we follow a programming-by-optimization approach and search over the space of algorithm candidates via Bayesian optimization. We challenge whether the found design choices are necessary or could be replaced by more naive and simpler ones by performing an ablation analysis. We observe that using a relatively simple configuration, in some ways simpler than established methods, performs very well as long as some critical configuration parameters have the right value.

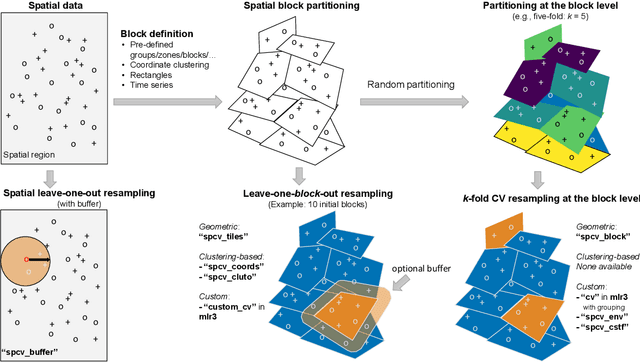

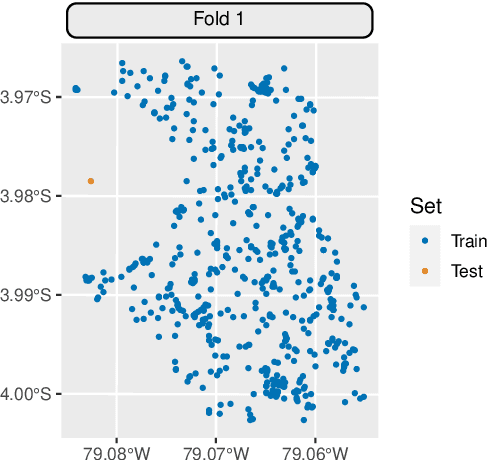

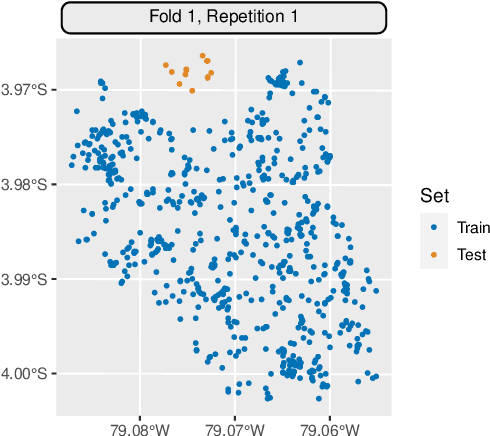

Mlr3spatiotempcv: Spatiotemporal resampling methods for machine learning in R

Oct 25, 2021Patrick Schratz, Marc Becker, Michel Lang, Alexander Brenning

Spatial and spatiotemporal machine-learning models require a suitable framework for their model assessment, model selection, and hyperparameter tuning, in order to avoid error estimation bias and over-fitting. This contribution reviews the state-of-the-art in spatial and spatiotemporal CV, and introduces the \proglang{R} package mlr3spatiotempcv as an extension package of the machine-learning framework \textbf{mlr3}. Currently various \proglang{R} packages implementing different spatiotemporal partitioning strategies exist: \pkg{blockCV}, \pkg{CAST}, \pkg{kmeans} and \pkg{sperrorest}. The goal of \pkg{mlr3spatiotempcv} is to gather the available spatiotemporal resampling methods in \proglang{R} and make them available to users through a simple and common interface. This is made possible by integrating the package directly into the \pkg{mlr3} machine-learning framework, which already has support for generic non-spatiotemporal resampling methods such as random partitioning. One advantage is the use of a consistent nomenclature in an overarching machine-learning toolkit instead of a varying package-specific syntax, making it easier for users to choose from a variety of spatiotemporal resampling methods. This package avoids giving recommendations which method to use in practice as this decision depends on the predictive task at hand, the autocorrelation within the data, and the spatial structure of the sampling design or geographic objects being studied.

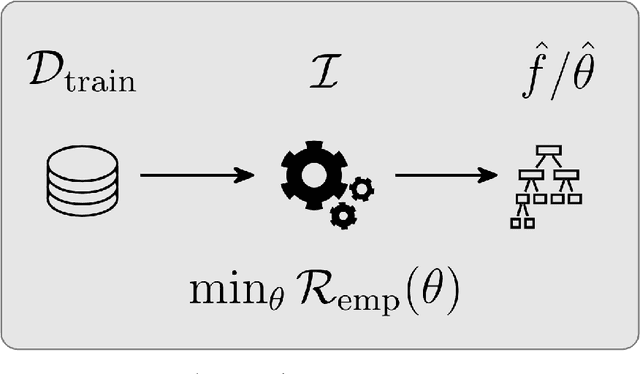

Hyperparameter Optimization: Foundations, Algorithms, Best Practices and Open Challenges

Jul 14, 2021Bernd Bischl, Martin Binder, Michel Lang, Tobias Pielok, Jakob Richter, Stefan Coors, Janek Thomas, Theresa Ullmann, Marc Becker, Anne-Laure Boulesteix, Difan Deng, Marius Lindauer

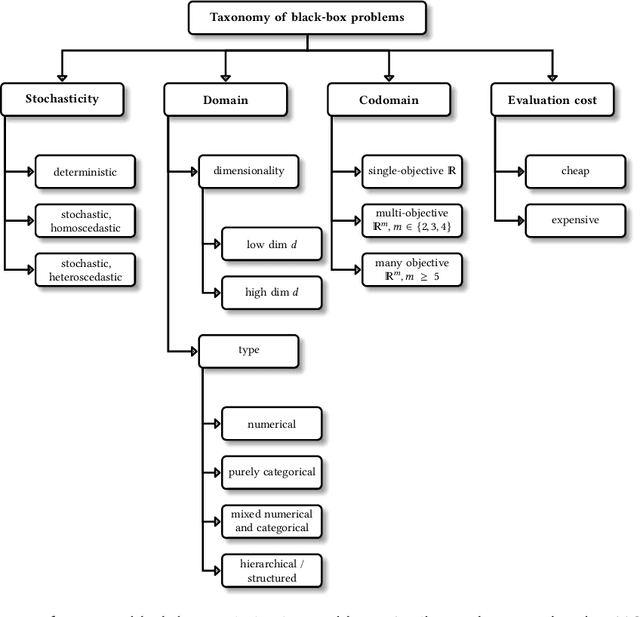

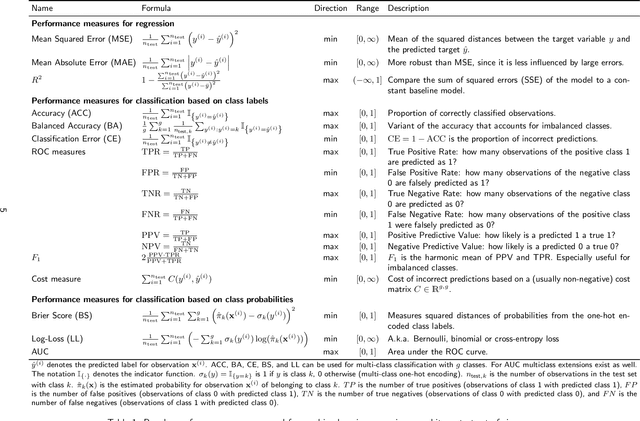

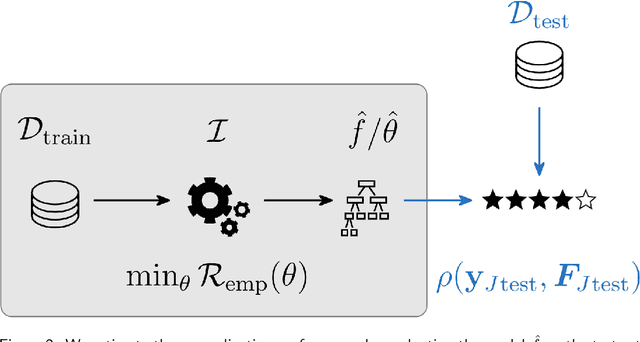

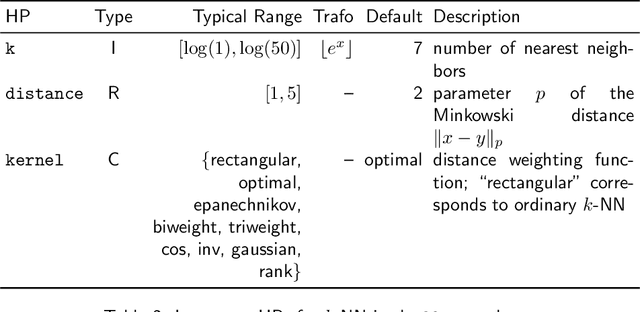

Most machine learning algorithms are configured by one or several hyperparameters that must be carefully chosen and often considerably impact performance. To avoid a time consuming and unreproducible manual trial-and-error process to find well-performing hyperparameter configurations, various automatic hyperparameter optimization (HPO) methods, e.g., based on resampling error estimation for supervised machine learning, can be employed. After introducing HPO from a general perspective, this paper reviews important HPO methods such as grid or random search, evolutionary algorithms, Bayesian optimization, Hyperband and racing. It gives practical recommendations regarding important choices to be made when conducting HPO, including the HPO algorithms themselves, performance evaluation, how to combine HPO with ML pipelines, runtime improvements, and parallelization.

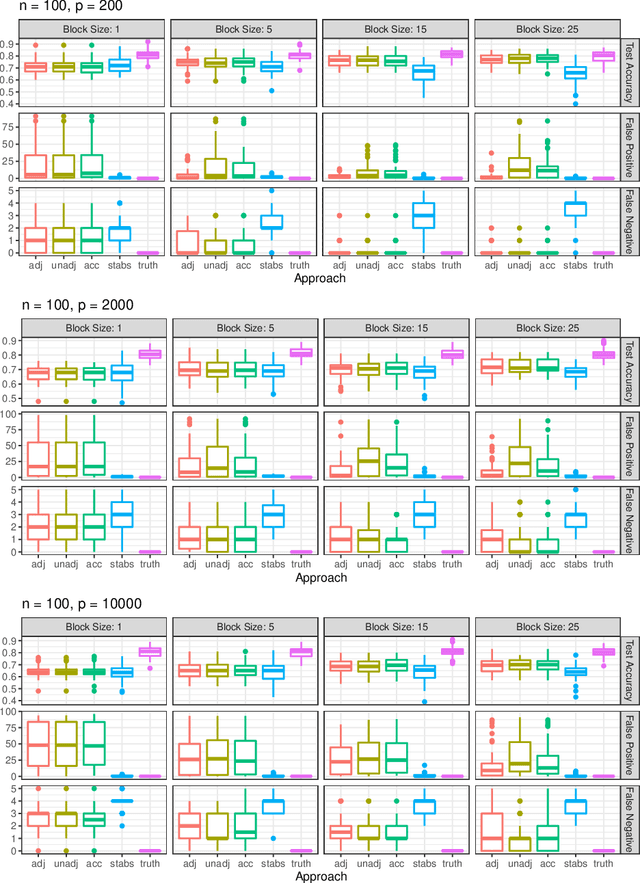

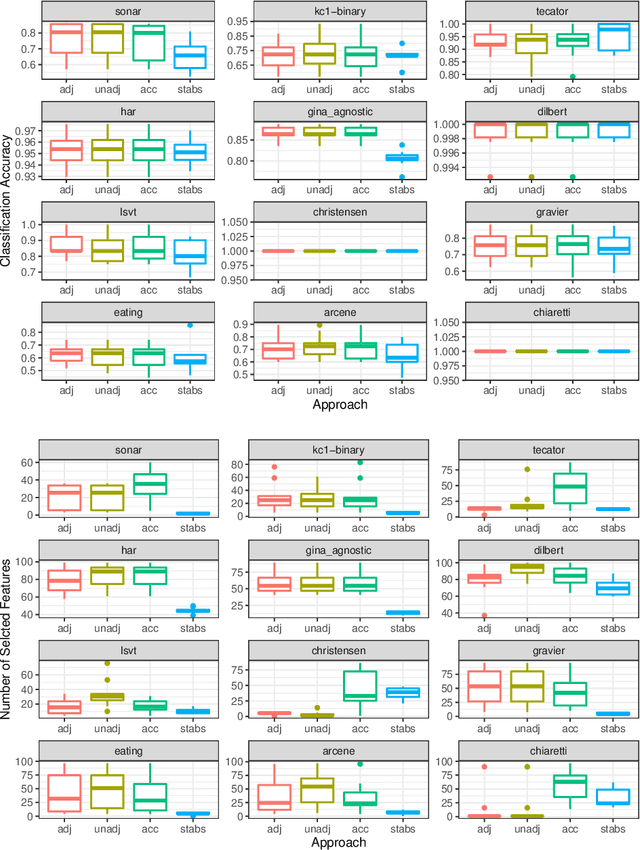

Employing an Adjusted Stability Measure for Multi-Criteria Model Fitting on Data Sets with Similar Features

Jun 15, 2021Andrea Bommert, Jörg Rahnenführer, Michel Lang

Fitting models with high predictive accuracy that include all relevant but no irrelevant or redundant features is a challenging task on data sets with similar (e.g. highly correlated) features. We propose the approach of tuning the hyperparameters of a predictive model in a multi-criteria fashion with respect to predictive accuracy and feature selection stability. We evaluate this approach based on both simulated and real data sets and we compare it to the standard approach of single-criteria tuning of the hyperparameters as well as to the state-of-the-art technique "stability selection". We conclude that our approach achieves the same or better predictive performance compared to the two established approaches. Considering the stability during tuning does not decrease the predictive accuracy of the resulting models. Our approach succeeds at selecting the relevant features while avoiding irrelevant or redundant features. The single-criteria approach fails at avoiding irrelevant or redundant features and the stability selection approach fails at selecting enough relevant features for achieving acceptable predictive accuracy. For our approach, for data sets with many similar features, the feature selection stability must be evaluated with an adjusted stability measure, that is, a measure that considers similarities between features. For data sets with only few similar features, an unadjusted stability measure suffices and is faster to compute.

mlr3proba: Machine Learning Survival Analysis in R

Aug 18, 2020Raphael Sonabend, Franz J. Király, Andreas Bender, Bernd Bischl, Michel Lang

As machine learning has become increasingly popular over the last few decades, so too has the number of machine learning interfaces for implementing these models. However, no consistent interface for evaluation and modelling of survival analysis has emerged despite its vital importance in many fields, including medicine, economics, and engineering. \texttt{mlr3proba} is part of the \texttt{mlr3} ecosystem of machine learning packages for R and facilitates \texttt{mlr3}'s general model tuning and benchmarking by providing a multitude of performance measures and learners for survival analysis with a clean and systematic infrastructure for their evaluation. \texttt{mlr3proba} provides a comprehensive machine learning interface for survival analysis, which allows survival modelling to finally be up to the state-of-art.

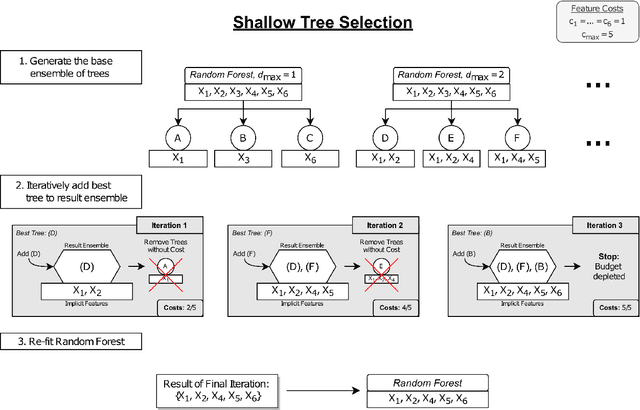

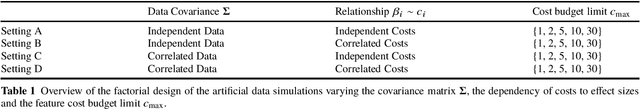

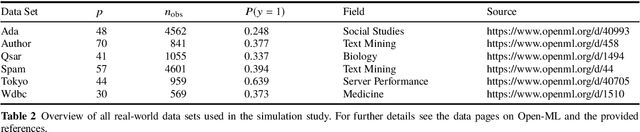

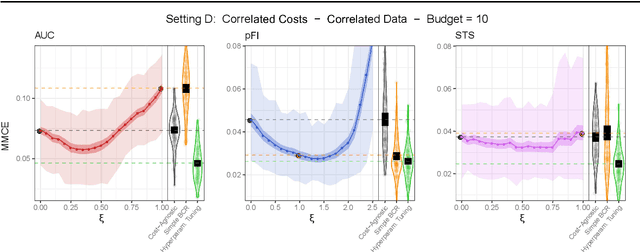

Feature Selection Methods for Cost-Constrained Classification in Random Forests

Aug 17, 2020Rudolf Jagdhuber, Michel Lang, Jörg Rahnenführer

Cost-sensitive feature selection describes a feature selection problem, where features raise individual costs for inclusion in a model. These costs allow to incorporate disfavored aspects of features, e.g. failure rates of as measuring device, or patient harm, in the model selection process. Random Forests define a particularly challenging problem for feature selection, as features are generally entangled in an ensemble of multiple trees, which makes a post hoc removal of features infeasible. Feature selection methods therefore often either focus on simple pre-filtering methods, or require many Random Forest evaluations along their optimization path, which drastically increases the computational complexity. To solve both issues, we propose Shallow Tree Selection, a novel fast and multivariate feature selection method that selects features from small tree structures. Additionally, we also adapt three standard feature selection algorithms for cost-sensitive learning by introducing a hyperparameter-controlled benefit-cost ratio criterion (BCR) for each method. In an extensive simulation study, we assess this criterion, and compare the proposed methods to multiple performance-based baseline alternatives on four artificial data settings and seven real-world data settings. We show that all methods using a hyperparameterized BCR criterion outperform the baseline alternatives. In a direct comparison between the proposed methods, each method indicates strengths in certain settings, but no one-fits-all solution exists. On a global average, we could identify preferable choices among our BCR based methods. Nevertheless, we conclude that a practical analysis should never rely on a single method only, but always compare different approaches to obtain the best results.

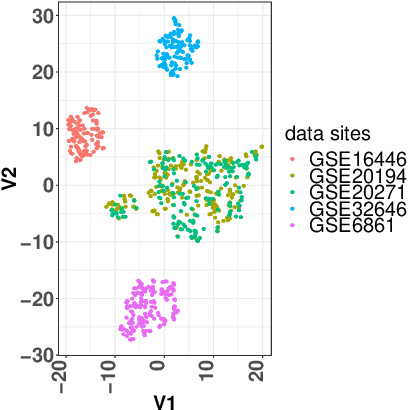

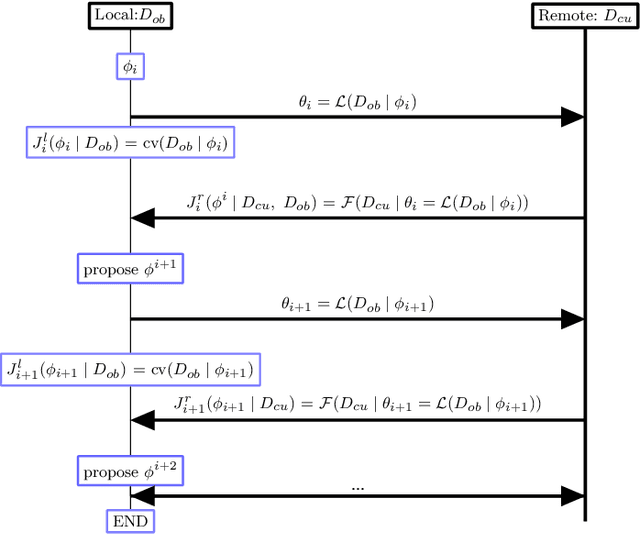

High Dimensional Restrictive Federated Model Selection with multi-objective Bayesian Optimization over shifted distributions

Feb 24, 2019Xudong Sun, Andrea Bommert, Florian Pfisterer, Jörg Rahnenführer, Michel Lang, Bernd Bischl

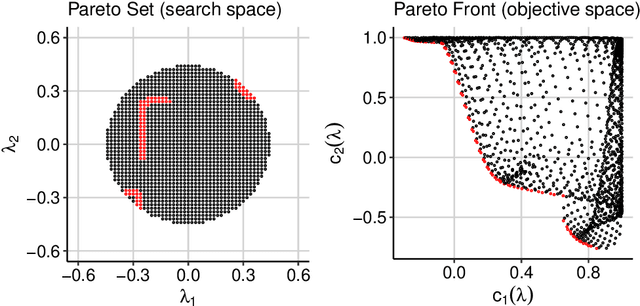

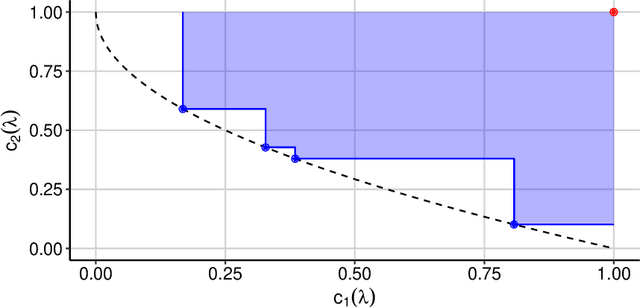

A novel machine learning optimization process coined Restrictive Federated Model Selection (RFMS) is proposed under the scenario, for example, when data from healthcare units can not leave the site it is situated on and it is forbidden to carry out training algorithms on remote data sites due to either technical or privacy and trust concerns. To carry out a clinical research under this scenario, an analyst could train a machine learning model only on local data site, but it is still possible to execute a statistical query at a certain cost in the form of sending a machine learning model to some of the remote data sites and get the performance measures as feedback, maybe due to prediction being usually much cheaper. Compared to federated learning, which is optimizing the model parameters directly by carrying out training across all data sites, RFMS trains model parameters only on one local data site but optimizes hyper-parameters across other data sites jointly since hyper-parameters play an important role in machine learning performance. The aim is to get a Pareto optimal model with respective to both local and remote unseen prediction losses, which could generalize well across data sites. In this work, we specifically consider high dimensional data with shifted distributions over data sites. As an initial investigation, Bayesian Optimization especially multi-objective Bayesian Optimization is used to guide an adaptive hyper-parameter optimization process to select models under the RFMS scenario. Empirical results show that solely using the local data site to tune hyper-parameters generalizes poorly across data sites, compared to methods that utilize the local and remote performances. Furthermore, in terms of dominated hypervolumes, multi-objective Bayesian Optimization algorithms show increased performance across multiple data sites among other candidates.

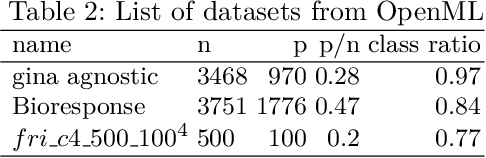

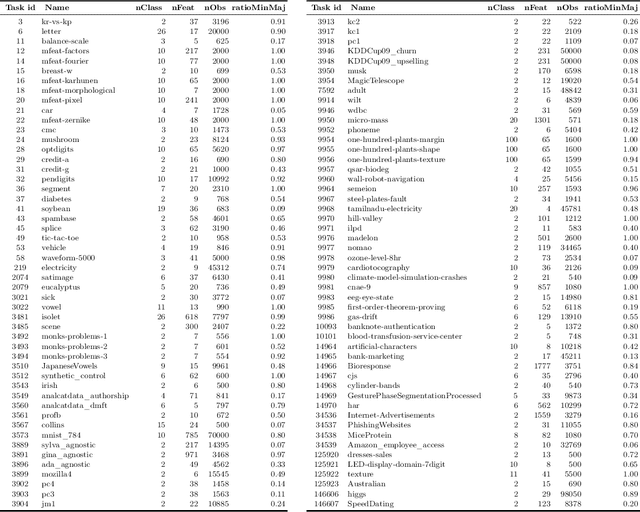

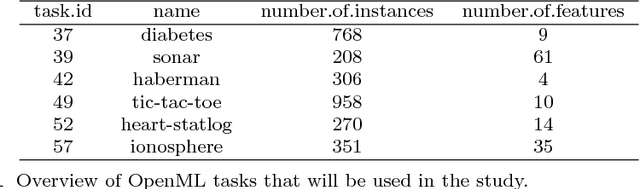

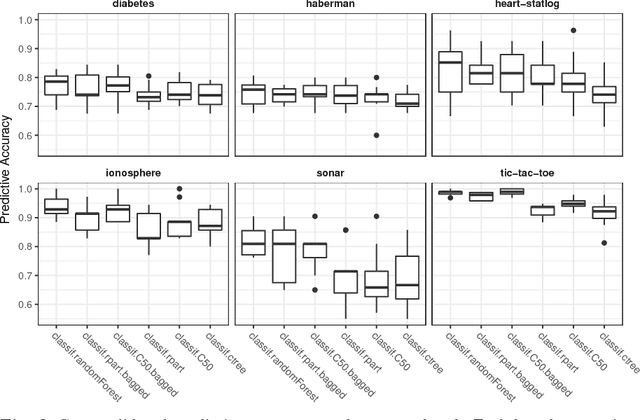

OpenML Benchmarking Suites and the OpenML100

Aug 11, 2017Bernd Bischl, Giuseppe Casalicchio, Matthias Feurer, Frank Hutter, Michel Lang, Rafael G. Mantovani, Jan N. van Rijn, Joaquin Vanschoren

We advocate the use of curated, comprehensive benchmark suites of machine learning datasets, backed by standardized OpenML-based interfaces and complementary software toolkits written in Python, Java and R. Major distinguishing features of OpenML benchmark suites are (a) ease of use through standardized data formats, APIs, and existing client libraries; (b) machine-readable meta-information regarding the contents of the suite; and (c) online sharing of results, enabling large scale comparisons. As a first such suite, we propose the OpenML100, a machine learning benchmark suite of 100~classification datasets carefully curated from the thousands of datasets available on OpenML.org.

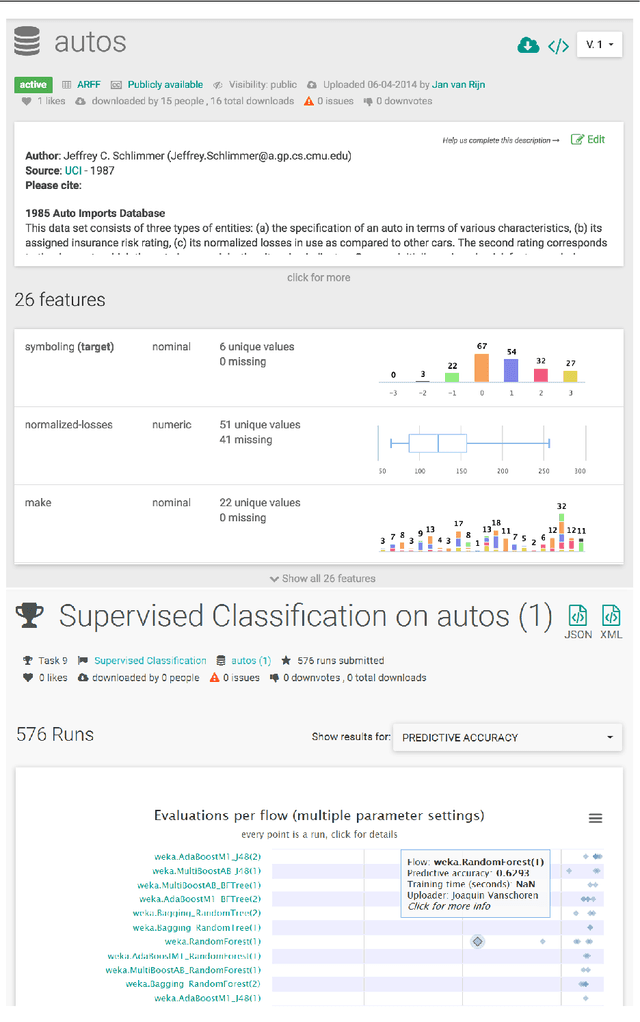

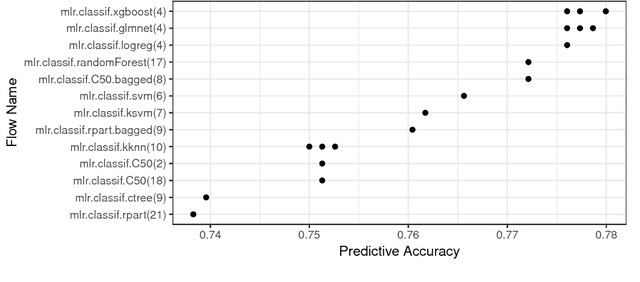

OpenML: An R Package to Connect to the Machine Learning Platform OpenML

May 04, 2017Giuseppe Casalicchio, Jakob Bossek, Michel Lang, Dominik Kirchhoff, Pascal Kerschke, Benjamin Hofner, Heidi Seibold, Joaquin Vanschoren, Bernd Bischl

OpenML is an online machine learning platform where researchers can easily share data, machine learning tasks and experiments as well as organize them online to work and collaborate more efficiently. In this paper, we present an R package to interface with the OpenML platform and illustrate its usage in combination with the machine learning R package mlr. We show how the OpenML package allows R users to easily search, download and upload data sets and machine learning tasks. Furthermore, we also show how to upload results of experiments, share them with others and download results from other users. Beyond ensuring reproducibility of results, the OpenML platform automates much of the drudge work, speeds up research, facilitates collaboration and increases the users' visibility online.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge