Model-based Deep Learning for Rate Split Multiple Access in Vehicular Communications

May 02, 2024 · Hanwen Zhang, Mingzhe Chen, Alireza Vahid, Haijian Sun

Rate split multiple access (RSMA) has proven to be an effective communication scheme for 5G and beyond, especially in vehicular scenarios. However, RSMA requires complicated iterative algorithms for proper resource allocation, which cannot meet the stringent latency requirements of resource-constrained vehicles. Although data-driven approaches can alleviate this issue, they suffer from poor generalizability and scarce training data. In this paper, we propose a fractional programming (FP) based deep unfolding (DU) approach to address the resource allocation problem for weighted sum rate optimization in RSMA. By carefully designing the penalty function, we couple the variable update with the projected gradient descent (PGD) algorithm. Following the structure of PGD, we embed a few learnable parameters in each layer of the DU network. Extensive simulations show that the proposed model-based neural network achieves performance similar to the optimal results given by the traditional algorithm, but with much lower computational complexity, less training data, and higher resilience to test set data and out-of-distribution (OOD) data.
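
The idea of unfolding PGD into layers with a learnable step size per layer can be illustrated with a minimal sketch. This is not the authors' network: it assumes a toy interference-free weighted sum rate objective, `sum_k w_k * log2(1 + g_k * p_k)`, under a total power budget, and the function names, the objective, and the fixed step sizes (which a DU network would learn from data) are all hypothetical.

```python
import math

def project_budget(p, budget):
    """Euclidean projection onto {p >= 0, sum(p) <= budget}."""
    clipped = [max(x, 0.0) for x in p]
    if sum(clipped) <= budget:
        return clipped
    # Otherwise project onto the simplex {p >= 0, sum(p) = budget}.
    u = sorted(p, reverse=True)
    cumsum, theta = 0.0, 0.0
    for j, uj in enumerate(u, start=1):
        cumsum += uj
        t = (cumsum - budget) / j
        if uj - t > 0:
            theta = t
    return [max(x - theta, 0.0) for x in p]

def weighted_sum_rate(p, weights, gains):
    """Toy objective (assumption): no interference between users."""
    return sum(w * math.log2(1.0 + g * x) for w, g, x in zip(weights, gains, p))

def unfolded_pgd(weights, gains, budget, step_sizes):
    """Each entry of step_sizes plays the role of one layer's learnable parameter."""
    k = len(weights)
    p = [budget / k] * k  # start from equal power allocation
    for step in step_sizes:
        grad = [w * g / ((1.0 + g * x) * math.log(2.0))
                for w, g, x in zip(weights, gains, p)]
        # Gradient ascent step followed by projection onto the feasible set.
        p = project_budget([x + step * d for x, d in zip(p, grad)], budget)
    return p
```

Calling `unfolded_pgd([1.0, 1.0], [1.0, 0.5], 1.0, [0.5] * 5)` runs five such layers; in a trained DU network the step sizes would differ per layer rather than being fixed by hand.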

Mapping Wireless Networks into Digital Reality through Joint Vertical and Horizontal Learning

Apr 22, 2024 · Zifan Zhang, Mingzhe Chen, Zhaohui Yang, Yuchen Liu

In recent years, the complexity of 5G and beyond wireless networks has escalated, prompting a need for innovative frameworks to facilitate flexible management and efficient deployment. The concept of digital twins (DTs) has emerged as a solution to enable real-time monitoring, predictive configurations, and decision-making processes. While existing works primarily focus on leveraging DTs to optimize wireless networks, a detailed mapping methodology for creating virtual representations of network infrastructure and properties is still lacking. In this context, we introduce VH-Twin, a novel time-series data-driven framework that effectively maps wireless networks into digital reality. VH-Twin distinguishes itself through complementary vertical twinning (V-twinning) and horizontal twinning (H-twinning) stages, followed by a periodic clustering mechanism used to virtualize network regions based on their distinct geological and wireless characteristics. Specifically, V-twinning exploits distributed learning techniques to initialize a global twin model collaboratively from virtualized network clusters. H-twinning, on the other hand, is implemented with an asynchronous mapping scheme that dynamically updates twin models in response to network or environmental changes. Leveraging real-world wireless traffic data within a cellular wireless network, comprehensive experiments are conducted to verify that VH-Twin can effectively construct, deploy, and maintain network DTs. Parametric analysis also offers insights into how to strike a balance between twinning efficiency and model accuracy at scale.

Positioning Using Wireless Networks: Applications, Recent Progress and Future Challenges

Mar 18, 2024 · Yang Yang, Mingzhe Chen, Yufei Blankenship, Jemin Lee, Zabih Ghassemlooy, Julian Cheng, Shiwen Mao

Positioning has recently received considerable attention as a key enabler in emerging applications such as extended reality, unmanned aerial vehicles and smart environments. These applications require both data communication and high-precision positioning, and thus they are particularly well-suited to be offered in wireless networks (WNs). This paper provides a comprehensive overview of existing works and new trends in positioning techniques from both academic and industrial perspectives, covering the background, applications, measurements, state-of-the-art technologies and future challenges of positioning in WNs. The paper outlines the applications of positioning from the perspectives of public facilities, enterprises and individual users. We investigate the key performance indicators and measurements of positioning systems, followed by a review of key enabling techniques such as artificial intelligence/large models and adaptive systems. Next, we discuss a number of typical wireless positioning technologies. We extend our overview beyond academic progress to include standardization efforts, and finally, we provide insight into the challenges that remain. This overview of existing efforts and new trends from both the academic and industrial communities should be a useful reference for researchers in the field.

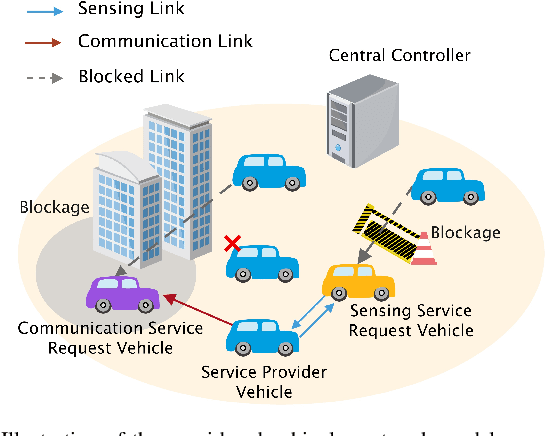

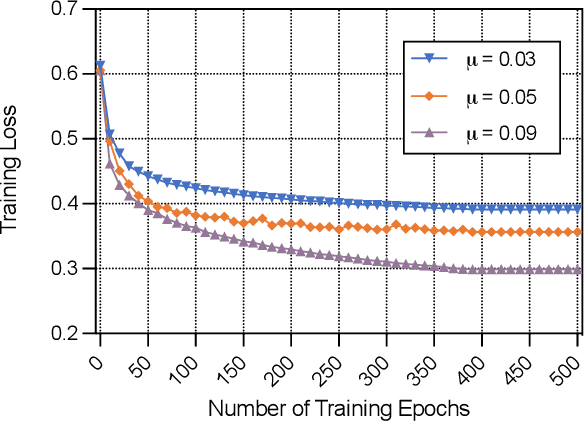

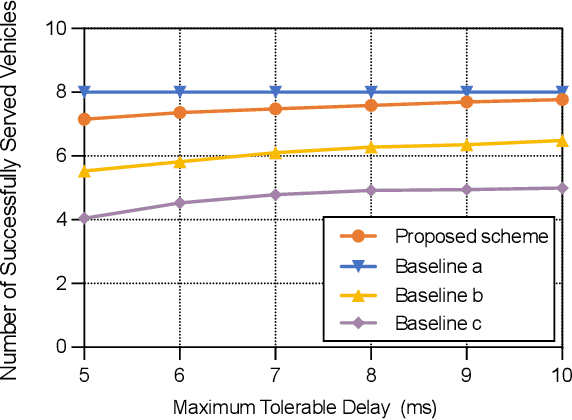

Jointly Optimizing Terahertz based Sensing and Communications in Vehicular Networks: A Dynamic Graph Neural Network Approach

Mar 17, 2024 · Xuefei Li, Mingzhe Chen, Ye Hu, Zhilong Zhang, Danpu Liu, Shiwen Mao

In this paper, the problem of vehicle service mode selection (sensing, communication, or both) and vehicle connections within terahertz (THz) enabled joint sensing and communications over vehicular networks is studied. The considered network consists of sensing service request vehicles, communication service request vehicles, and several service provider vehicles (SPVs), each of which can provide 1) only sensing service, 2) only communication service, or 3) both services. Based on the vehicle network topology and their service accessibility, SPVs strategically select service request vehicles to provide sensing, communication, or both services. This problem is formulated as an optimization problem, aiming to maximize the number of successfully served vehicles by jointly determining the service mode of each SPV and its associated vehicles. To solve this problem, we propose a dynamic graph neural network (GNN) model that selects appropriate graph information aggregation functions according to the vehicle network topology, thus extracting more vehicle network information than traditional static GNNs that use fixed aggregation functions for different vehicle network topologies. Using the extracted vehicle network information, the service mode of each SPV and the service request vehicles it serves are determined. Simulation results show that the proposed dynamic GNN based method can improve the number of successfully served vehicles by up to 17% and 28% compared to a GNN based algorithm with a fixed neural network model and a conventional optimization algorithm without using GNNs, respectively.

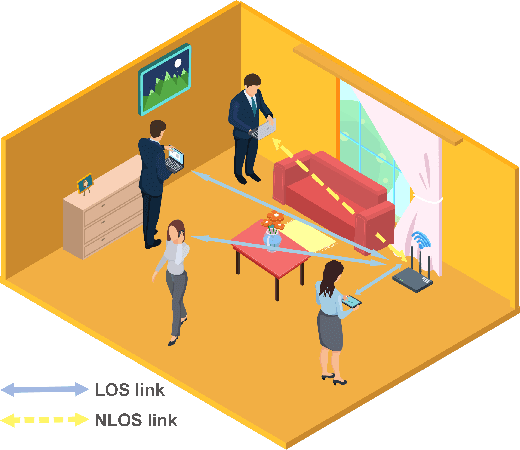

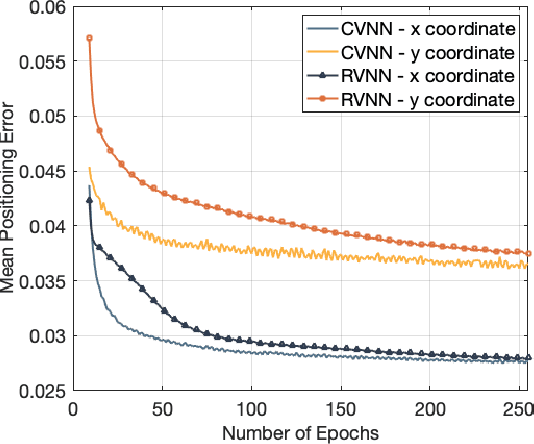

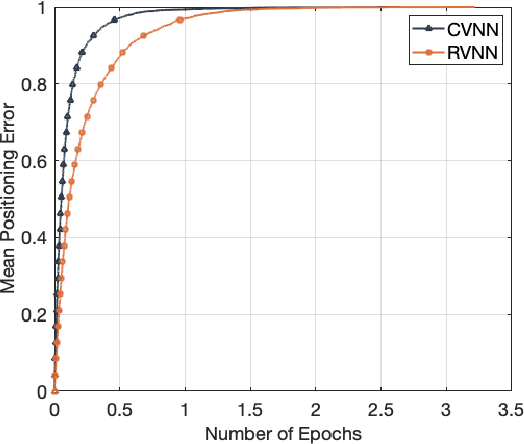

Complex-Valued Neural Network based Federated Learning for Multi-user Indoor Positioning Performance Optimization

Mar 01, 2024 · Hanzhi Yu, Mingzhe Chen, Yuchen Liu

In this article, the use of channel state information (CSI) for indoor positioning is studied. In the considered model, a server equipped with several antennas sends pilot signals to users, while each user uses the received pilot signals to estimate channel states for user positioning. To this end, we formulate the positioning problem as an optimization problem aiming to minimize the gap between the estimated positions and the ground truth positions of users. To solve this problem, we design a federated learning (FL) algorithm based on a complex-valued neural network (CVNN) model. Compared to standard real-valued centralized machine learning (ML) methods, our proposed algorithm has two main advantages. First, it can directly process complex-valued CSI data without data transformation. Second, it is a distributed ML method that does not require users to send their CSI data to the server. Since the output of our proposed algorithm is complex-valued, consisting of real and imaginary parts, we study the use of the CVNN to implement two learning tasks. First, the proposed algorithm directly outputs the estimated position of a user. Here, the real and imaginary parts of an output neuron represent the 2D coordinates of the user. Second, the proposed method can output two CSI features (i.e., line-of-sight/non-line-of-sight transmission link classification and time of arrival (TOA) prediction) which can be used in traditional positioning algorithms. Simulation results demonstrate that our designed CVNN based FL can reduce the mean positioning error between the estimated position and the actual position by up to 36%, compared to a real-valued neural network (RVNN) based FL, which requires transforming CSI data into real-valued data.
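
The mapping between one complex output and a 2D position can be shown with a short sketch. It assumes a single output neuron whose real part is the x coordinate and whose imaginary part is the y coordinate, as the abstract describes; the function names and the choice of Euclidean distance as the error measure are illustrative assumptions.

```python
def position_to_complex(x, y):
    """Encode a 2D position as one complex number (real = x, imaginary = y)."""
    return complex(x, y)

def complex_to_position(z):
    """Decode a complex network output back into (x, y) coordinates."""
    return (z.real, z.imag)

def mean_positioning_error(estimates, truths):
    """Mean Euclidean distance; abs() of a complex difference is that distance."""
    return sum(abs(e - t) for e, t in zip(estimates, truths)) / len(truths)
```

With this encoding, the modulus of the difference between an estimated and a true output is exactly the 2D distance between the two positions, so no separate coordinate handling is needed when scoring estimates.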

A Joint Communication and Computation Design for Probabilistic Semantic Communications

Feb 28, 2024 · Zhouxiang Zhao, Zhaohui Yang, Mingzhe Chen, Zhaoyang Zhang, H. Vincent Poor

In this paper, the problem of joint transmission and computation resource allocation for a multi-user probabilistic semantic communication (PSC) network is investigated. In the considered model, users employ semantic information extraction techniques to compress their large-sized data before transmitting it to a multi-antenna base station (BS). Our model represents large-sized data through substantial knowledge graphs, utilizing shared probability graphs between the users and the BS for efficient semantic compression. The resource allocation problem is formulated as an optimization problem with the objective of maximizing the sum of the equivalent rates of all users, subject to total power budget and semantic resource limit constraints. The computation load considered in the PSC network is formulated as a non-smooth piecewise function with respect to the semantic compression ratio. To tackle this non-convex non-smooth optimization challenge, a three-stage algorithm is proposed where the solutions for the receive beamforming matrix of the BS, transmit power of each user, and semantic compression ratio of each user are obtained stage by stage. Numerical results validate the effectiveness of our proposed scheme.

A Joint Communication and Computation Framework for Digital Twin over Wireless Networks

Feb 01, 2024 · Zhaohui Yang, Mingzhe Chen, Yuchen Liu, Zhaoyang Zhang

In this paper, the problem of low-latency communication and computation resource allocation for digital twin (DT) over wireless networks is investigated. In the considered model, multiple physical devices in the physical network (PN) need to frequently offload the computation task related data to the digital network twin (DNT), which is generated and controlled by the central server. Due to the limited energy budget of the physical devices, both computation accuracy and wireless transmission power must be considered during the DT procedure. This joint communication and computation problem is formulated as an optimization problem whose goal is to minimize the overall transmission delay of the system under total PN energy and DNT model accuracy constraints. To solve this problem, an alternating algorithm is proposed that iteratively solves the device scheduling, power control, and data offloading subproblems. For the device scheduling subproblem, the optimal solution is obtained in closed form through the dual method. For the special case with one physical device, the optimal number of transmission times is revealed. Based on the theoretical findings, the original problem is transformed into a simplified problem and the optimal device scheduling can be found. Numerical results verify that the proposed algorithm can reduce the transmission delay of the system by up to 51.2% compared to the conventional schemes.

A Survey on Indoor Visible Light Positioning Systems: Fundamentals, Applications, and Challenges

Jan 25, 2024 · Zhiyu Zhu, Yang Yang, Mingzhe Chen, Caili Guo, Julian Cheng, Shuguang Cui

The growing demand for location-based services in areas like virtual reality, robot control, and navigation has intensified the focus on indoor localization. Visible light positioning (VLP), leveraging visible light communications (VLC), has become a promising indoor positioning technology due to its high accuracy and low cost. This paper provides a comprehensive survey of VLP systems. In particular, since VLC lays the foundation for VLP, we first present a detailed overview of the principles of VLC. The performance of each positioning algorithm is also compared in terms of various metrics such as accuracy, coverage, and orientation limitation. Beyond the physical layer studies, the network design for a VLP system is also investigated, including multi-access technologies, resource allocation, and light-emitting diode (LED) placements. Next, the applications of VLP systems are reviewed. Finally, this paper outlines open issues, challenges, and future research directions for the field. In a nutshell, this paper constitutes the first holistic survey on VLP from state-of-the-art studies to practical uses.

Collaborative Reinforcement Learning Based Unmanned Aerial Vehicle (UAV) Trajectory Design for 3D UAV Tracking

Jan 22, 2024 · Yujiao Zhu, Mingzhe Chen, Sihua Wang, Ye Hu, Yuchen Liu, Changchuan Yin

In this paper, the problem of using one active unmanned aerial vehicle (UAV) and four passive UAVs to localize a target UAV in 3D space in real time is investigated. In the considered model, each passive UAV receives reflection signals from the target UAV, which are initially transmitted by the active UAV. The received reflection signals allow each passive UAV to estimate the signal transmission distance, which is then transmitted to a base station (BS) for the estimation of the position of the target UAV. Due to the movement of the target UAV, each active/passive UAV must optimize its trajectory to continuously localize the target UAV. Meanwhile, since the accuracy of the distance estimation depends on the signal-to-noise ratio of the transmission signals, the active UAV must optimize its transmit power. This problem is formulated as an optimization problem whose goal is to jointly optimize the transmit power of the active UAV and the trajectories of both active and passive UAVs so as to maximize the target UAV positioning accuracy. To solve this problem, a Z function decomposition based reinforcement learning (ZD-RL) method is proposed. Compared to value function decomposition based RL (VD-RL), the proposed method can find the probability distribution of the sum of future rewards and use it to accurately estimate the expected value of the sum of future rewards, thus finding a better transmit power for the active UAV and better trajectories for both active and passive UAVs, and improving target UAV positioning accuracy. Simulation results show that the proposed ZD-RL method can reduce the positioning errors by up to 39.4% and 64.6%, compared to VD-RL and independent deep RL methods, respectively.
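
The step from a probability distribution over the sum of future rewards to its expected value can be sketched as follows. This is a generic illustration and not the ZD-RL method itself: it assumes the distribution is categorical over a fixed set of candidate return values, and the names `atoms`, `expected_return`, and `greedy_action` are hypothetical.

```python
def expected_return(atoms, probs):
    """Mean of a categorical distribution over possible returns."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(a * p for a, p in zip(atoms, probs))

def greedy_action(atoms, dists):
    """Pick the action whose return distribution has the largest mean."""
    return max(dists, key=lambda action: expected_return(atoms, dists[action]))
```

Here `dists` maps each candidate action to its probabilities over the shared `atoms`. A value-based method would store only the mean for each action, while a distributional method keeps the whole distribution and derives the mean from it when an action has to be chosen.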

Joint User Scheduling and Computing Resource Allocation Optimization in Asynchronous Mobile Edge Computing Networks

Jan 21, 2024 · Yihan Cang, Ming Chen, Yijin Pan, Zhaohui Yang, Ye Hu, Haijian Sun, Mingzhe Chen

In this paper, the problem of joint user scheduling and computing resource allocation in asynchronous mobile edge computing (MEC) networks is studied. In such networks, edge devices offload their computational tasks to an MEC server, using the energy they harvest from this server. To get their tasks processed on time using the harvested energy, edge devices strategically schedule their task offloading and compete for the computational resources at the MEC server. Then, the MEC server executes these tasks asynchronously based on the arrival of the tasks. This joint user scheduling, time and computation resource allocation problem is posed as an optimization problem whose goal is to find the optimal scheduling and allocation strategy that minimizes the energy consumption of these mobile computing tasks. To solve this mixed-integer non-linear programming problem, the generalized Benders decomposition method is adopted, which decomposes the original problem into a primal problem and a master problem. Specifically, the primal problem is related to computation resource and time slot allocation, for which the optimal closed-form solution is obtained. The master problem regarding discrete user scheduling variables is constructed by adding optimality cuts or feasibility cuts according to whether the primal problem is feasible; it is a standard mixed-integer linear programming problem and can be efficiently solved. By iteratively solving the primal problem and the master problem, the optimal scheduling and resource allocation scheme is obtained. Simulation results demonstrate that the proposed asynchronous computing framework reduces energy consumption by 87.17% compared with its conventional synchronous computing counterpart.
