Counterfactually Fair Representation

Nov 09, 2023Zhiqun Zuo, Mohammad Mahdi Khalili, Xueru Zhang

The use of machine learning models in high-stake applications (e.g., healthcare, lending, college admission) has raised growing concerns due to potential biases against protected social groups. Various fairness notions and methods have been proposed to mitigate such biases. In this work, we focus on Counterfactual Fairness (CF), a fairness notion that is dependent on an underlying causal graph and first proposed by Kusner \textit{et al.}~\cite{kusner2017counterfactual}; it requires that the outcome an individual perceives is the same in the real world as it would be in a "counterfactual" world, in which the individual belongs to another social group. Learning fair models satisfying CF can be challenging. It was shown in \cite{kusner2017counterfactual} that a sufficient condition for satisfying CF is to \textbf{not} use features that are descendants of sensitive attributes in the causal graph. This implies a simple method that learns CF models only using non-descendants of sensitive attributes while eliminating all descendants. Although several subsequent works proposed methods that use all features for training CF models, there is no theoretical guarantee that they can satisfy CF. In contrast, this work proposes a new algorithm that trains models using all the available features. We theoretically and empirically show that models trained with this method can satisfy CF\footnote{The code repository for this work can be found in \url{https://github.com/osu-srml/CF_Representation_Learning}}.

Loss Balancing for Fair Supervised Learning

Nov 07, 2023Mohammad Mahdi Khalili, Xueru Zhang, Mahed Abroshan

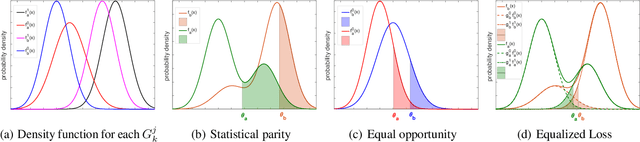

Supervised learning models have been used in various domains such as lending, college admission, face recognition, natural language processing, etc. However, they may inherit pre-existing biases from training data and exhibit discrimination against protected social groups. Various fairness notions have been proposed to address unfairness issues. In this work, we focus on Equalized Loss (EL), a fairness notion that requires the expected loss to be (approximately) equalized across different groups. Imposing EL on the learning process leads to a non-convex optimization problem even if the loss function is convex, and the existing fair learning algorithms cannot properly be adopted to find the fair predictor under the EL constraint. This paper introduces an algorithm that can leverage off-the-shelf convex programming tools (e.g., CVXPY) to efficiently find the global optimum of this non-convex optimization. In particular, we propose the ELminimizer algorithm, which finds the optimal fair predictor under EL by reducing the non-convex optimization to a sequence of convex optimization problems. We theoretically prove that our algorithm finds the global optimal solution under certain conditions. Then, we support our theoretical results through several empirical studies.

Federated Learning with Reduced Information Leakage and Computation

Oct 10, 2023Tongxin Yin, Xueru Zhang, Mohammad Mahdi Khalili, Mingyan Liu

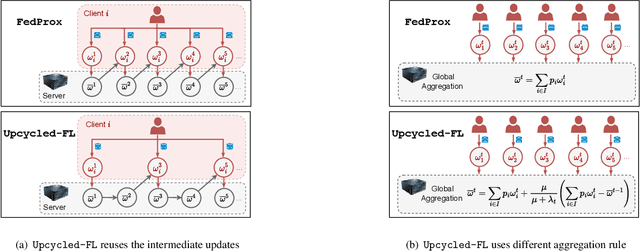

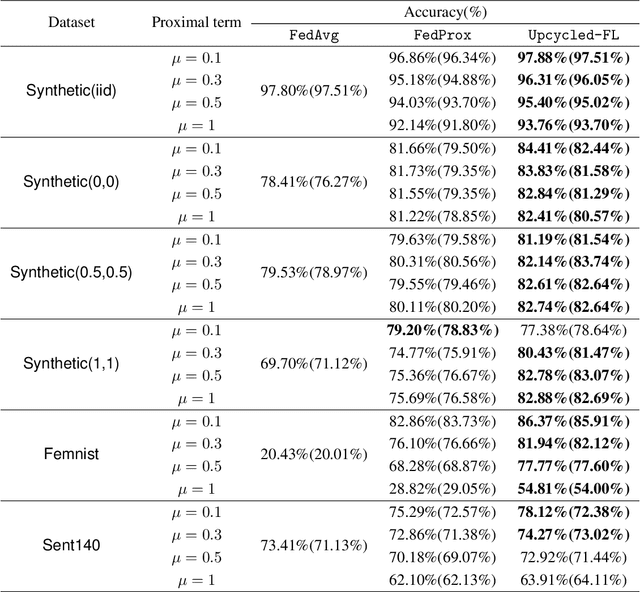

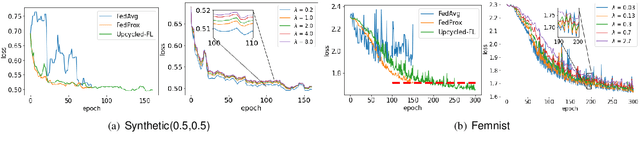

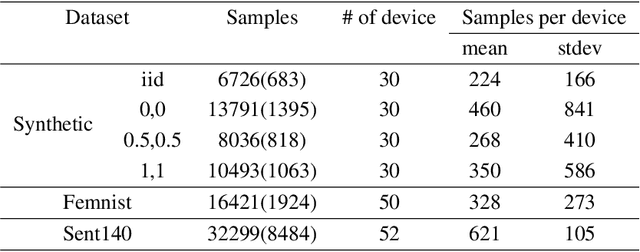

Federated learning (FL) is a distributed learning paradigm that allows multiple decentralized clients to collaboratively learn a common model without sharing local data. Although local data is not exposed directly, privacy concerns nonetheless exist as clients' sensitive information can be inferred from intermediate computations. Moreover, such information leakage accumulates substantially over time as the same data is repeatedly used during the iterative learning process. As a result, it can be particularly difficult to balance the privacy-accuracy trade-off when designing privacy-preserving FL algorithms. In this paper, we introduce Upcycled-FL, a novel federated learning framework with first-order approximation applied at every even iteration. Under this framework, half of the FL updates incur no information leakage and require much less computation. We first conduct the theoretical analysis on the convergence (rate) of Upcycled-FL, and then apply perturbation mechanisms to preserve privacy. Experiments on real-world data show that Upcycled-FL consistently outperforms existing methods over heterogeneous data, and significantly improves privacy-accuracy trade-off while reducing 48% of the training time on average.

Symbolic Metamodels for Interpreting Black-boxes Using Primitive Functions

Feb 09, 2023Mahed Abroshan, Saumitra Mishra, Mohammad Mahdi Khalili

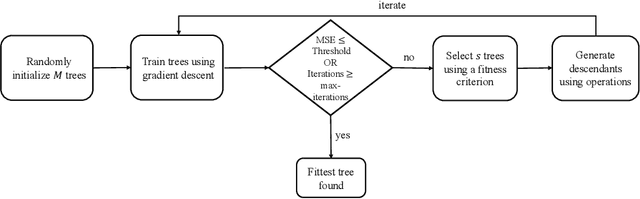

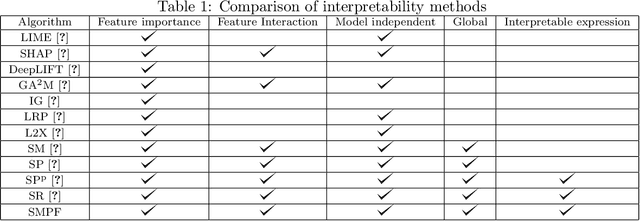

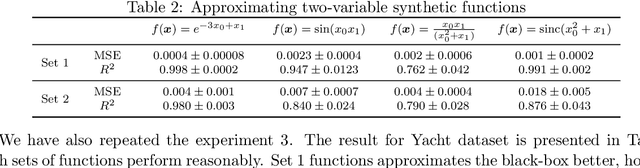

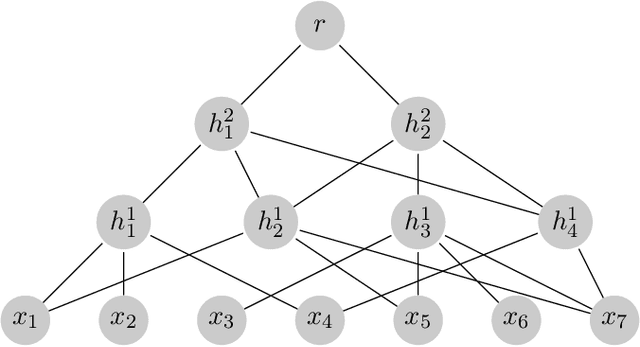

One approach for interpreting black-box machine learning models is to find a global approximation of the model using simple interpretable functions, which is called a metamodel (a model of the model). Approximating the black-box with a metamodel can be used to 1) estimate instance-wise feature importance; 2) understand the functional form of the model; 3) analyze feature interactions. In this work, we propose a new method for finding interpretable metamodels. Our approach utilizes Kolmogorov superposition theorem, which expresses multivariate functions as a composition of univariate functions (our primitive parameterized functions). This composition can be represented in the form of a tree. Inspired by symbolic regression, we use a modified form of genetic programming to search over different tree configurations. Gradient descent (GD) is used to optimize the parameters of a given configuration. Our method is a novel memetic algorithm that uses GD not only for training numerical constants but also for the training of building blocks. Using several experiments, we show that our method outperforms recent metamodeling approaches suggested for interpreting black-boxes.

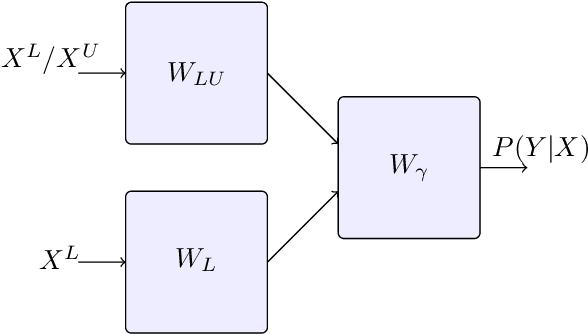

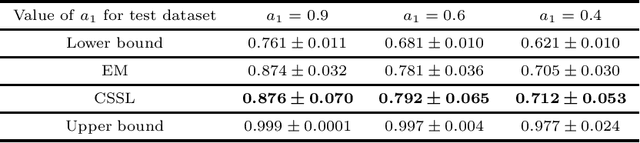

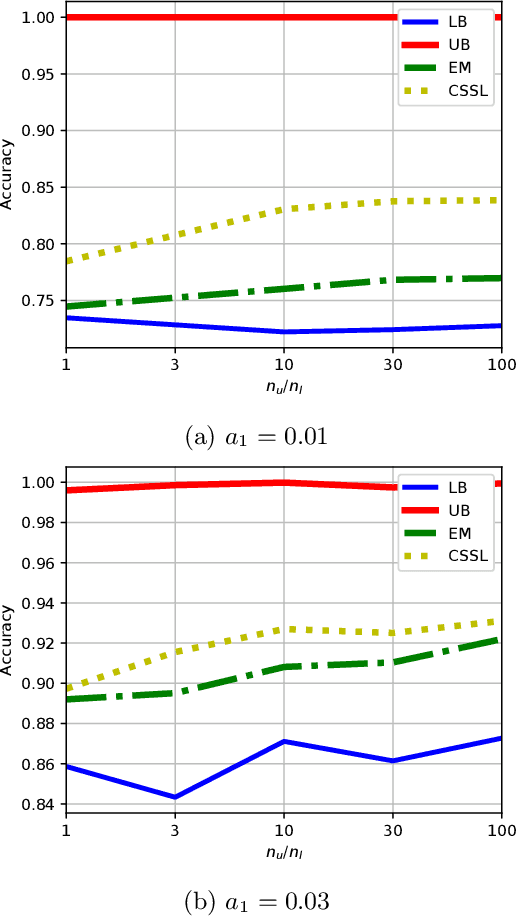

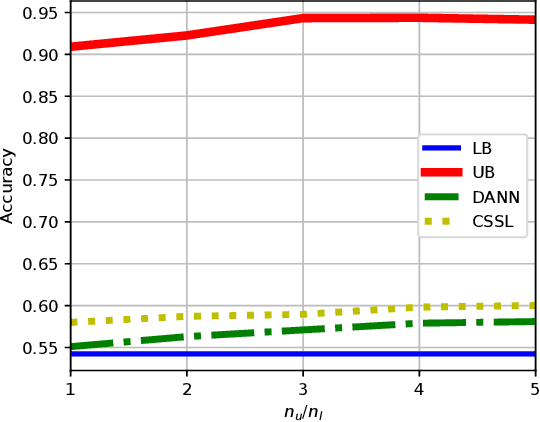

An Information-theoretical Approach to Semi-supervised Learning under Covariate-shift

Feb 24, 2022Gholamali Aminian, Mahed Abroshan, Mohammad Mahdi Khalili, Laura Toni, Miguel R. D. Rodrigues

A common assumption in semi-supervised learning is that the labeled, unlabeled, and test data are drawn from the same distribution. However, this assumption is not satisfied in many applications. In many scenarios, the data is collected sequentially (e.g., healthcare) and the distribution of the data may change over time often exhibiting so-called covariate shifts. In this paper, we propose an approach for semi-supervised learning algorithms that is capable of addressing this issue. Our framework also recovers some popular methods, including entropy minimization and pseudo-labeling. We provide new information-theoretical based generalization error upper bounds inspired by our novel framework. Our bounds are applicable to both general semi-supervised learning and the covariate-shift scenario. Finally, we show numerically that our method outperforms previous approaches proposed for semi-supervised learning under the covariate shift.

Fair Sequential Selection Using Supervised Learning Models

Oct 26, 2021Mohammad Mahdi Khalili, Xueru Zhang, Mahed Abroshan

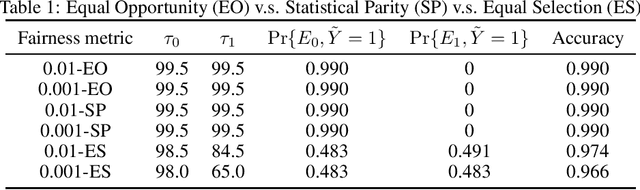

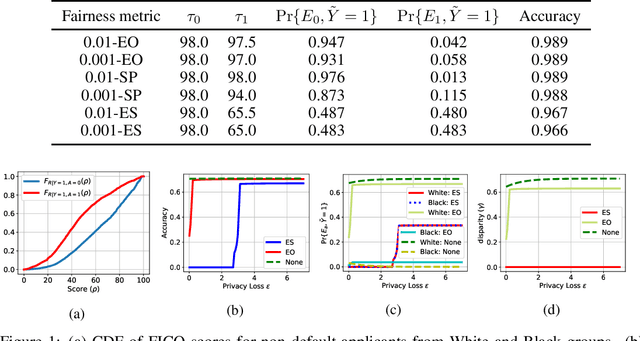

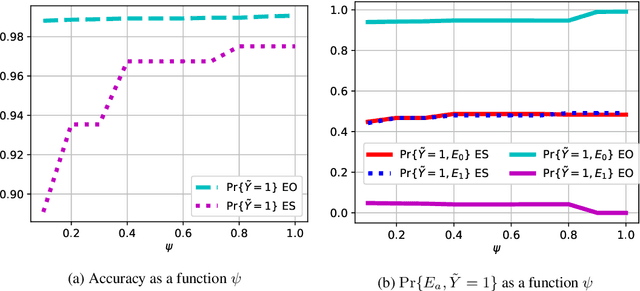

We consider a selection problem where sequentially arrived applicants apply for a limited number of positions/jobs. At each time step, a decision maker accepts or rejects the given applicant using a pre-trained supervised learning model until all the vacant positions are filled. In this paper, we discuss whether the fairness notions (e.g., equal opportunity, statistical parity, etc.) that are commonly used in classification problems are suitable for the sequential selection problems. In particular, we show that even with a pre-trained model that satisfies the common fairness notions, the selection outcomes may still be biased against certain demographic groups. This observation implies that the fairness notions used in classification problems are not suitable for a selection problem where the applicants compete for a limited number of positions. We introduce a new fairness notion, ``Equal Selection (ES),'' suitable for sequential selection problems and propose a post-processing approach to satisfy the ES fairness notion. We also consider a setting where the applicants have privacy concerns, and the decision maker only has access to the noisy version of sensitive attributes. In this setting, we can show that the perfect ES fairness can still be attained under certain conditions.

Improving Fairness and Privacy in Selection Problems

Dec 07, 2020Mohammad Mahdi Khalili, Xueru Zhang, Mahed Abroshan, Somayeh Sojoudi

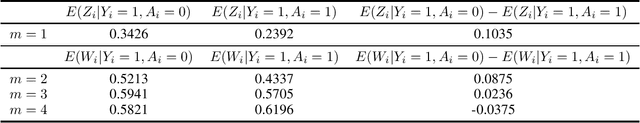

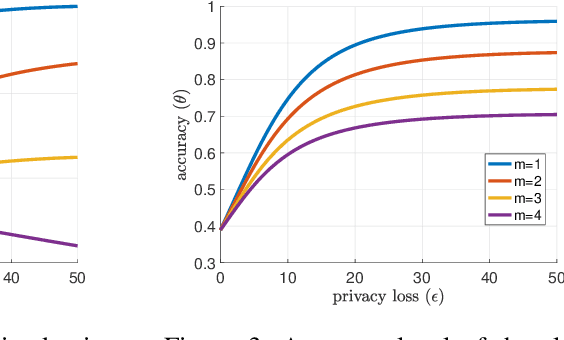

Supervised learning models have been increasingly used for making decisions about individuals in applications such as hiring, lending, and college admission. These models may inherit pre-existing biases from training datasets and discriminate against protected attributes (e.g., race or gender). In addition to unfairness, privacy concerns also arise when the use of models reveals sensitive personal information. Among various privacy notions, differential privacy has become popular in recent years. In this work, we study the possibility of using a differentially private exponential mechanism as a post-processing step to improve both fairness and privacy of supervised learning models. Unlike many existing works, we consider a scenario where a supervised model is used to select a limited number of applicants as the number of available positions is limited. This assumption is well-suited for various scenarios, such as job application and college admission. We use ``equal opportunity'' as the fairness notion and show that the exponential mechanisms can make the decision-making process perfectly fair. Moreover, the experiments on real-world datasets show that the exponential mechanism can improve both privacy and fairness, with a slight decrease in accuracy compared to the model without post-processing.

Recycled ADMM: Improving the Privacy and Accuracy of Distributed Algorithms

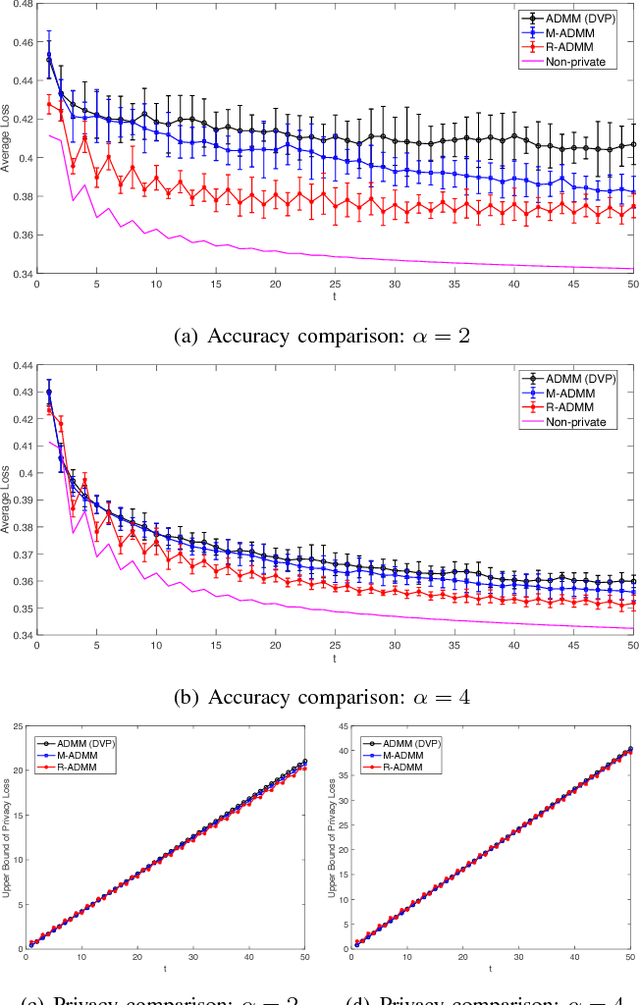

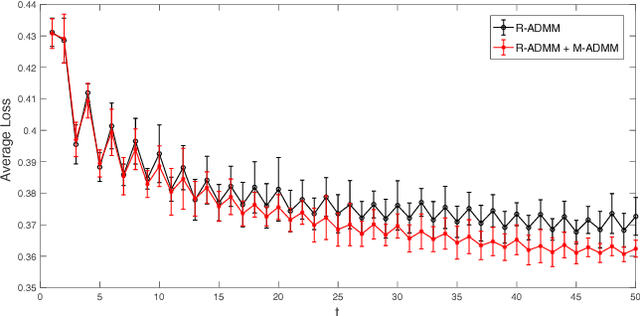

Oct 08, 2019Xueru Zhang, Mohammad Mahdi Khalili, Mingyan Liu

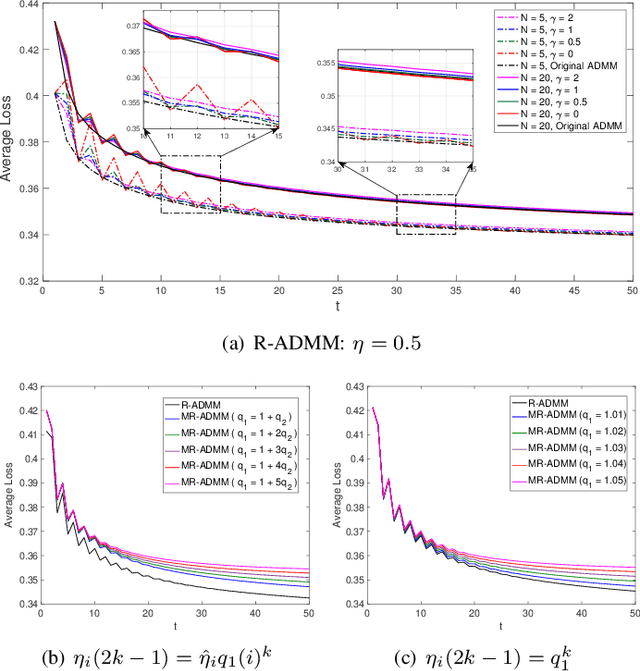

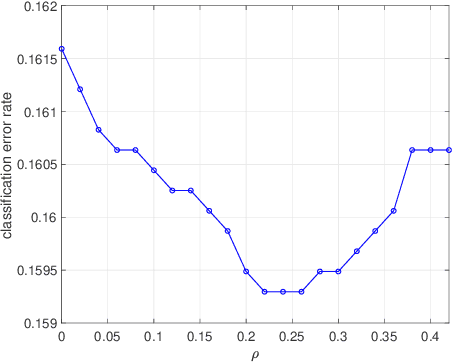

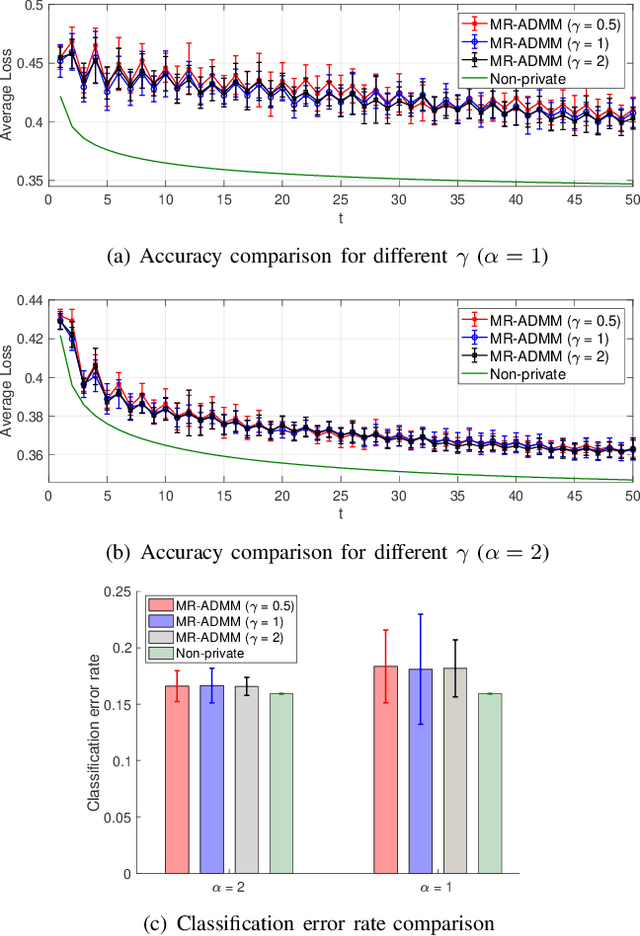

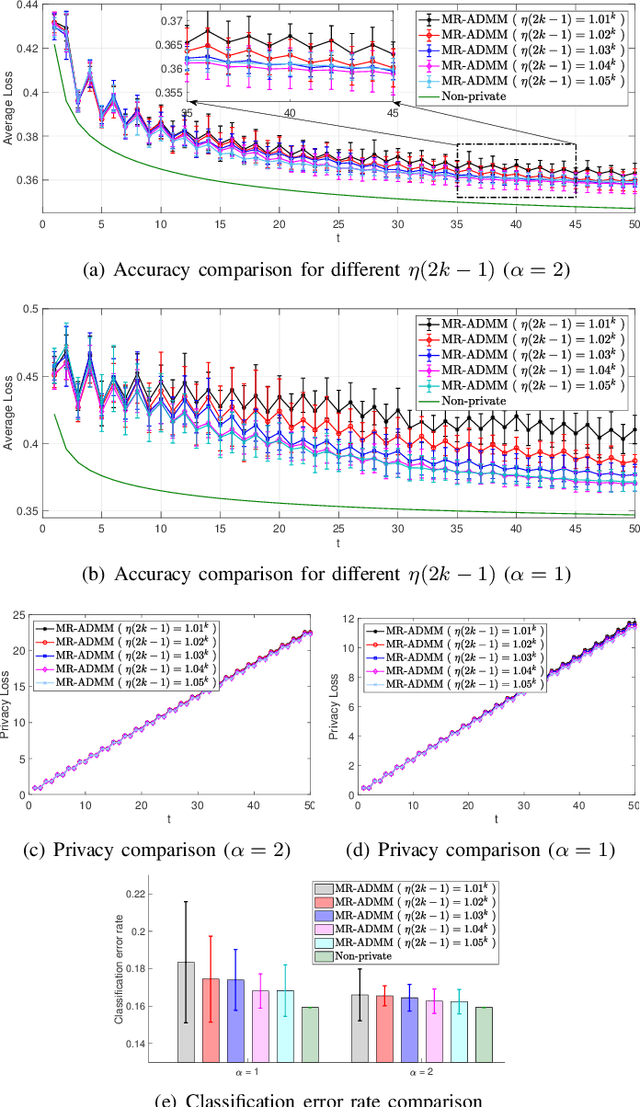

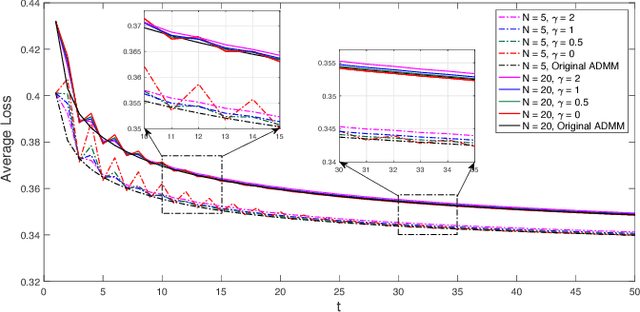

Alternating direction method of multiplier (ADMM) is a powerful method to solve decentralized convex optimization problems. In distributed settings, each node performs computation with its local data and the local results are exchanged among neighboring nodes in an iterative fashion. During this iterative process the leakage of data privacy arises and can accumulate significantly over many iterations, making it difficult to balance the privacy-accuracy tradeoff. We propose Recycled ADMM (R-ADMM), where a linear approximation is applied to every even iteration, its solution directly calculated using only results from the previous, odd iteration. It turns out that under such a scheme, half of the updates incur no privacy loss and require much less computation compared to the conventional ADMM. Moreover, R-ADMM can be further modified (MR-ADMM) such that each node independently determines its own penalty parameter over iterations. We obtain a sufficient condition for the convergence of both algorithms and provide the privacy analysis based on objective perturbation. It can be shown that the privacy-accuracy tradeoff can be improved significantly compared with conventional ADMM.

* arXiv admin note: substantial text overlap with arXiv:1810.03197

Long term impact of fair machine learning in sequential decision making: representation disparity and group retention

May 02, 2019Xueru Zhang, Mohammad Mahdi Khalili, Cem Tekin, Mingyan Liu

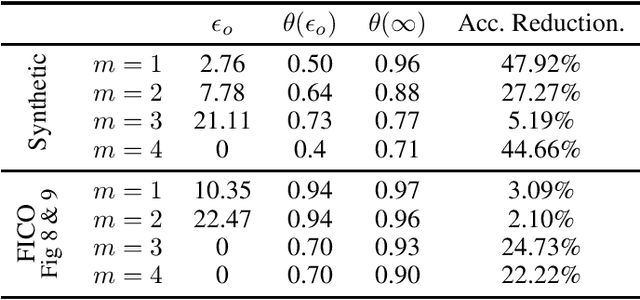

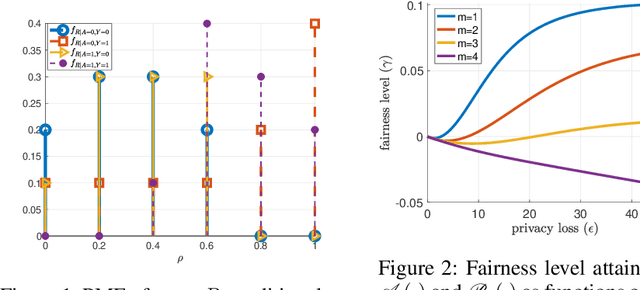

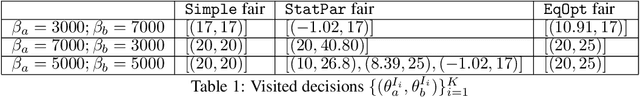

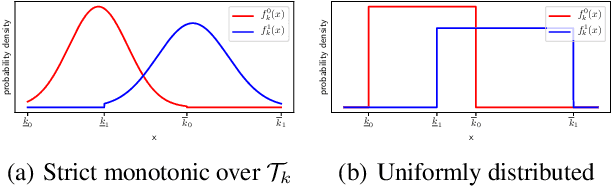

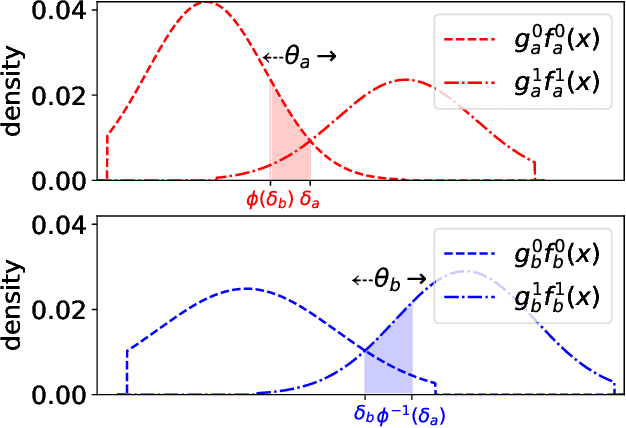

Machine learning models trained on data from multiple demographic groups can inherit representation disparity (Hashimoto et al., 2018) that may exist in the data: the group contributing less to the training process may suffer higher loss in model accuracy; this in turn can degrade population retention in these groups over time in terms of their contribution to the training process of future models, which then exacerbates representation disparity in the long run. In this study, we seek to understand the interplay between the model accuracy and the underlying group representation and how they evolve in a sequential decision setting over an infinite horizon, and how the use of fair machine learning plays a role in this process. Using a simple user dynamics (arrival and departure) model, we characterize the long-term property of using machine learning models under a set of fairness criteria imposed on each stage of the decision process, including the commonly used statistical parity and equal opportunity fairness. We show that under this particular arrival/departure model, both these criteria cause the representation disparity to worsen over time, resulting in groups diminishing entirely from the sample pool, while the criterion of equalized loss fares much better. Our results serve to highlight the fact that fairness cannot be defined outside the larger feedback loop where past actions taken by users (who are either subject to the decisions made by the algorithm or whose data are used to train the algorithm or both) will determine future observations and decisions.

Recycled ADMM: Improve Privacy and Accuracy with Less Computation in Distributed Algorithms

Oct 07, 2018Xueru Zhang, Mohammad Mahdi Khalili, Mingyan Liu

Alternating direction method of multiplier (ADMM) is a powerful method to solve decentralized convex optimization problems. In distributed settings, each node performs computation with its local data and the local results are exchanged among neighboring nodes in an iterative fashion. During this iterative process the leakage of data privacy arises and can accumulate significantly over many iterations, making it difficult to balance the privacy-utility tradeoff. In this study we propose Recycled ADMM (R-ADMM), where a linear approximation is applied to every even iteration, its solution directly calculated using only results from the previous, odd iteration. It turns out that under such a scheme, half of the updates incur no privacy loss and require much less computation compared to the conventional ADMM. We obtain a sufficient condition for the convergence of R-ADMM and provide the privacy analysis based on objective perturbation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge