Rethinking Multiple Instance Learning for Whole Slide Image Classification: A Bag-Level Classifier is a Good Instance-Level Teacher

Dec 02, 2023 · Hongyi Wang, Luyang Luo, Fang Wang, Ruofeng Tong, Yen-Wei Chen, Hongjie Hu, Lanfen Lin, Hao Chen

Multiple Instance Learning (MIL) has demonstrated promise in Whole Slide Image (WSI) classification. However, a major challenge persists due to the high computational cost of processing these gigapixel images. Existing methods generally adopt a two-stage approach, comprising a non-learnable feature embedding stage and a classifier training stage. Although using a fixed feature embedder pre-trained on other domains greatly reduces memory consumption, such a scheme also creates a disparity between the two stages, leading to suboptimal classification accuracy. To address this issue, we propose that a bag-level classifier can be a good instance-level teacher. Based on this idea, we design Iteratively Coupled Multiple Instance Learning (ICMIL) to couple the embedder and the bag classifier at a low cost. ICMIL first fixes the patch embedder to train the bag classifier, and then fixes the bag classifier to fine-tune the patch embedder. The refined embedder in turn generates better representations, leading to a more accurate classifier in the next iteration. To make embedder fine-tuning more flexible and effective, we also introduce a teacher-student framework that efficiently distills the category knowledge in the bag classifier to guide the instance-level fine-tuning of the embedder. Thorough experiments on four distinct datasets validate the effectiveness of ICMIL. The results consistently show that our method significantly improves the performance of existing MIL backbones, achieving state-of-the-art results. The code is available at: https://github.com/Dootmaan/ICMIL/tree/confidence_based
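
To make the alternating schedule concrete, here is a toy, pure-Python sketch. It is not the authors' implementation (the repository linked above holds that), and every detail is an assumption for illustration: instances are scalars, the "patch embedder" is an affine map `h = a * x + c`, the bag classifier is logistic regression on max-pooled embeddings, and the teacher keeps only instances whose frozen-classifier confidence reaches a hypothetical threshold `tau`. Names such as `icmil_toy` and `make_toy_bags` are hypothetical.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def make_toy_bags(n_bags=40, bag_size=5, seed=0):
    """Hypothetical data: scalar 'patches'; each positive bag hides one high-valued patch."""
    rng = random.Random(seed)
    bags = []
    for i in range(n_bags):
        label = i % 2
        patches = [rng.uniform(0.0, 1.0) for _ in range(bag_size)]
        if label:
            patches[rng.randrange(bag_size)] = rng.uniform(2.0, 3.0)
        bags.append((patches, label))
    return bags

def icmil_toy(bags, iterations=3, tau=0.9, lr=0.5, steps=200):
    a, c = 1.0, 0.0  # toy patch embedder: h = a * x + c
    w, b = 0.0, 0.0  # toy bag classifier: P(Y=1) = sigmoid(w * max_i h_i + b)

    def train_classifier():  # phase 1: embedder frozen, only w and b change
        nonlocal w, b
        for _ in range(steps):
            gw = gb = 0.0
            for patches, y in bags:
                m = max(a * x + c for x in patches)  # max-pooled bag embedding
                g = sigmoid(w * m + b) - y  # d(BCE)/d(logit)
                gw += g * m
                gb += g
            w -= lr * gw / len(bags)
            b -= lr * gb / len(bags)

    def finetune_embedder():  # phase 2: bag classifier frozen, only a and c change
        nonlocal a, c
        # Teacher: the frozen bag classifier scores every instance embedding
        # produced by the current embedder; keep only confident pseudo-labels.
        pseudo = []
        for patches, _ in bags:
            for x in patches:
                p = sigmoid(w * (a * x + c) + b)
                if max(p, 1.0 - p) >= tau:
                    pseudo.append((x, 1.0 if p >= 0.5 else 0.0))
        if not pseudo:
            return
        # Student: the embedder, trained through the same frozen classifier.
        for _ in range(steps // 4):
            ga = gc = 0.0
            for x, y in pseudo:
                g = (sigmoid(w * (a * x + c) + b) - y) * w  # d(BCE)/dh
                ga += g * x
                gc += g
            a -= 0.1 * lr * ga / len(pseudo)
            c -= 0.1 * lr * gc / len(pseudo)

    for _ in range(iterations):
        train_classifier()
        finetune_embedder()
    train_classifier()  # refit the classifier on the refined embeddings
    return a, c, w, b

def bag_accuracy(bags, a, c, w, b):
    hits = 0
    for patches, y in bags:
        p = sigmoid(w * max(a * x + c for x in patches) + b)
        hits += int((p >= 0.5) == (y == 1))
    return hits / len(bags)

bags = make_toy_bags()
print(bag_accuracy(bags, *icmil_toy(bags)))
```

Because the toy embedder is monotone while `a` stays positive, fine-tuning it cannot break the separability of the max-pooled bag embeddings; the final `train_classifier()` call refits the bag classifier to the refined embeddings, mirroring the "better representations, more accurate classifier" step.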

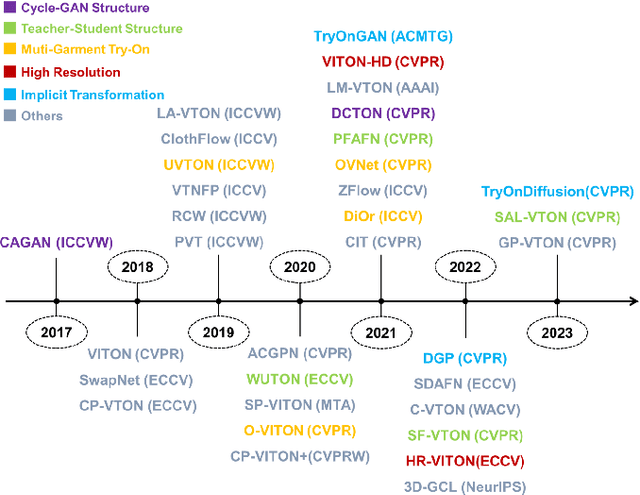

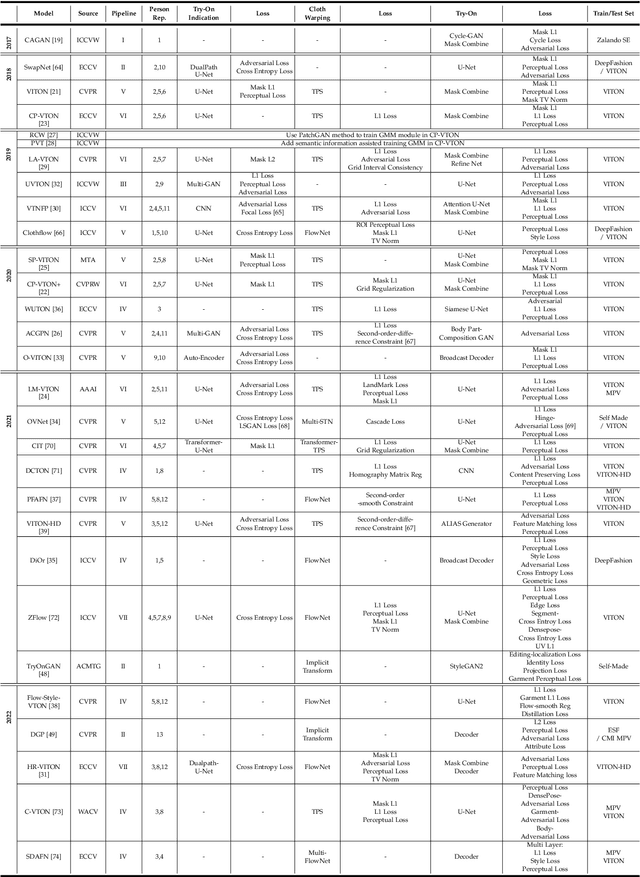

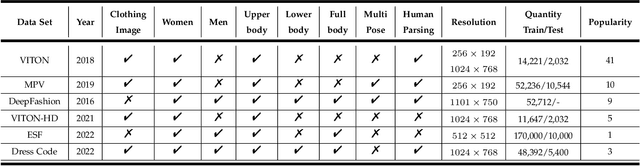

Image-Based Virtual Try-On: A Survey

Nov 08, 2023 · Dan Song, Xuanpu Zhang, Juan Zhou, Weizhi Nie, Ruofeng Tong, An-An Liu

Image-based virtual try-on aims to synthesize a naturally dressed person image given a clothing image. It is revolutionizing online shopping and inspiring related topics in image generation, and thus has both research significance and commercial potential. However, there is a large gap between current research progress and commercial applications, and the field lacks a comprehensive overview that could accelerate its development. In this survey, we provide a comprehensive analysis of state-of-the-art techniques and methodologies in terms of pipeline architecture, person representation, and key modules such as try-on indication, clothing warping, and the try-on stage. We propose a new CLIP-based semantic criterion and evaluate representative methods with uniformly implemented evaluation metrics on the same dataset. In addition to quantitative and qualitative evaluation of current open-source methods, we also use ControlNet to fine-tune a recent large image generation model (PBE) to show the potential of large-scale models for the image-based virtual try-on task. Finally, we identify unresolved issues and outline future research directions to highlight key trends and inspire further exploration. The uniformly implemented evaluation metrics, dataset, and collected methods will be made publicly available at https://github.com/little-misfit/Survey-Of-Virtual-Try-On.
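
The abstract does not define the CLIP-based criterion, so the sketch below only assumes one plausible form: the cosine similarity between CLIP image embeddings of a try-on result and a reference image, averaged over a test set. The embeddings are taken as precomputed plain vectors (no CLIP model is loaded), and `mean_semantic_score` is a hypothetical name.

```python
import math
from typing import Iterable, Sequence, Tuple

def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    if len(u) != len(v):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cannot compare a zero-length embedding")
    return dot / (norm_u * norm_v)

def mean_semantic_score(
    pairs: Iterable[Tuple[Sequence[float], Sequence[float]]]
) -> float:
    """Average similarity over (try-on result, reference) embedding pairs."""
    scores = [cosine_similarity(gen, ref) for gen, ref in pairs]
    if not scores:
        raise ValueError("no pairs to score")
    return sum(scores) / len(scores)
```

Cosine similarity ignores embedding magnitude, so the score reflects semantic direction rather than image-level intensity statistics, which is the usual reason to compare CLIP features this way.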

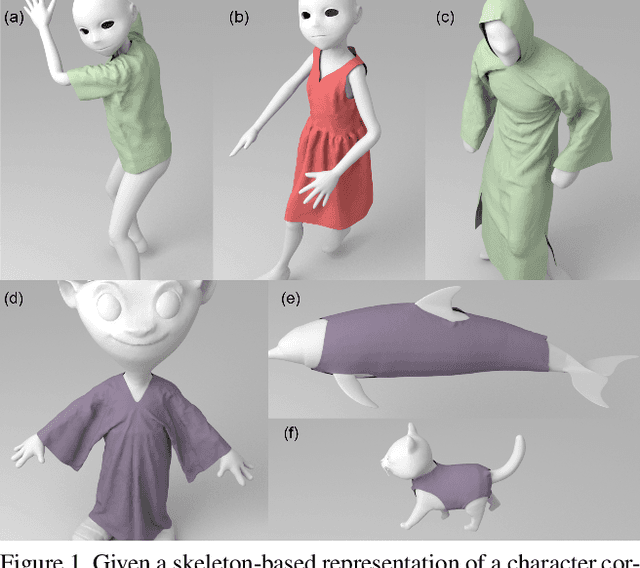

CTSN: Predicting Cloth Deformation for Skeleton-based Characters with a Two-stream Skinning Network

May 30, 2023 · Yudi Li, Min Tang, Yun Yang, Ruofeng Tong, Shuangcai Yang, Yao Li, Bailin An, Qilong Kou

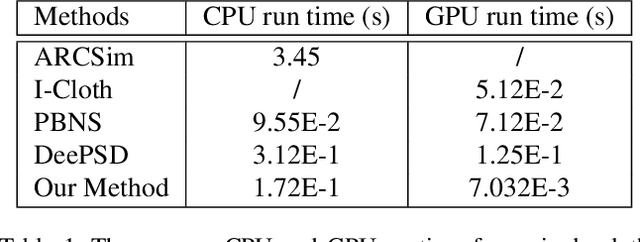

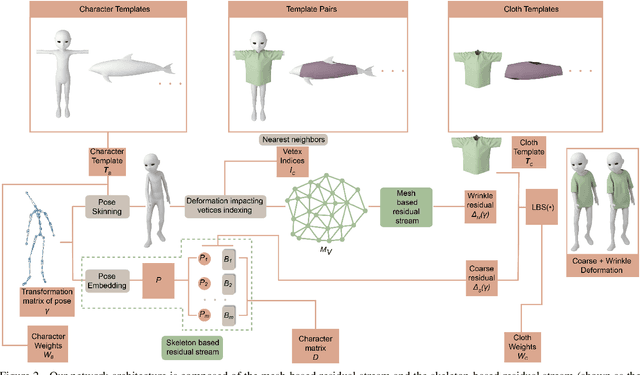

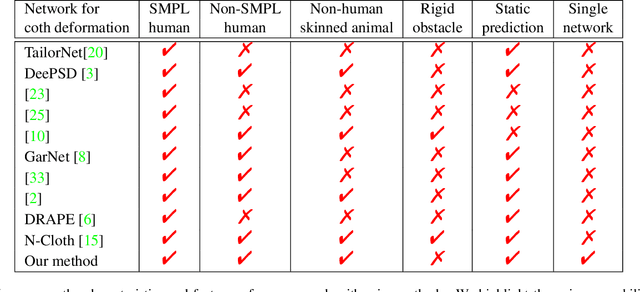

We present a novel learning method that uses a two-stream network to predict cloth deformation for skeleton-based characters. The characters processed by our approach are not limited to humans; they can also be skeleton-based representations of non-human targets such as fish or pets. Our network architecture consists of skeleton-based and mesh-based residual networks that learn the coarse and wrinkle features as the overall residual from the template cloth mesh. Our network predicts the deformation of loose or tight-fitting clothing and dresses. We keep the memory footprint of our network low, which reduces storage and computational requirements. In practice, predicting a single cloth mesh for a skeleton-based character takes about 7 milliseconds on an NVIDIA GeForce RTX 3090 GPU. Compared with prior methods, our network generates fine deformation results with details and wrinkles.
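
A minimal sketch of how a two-stream residual could be composed, assuming (the abstract does not say) that the skeleton-based stream outputs a coarse per-vertex offset, the mesh-based stream a wrinkle offset, and that both are simply added to the template cloth mesh. `compose_cloth` is a hypothetical helper; the networks themselves are omitted.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

def compose_cloth(
    template: List[Vec3], coarse: List[Vec3], wrinkle: List[Vec3]
) -> List[Vec3]:
    """Deformed cloth = template + coarse residual + wrinkle residual, per vertex.

    Assumption: both streams predict per-vertex offsets in the template's
    vertex order, and their outputs are summed.
    """
    if not (len(template) == len(coarse) == len(wrinkle)):
        raise ValueError("all inputs must list the same vertices")
    return [
        tuple(t + r + f for t, r, f in zip(v, dc, dw))
        for v, dc, dw in zip(template, coarse, wrinkle)
    ]
```

Predicting offsets from a fixed template, rather than absolute positions, keeps each stream's output small and centered near zero, which is a common motivation for residual formulations.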

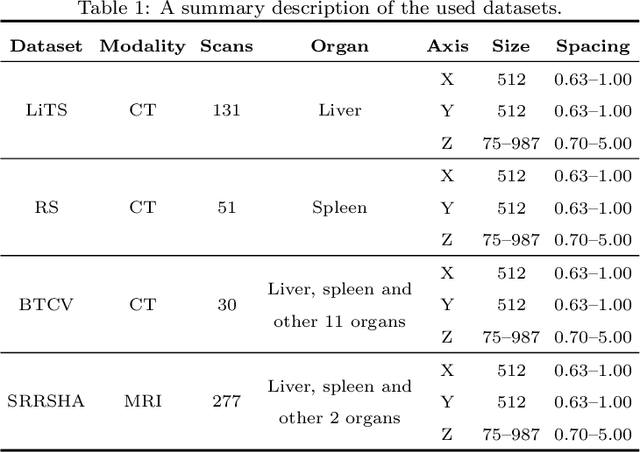

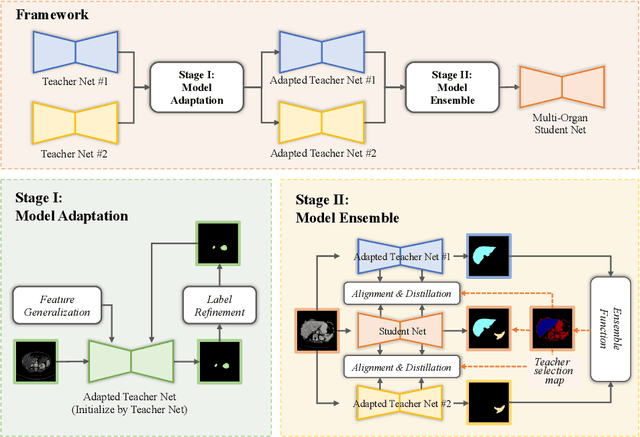

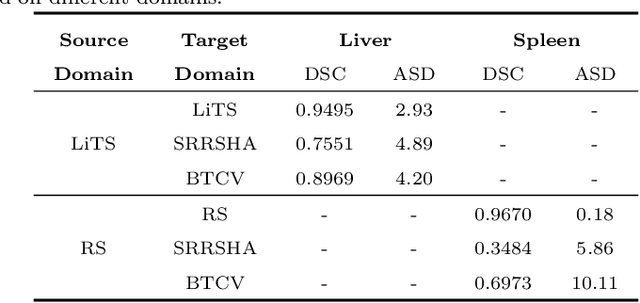

Tailored Multi-Organ Segmentation with Model Adaptation and Ensemble

Apr 14, 2023 · Jiahua Dong, Guohua Cheng, Yue Zhang, Chengtao Peng, Yu Song, Ruofeng Tong, Lanfen Lin, Yen-Wei Chen

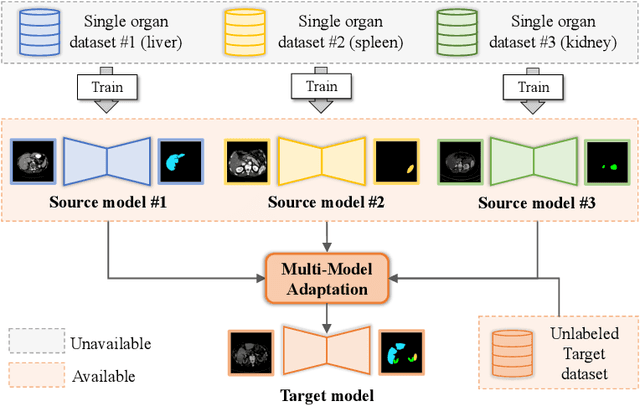

Multi-organ segmentation, which identifies and separates different organs in medical images, is a fundamental task in medical image analysis. Recently, the immense success of deep learning has motivated its wide adoption in multi-organ segmentation. However, because annotation is labor-intensive and requires expertise, multi-organ annotations are usually scarce, which makes it difficult to obtain sufficient training data for deep learning-based methods. In this paper, we address this issue by combining off-the-shelf single-organ segmentation models into a multi-organ segmentation model on the target dataset, which removes the dependence on annotated data for multi-organ segmentation. To this end, we propose a novel dual-stage method that consists of a Model Adaptation stage and a Model Ensemble stage. The first stage enhances the generalization of each off-the-shelf segmentation model on the target domain, while the second stage distills and integrates knowledge from the multiple adapted single-organ segmentation models. Extensive experiments on four abdomen datasets demonstrate that our method can effectively leverage off-the-shelf single-organ segmentation models to obtain a tailored model for multi-organ segmentation with high accuracy.
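
The Model Ensemble stage distills knowledge from several single-organ models, and its details are not given here. As a simpler, hypothetical baseline for the same goal, the sketch below merges per-organ foreground probabilities into one multi-organ label map by a per-voxel arg-max with a background threshold, the kind of fused map that could serve as a pseudo-label. This is not the paper's distillation method, and `fuse_single_organ_predictions` is an illustrative name.

```python
from typing import Dict, List, Sequence

def fuse_single_organ_predictions(
    prob_maps: Dict[int, Sequence[float]], threshold: float = 0.5
) -> List[int]:
    """Merge per-organ foreground probabilities into one label map.

    prob_maps maps an organ label (1, 2, ...) to the flattened voxel-wise
    foreground probabilities predicted by that organ's single-organ model.
    Each voxel gets the most confident organ whose probability is strictly
    above `threshold`; otherwise it is background (label 0).
    """
    if not prob_maps:
        raise ValueError("need at least one single-organ prediction")
    if 0 in prob_maps:
        raise ValueError("label 0 is reserved for background")
    sizes = {len(p) for p in prob_maps.values()}
    if len(sizes) != 1:
        raise ValueError("all probability maps must have the same size")
    fused = []
    for i in range(sizes.pop()):
        best_label, best_p = 0, threshold
        for label in sorted(prob_maps):
            if prob_maps[label][i] > best_p:
                best_label, best_p = label, prob_maps[label][i]
        fused.append(best_label)
    return fused
```

Resolving overlaps by the highest probability is the simplest conflict rule; when two single-organ models both claim a voxel, a learned ensemble stage can do better than this hard arg-max.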

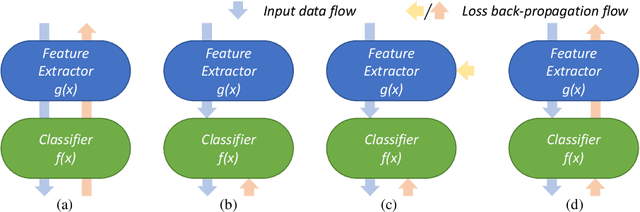

Iteratively Coupled Multiple Instance Learning from Instance to Bag Classifier for Whole Slide Image Classification

Mar 28, 2023 · Hongyi Wang, Luyang Luo, Fang Wang, Ruofeng Tong, Yen-Wei Chen, Hongjie Hu, Lanfen Lin, Hao Chen

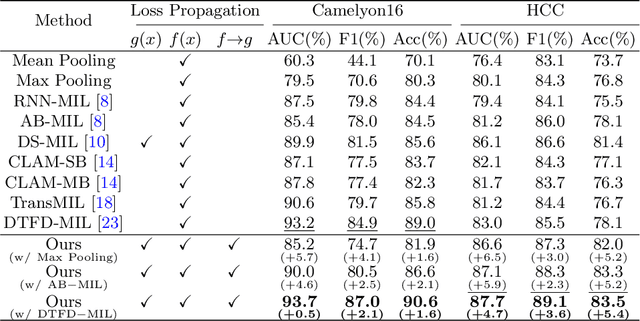

Whole Slide Image (WSI) classification remains challenging due to the extremely high resolution of WSIs and the absence of fine-grained labels. When only slide-level labels are available, WSI classification is usually formulated as a Multiple Instance Learning (MIL) problem. MIL methods involve a patch embedding process and a bag-level classification process, but training them end-to-end is prohibitively expensive. Therefore, existing methods usually train them separately, or skip training the embedder altogether. Such schemes deny the patch embedder access to slide-level labels, resulting in inconsistencies within the MIL pipeline. To overcome this issue, we propose a novel framework called Iteratively Coupled MIL (ICMIL), which bridges the loss back-propagation process from the bag-level classifier to the patch embedder. In ICMIL, we use the category information in the bag-level classifier to guide the patch-level fine-tuning of the patch feature extractor. The refined embedder then generates better instance representations, yielding a more accurate bag-level classifier. By coupling the patch embedder and the bag classifier at a low cost, our framework enables information exchange between the two processes, benefiting the entire MIL classification model. We tested our framework on two datasets using three different backbones, and the results show consistent performance improvements over state-of-the-art MIL methods. Code will be made available upon acceptance.
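
A minimal sketch of the MIL formulation mentioned here, under the standard MIL assumption (a slide is positive if and only if at least one patch is positive) and with max pooling as the bag aggregator. ICMIL is described as working with several MIL backbones, so max pooling is only an illustrative choice. The patch-count helper shows why end-to-end training is expensive; the slide and patch sizes used with it are hypothetical.

```python
from typing import Sequence

def bag_label(instance_labels: Sequence[int]) -> int:
    """Standard MIL assumption: a slide is positive iff any patch is positive."""
    return int(any(instance_labels))

def bag_score(instance_scores: Sequence[float]) -> float:
    """Max pooling: the slide score is the score of its most suspicious patch."""
    if not instance_scores:
        raise ValueError("a bag needs at least one instance")
    return max(instance_scores)

def patches_per_slide(width: int, height: int, patch: int) -> int:
    """Number of non-overlapping patch x patch tiles that fit in a slide."""
    return (width // patch) * (height // patch)
```

For a hypothetical 100,000 × 100,000-pixel slide cut into 256 × 256 patches, `patches_per_slide(100_000, 100_000, 256)` returns 152,100: a single slide-level label would have to supervise the embedder through all of those patches at once, which is why the embedder is usually frozen.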

Super-Resolution Based Patch-Free 3D Medical Image Segmentation with Self-Supervised Guidance

Oct 26, 2022 · Hongyi Wang, Lanfen Lin, Hongjie Hu, Qingqing Chen, Yinhao Li, Yutaro Iwamoto, Xian-Hua Han, Yen-Wei Chen, Ruofeng Tong

High-resolution (HR) 3D medical image segmentation plays an important role in clinical diagnosis. However, mainstream graphics cards cannot directly process HR images because of their limited video memory. Therefore, most existing 3D medical image segmentation methods use patch-based models, which ignore global context information that is useful for accurate segmentation and have low inference efficiency. To address these problems, we propose a super-resolution (SR) guided patch-free 3D medical image segmentation framework that achieves HR segmentation using the global information of a low-resolution (LR) input. The framework contains two tasks: semantic segmentation (the main task) and super-resolution (an auxiliary task). To compensate for the information lost in the LR input, we introduce a Self-Supervised Guidance Module (SGM), which employs a selective search method to crop an HR patch from the original image as restoration guidance. Multi-scale convolutional layers are used to mitigate the scale inconsistency between the HR guidance features and the LR features. Moreover, we propose a Task-Fusion Module (TFM) to exploit the interconnections between the segmentation and SR tasks. This module can also be used for Test Phase Fine-tuning (TPF), improving the model's generalization ability. At prediction time, only the main segmentation task is needed, and the other modules can be removed to accelerate inference. Experimental results on two different datasets show that our framework outperforms current patch-based and patch-free models. Our model also runs inference four times faster than traditional patch-based methods. Our code is available at: https://github.com/Dootmaan/PFSeg-Full.
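
The abstract names a selective search method for choosing the HR guidance patch but does not describe it. As a hypothetical stand-in, the sketch below exhaustively scores every crop position in a small 3D saliency volume (for example, a coarse foreground probability map, which is an assumption) and returns the corner of the highest-scoring crop, which would then be cut from the original HR image. `select_guidance_crop` is an illustrative name.

```python
from typing import List, Tuple

Volume = List[List[List[float]]]  # indexed [z][y][x]

def select_guidance_crop(
    saliency: Volume, size: Tuple[int, int, int]
) -> Tuple[int, int, int]:
    """Return the (z, y, x) corner of the crop with the largest summed saliency.

    Hypothetical stand-in for the SGM's selective search: every crop
    position is scored; ties keep the first position visited.
    """
    depth, height, width = len(saliency), len(saliency[0]), len(saliency[0][0])
    d, h, w = size
    if d > depth or h > height or w > width:
        raise ValueError("crop is larger than the volume")
    best_total, best_corner = float("-inf"), (0, 0, 0)
    for z in range(depth - d + 1):
        for y in range(height - h + 1):
            for x in range(width - w + 1):
                total = sum(
                    saliency[z + k][y + j][x + i]
                    for k in range(d) for j in range(h) for i in range(w)
                )
                if total > best_total:
                    best_total, best_corner = total, (z, y, x)
    return best_corner
```

The exhaustive search is kept for clarity; a 3D summed-area table would score each crop in constant time on realistic volume sizes.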

ScaleFormer: Revisiting the Transformer-based Backbones from a Scale-wise Perspective for Medical Image Segmentation

Jul 29, 2022 · Huimin Huang, Shiao Xie, Lanfen Lin, Yutaro Iwamoto, Xianhua Han, Yen-Wei Chen, Ruofeng Tong

Recently, a variety of vision transformers have been developed owing to their capability of modeling long-range dependencies. In current transformer-based backbones for medical image segmentation, convolutional layers are either replaced with pure transformers, or transformers are added to the deepest encoder to learn global context. However, from a scale-wise perspective there are two main challenges: (1) the intra-scale problem: existing methods fail to extract local-global cues within each scale, which may impair the signal propagation of small objects; (2) the inter-scale problem: existing methods fail to explore distinctive information across multiple scales, which may hinder representation learning for objects with widely varying size, shape, and location. To address these limitations, we propose a novel backbone, namely ScaleFormer, with two appealing designs: (1) an intra-scale transformer that couples the CNN-based local features with the transformer-based global cues in each scale, where the row-wise and column-wise global dependencies are extracted by a lightweight Dual-Axis MSA; (2) a simple and effective spatial-aware inter-scale transformer that interacts among consensual regions across multiple scales, which highlights cross-scale dependencies and resolves complex scale variations. Experimental results on different benchmarks demonstrate that our ScaleFormer outperforms current state-of-the-art methods. The code is publicly available at: https://github.com/ZJUGiveLab/ScaleFormer.
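
The abstract describes the Dual-Axis MSA only as extracting row-wise and column-wise global dependencies. The sketch below assumes it behaves like axial attention: single-head self-attention applied along each row and then along each column, with identity query/key/value projections and no learned weights (simplifications for readability, not the ScaleFormer code).

```python
import math
from typing import List

Vector = List[float]
FeatureMap = List[List[Vector]]  # indexed [row][col][channel]

def attend(tokens: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product self-attention with identity Q/K/V."""
    scale = 1.0 / math.sqrt(len(tokens[0]))
    out = []
    for q in tokens:
        logits = [scale * sum(a * b for a, b in zip(q, k)) for k in tokens]
        top = max(logits)
        weights = [math.exp(z - top) for z in logits]  # stable softmax
        total = sum(weights)
        out.append([
            sum(wt * v[ch] for wt, v in zip(weights, tokens)) / total
            for ch in range(len(q))
        ])
    return out

def dual_axis_attention(fmap: FeatureMap) -> FeatureMap:
    """Row-wise attention, then column-wise attention, over an H x W map."""
    rows = [attend(row) for row in fmap]  # each row attends over its W tokens
    height, width = len(rows), len(rows[0])
    cols = [attend([rows[r][c] for r in range(height)]) for c in range(width)]
    return [[cols[c][r] for c in range(width)] for r in range(height)]
```

For an H × W feature map, the row pass costs about H·W² token interactions and the column pass W·H², versus (H·W)² for full self-attention, which is what makes axis-wise attention lightweight while still letting every position reach every other in two steps.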

Efficient and Accurate Hyperspectral Pansharpening Using 3D VolumeNet and 2.5D Texture Transfer

Mar 08, 2022 · Yinao Li, Yutaro Iwamoto, Ryousuke Nakamura, Lanfen Lin, Ruofeng Tong, Yen-Wei Chen

Recently, convolutional neural networks (CNNs) have obtained promising results in single-image super-resolution (SR) for hyperspectral pansharpening. However, enhancing the representation ability of CNNs with fewer parameters and a shorter prediction time is a challenging and critical task. In this paper, we propose a novel multi-spectral image fusion method that combines the previously proposed 3D CNN model VolumeNet with a 2.5D texture transfer method that uses high-resolution (HR) images of another modality. Since a multi-spectral (MS) image consists of several bands and each band is a 2D image slice, MS images can be treated as 3D data. Thus, we use VolumeNet to fuse HR panchromatic (PAN) images and bicubic-interpolated MS images. Because 3D VolumeNet effectively improves accuracy by expanding the receptive field of the model, and because of its lightweight structure, we achieve better performance than the existing method without purchasing a large number of remote sensing images for training. In addition, VolumeNet restores as much as possible of the high-frequency information lost in the HR MS image, reducing the difficulty of feature extraction in the following step: 2.5D texture transfer. As one of the latest technologies, deep learning-based texture transfer has been shown to effectively and efficiently improve the visual quality and the quality evaluation indicators of image reconstruction. Unlike texture transfer for RGB images, we use HR PAN images as the reference images and perform texture transfer for each spectral band of the MS images, which we call 2.5D texture transfer. The experimental results show that the proposed method outperforms existing methods in terms of objective accuracy assessment, efficiency, and subjective visual evaluation.
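
The per-band structure of 2.5D texture transfer can be sketched as follows. The learned texture transfer network is replaced here by classic high-pass detail injection, a non-learned technique used plainly as a substitute: the PAN image's high-frequency detail (PAN minus its 3 × 3 box blur) is added to each upsampled MS band. Nearest-neighbor upsampling stands in for bicubic interpolation, VolumeNet is omitted entirely, and all function names are hypothetical.

```python
from typing import List

Image = List[List[float]]  # indexed [row][col]

def upsample_nearest(img: Image, factor: int) -> Image:
    """Nearest-neighbor upsampling (a stand-in for bicubic interpolation)."""
    return [[img[y // factor][x // factor] for x in range(len(img[0]) * factor)]
            for y in range(len(img) * factor)]

def box_blur(img: Image) -> Image:
    """3 x 3 mean filter with clamped borders (the low-pass step)."""
    height, width = len(img), len(img[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            window = [img[min(max(y + dy, 0), height - 1)][min(max(x + dx, 0), width - 1)]
                      for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
            row.append(sum(window) / 9.0)
        out.append(row)
    return out

def pansharpen_2_5d(ms_bands: List[Image], pan: Image, factor: int) -> List[Image]:
    """Inject the PAN image's high-pass detail into every MS band, band by band."""
    low = box_blur(pan)
    detail = [[p - q for p, q in zip(pr, lr)] for pr, lr in zip(pan, low)]
    sharpened = []
    for band in ms_bands:  # "2.5D": one 2D operation per spectral band
        up = upsample_nearest(band, factor)
        if len(up) != len(pan) or len(up[0]) != len(pan[0]):
            raise ValueError("PAN must match the upsampled MS band size")
        sharpened.append([[u + d for u, d in zip(ur, dr)] for ur, dr in zip(up, detail)])
    return sharpened
```

The point of the structure is that the 3D MS cube is never processed as a volume at this step: the same 2D reference-guided operation is applied to each spectral band independently, with the single-band HR PAN image as the shared reference.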

Attention-based Cross-Layer Domain Alignment for Unsupervised Domain Adaptation

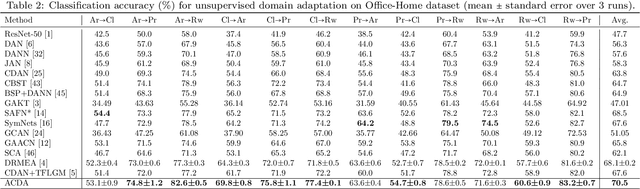

Feb 27, 2022 · Xu Ma, Junkun Yuan, Yen-Wei Chen, Ruofeng Tong, Lanfen Lin

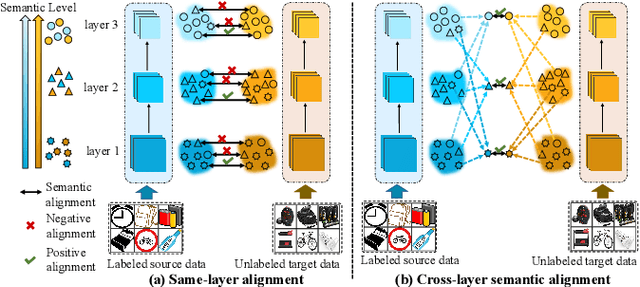

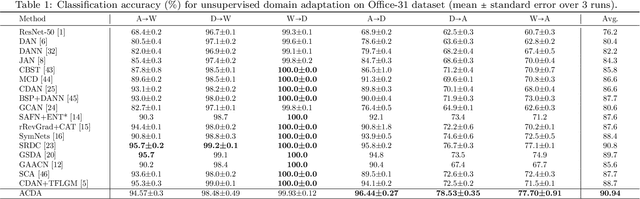

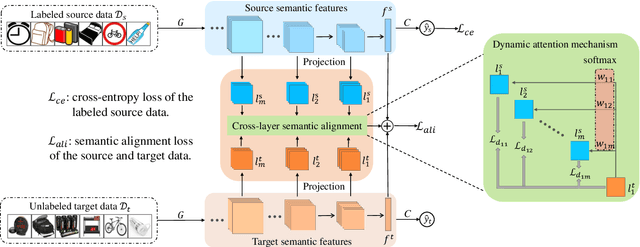

Unsupervised domain adaptation (UDA) aims to learn transferable knowledge from a labeled source domain and adapt a trained model to an unlabeled target domain. To bridge the gap between the source and target domains, one prevailing strategy is to minimize the distribution discrepancy by aligning their semantic features extracted by deep models. Existing alignment-based methods mainly focus on reducing domain divergence within the same model layer. However, due to domain shift, the same level of semantic information may be distributed across different model layers. To further boost adaptation performance, we propose a novel method called Attention-based Cross-layer Domain Alignment (ACDA), which captures the semantic relationship between the source and target domains across model layers and automatically calibrates each level of semantic information through a dynamic attention mechanism. An elaborate attention mechanism reweights each cross-layer pair based on its semantic similarity for precise domain alignment, effectively matching each level of semantic information during model adaptation. Extensive experiments on multiple benchmark datasets consistently show that ACDA achieves state-of-the-art performance.
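
A minimal sketch of attention-weighted cross-layer alignment, under several assumptions that the abstract does not confirm: every layer's features are already projected to one common dimension, each source layer attends over all target layers with a softmax of cosine similarities between layer-mean features (scaled by a hypothetical `temperature`), and each pair's discrepancy is the squared distance between the layer means (a linear-kernel MMD estimate). The function names are illustrative, not ACDA's code.

```python
import math
from typing import List

Vector = List[float]

def mean_vector(samples: List[Vector]) -> Vector:
    return [sum(col) / len(samples) for col in zip(*samples)]

def cosine(u: Vector, v: Vector) -> float:
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    if nu == 0.0 or nv == 0.0:
        return 0.0
    return sum(a * b for a, b in zip(u, v)) / (nu * nv)

def cross_layer_alignment_loss(source_layers: List[List[Vector]],
                               target_layers: List[List[Vector]],
                               temperature: float = 0.1) -> float:
    """Each argument: one batch of feature vectors per layer (common dimension)."""
    src = [mean_vector(s) for s in source_layers]
    tgt = [mean_vector(t) for t in target_layers]
    total = 0.0
    for s in src:
        # Attention over target layers, driven by semantic (cosine) similarity.
        logits = [cosine(s, t) / temperature for t in tgt]
        top = max(logits)
        exps = [math.exp(z - top) for z in logits]
        norm = sum(exps)
        # Discrepancy of each cross-layer pair: squared distance between means.
        gaps = [sum((a - b) ** 2 for a, b in zip(s, t)) for t in tgt]
        total += sum(e / norm * g for e, g in zip(exps, gaps))
    return total / len(src)
```

If a target domain expresses some semantics one layer later than the source, a same-layer-only loss would keep comparing mismatched layers; here the attention weights shift almost all of the weight onto the better-matching pair, so the loss mostly measures the gap that alignment can actually close.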

N-Cloth: Predicting 3D Cloth Deformation with Mesh-Based Networks

Dec 13, 2021 · Yudi Li, Min Tang, Yun Yang, Zi Huang, Ruofeng Tong, Shuangcai Yang, Yao Li, Dinesh Manocha

We present a novel mesh-based learning approach (N-Cloth) for plausible 3D cloth deformation prediction. Our approach is general and can handle cloth or obstacles represented by triangle meshes with arbitrary topology. We use graph convolution to transform the cloth and object meshes into a latent space to reduce the non-linearity in the mesh space. Our network predicts the target 3D cloth mesh deformation from the state of the initial cloth mesh template and the target obstacle mesh. Our approach can handle complex cloth meshes with up to 100K triangles and scenes with various objects corresponding to SMPL humans, non-SMPL humans, or rigid bodies. In practice, our approach demonstrates good temporal coherence between successive input frames and can generate plausible cloth simulation at 30-45 fps on an NVIDIA GeForce RTX 3090 GPU. We highlight its benefits over prior learning-based methods and physically-based cloth simulators.
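
The abstract does not specify N-Cloth's graph convolution, so the sketch below shows a generic mean-aggregation layer on a triangle mesh as an assumption: per-vertex features are averaged with their one-ring neighbors (read off the triangle list), multiplied by a weight matrix, and passed through a ReLU. Stacking such layers is one common way to map a mesh into a latent space; `mesh_adjacency` and `graph_conv` are hypothetical names.

```python
from typing import List, Sequence, Set, Tuple

def mesh_adjacency(num_vertices: int,
                   triangles: Sequence[Tuple[int, int, int]]) -> List[Set[int]]:
    """One-ring neighbors of each vertex, taken from the triangle edges."""
    adj: List[Set[int]] = [set() for _ in range(num_vertices)]
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            adj[u].add(v)
            adj[v].add(u)
    return adj

def graph_conv(features: List[List[float]], adj: List[Set[int]],
               weight: List[List[float]]) -> List[List[float]]:
    """Mean over self + neighbors, then a linear map (out_dim x in_dim) and ReLU."""
    out = []
    for i, nbrs in enumerate(adj):
        group = [features[i]] + [features[j] for j in nbrs]
        avg = [sum(col) / len(group) for col in zip(*group)]
        out.append([max(0.0, sum(w * a for w, a in zip(row, avg))) for row in weight])
    return out
```

Because the layer only uses the triangle list to find neighbors, the same weights apply to meshes of any topology or vertex count, which matches the abstract's claim of handling arbitrary triangle meshes.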
