Singular Sturm-Liouville Problems with Zero Potential (q=0) and Singular Slow Feature Analysis

Nov 09, 2020 · Stefan Richthofer, Laurenz Wiskott

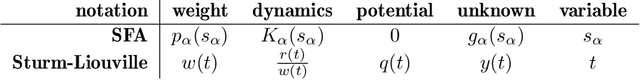

A Sturm-Liouville problem ($\lambda wy=(ry')'+qy$) is singular if its domain is unbounded or if $r$ or $w$ vanishes at the boundary. In that case it is difficult to tell whether profound results from regular Sturm-Liouville theory still apply. Existing criteria are often difficult to apply, e.g. because they are formulated in terms of the solution function. We study the special case of zero potential ($q = 0$) under Neumann boundary conditions and give simple and explicit criteria, solely in terms of the coefficient functions, to assess whether various properties of the regular case apply. Specifically, these properties are discreteness of the spectrum (BD), self-adjointness, oscillation (the $i$th solution has $i$ zeros) and the property that the $i$th eigenvalue equals the SFA delta value (the total energy) of the $i$th solution. We further prove that the stationary points of each solution strictly interlace with its zeros (in the singular or the regular case, regardless of the boundary condition, for zero potential or if $q < \lambda w$ everywhere). If $\frac{r}{w}$ is bounded and of bounded variation, the criterion simplifies to requiring $\frac{|w'|}{w} \to \infty$ at singular boundary points. This research is motivated by Slow Feature Analysis (SFA), a data processing algorithm that extracts the slowest uncorrelated signals from a high-dimensional input signal and has had notable success in computer vision, computational neuroscience and blind source separation. From [Sprekeler et al., 2014] it is known that for an important class of scenarios (statistically independent input), an analytic formulation of SFA reduces to a Sturm-Liouville problem with zero potential and Neumann boundary conditions. So far, the mathematical SFA theory has only considered the regular case, except for a special case that is solved by Hermite polynomials. This work generalizes SFA theory to the singular case, i.e. open-space scenarios.
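The regular-case properties named in this abstract can be checked numerically on a toy example. The following is a minimal sketch, not the authors' method: it assumes the sign convention $-(ry')' = \lambda wy$ (so that eigenvalues are non-negative, which may differ from the convention in the paper), uses a simple finite-volume discretization, and covers only a regular problem with $r = w = 1$ on $[0, \pi]$. The names `neumann_sl_eigen` and `count_sign_changes` are hypothetical helpers introduced for illustration.

```python
import numpy as np

def neumann_sl_eigen(r, w, a, b, n=400, k=4):
    """Discretize -(r y')' = lam * w * y on [a, b] with zero flux at both ends."""
    h = (b - a) / n
    x = a + (np.arange(n) + 0.5) * h        # cell centres
    r_face = r(a + np.arange(1, n) * h)     # r at the interior cell faces
    w_cell = w(x)

    # Symmetric stiffness matrix. Leaving out the two boundary fluxes
    # enforces the Neumann condition r * y' = 0 at a and b.
    K = np.zeros((n, n))
    i = np.arange(n - 1)
    K[i, i] += r_face / h**2
    K[i + 1, i + 1] += r_face / h**2
    K[i, i + 1] -= r_face / h**2
    K[i + 1, i] -= r_face / h**2

    # Reduce K y = lam W y to a standard symmetric problem via W^(-1/2).
    s = 1.0 / np.sqrt(w_cell)
    lam, v = np.linalg.eigh(K * s[:, None] * s[None, :])
    y = v * s[:, None]                      # map back to the original variable
    return x, lam[:k], y[:, :k], K, w_cell

def count_sign_changes(y):
    """Number of sign changes of a sampled function (a proxy for its zeros)."""
    sgn = np.sign(y)
    return int(np.sum(sgn[:-1] != sgn[1:]))

ones = lambda t: np.ones_like(t)
x, lam, y, K, w_cell = neumann_sl_eigen(ones, ones, 0.0, np.pi)

# Oscillation: the i-th solution should have i zeros.
zeros = [count_sign_changes(y[:, i]) for i in range(4)]

# Discrete Rayleigh quotient (sum r y'^2 over sum w y^2), used here as a
# stand-in for the delta value; it should match the i-th eigenvalue.
rayleigh = [(y[:, i] @ K @ y[:, i]) / (y[:, i] @ (w_cell * y[:, i]))
            for i in range(4)]
```

For this regular example the analytic solutions are $\cos(kx)$ with eigenvalues $k^2$, so `lam` should be close to 0, 1, 4, 9 and `zeros` should count 0, 1, 2, 3 sign changes. Whether the same holds for singular coefficient functions is exactly what the criteria in the abstract address; this sketch does not test them.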

Global Navigation Using Predictable and Slow Feature Analysis in Multiroom Environments, Path Planning and Other Control Tasks

May 22, 2018 · Stefan Richthofer, Laurenz Wiskott

Extended Predictable Feature Analysis (PFAx) [Richthofer and Wiskott, 2017] is an extension of PFA [Richthofer and Wiskott, 2015] that allows generating a goal-directed control signal for an agent whose dynamics have previously been learned during a training phase in an unsupervised manner. PFAx requires hardly any assumptions or prior knowledge about the agent's sensor or control mechanics, or about the environment. It selects features from a high-dimensional input by intrinsic predictability and organizes them into a reasonably low-dimensional model. While PFA obtains a well-predictable model, PFAx yields a model ideally suited for manipulations with predictable outcome. This allows for goal-directed manipulation of an agent and thus for local navigation, i.e. for reaching states where intermediate actions can be chosen by continually decreasing the distance to the goal. The approach is limited when it comes to global navigation, e.g. involving obstacles or multiple rooms. In this article, we extend theoretical results from [Sprekeler and Wiskott, 2008], enabling PFAx to perform stable global navigation. So far, the most widely exploited characteristic of Slow Feature Analysis (SFA) has been that slowness yields invariances. We focus on another fundamental characteristic of slow signals: they tend to yield monotonicity, and one significant property of monotonicity is that local optimization is sufficient to find a global optimum. We present an SFA-based algorithm that structures an environment such that navigation tasks hierarchically decompose into subgoals. Each of these can be efficiently achieved by PFAx, yielding an overall global solution of the task. The algorithm needs to explore and process an environment only once and can then perform all sorts of navigation tasks efficiently. We support this algorithm by mathematical theory and apply it to different problems.
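The remark that monotonicity makes local optimization sufficient can be illustrated with a toy example. This is a sketch under stated assumptions, not the algorithm from the article: the environment is a 1-D corridor of 20 cells, the two features are the first two non-constant cosine modes of the Neumann problem on an interval (standing in for slow features), and `greedy_descent` is a hypothetical helper that only ever moves to the neighbouring cell whose feature value is closest to that of the goal.

```python
import numpy as np

def greedy_descent(feature, start, goal, max_steps=1000):
    """Walk to the neighbour that most reduces |feature - feature[goal]|."""
    s = start
    for _ in range(max_steps):
        neighbours = [t for t in (s - 1, s + 1) if 0 <= t < len(feature)]
        best = min(neighbours, key=lambda t: abs(feature[t] - feature[goal]))
        # Stop as soon as no neighbour is strictly better (a local optimum).
        if abs(feature[best] - feature[goal]) >= abs(feature[s] - feature[goal]):
            break
        s = best
    return s

n = 20
cells = np.arange(n)
slow = np.cos(np.pi * (cells + 0.5) / n)        # monotonic along the corridor
second = np.cos(2 * np.pi * (cells + 0.5) / n)  # symmetric, not monotonic

reached_monotone = greedy_descent(slow, start=19, goal=2)
reached_symmetric = greedy_descent(second, start=19, goal=2)
```

With the monotonic feature, the local search ends at the goal cell. With the symmetric feature, cell 17 has the same value as cell 2, so the search stops there without reaching the goal. Obstacles and multiple rooms, which the article addresses, are outside the scope of this sketch.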

PFAx: Predictable Feature Analysis to Perform Control

Dec 02, 2017 · Stefan Richthofer, Laurenz Wiskott

Predictable Feature Analysis (PFA) (Richthofer, Wiskott, ICMLA 2015) is an algorithm that performs dimensionality reduction on a high-dimensional input signal. It extracts those subsignals that are most predictable according to a certain prediction model. We refer to these extracted signals as predictable features. In this work we extend the notion of PFA to take supplementary information into account for improving its predictions. Such information can be a multidimensional signal like the main input to PFA, but is regarded as external. That is, it does not participate in the feature extraction: no features are extracted from it or composed of it. Features are extracted exclusively from the main input such that they are most predictable based on themselves and the supplementary information. We refer to this enhanced PFA as PFAx (PFA extended). Even more important than improving prediction quality is observing the effect of supplementary information on feature selection. PFAx transparently provides insight into how the supplementary information adds to prediction quality and whether it is valuable at all. Finally, we show how to invert that relation and generate the supplementary information such that it yields a certain desired outcome of the main signal. We apply this to a setting inspired by reinforcement learning and let the algorithm learn how to control an agent in an environment. With this method it is feasible to locally optimize the agent's state, i.e. to reach a certain goal that is sufficiently close. We are preparing a follow-up paper that extends this method so that global optimization also becomes feasible.
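The idea of predicting features from themselves plus supplementary information, and then inverting that relation to obtain a control signal, can be sketched as follows. The abstract does not specify the prediction model, so this sketch assumes a linear one-step model $z(t+1) = A z(t) + B u(t)$, skips the feature extraction entirely (the features $z$ are taken as given), and uses synthetic data. `A_true`, `B_true` and `control_towards` are hypothetical names introduced for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical ground-truth dynamics used only to generate training data.
A_true = np.array([[0.9, 0.1], [-0.1, 0.9]])
B_true = np.array([[1.0, 0.0], [0.5, 1.0]])

T = 500
u = rng.normal(size=(T, 2))                 # supplementary (control) signal
z = np.zeros((T + 1, 2))                    # features, assumed already extracted
for t in range(T):
    z[t + 1] = A_true @ z[t] + B_true @ u[t] + 1e-3 * rng.normal(size=2)

# Fit z(t+1) ~ A z(t) + B u(t) by least squares on the stacked regressors.
X = np.hstack([z[:-1], u])                  # shape (T, 4)
coef, *_ = np.linalg.lstsq(X, z[1:], rcond=None)
A_fit, B_fit = coef[:2].T, coef[2:].T

def control_towards(z_now, z_goal):
    """Invert the fitted model: choose u so the predicted next state is z_goal."""
    return np.linalg.pinv(B_fit) @ (z_goal - A_fit @ z_now)

z_now = z[-1]
z_goal = np.array([1.0, -1.0])
u_star = control_towards(z_now, z_goal)
z_next = A_true @ z_now + B_true @ u_star   # what the true system would do
```

Because `B_true` is square and invertible here, a single step suffices to reach the goal. In general the control is bounded and the model only locally valid, which matches the abstract's restriction to goals that are sufficiently close.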

Predictable Feature Analysis

Nov 11, 2013 · Stefan Richthofer, Laurenz Wiskott

Every organism in an environment, whether biological, robotic or virtual, must be able to predict certain aspects of its environment in order to survive or perform whatever task is intended. It needs a model that is capable of estimating the consequences of possible actions, so that planning, control, and decision-making become feasible. For scientific purposes, such models are usually created in a problem-specific manner using differential equations and other techniques from control and systems theory. In contrast to that, we aim for an unsupervised approach that builds up the desired model in a self-organized fashion. Inspired by Slow Feature Analysis (SFA), our approach is to extract sub-signals from the input that behave as predictably as possible. These "predictable features" are highly relevant for modeling, because predictability is by definition a desired property of the needed consequence-estimating model. In our approach, we measure predictability with respect to a certain prediction model. We focus here on the solution of the arising optimization problem and present a tractable algorithm based on algebraic methods, which we call Predictable Feature Analysis (PFA). We prove that the algorithm finds the globally optimal signal if this signal can be predicted with low error. To deal with cases where the optimal signal has a significant prediction error, we provide a robust, heuristically motivated variant of the algorithm and verify it empirically. Additionally, we give formal criteria a prediction model must meet to be suitable for measuring predictability in the PFA setting and also provide a suitable default model along with a formal proof that it meets these criteria.
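Measuring predictability with respect to a prediction model can be made concrete with a small example. The sketch below assumes a linear autoregressive model of order 2 as the prediction model, which is a choice made for illustration; the abstract does not state the paper's default model. It only scores given 1-D signals and does not implement the PFA optimization over projections of a high-dimensional input. `ar_prediction_error` is a hypothetical helper.

```python
import numpy as np

def ar_prediction_error(signal, order=2):
    """Mean squared one-step error of the best linear AR(order) predictor."""
    s = (signal - signal.mean()) / signal.std()   # zero mean, unit variance
    # Row for time t holds the past values [s(t-1), ..., s(t-order)].
    past = np.column_stack(
        [s[order - j - 1 : len(s) - j - 1] for j in range(order)]
    )
    target = s[order:]
    coeffs, *_ = np.linalg.lstsq(past, target, rcond=None)
    return float(np.mean((target - past @ coeffs) ** 2))

rng = np.random.default_rng(0)
t = np.arange(1000)
sine = np.sin(2 * np.pi * t / 50)     # 20 full periods, perfectly predictable
noise = rng.normal(size=t.size)       # white noise, essentially unpredictable

err_sine = ar_prediction_error(sine)
err_noise = ar_prediction_error(noise)
```

A sampled sinusoid satisfies an exact second-order linear recurrence, so its error is numerically zero, whereas the error for normalized white noise stays close to 1. In this sense the sinusoid would be preferred as a "predictable feature" under the assumed model.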
