Large Deviation Analysis of Score-based Hypothesis Testing

Feb 03, 2024 · Enmao Diao, Taposh Banerjee, Vahid Tarokh

Score-based statistical models play an important role in modern machine learning, statistics, and signal processing. For hypothesis testing, a score-based hypothesis test is proposed in \cite{wu2022score}. We analyze the performance of this score-based hypothesis testing procedure and derive upper bounds on the probabilities of its Type I and II errors. We prove that the exponents of our error bounds are asymptotically (in the number of samples) tight for the case of simple null and alternative hypotheses. We calculate these error exponents explicitly in specific cases and provide numerical studies for various other scenarios of interest.

Robust Quickest Change Detection in Non-Stationary Processes

Oct 14, 2023 · Yingze Hou, Yousef Oleyaeimotlagh, Rahul Mishra, Hoda Bidkhori, Taposh Banerjee

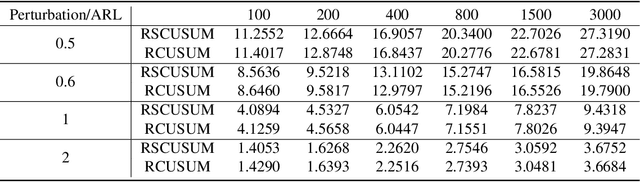

Optimal algorithms are developed for robust detection of changes in non-stationary processes. These are processes in which the distribution of the data after change varies with time. The decision-maker does not have access to precise information on the post-change distribution. It is shown that if the post-change non-stationary family has a distribution that is least favorable in a well-defined sense, then the algorithms designed using the least favorable distributions are robust and optimal. Non-stationary processes are encountered in public health monitoring and space and military applications. The robust algorithms are applied to real and simulated data to show their effectiveness.

Robust Quickest Change Detection for Unnormalized Models

Jun 08, 2023 · Suya Wu, Enmao Diao, Taposh Banerjee, Jie Ding, Vahid Tarokh

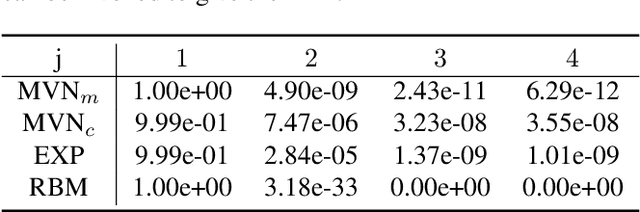

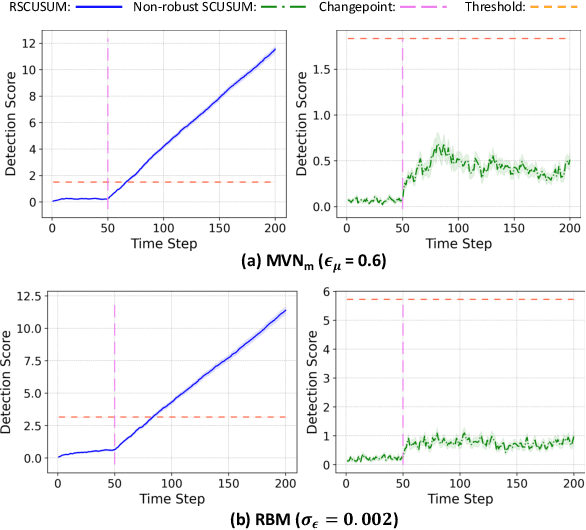

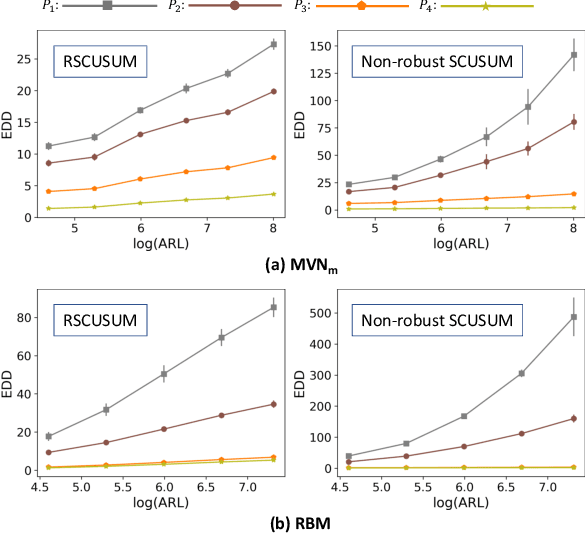

Detecting an abrupt and persistent change in the underlying distribution of online data streams is an important problem in many applications. This paper proposes a new robust score-based algorithm called RSCUSUM, which can be applied to unnormalized models and addresses the issue of unknown post-change distributions. RSCUSUM replaces the Kullback-Leibler divergence with the Fisher divergence between pre- and post-change distributions for computational efficiency in unnormalized statistical models and introduces a notion of the "least favorable" distribution for robust change detection. The algorithm and its theoretical analysis are demonstrated through simulation studies.

Reinforcement Learning with an Abrupt Model Change

Apr 22, 2023 · Wuxia Chen, Taposh Banerjee, Jemin George, Carl Busart

The problem of reinforcement learning is considered where the environment or the model undergoes a change. An algorithm is proposed that an agent can apply in such a problem to achieve the optimal long-time discounted reward. The algorithm is model-free and learns the optimal policy by interacting with the environment. It is shown that the proposed algorithm has strong optimality properties. The effectiveness of the algorithm is also demonstrated using simulation results. The proposed algorithm exploits a fundamental reward-detection trade-off present in these problems and uses a quickest change detection algorithm to detect the model change. Recommendations are provided for faster detection of model changes and for smart initialization strategies.

Quickest Change Detection in Statistically Periodic Processes with Unknown Post-Change Distribution

Mar 06, 2023 · Yousef Oleyaeimotlagh, Taposh Banerjee, Ahmad Taha, Eugene John

Algorithms are developed for the quickest detection of a change in statistically periodic processes. These are processes in which the statistical properties are nonstationary but repeat after a fixed time interval. It is assumed that the pre-change law is known to the decision maker but the post-change law is unknown. In this framework, three families of problems are studied: robust quickest change detection, joint quickest change detection and classification, and multislot quickest change detection. In the multislot problem, the exact slot within a period where a change may occur is unknown. Algorithms are proposed for each problem, and either exact optimality or asymptotic optimality in the low false alarm regime is proved for each of them. The developed algorithms are then used for anomaly detection in traffic data and arrhythmia detection and identification in electrocardiogram (ECG) data. The effectiveness of the algorithms is also demonstrated on simulated data.

Modeling and Quickest Detection of a Rapidly Approaching Object

Mar 04, 2023 · Tim Brucks, Taposh Banerjee, Rahul Mishra

The problem of detecting the presence of a signal that can lead to a disaster is studied. A decision-maker collects data sequentially over time. At some point in time, called the change point, the distribution of data changes. This change in distribution could be due to an event or a sudden arrival of an enemy object. If not detected quickly, this change has the potential to cause a major disaster. In space and military applications, the values of the measurements can stochastically grow with time as the enemy object moves closer to the target. A new class of stochastic processes, called exploding processes, is introduced to model stochastically growing data. An algorithm is proposed and shown to be asymptotically optimal as the mean time to a false alarm goes to infinity.

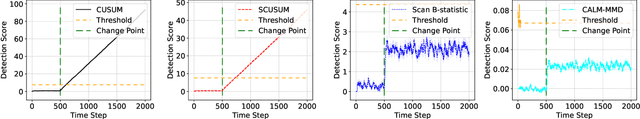

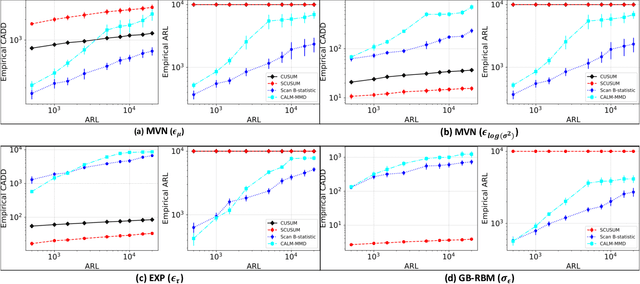

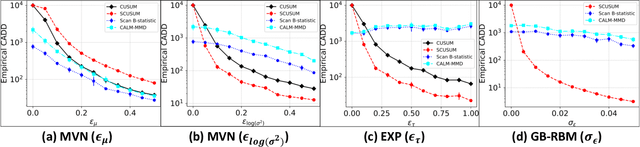

Quickest Change Detection for Unnormalized Statistical Models

Feb 01, 2023 · Suya Wu, Enmao Diao, Taposh Banerjee, Jie Ding, Vahid Tarokh

Classical quickest change detection algorithms require modeling pre-change and post-change distributions. Such an approach may not be feasible for various machine learning models because of the complexity of computing the explicit distributions. Additionally, these methods may suffer from a lack of robustness to model mismatch and noise. This paper develops a new variant of the classical Cumulative Sum (CUSUM) algorithm for quickest change detection. This variant is based on Fisher divergence and the Hyvärinen score and is called the Score-based CUSUM (SCUSUM) algorithm. The SCUSUM algorithm enables change detection for unnormalized statistical models, i.e., models for which the probability density function contains an unknown normalization constant. The asymptotic optimality of the proposed algorithm is investigated by deriving expressions for the average detection delay and the mean running time to a false alarm. Numerical results are provided to demonstrate the performance of the proposed algorithm.
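The abstract above does not spell out the recursion, so the following is only a minimal sketch of one plausible score-based CUSUM, assuming the increment is a scaled difference of Hyvärinen scores between the pre- and post-change models. The function names, the multiplier `lam`, the threshold, and the choice of one-dimensional Gaussian models are illustrative assumptions, not taken from the paper. The Hyvärinen score used here is the standard one, half the squared gradient of the log-density plus its Laplacian, which does not depend on the normalization constant.

```python
from typing import Iterable, Optional, Tuple


def hyvarinen_score_gaussian(x: float, mean: float, var: float) -> float:
    """Hyvarinen score of a 1-D Gaussian N(mean, var) at x.

    Uses 0.5 * (d/dx log p)^2 + d^2/dx^2 log p, which only needs
    derivatives of log p and so ignores the normalization constant.
    """
    grad = -(x - mean) / var      # d/dx log p(x)
    laplacian = -1.0 / var        # d^2/dx^2 log p(x)
    return 0.5 * grad * grad + laplacian


def scusum_alarm_time(
    samples: Iterable[float],
    pre: Tuple[float, float],     # (mean, var) of the assumed pre-change model
    post: Tuple[float, float],    # (mean, var) of the assumed post-change model
    lam: float,                   # hypothetical positive multiplier (tuning parameter)
    threshold: float,             # hypothetical alarm threshold
) -> Optional[int]:
    """Return the 1-based index of the first alarm, or None if no alarm is raised."""
    stat = 0.0
    for n, x in enumerate(samples, start=1):
        # Positive when the post-change model fits x better (lower score).
        increment = lam * (
            hyvarinen_score_gaussian(x, *pre) - hyvarinen_score_gaussian(x, *post)
        )
        # CUSUM-style recursion: accumulate and reflect at zero.
        stat = max(stat + increment, 0.0)
        if stat >= threshold:
            return n
    return None
```

For example, with a stream that sits at 0 for 50 samples and then jumps to 2, calling `scusum_alarm_time(stream, (0.0, 1.0), (2.0, 1.0), lam=1.0, threshold=5.0)` keeps the statistic at zero before the jump and lets it grow afterwards. In practice the threshold would be chosen to meet a false alarm constraint, which this sketch does not attempt.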

Cross-subject Decoding of Eye Movement Goals from Local Field Potentials

Nov 21, 2019 · Marko Angjelichinoski, John Choi, Taposh Banerjee, Bijan Pesaran, Vahid Tarokh

Objective. We consider the cross-subject decoding problem from local field potential (LFP) activity, where training data collected from the pre-frontal cortex of a subject (source) is used to decode intended motor actions in another subject (destination). Approach. We propose a novel pre-processing technique, referred to as data centering, which is used to adapt the feature space of the source to the feature space of the destination. The key ingredients of data centering are the transfer functions used to model the deterministic component of the relationship between the source and destination feature spaces. We also develop an efficient data-driven estimation approach for linear transfer functions that uses the first and second order moments of the class-conditional distributions. Main result. We apply our techniques for cross-subject decoding of eye movement directions in an experiment where two macaque monkeys perform memory-guided visual saccades to one of eight target locations. The results show peak cross-subject decoding performance of $80\%$, which marks a substantial improvement over a random-choice decoder. Significance. The analyses presented herein demonstrate that data centering is a viable novel technique for reliable cross-subject brain-computer interfacing.

Minimax-optimal decoding of movement goals from local field potentials using complex spectral features

Jan 29, 2019 · Marko Angjelichinoski, Taposh Banerjee, John Choi, Bijan Pesaran, Vahid Tarokh

We consider the problem of predicting eye movement goals from local field potentials (LFP) recorded through a multielectrode array in the macaque prefrontal cortex. The monkey is tasked with performing memory-guided saccades to one of eight targets during which LFP activity is recorded and used to train a decoder. Previous reports have mainly relied on the spectral amplitude of the LFPs as a feature in the decoding step with limited success, while neglecting the phase without proper theoretical justification. This paper formulates the problem of decoding eye movement intentions in a statistically optimal framework and uses Gaussian sequence modeling and Pinsker's theorem to generate minimax-optimal estimates of the LFP signals, which are later used as features in the decoding step. The approach is shown to act as a low-pass filter: each LFP in the feature space is represented via its complex Fourier coefficients after appropriate shrinking such that higher frequency components are attenuated. In this way, the phase information inherently present in the LFP signal is naturally embedded into the feature space. The proposed complex spectrum-based decoder achieves prediction accuracy of up to $94\%$ at superficial electrode depths near the surface of the prefrontal cortex, which marks a significant performance improvement over conventional power spectrum-based decoders.

Cyclostationary Statistical Models and Algorithms for Anomaly Detection Using Multi-Modal Data

Jul 02, 2018 · Taposh Banerjee, Gene Whipps, Prudhvi Gurram, Vahid Tarokh

A framework is proposed to detect anomalies in multi-modal data. A deep neural network-based object detector is employed to extract counts of objects and sub-events from the data. A cyclostationary model is proposed to model regular patterns of behavior in the count sequences. The anomaly detection problem is formulated as a problem of detecting deviations from learned cyclostationary behavior. Sequential algorithms are proposed to detect anomalies using the proposed model. The proposed algorithms are shown to be asymptotically efficient in a well-defined sense. The developed algorithms are applied to multi-modal data consisting of CCTV imagery and social media posts to detect a 5K run in New York City.
