Deep MAGSAC++

Nov 28, 2021Wei Tong, Jiri Matas, Daniel Barath

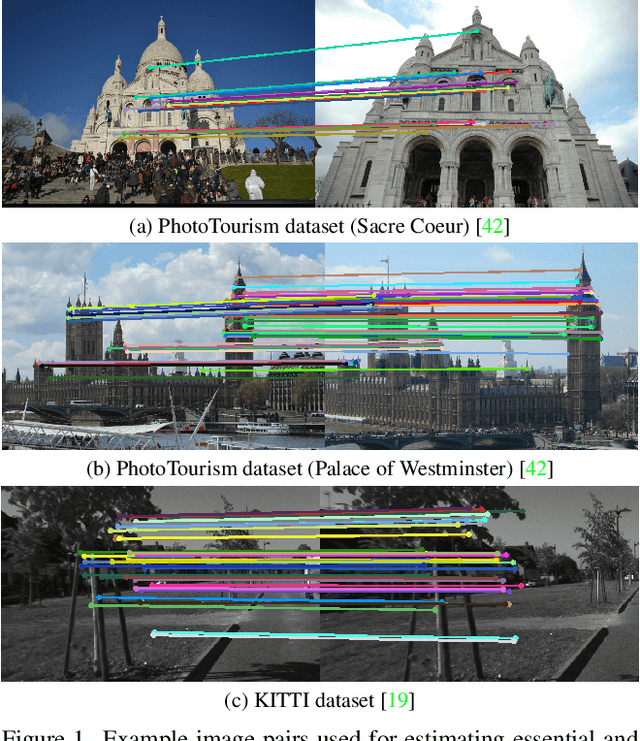

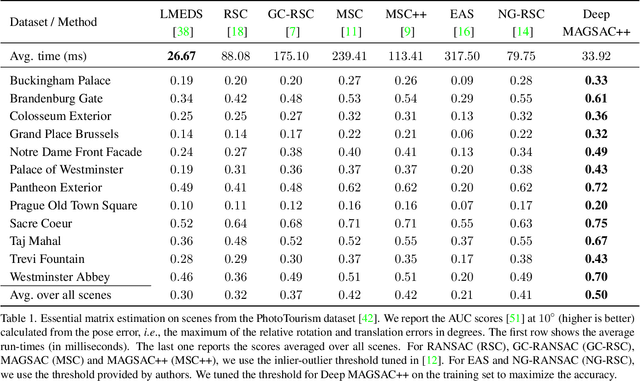

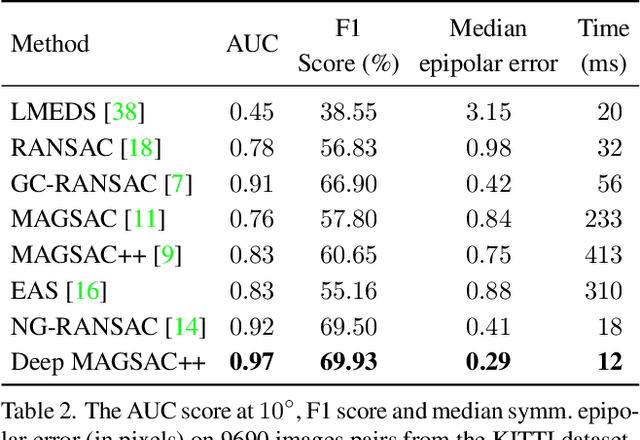

We propose Deep MAGSAC++ combining the advantages of traditional and deep robust estimators. We introduce a novel loss function that exploits the orientation and scale from partially affine covariant features, e.g., SIFT, in a geometrically justifiable manner. The new loss helps in learning higher-order information about the underlying scene geometry. Moreover, we propose a new sampler for RANSAC that always selects the sample with the highest probability of consisting only of inliers. After every unsuccessful iteration, the probabilities are updated in a principled way via a Bayesian approach. The prediction of the deep network is exploited as prior inside the sampler. Benefiting from the new loss, the proposed sampler, and a number of technical advancements, Deep MAGSAC++ is superior to the state-of-the-art both in terms of accuracy and run-time on thousands of image pairs from publicly available datasets for essential and fundamental matrix estimation.

QuesNet: A Unified Representation for Heterogeneous Test Questions

May 27, 2019Yu Yin, Qi Liu, Zhenya Huang, Enhong Chen, Wei Tong, Shijin Wang, Yu Su

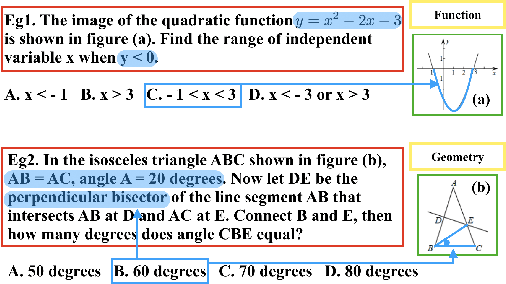

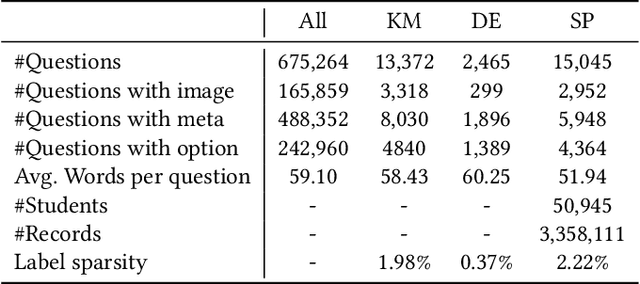

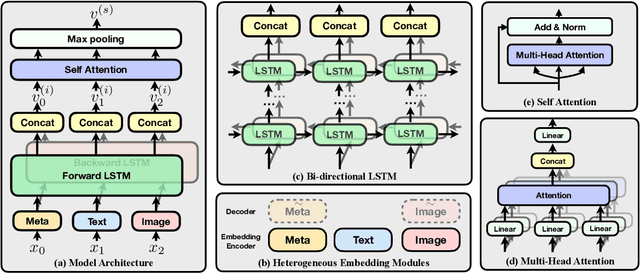

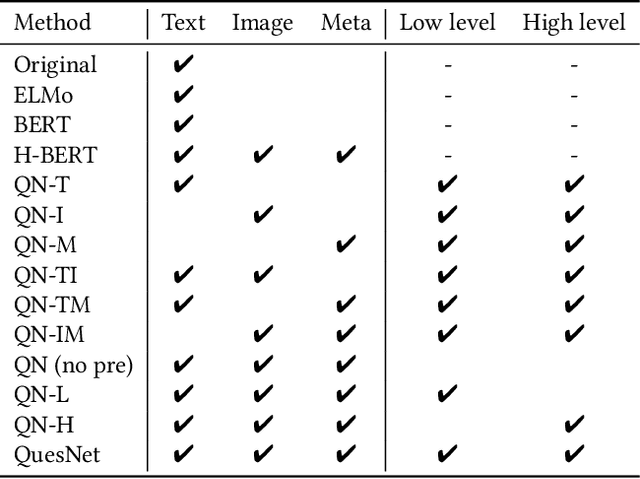

Understanding learning materials (e.g. test questions) is a crucial issue in online learning systems, which can promote many applications in education domain. Unfortunately, many supervised approaches suffer from the problem of scarce human labeled data, whereas abundant unlabeled resources are highly underutilized. To alleviate this problem, an effective solution is to use pre-trained representations for question understanding. However, existing pre-training methods in NLP area are infeasible to learn test question representations due to several domain-specific characteristics in education. First, questions usually comprise of heterogeneous data including content text, images and side information. Second, there exists both basic linguistic information as well as domain logic and knowledge. To this end, in this paper, we propose a novel pre-training method, namely QuesNet, for comprehensively learning question representations. Specifically, we first design a unified framework to aggregate question information with its heterogeneous inputs into a comprehensive vector. Then we propose a two-level hierarchical pre-training algorithm to learn better understanding of test questions in an unsupervised way. Here, a novel holed language model objective is developed to extract low-level linguistic features, and a domain-oriented objective is proposed to learn high-level logic and knowledge. Moreover, we show that QuesNet has good capability of being fine-tuned in many question-based tasks. We conduct extensive experiments on large-scale real-world question data, where the experimental results clearly demonstrate the effectiveness of QuesNet for question understanding as well as its superior applicability.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge