Accelerating Transformer Pre-Training with 2:4 Sparsity

Apr 02, 2024 · Yuezhou Hu, Kang Zhao, Weiyu Huang, Jianfei Chen, Jun Zhu

Training large Transformers is slow, but recent innovations in GPU architecture offer an advantage. NVIDIA Ampere GPUs can execute a fine-grained 2:4 sparse matrix multiplication twice as fast as its dense equivalent. In light of this property, we comprehensively investigate the feasibility of accelerating the feed-forward networks (FFNs) of Transformers in pre-training. First, we define a "flip rate" to monitor the stability of a 2:4 training process. Using this metric, we propose two techniques to preserve accuracy: modifying the sparse-refined straight-through estimator by applying the mask decay term to gradients, and improving the model's quality with a simple yet effective dense fine-tuning procedure near the end of pre-training. In addition, we devise two techniques to accelerate training in practice: computing the transposable 2:4 mask by convolution, and accelerating gated activation functions by reducing GPU L2 cache misses. Experiments show that a combination of our methods achieves the best performance on multiple Transformers among different 2:4 training methods, while actual acceleration is observed on different shapes of Transformer block.
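To make the 2:4 pattern and the idea of a flip rate concrete, here is a minimal NumPy sketch. It rests on assumptions that the abstract does not state: the mask keeps the two largest-magnitude weights in every group of four consecutive entries in a row, and the flip rate is taken to be the fraction of mask entries that change between two training steps. The function names `mask_2_4` and `flip_rate` are hypothetical. The sketch does not build a transposable mask and does not use sparse tensor cores.

```python
import numpy as np

def mask_2_4(w):
    """Boolean mask keeping the 2 largest-magnitude weights in each group of 4 along a row."""
    rows, cols = w.shape
    if cols % 4 != 0:
        raise ValueError("number of columns must be a multiple of 4")
    groups = np.abs(w).reshape(rows, cols // 4, 4)
    # Ascending sort of magnitudes; the last two positions index the largest entries.
    order = np.argsort(groups, axis=-1, kind="stable")
    mask = np.zeros(groups.shape, dtype=bool)
    np.put_along_axis(mask, order[..., 2:], True, axis=-1)
    return mask.reshape(rows, cols)

def flip_rate(mask_prev, mask_next):
    """Assumed definition: fraction of mask entries that differ between two steps."""
    return float(np.mean(mask_prev != mask_next))

# Toy weights before and after a hypothetical update that changes one entry.
w_before = np.array([[1.0, -3.0, 0.5, 2.0, 4.0, 0.1, -0.2, 5.0]])
w_after = w_before.copy()
w_after[0, 2] = 2.5  # this weight now outranks its neighbour 2.0

m_before = mask_2_4(w_before)
m_after = mask_2_4(w_after)
rate = flip_rate(m_before, m_after)  # 2 of 8 mask entries changed, so 0.25
```

Under this reading, a flip rate that stays high late in training would suggest the sparsity pattern is still unstable, which is the kind of signal the abstract describes monitoring.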

Modeling Treatment Delays for Patients using Feature Label Pairs in a Time Series

Dec 03, 2018 · Weiyu Huang, Yunlong Wang, Li Zhou, Emily Zhao, Yilian Yuan, Alejandro Ribero

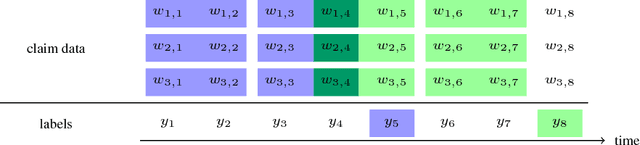

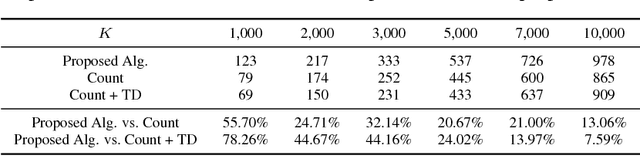

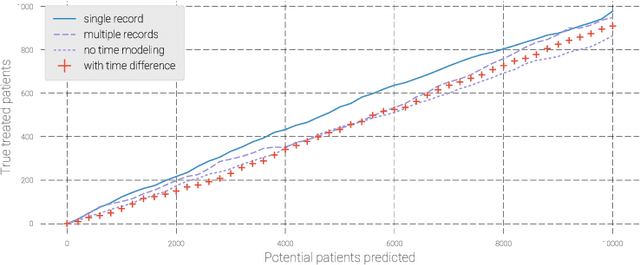

Pharmaceutical targeting is one of the key inputs to sales and marketing strategy planning. A targeting list is built by predicting a physician's sales potential for a certain type of patient. In this paper, we present a time-sensitive targeting framework that leverages a time series model to predict a patient's disease and treatment progression. We create time features by extracting service history within a certain period, and record whether the event happens in a look-forward period. Such feature-label pairs are examined across all time periods and all patients to train a model. The approach keeps the inherent order of services and evaluates features associated with the imminent future, which contributes to improved accuracy.
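The feature-label construction described above can be sketched in plain Python. Everything specific here is an assumption for illustration: the event format `(day, service_code)`, the function name `feature_label_pairs`, the window lengths, and the service codes are hypothetical and are not taken from the paper. Features are the ordered service codes seen in a look-back window, and the label records whether a target event occurs in the look-forward window that follows.

```python
def feature_label_pairs(events, target, lookback, lookahead, t_start, t_end, step):
    """Slide a cut-off time over one patient's history and emit (time, features, label)."""
    ordered = sorted(events)  # chronological order preserves the sequence of services
    pairs = []
    for t in range(t_start, t_end, step):
        # Features: service codes observed in the look-back window [t - lookback, t).
        history = [code for day, code in ordered if t - lookback <= day < t]
        # Label: does the target event occur in the look-forward window [t, t + lookahead)?
        label = any(code == target and t <= day < t + lookahead for day, code in ordered)
        pairs.append((t, history, label))
    return pairs

# Hypothetical history for one patient: (day, service code).
events = [(1, "visit"), (5, "lab"), (12, "visit"), (20, "treatment")]

pairs = feature_label_pairs(
    events, target="treatment", lookback=10, lookahead=10,
    t_start=10, t_end=30, step=10,
)
# pairs == [(10, ["visit", "lab"], False), (20, ["visit"], True)]
```

Pooling such pairs over all cut-off times and all patients gives a training set in which every label refers only to events after its features, which matches the look-forward framing in the abstract.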
