A Realistic Surgical Simulator for Non-Rigid and Contact-Rich Manipulation in Surgeries with the da Vinci Research Kit

Apr 08, 2024 · Yafei Ou, Sadra Zargarzadeh, Paniz Sedighi, Mahdi Tavakoli

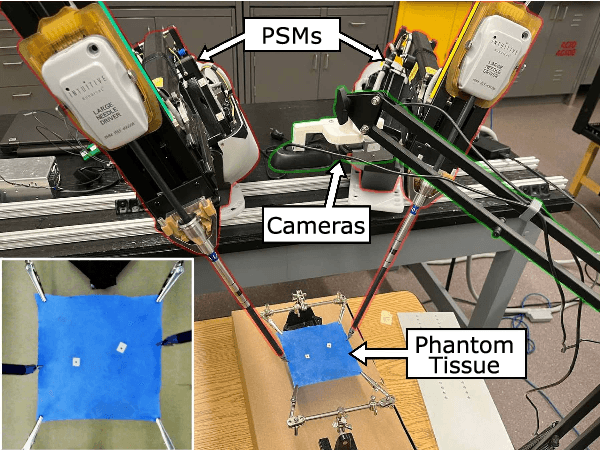

Realistic real-time surgical simulators play an increasingly important role in surgical robotics research, such as surgical robot learning and automation, and surgical skills assessment. Although there are a number of existing surgical simulators for research, they generally lack the ability to simulate the diverse types of objects and contact-rich manipulation tasks typically present in surgeries, such as tissue cutting and blood suction. In this work, we introduce CRESSim, a realistic surgical simulator based on PhysX 5 for the da Vinci Research Kit (dVRK) that enables simulating various contact-rich surgical tasks involving different surgical instruments, soft tissue, and body fluids. The real-world dVRK console and the master tool manipulator (MTM) robots are incorporated into the system to allow for teleoperation through virtual reality (VR). To showcase the advantages and potential of the simulator, we present three examples of surgical tasks: tissue grasping and deformation, blood suction, and tissue cutting. These tasks are performed through VR-based teleoperation using simulated surgical instruments, including the large needle driver, suction irrigator, and curved scissors.

Halo Reduction in Display Systems through Smoothed Local Histogram Equalization and Human Visual System Modeling

Feb 09, 2024 · Prasoon Ambalathankandy, Yafei Ou, Masayuki Ikebe

Halo artifacts significantly impact display quality. We propose a method to reduce halos in Local Histogram Equalization (LHE) algorithms by separately addressing dark and light variants. This approach results in visually natural images by exploring the relationship between lateral inhibition and halo artifacts in the human visual system.

Sparse Anatomical Prompt Semi-Supervised Learning with Masked Image Modeling for CBCT Tooth Segmentation

Feb 07, 2024 · Pengyu Dai, Yafei Ou, Yang Liu, Yue Zhao

Accurate tooth identification and segmentation in Cone Beam Computed Tomography (CBCT) dental images can significantly enhance the efficiency and precision of manual diagnoses performed by dentists. However, existing segmentation methods are mainly trained on large volumes of data, whose annotation is extremely time-consuming. Meanwhile, the teeth of each class in CBCT dental images are closely positioned and show only subtle inter-class differences, which leads to indistinct boundaries when a model is trained with limited data. To address these challenges, this study proposes a task-oriented Masked Auto-Encoder paradigm that effectively utilizes large amounts of unlabeled data to achieve accurate tooth segmentation with limited labeled data. Specifically, we first construct a self-supervised masked auto-encoder pre-training framework that efficiently utilizes unlabeled data to enhance network performance. Subsequently, we introduce a sparse masked prompt mechanism based on graph attention to incorporate boundary information of the teeth, aiding the network in learning the anatomical structural features of teeth. To the best of our knowledge, we are the first to integrate the mask pre-training paradigm into the CBCT tooth segmentation task. Extensive experiments demonstrate both the feasibility of our proposed method and the potential of the boundary prompt mechanism.

A Psychological Study: Importance of Contrast and Luminance in Color to Grayscale Mapping

Feb 07, 2024 · Prasoon Ambalathankandy, Yafei Ou, Sae Kaneko, Masayuki Ikebe

Grayscale images are essential in image processing and computer vision tasks. They effectively emphasize luminance and contrast, highlighting important visual features, while also being easily compatible with other algorithms. Moreover, their simplified representation makes them efficient for storage and transmission. While preserving contrast is important for maintaining visual quality, other factors, such as preserving information relevant to the specific application or task at hand, may be more critical for achieving optimal performance. To evaluate and compare different decolorization algorithms, we designed a psychological experiment. During the experiment, participants were instructed to imagine color images in a hypothetical "colorless world" and select the grayscale image that best resembled their mental visualization. We compared two types of algorithms: (i) perception-based simple color space conversion algorithms, and (ii) spatial contrast-based algorithms, including iteration-based methods. Our experimental findings indicate that CIELAB exhibited superior performance on average, providing further evidence for the effectiveness of perception-based decolorization algorithms. On the other hand, the spatial contrast-based algorithms showed relatively poorer performance, possibly due to factors such as DC-offset and artificial contrast generation. However, these algorithms demonstrated shorter selection times. Notably, no single algorithm consistently outperformed the others across all test images. In this paper, we discuss the significance of contrast and luminance in color-to-grayscale mapping based on our experimental results and analysis.
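As an illustration of what a "simple color space conversion" can look like, the sketch below computes the CIELAB lightness L* of one sRGB pixel and uses it as the gray value. It assumes sRGB input with channels in [0, 1] and a D65 white point normalized to Y = 1; the function names are hypothetical, and the abstract does not state that the study's CIELAB conversion was implemented this way.

```python
def srgb_to_linear(c):
    """Undo the sRGB transfer curve for one channel value in [0, 1]."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def cielab_lightness(r, g, b):
    """Return CIELAB L* (0 to 100) for an sRGB color with channels in [0, 1].

    Assumption: sRGB primaries and a white point with Y = 1.
    """
    # Relative luminance Y from linear-light RGB.
    y = (0.2126 * srgb_to_linear(r)
         + 0.7152 * srgb_to_linear(g)
         + 0.0722 * srgb_to_linear(b))
    # CIELAB nonlinearity: cube root above a small threshold, linear below it.
    delta = 6.0 / 29.0
    if y > delta ** 3:
        f = y ** (1.0 / 3.0)
    else:
        f = y / (3.0 * delta ** 2) + 4.0 / 29.0
    return 116.0 * f - 16.0


def decolorize_lab(image):
    """Map a nested list of (r, g, b) pixels to a nested list of L* values."""
    return [[cielab_lightness(*pixel) for pixel in row] for row in image]
```

Because L* depends only on luminance, this mapping ignores chromatic contrast entirely, which is the main difference from the spatial contrast-based algorithms compared in the experiment.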

Sim-to-Real Surgical Robot Learning and Autonomous Planning for Internal Tissue Points Manipulation using Reinforcement Learning

Jun 25, 2023 · Yafei Ou, Mahdi Tavakoli

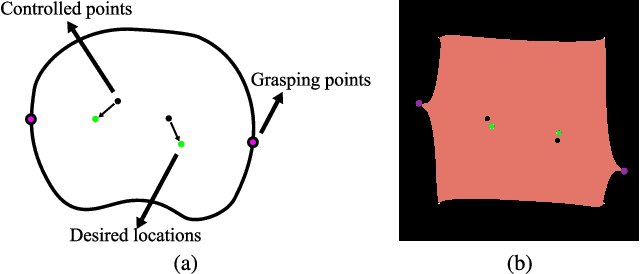

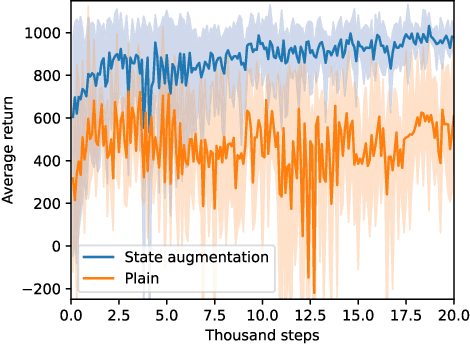

Indirect simultaneous positioning (ISP), where internal tissue points are placed at desired locations indirectly through the manipulation of boundary points, is a type of subtask frequently performed in robotic surgeries. Although challenging due to complex tissue dynamics, automating the task can potentially reduce the workload of surgeons. This paper presents a sim-to-real framework for learning to automate the task without interacting with a real environment, and for planning preoperatively to find the grasping points that minimize local tissue deformation. A control policy is learned using deep reinforcement learning (DRL) in an FEM-based simulation environment and transferred to the real world. Grasping points are planned in the simulator by applying Bayesian optimization (BO) with the trained policy. Inconsistent simulation performance is overcome by formulating the problem as a state-augmented Markov decision process (MDP). Experimental results show that the learned policy places the internal tissue points accurately, and that the planned grasping points yield small tissue deformation among the trials. The proposed learning and planning scheme is able to automate internal tissue point manipulation in surgeries and has the potential to be generalized to complex surgical scenarios.

* 8 pages, 8 figures

A Deep Registration Method for Accurate Quantification of Joint Space Narrowing Progression in Rheumatoid Arthritis

Apr 27, 2023 · Haolin Wang, Yafei Ou, Wanxuan Fang, Prasoon Ambalathankandy, Naoto Goto, Gen Ota, Masayuki Ikebe, Tamotsu Kamishima

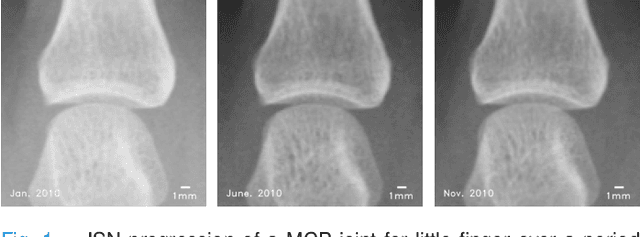

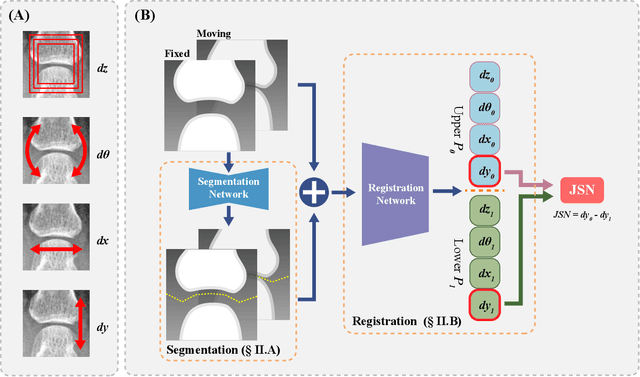

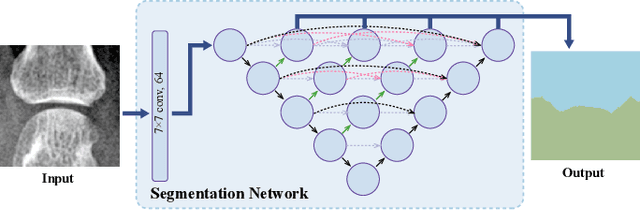

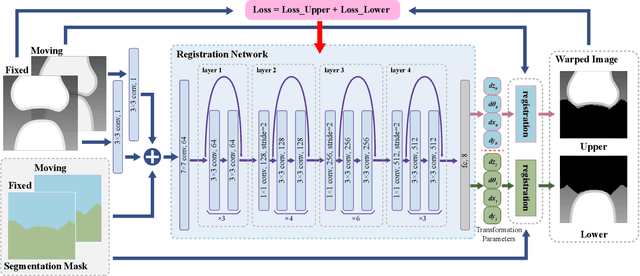

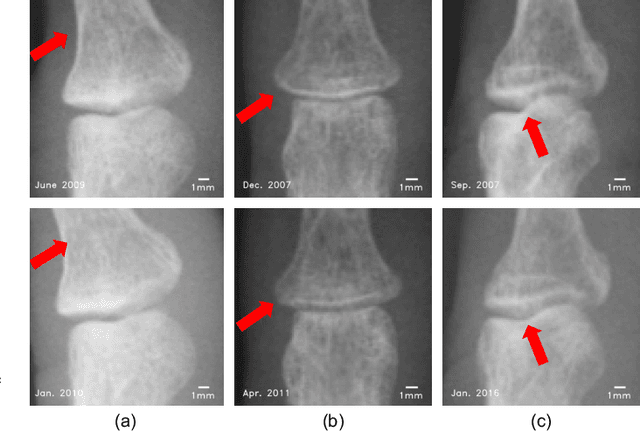

Rheumatoid arthritis (RA) is a chronic autoimmune inflammatory disease that results in progressive articular destruction and severe disability. Joint space narrowing (JSN) progression has been regarded as an important indicator of RA progression and has received sustained attention. In the diagnosis and monitoring of RA, radiology plays a crucial role in monitoring joint space. A new framework for monitoring joint space by quantifying JSN progression through image registration in radiographic images has been developed. This framework offers the advantage of high accuracy; however, challenges remain in reducing mismatches and improving reliability. In this work, a deep intra-subject rigid registration network is proposed to automatically quantify JSN progression in the early stage of RA. In our experiments, the mean squared error of the Euclidean distance between the moving and fixed images is 0.0031, the standard deviation is 0.0661 mm, and the mismatching rate is 0.48%. The proposed method has sub-pixel accuracy, far exceeding manual measurements, and is robust to noise, rotation, and scaling of joints. Moreover, this work provides loss visualization, which can aid radiologists and rheumatologists in assessing quantification reliability, with important implications for possible future clinical applications. As a result, we are optimistic that the proposed work will make a significant contribution to the automatic quantification of JSN progression in RA.

A Sub-pixel Accurate Quantification of Joint Space Narrowing Progression in Rheumatoid Arthritis

May 19, 2022 · Yafei Ou, Prasoon Ambalathankandy, Ryunosuke Furuya, Seiya Kawada, Tianyu Zeng, Yujie An, Tamotsu Kamishima, Kenichi Tamura, Masayuki Ikebe

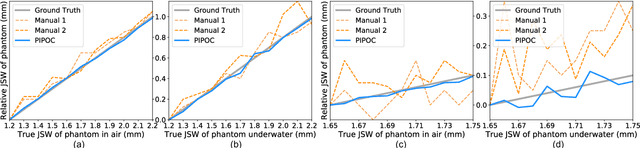

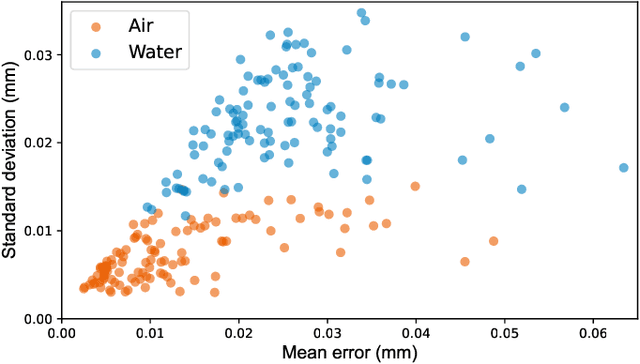

Rheumatoid arthritis (RA) is a chronic autoimmune disease that primarily affects peripheral synovial joints, such as those of the fingers, wrists and feet. Radiology plays a critical role in the diagnosis and monitoring of RA. Because of the limited spatial resolution of current radiographic imaging, joint space narrowing (JSN) progression in RA can amount to less than one pixel per year at commonly used spatial resolutions. Insensitive monitoring of JSN can hinder the radiologist or rheumatologist from making a proper and timely clinical judgment. In this paper, we propose a novel and sensitive method, which we call partial image phase-only correlation, that aims to automatically quantify JSN progression in the early stages of RA. The majority of the current literature utilizes the mean error, root-mean-square deviation and standard deviation to report accuracy at the pixel level. Our work measures JSN progression between a baseline and its follow-up finger joint images by using the phase spectrum in the frequency domain. With this method, the mean error is reduced to 0.0130 mm on phantom radiographs with ground truth, and the standard deviation is 0.0519 mm on clinical radiographs. With its sub-pixel accuracy far beyond manual measurement, we are optimistic that our work is promising for automatically quantifying JSN progression.
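The core idea of phase-only correlation (POC), which uses only the phase spectrum to find the displacement between two signals, can be sketched in one dimension as follows. This is a minimal, generic illustration and not the partial image variant proposed in the paper: it assumes circular shifts, uses a naive O(n²) DFT to stay dependency-free, and recovers integer shifts only, whereas sub-pixel accuracy requires an additional peak-fitting step that is omitted here. All function names are hypothetical.

```python
import cmath
import math


def dft(x, inverse=False):
    """Naive discrete Fourier transform (O(n^2)), adequate for short signals."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        s = sum(x[t] * cmath.exp(sign * 2j * math.pi * k * t / n)
                for t in range(n))
        out.append(s / n if inverse else s)
    return out


def phase_only_correlation(f, g, eps=1e-12):
    """Return the POC function of two equal-length real signals."""
    F = dft(f)
    G = dft(g)
    # Cross-power spectrum; dividing by its magnitude keeps only the phase.
    cross = [b * a.conjugate() for a, b in zip(F, G)]
    normalized = [c / max(abs(c), eps) for c in cross]
    return [v.real for v in dft(normalized, inverse=True)]


def estimate_shift(f, g):
    """Estimate the integer circular shift d such that g[t] = f[t - d]."""
    poc = phase_only_correlation(f, g)
    n = len(poc)
    peak = max(range(n), key=lambda i: poc[i])
    # Peak indices in the upper half correspond to negative shifts.
    return peak if peak <= n // 2 else peak - n


baseline = [0, 1, 4, 2, 7, 3, 0, 5, 9, 2, 6, 1, 8, 3, 5, 2]
n = len(baseline)
follow_up = [baseline[(t - 3) % n] for t in range(n)]  # shifted right by 3
print(estimate_shift(baseline, follow_up))
```

For an exact circular shift the POC function is close to a unit impulse at the displacement, which is what makes the peak easy to localize even when the displacement is small.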

A color temperature-based high-speed decolorization: an empirical approach for tone mapping applications

Aug 31, 2021 · Prasoon Ambalathankandy, Yafei Ou, Masayuki Ikebe

Grayscale images are fundamental to many image processing applications like data compression, feature extraction, printing and tone mapping. However, some image information is lost when converting from color to grayscale. In this paper, we propose a light-weight and high-speed image decolorization method based on human perception of color temperatures. Chromatic aberration results from differential refraction of light depending on its wavelength. It causes some rays corresponding to cooler colors (like blue, green) to converge before the warmer colors (like red, orange). This phenomenon creates a perception of warm colors "advancing" toward the eye, while cool colors appear to be "receding". In the proposed color-to-gray conversion model, we implement a weighted blending function to combine the red (perceived warm) and blue (perceived cool) channels. Our main contribution is threefold: First, we implement a high-speed color processing method using exact pixel-by-pixel processing, and we report a 5.7× speed-up when compared to other new algorithms. Second, our optimal color conversion method produces luminance in images that is comparable to other state-of-the-art methods, which we quantified using objective metrics (E-score and C2G-SSIM) and a subjective user study. Third, we demonstrate that an effective luminance distribution can be achieved with our algorithm in global and local tone mapping applications.
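The general shape of a weighted red/blue blend can be sketched as below. The abstract does not give the actual blending function or its weights, so the single fixed `warm_weight` parameter and the function names are assumptions made purely for illustration; the published method may compute the weight differently, for example per pixel.

```python
def blend_red_blue(pixel, warm_weight=0.5):
    """Blend the red and blue channels of one (r, g, b) pixel into a gray value.

    warm_weight is a hypothetical parameter: the share given to the red
    (perceived warm) channel; the blue channel receives the remainder.
    """
    r, _g, b = pixel
    return warm_weight * r + (1.0 - warm_weight) * b


def decolorize(image, warm_weight=0.5):
    """Apply the blend independently to every pixel of a nested-list image."""
    if not 0.0 <= warm_weight <= 1.0:
        raise ValueError("warm_weight must lie in [0, 1]")
    return [[blend_red_blue(p, warm_weight) for p in row] for row in image]
```

Each output value depends only on the corresponding input pixel, with no neighborhood or iterative optimization step, which is consistent with the pixel-by-pixel processing the abstract credits for the method's speed.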
