Incremental Bounded Model Checking of Artificial Neural Networks in CUDA

Jul 30, 2019 · Luiz H. Sena, Iury V. Bessa, Mikhail R. Gadelha, Lucas C. Cordeiro, Edjard Mota

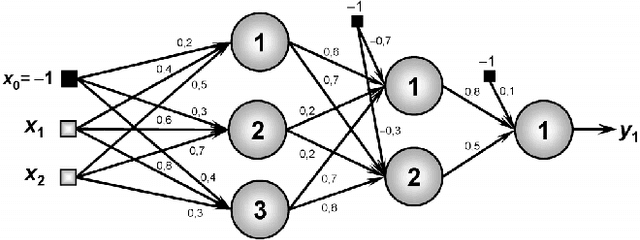

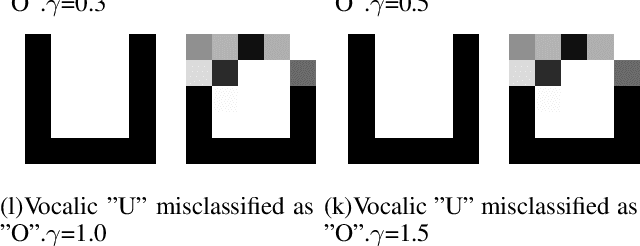

Artificial neural networks (ANNs) are powerful computing systems employed in various applications because of their ability to generalize and to respond to unexpected inputs and patterns. However, implementations of ANNs in safety-critical systems might lead to failures that are hard to predict in the design phase, since ANNs are highly parallel and their parameters are hard to interpret. Here we develop and evaluate a novel symbolic software verification framework based on incremental bounded model checking (BMC) to check for adversarial cases and coverage methods in multi-layer perceptrons (MLPs). In particular, we further develop the Efficient SMT-based Context-Bounded Model Checker for Graphical Processing Units (ESBMC-GPU) in order to ensure the reliability of certain safety properties in which safety-critical systems can fail and make incorrect decisions, thereby leading to unwanted material damage or even putting lives in danger. This paper presents the first symbolic verification framework to reason over ANNs implemented in CUDA. Our experimental results show that our approach implemented in ESBMC-GPU can successfully verify safety properties and covering methods in ANNs and correctly generate 28 adversarial cases in MLPs.

Self-organized inductive reasoning with NeMuS

Jun 16, 2019 · Leonardo Barreto, Edjard Mota

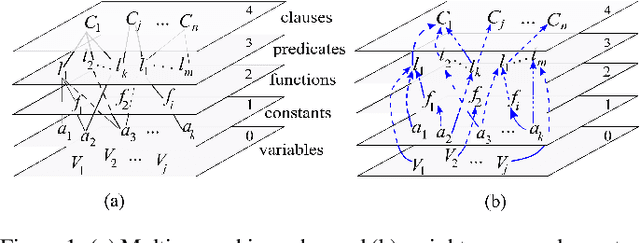

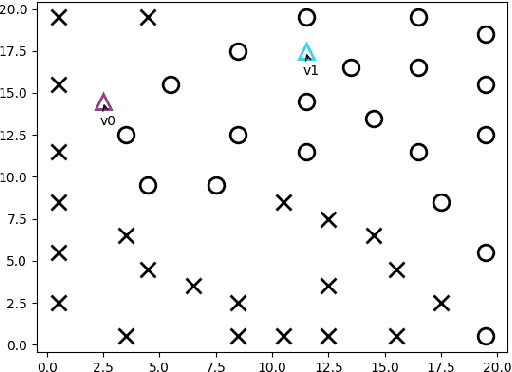

Neural Multi-Space (NeMuS) is a weighted multi-space representation for a portion of first-order logic designed for use with machine learning and neural network methods. It has been shown to support reasoning based on regions forming patterns of refutation, as well as inductive learning in an ILP-like style. Initial experiments were carried out to investigate whether a self-organizing approach is suitable for generating similar concept regions according to the attributes that form such concepts. We present the results and analyze the suitability of the method for inductive learning with NeMuS.

Efficient predicate invention using shared "NeMuS"

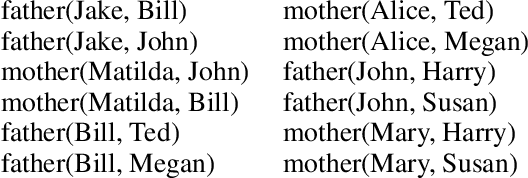

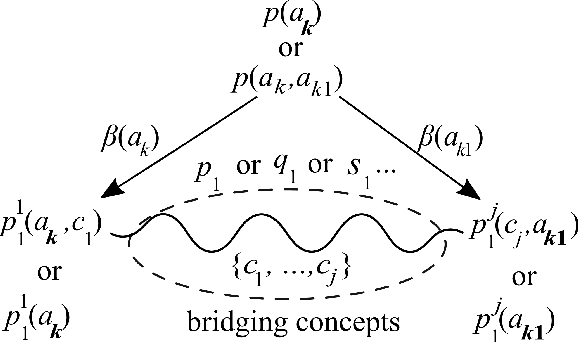

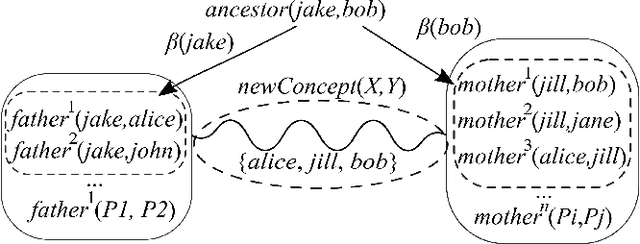

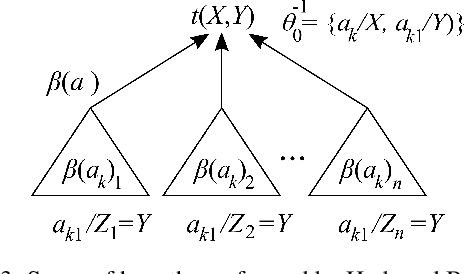

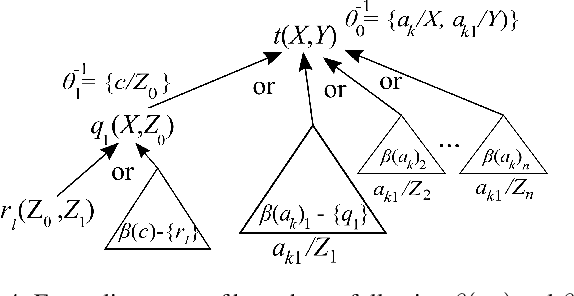

Jun 15, 2019 · Edjard Mota, Jacob M. Howe, Ana Schramm, Artur d'Avila Garcez

Amao is a cognitive agent framework that tackles the invention of predicates with a strategy different from recent Inductive Logic Programming (ILP) approaches such as the Meta-Interpretive Learning (MIL) technique. It uses a Neural Multi-Space (NeMuS) graph structure to anti-unify atoms from the Herbrand base, which passes in the inductive momentum check. Inductive Clause Learning (ICL), as it is called, is extended here by using the weights of logical components, already present in NeMuS, to support inductive learning by expanding clause candidates with anti-unified atoms. An efficient invention mechanism is achieved, including the learning of recursive hypotheses, while restricting the shape of the hypothesis by adding bias definitions or idiosyncrasies of the language.
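For readers unfamiliar with anti-unification, the following is a minimal sketch of the textbook first-order operation (least general generalization of two atoms). It is not Amao's or NeMuS's implementation: the tuple encoding of atoms, the function name `anti_unify`, and the variable names `X0`, `X1`, ... are assumptions made for illustration, and the weights and graph structure described in the abstract are not modeled.

```python
from itertools import count


def anti_unify(s, t, table=None, fresh=None):
    """Return a least general generalization of two terms.

    Hypothetical encoding: a compound term or atom is a tuple
    (functor, arg1, ..., argN); a constant is a plain string.
    """
    if table is None:
        table = {}       # maps each mismatching pair (s, t) to one variable
    if fresh is None:
        fresh = count()  # supplies indices for new variable names
    if s == t:
        return s         # identical subterms are kept unchanged
    same_shape = (
        isinstance(s, tuple) and isinstance(t, tuple)
        and s[0] == t[0] and len(s) == len(t)
    )
    if same_shape:
        # Same functor and arity: generalize argument by argument.
        args = tuple(anti_unify(a, b, table, fresh) for a, b in zip(s[1:], t[1:]))
        return (s[0],) + args
    # Mismatch: reuse the variable already assigned to this exact pair,
    # so repeated disagreements generalize to the same variable.
    if (s, t) not in table:
        table[(s, t)] = f"X{next(fresh)}"
    return table[(s, t)]


if __name__ == "__main__":
    print(anti_unify(("parent", "ann", "bob"), ("parent", "carl", "dan")))
    print(anti_unify(("p", "a", "a"), ("p", "b", "b")))
```

Sharing one variable per mismatching pair is what keeps the result least general: generalizing `p(a, a)` and `p(b, b)` yields a single repeated variable rather than two unrelated ones.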
