PaintHuman: Towards High-fidelity Text-to-3D Human Texturing via Denoised Score Distillation

Oct 14, 2023Jianhui Yu, Hao Zhu, Liming Jiang, Chen Change Loy, Weidong Cai, Wayne Wu

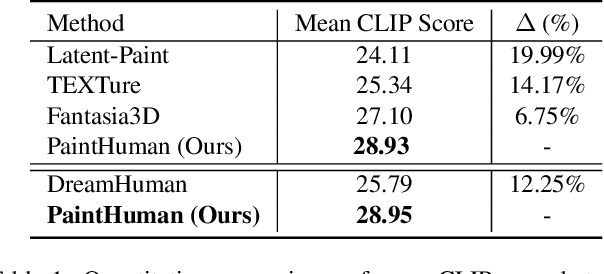

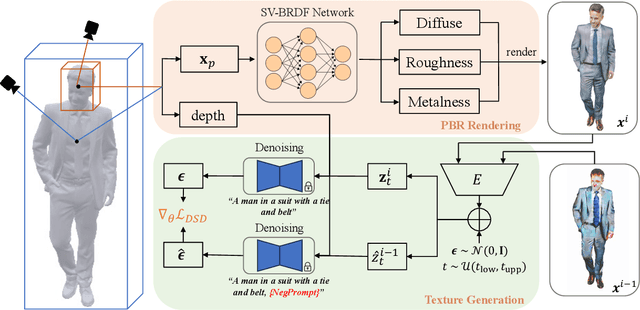

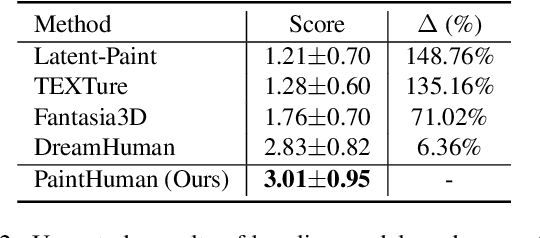

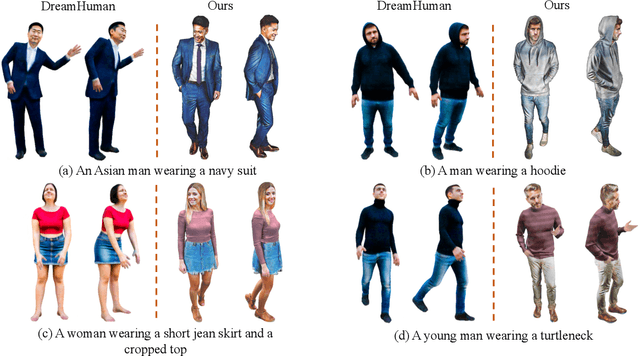

Recent advances in zero-shot text-to-3D human generation, which employ the human model prior (eg, SMPL) or Score Distillation Sampling (SDS) with pre-trained text-to-image diffusion models, have been groundbreaking. However, SDS may provide inaccurate gradient directions under the weak diffusion guidance, as it tends to produce over-smoothed results and generate body textures that are inconsistent with the detailed mesh geometry. Therefore, directly leverage existing strategies for high-fidelity text-to-3D human texturing is challenging. In this work, we propose a model called PaintHuman to addresses the challenges from two aspects. We first propose a novel score function, Denoised Score Distillation (DSD), which directly modifies the SDS by introducing negative gradient components to iteratively correct the gradient direction and generate high-quality textures. In addition, we use the depth map as a geometric guidance to ensure the texture is semantically aligned to human mesh surfaces. To guarantee the quality of rendered results, we employ geometry-aware networks to predict surface materials and render realistic human textures. Extensive experiments, benchmarked against state-of-the-art methods, validate the efficacy of our approach.

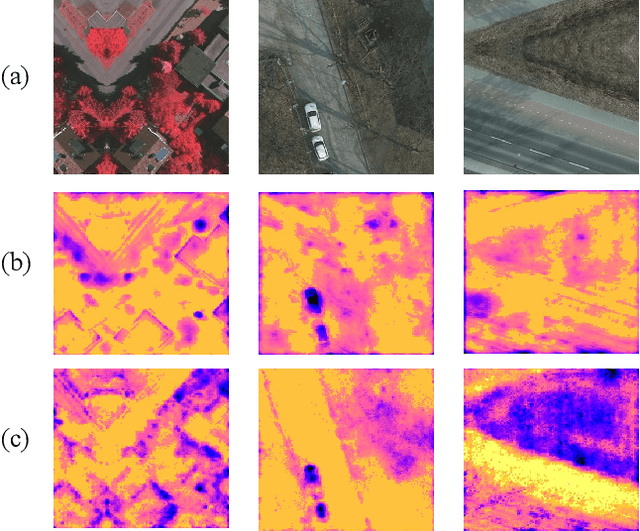

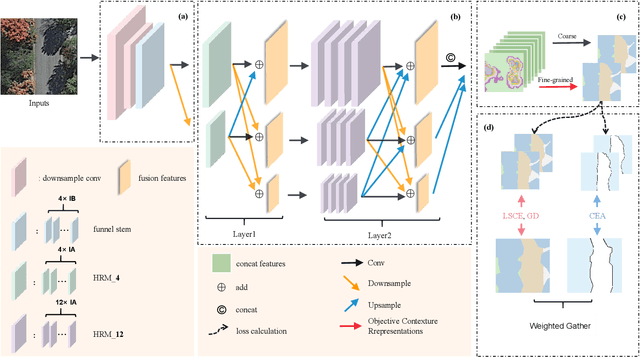

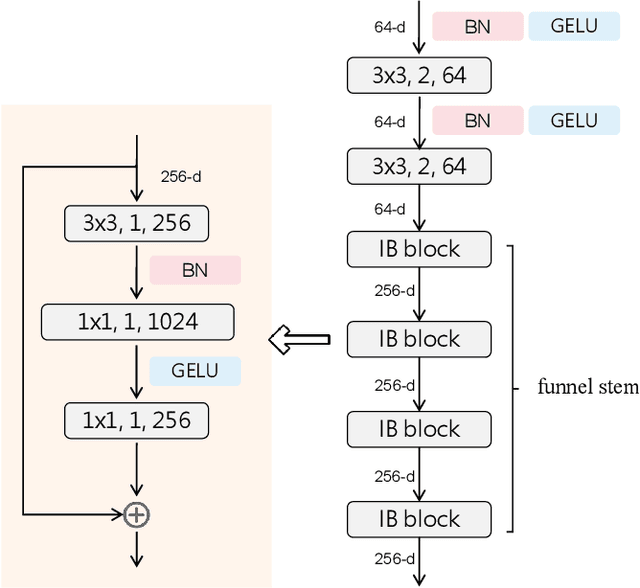

Hi-ResNet: A High-Resolution Remote Sensing Network for Semantic Segmentation

May 23, 2023Yuxia Chen, Pengcheng Fang, Jianhui Yu, Xiaoling Zhong, Xiaoming Zhang, Tianrui Li

High-resolution remote sensing (HRS) semantic segmentation extracts key objects from high-resolution coverage areas. However, objects of the same category within HRS images generally show significant differences in scale and shape across diverse geographical environments, making it difficult to fit the data distribution. Additionally, a complex background environment causes similar appearances of objects of different categories, which precipitates a substantial number of objects into misclassification as background. These issues make existing learning algorithms sub-optimal. In this work, we solve the above-mentioned problems by proposing a High-resolution remote sensing network (Hi-ResNet) with efficient network structure designs, which consists of a funnel module, a multi-branch module with stacks of information aggregation (IA) blocks, and a feature refinement module, sequentially, and Class-agnostic Edge Aware (CEA) loss. Specifically, we propose a funnel module to downsample, which reduces the computational cost, and extract high-resolution semantic information from the initial input image. Secondly, we downsample the processed feature images into multi-resolution branches incrementally to capture image features at different scales and apply IA blocks, which capture key latent information by leveraging attention mechanisms, for effective feature aggregation, distinguishing image features of the same class with variant scales and shapes. Finally, our feature refinement module integrate the CEA loss function, which disambiguates inter-class objects with similar shapes and increases the data distribution distance for correct predictions. With effective pre-training strategies, we demonstrated the superiority of Hi-ResNet over state-of-the-art methods on three HRS segmentation benchmarks.

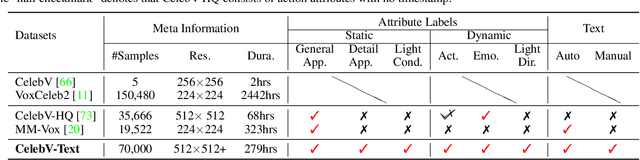

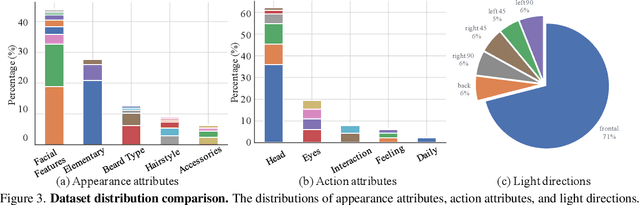

CelebV-Text: A Large-Scale Facial Text-Video Dataset

Mar 26, 2023Jianhui Yu, Hao Zhu, Liming Jiang, Chen Change Loy, Weidong Cai, Wayne Wu

Text-driven generation models are flourishing in video generation and editing. However, face-centric text-to-video generation remains a challenge due to the lack of a suitable dataset containing high-quality videos and highly relevant texts. This paper presents CelebV-Text, a large-scale, diverse, and high-quality dataset of facial text-video pairs, to facilitate research on facial text-to-video generation tasks. CelebV-Text comprises 70,000 in-the-wild face video clips with diverse visual content, each paired with 20 texts generated using the proposed semi-automatic text generation strategy. The provided texts are of high quality, describing both static and dynamic attributes precisely. The superiority of CelebV-Text over other datasets is demonstrated via comprehensive statistical analysis of the videos, texts, and text-video relevance. The effectiveness and potential of CelebV-Text are further shown through extensive self-evaluation. A benchmark is constructed with representative methods to standardize the evaluation of the facial text-to-video generation task. All data and models are publicly available.

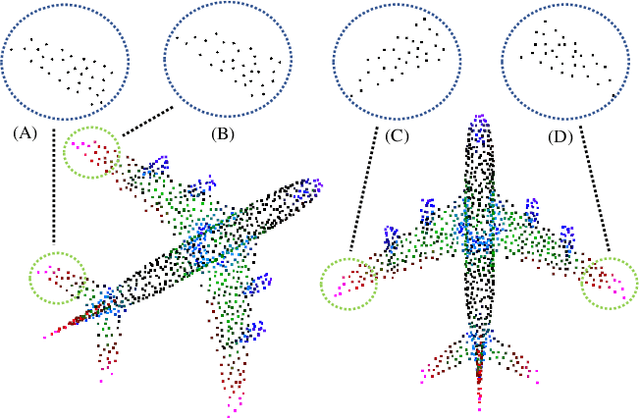

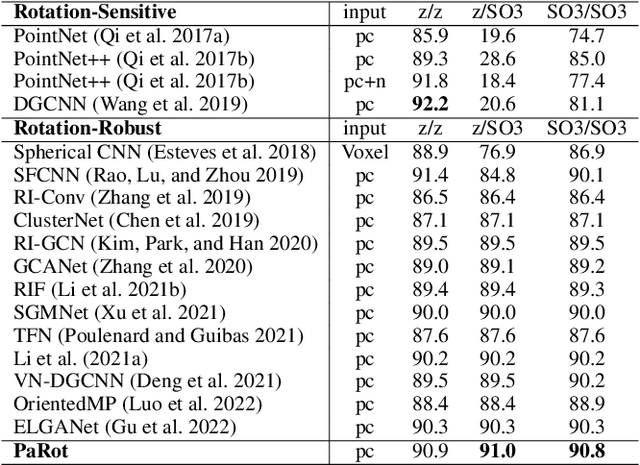

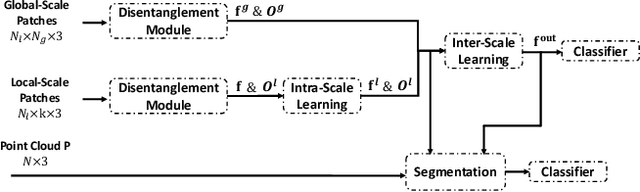

PaRot: Patch-Wise Rotation-Invariant Network via Feature Disentanglement and Pose Restoration

Feb 06, 2023Dingxin Zhang, Jianhui Yu, Chaoyi Zhang, Weidong Cai

Recent interest in point cloud analysis has led rapid progress in designing deep learning methods for 3D models. However, state-of-the-art models are not robust to rotations, which remains an unknown prior to real applications and harms the model performance. In this work, we introduce a novel Patch-wise Rotation-invariant network (PaRot), which achieves rotation invariance via feature disentanglement and produces consistent predictions for samples with arbitrary rotations. Specifically, we design a siamese training module which disentangles rotation invariance and equivariance from patches defined over different scales, e.g., the local geometry and global shape, via a pair of rotations. However, our disentangled invariant feature loses the intrinsic pose information of each patch. To solve this problem, we propose a rotation-invariant geometric relation to restore the relative pose with equivariant information for patches defined over different scales. Utilising the pose information, we propose a hierarchical module which implements intra-scale and inter-scale feature aggregation for 3D shape learning. Moreover, we introduce a pose-aware feature propagation process with the rotation-invariant relative pose information embedded. Experiments show that our disentanglement module extracts high-quality rotation-robust features and the proposed lightweight model achieves competitive results in rotated 3D object classification and part segmentation tasks. Our project page is released at: https://patchrot.github.io/.

Rethinking Rotation Invariance with Point Cloud Registration

Dec 31, 2022Jianhui Yu, Chaoyi Zhang, Weidong Cai

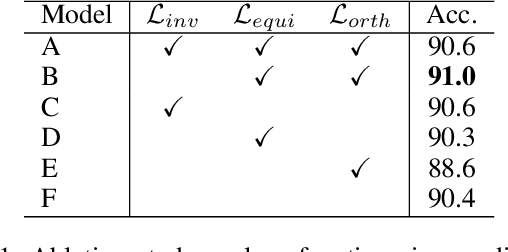

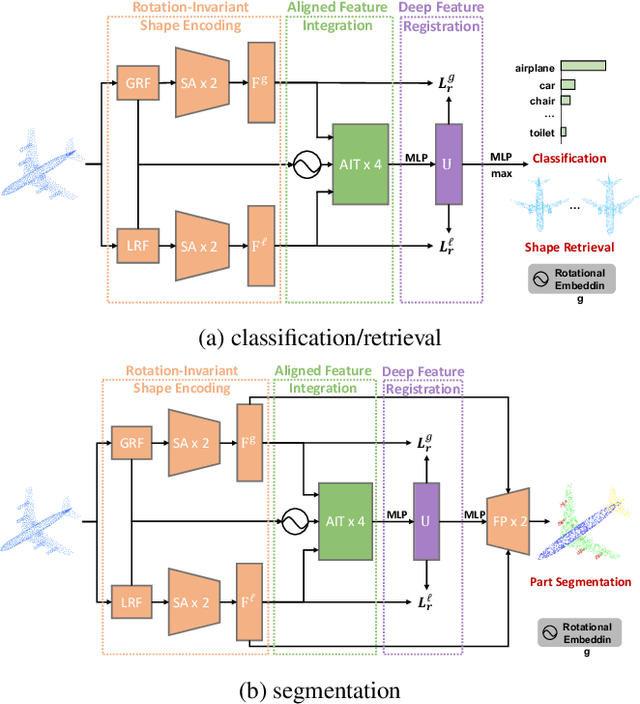

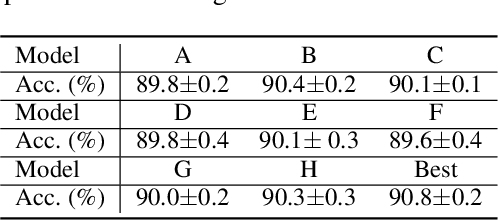

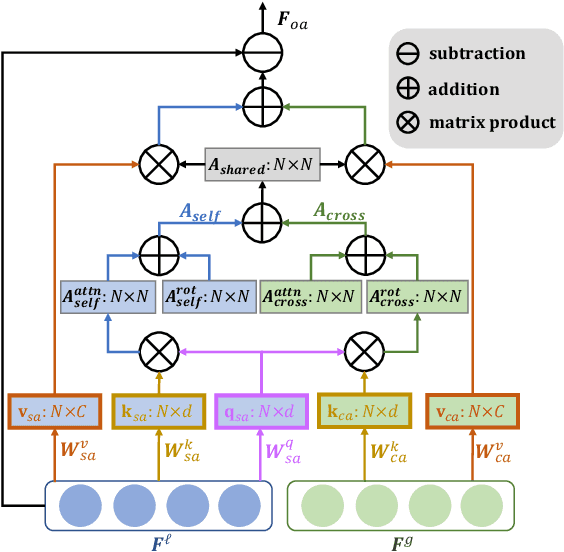

Recent investigations on rotation invariance for 3D point clouds have been devoted to devising rotation-invariant feature descriptors or learning canonical spaces where objects are semantically aligned. Examinations of learning frameworks for invariance have seldom been looked into. In this work, we review rotation invariance in terms of point cloud registration and propose an effective framework for rotation invariance learning via three sequential stages, namely rotation-invariant shape encoding, aligned feature integration, and deep feature registration. We first encode shape descriptors constructed with respect to reference frames defined over different scales, e.g., local patches and global topology, to generate rotation-invariant latent shape codes. Within the integration stage, we propose Aligned Integration Transformer to produce a discriminative feature representation by integrating point-wise self- and cross-relations established within the shape codes. Meanwhile, we adopt rigid transformations between reference frames to align the shape codes for feature consistency across different scales. Finally, the deep integrated feature is registered to both rotation-invariant shape codes to maximize feature similarities, such that rotation invariance of the integrated feature is preserved and shared semantic information is implicitly extracted from shape codes. Experimental results on 3D shape classification, part segmentation, and retrieval tasks prove the feasibility of our work. Our project page is released at: https://rotation3d.github.io/.

Spatiality-guided Transformer for 3D Dense Captioning on Point Clouds

Apr 22, 2022Heng Wang, Chaoyi Zhang, Jianhui Yu, Weidong Cai

Dense captioning in 3D point clouds is an emerging vision-and-language task involving object-level 3D scene understanding. Apart from coarse semantic class prediction and bounding box regression as in traditional 3D object detection, 3D dense captioning aims at producing a further and finer instance-level label of natural language description on visual appearance and spatial relations for each scene object of interest. To detect and describe objects in a scene, following the spirit of neural machine translation, we propose a transformer-based encoder-decoder architecture, namely SpaCap3D, to transform objects into descriptions, where we especially investigate the relative spatiality of objects in 3D scenes and design a spatiality-guided encoder via a token-to-token spatial relation learning objective and an object-centric decoder for precise and spatiality-enhanced object caption generation. Evaluated on two benchmark datasets, ScanRefer and ReferIt3D, our proposed SpaCap3D outperforms the baseline method Scan2Cap by 4.94% and 9.61% in CIDEr@0.5IoU, respectively. Our project page with source code and supplementary files is available at https://SpaCap3D.github.io/ .

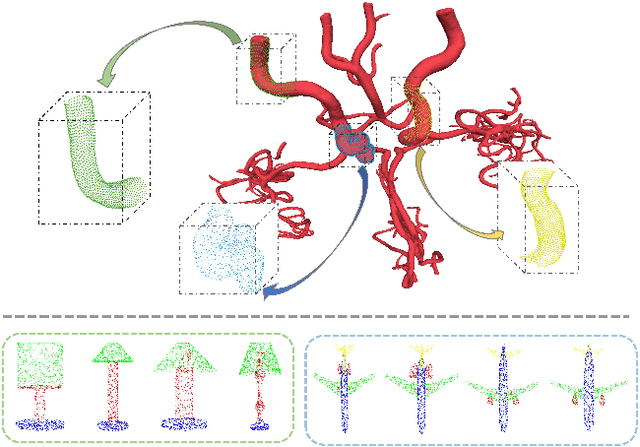

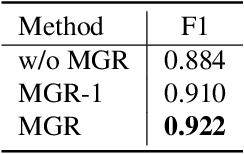

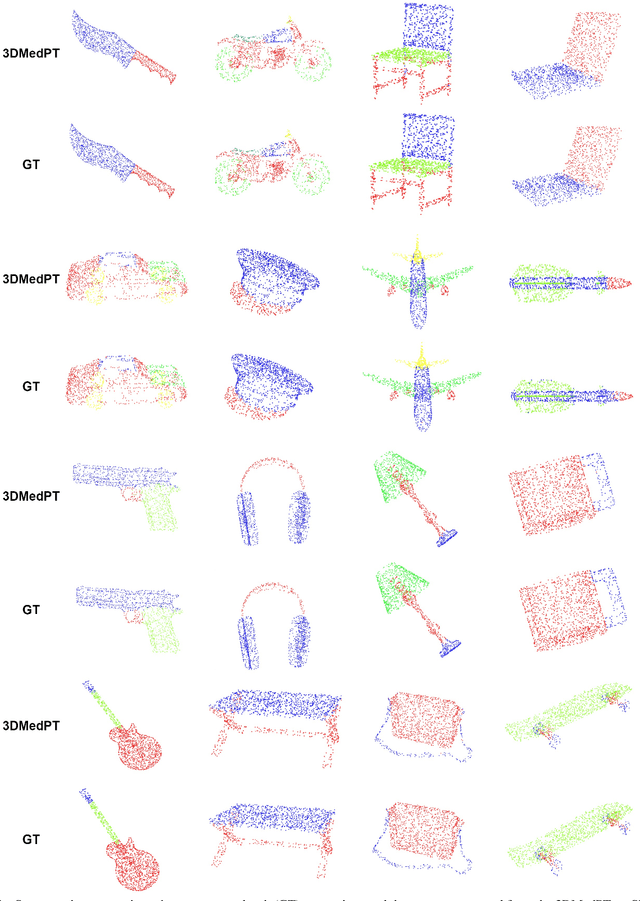

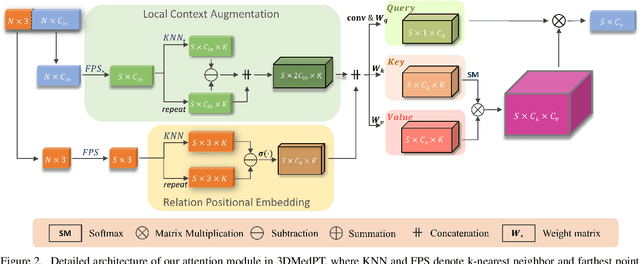

3D Medical Point Transformer: Introducing Convolution to Attention Networks for Medical Point Cloud Analysis

Dec 17, 2021Jianhui Yu, Chaoyi Zhang, Heng Wang, Dingxin Zhang, Yang Song, Tiange Xiang, Dongnan Liu, Weidong Cai

General point clouds have been increasingly investigated for different tasks, and recently Transformer-based networks are proposed for point cloud analysis. However, there are barely related works for medical point clouds, which are important for disease detection and treatment. In this work, we propose an attention-based model specifically for medical point clouds, namely 3D medical point Transformer (3DMedPT), to examine the complex biological structures. By augmenting contextual information and summarizing local responses at query, our attention module can capture both local context and global content feature interactions. However, the insufficient training samples of medical data may lead to poor feature learning, so we apply position embeddings to learn accurate local geometry and Multi-Graph Reasoning (MGR) to examine global knowledge propagation over channel graphs to enrich feature representations. Experiments conducted on IntrA dataset proves the superiority of 3DMedPT, where we achieve the best classification and segmentation results. Furthermore, the promising generalization ability of our method is validated on general 3D point cloud benchmarks: ModelNet40 and ShapeNetPart. Code is released.

Voxel-wise Cross-Volume Representation Learning for 3D Neuron Reconstruction

Aug 14, 2021Heng Wang, Chaoyi Zhang, Jianhui Yu, Yang Song, Siqi Liu, Wojciech Chrzanowski, Weidong Cai

Automatic 3D neuron reconstruction is critical for analysing the morphology and functionality of neurons in brain circuit activities. However, the performance of existing tracing algorithms is hinged by the low image quality. Recently, a series of deep learning based segmentation methods have been proposed to improve the quality of raw 3D optical image stacks by removing noises and restoring neuronal structures from low-contrast background. Due to the variety of neuron morphology and the lack of large neuron datasets, most of current neuron segmentation models rely on introducing complex and specially-designed submodules to a base architecture with the aim of encoding better feature representations. Though successful, extra burden would be put on computation during inference. Therefore, rather than modifying the base network, we shift our focus to the dataset itself. The encoder-decoder backbone used in most neuron segmentation models attends only intra-volume voxel points to learn structural features of neurons but neglect the shared intrinsic semantic features of voxels belonging to the same category among different volumes, which is also important for expressive representation learning. Hence, to better utilise the scarce dataset, we propose to explicitly exploit such intrinsic features of voxels through a novel voxel-level cross-volume representation learning paradigm on the basis of an encoder-decoder segmentation model. Our method introduces no extra cost during inference. Evaluated on 42 3D neuron images from BigNeuron project, our proposed method is demonstrated to improve the learning ability of the original segmentation model and further enhancing the reconstruction performance.

Walk in the Cloud: Learning Curves for Point Clouds Shape Analysis

May 04, 2021Tiange Xiang, Chaoyi Zhang, Yang Song, Jianhui Yu, Weidong Cai

Discrete point cloud objects lack sufficient shape descriptors of 3D geometries. In this paper, we present a novel method for aggregating hypothetical curves in point clouds. Sequences of connected points (curves) are initially grouped by taking guided walks in the point clouds, and then subsequently aggregated back to augment their point-wise features. We provide an effective implementation of the proposed aggregation strategy including a novel curve grouping operator followed by a curve aggregation operator. Our method was benchmarked on several point cloud analysis tasks where we achieved the state-of-the-art classification accuracy of 94.2% on the ModelNet40 classification task, instance IoU of 86.8 on the ShapeNetPart segmentation task and cosine error of 0.11 on the ModelNet40 normal estimation task

Exploiting Edge-Oriented Reasoning for 3D Point-based Scene Graph Analysis

Mar 31, 2021Chaoyi Zhang, Jianhui Yu, Yang Song, Weidong Cai

Scene understanding is a critical problem in computer vision. In this paper, we propose a 3D point-based scene graph generation ($\mathbf{SGG_{point}}$) framework to effectively bridge perception and reasoning to achieve scene understanding via three sequential stages, namely scene graph construction, reasoning, and inference. Within the reasoning stage, an EDGE-oriented Graph Convolutional Network ($\texttt{EdgeGCN}$) is created to exploit multi-dimensional edge features for explicit relationship modeling, together with the exploration of two associated twinning interaction mechanisms between nodes and edges for the independent evolution of scene graph representations. Overall, our integrated $\mathbf{SGG_{point}}$ framework is established to seek and infer scene structures of interest from both real-world and synthetic 3D point-based scenes. Our experimental results show promising edge-oriented reasoning effects on scene graph generation studies. We also demonstrate our method advantage on several traditional graph representation learning benchmark datasets, including the node-wise classification on citation networks and whole-graph recognition problems for molecular analysis.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge