Use of Student's t-Distribution for the Latent Layer in a Coupled Variational Autoencoder

Nov 21, 2020 · Kevin R. Chen, Daniel Svoboda, Kenric P. Nelson

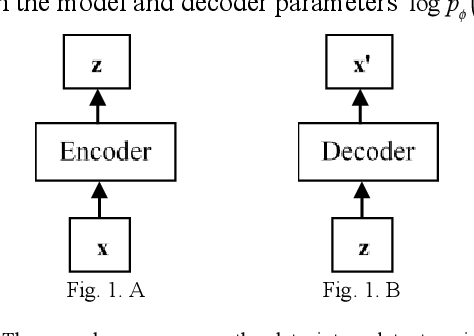

A Coupled Variational Autoencoder, which incorporates both a generalized loss function and latent layer distribution, shows improvement in the accuracy and robustness of generated replicas of MNIST numerals. The latent layer uses a Student's t-distribution to incorporate heavy-tail decay. The loss function uses a coupled logarithm, which increases the penalty on images with outlier likelihood. The generalized mean of the generated image's likelihood is used to measure the performance of the algorithm's decisiveness, accuracy, and robustness.
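
As a rough illustration of how a heavy-tailed latent layer can be sampled, the sketch below draws latent codes from a location-scale Student's t-distribution using the standard construction of a t variate as a Gaussian divided by the square root of a scaled chi-square. The function name `sample_student_t_latent`, the degrees of freedom `nu=3.0`, and the array shapes are assumptions made for illustration; this is not the authors' implementation.

```python
import numpy as np

def sample_student_t_latent(mu, sigma, nu, rng):
    """Draw z = mu + sigma * t, with t a standard Student's t variate.

    Uses t = eps / sqrt(v / nu), where eps ~ N(0, 1) and v ~ chi-square(nu).
    Each latent dimension gets its own chi-square draw, so the dimensions
    are independent univariate t variates (an assumption of this sketch,
    not a multivariate t).
    """
    eps = rng.standard_normal(mu.shape)
    v = rng.chisquare(nu, size=mu.shape)
    return mu + sigma * eps / np.sqrt(v / nu)

rng = np.random.default_rng(0)
mu = np.zeros((10000, 2))      # hypothetical encoder means
sigma = np.ones((10000, 2))    # hypothetical encoder scales

z_heavy = sample_student_t_latent(mu, sigma, nu=3.0, rng=rng)
z_gauss = mu + sigma * rng.standard_normal(mu.shape)

# Fraction of samples more than 4 scale units from the mean
tail_heavy = float(np.mean(np.abs(z_heavy) > 4.0))
tail_gauss = float(np.mean(np.abs(z_gauss) > 4.0))
print(f"tail fraction (t, nu=3): {tail_heavy:.4f}")
print(f"tail fraction (Gaussian): {tail_gauss:.4f}")
```

Smaller values of `nu` give heavier tails, and the samples approach the Gaussian case as `nu` grows large.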

Applying the Decisiveness and Robustness Metrics to Convolutional Neural Networks

May 29, 2020 · Christopher A. George, Eduardo A. Barrera, Kenric P. Nelson

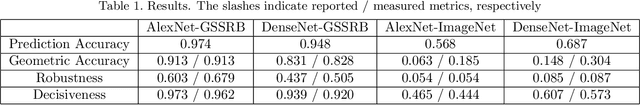

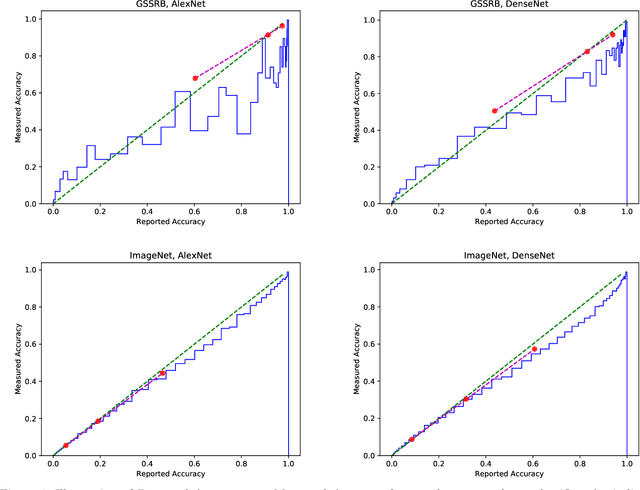

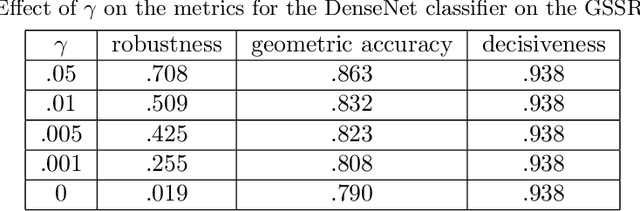

We review three recently proposed classifier quality metrics and consider their suitability for large-scale classification challenges such as applying convolutional neural networks to the 1000-class ImageNet dataset. These metrics, referred to as the "geometric accuracy," "decisiveness," and "robustness," are based on the generalized mean ($\rho$ equals 0, 1, and -2/3, respectively) of the classifier's self-reported and measured probabilities of correct classification. We also propose some minor clarifications to standardize the metric definitions. With these updates, we show some examples of calculating the metrics using deep convolutional neural networks (AlexNet and DenseNet) acting on large datasets (the German Traffic Sign Recognition Benchmark and ImageNet).
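
The three metrics named above can be sketched as generalized (power) means of the probabilities a classifier assigns to the correct class. The example below is a minimal sketch under that assumption; the function name `generalized_mean` and the sample probabilities are hypothetical, and the paper's standardized definitions may include details not shown here.

```python
import numpy as np

def generalized_mean(probs, rho):
    """Power mean (mean(p**rho))**(1/rho); the rho -> 0 limit is the geometric mean."""
    p = np.asarray(probs, dtype=float)
    if np.any(p <= 0):
        raise ValueError("probabilities must be strictly positive")
    if rho == 0:
        return float(np.exp(np.mean(np.log(p))))
    return float(np.mean(p ** rho) ** (1.0 / rho))

# Hypothetical probabilities assigned to the true class for four test samples
probs = [0.9, 0.8, 0.95, 0.05]

geometric_accuracy = generalized_mean(probs, 0)      # rho = 0
decisiveness = generalized_mean(probs, 1)            # rho = 1 (arithmetic mean)
robustness = generalized_mean(probs, -2.0 / 3.0)     # rho = -2/3

print(f"decisiveness:       {decisiveness:.4f}")
print(f"geometric accuracy: {geometric_accuracy:.4f}")
print(f"robustness:         {robustness:.4f}")
```

Because a power mean is non-decreasing in its exponent, the robustness never exceeds the geometric accuracy, which never exceeds the decisiveness. A single low-probability sample, such as the 0.05 above, pulls the robustness down far more than it pulls the decisiveness.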

Coupled VAE: Improved Accuracy and Robustness of a Variational Autoencoder

Jun 05, 2019 · Shichen Cao, Jingjing Li, Kenric P. Nelson, Mark A. Kon

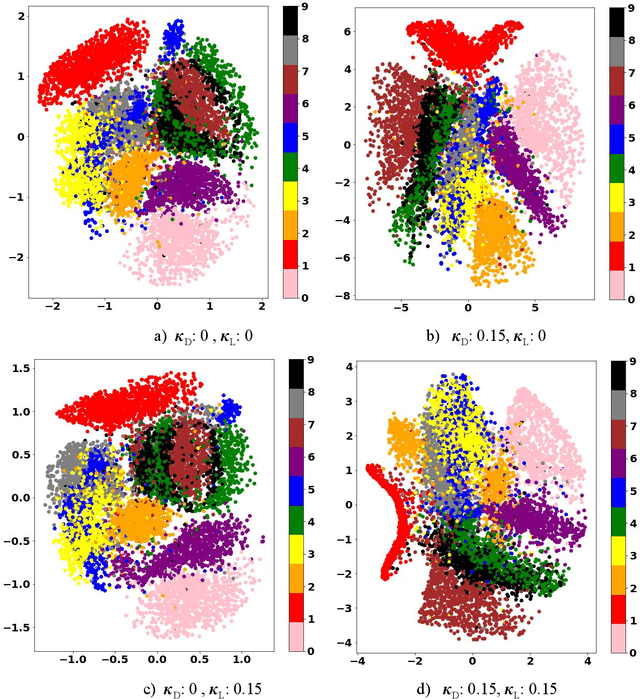

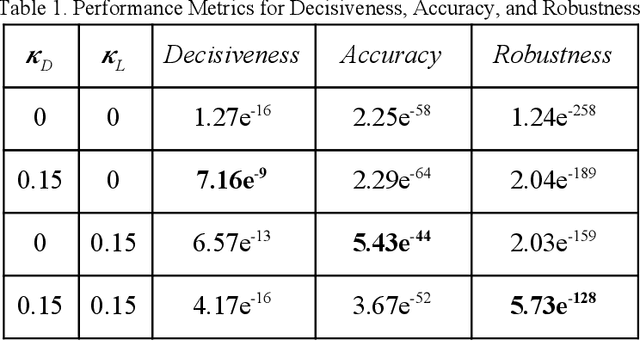

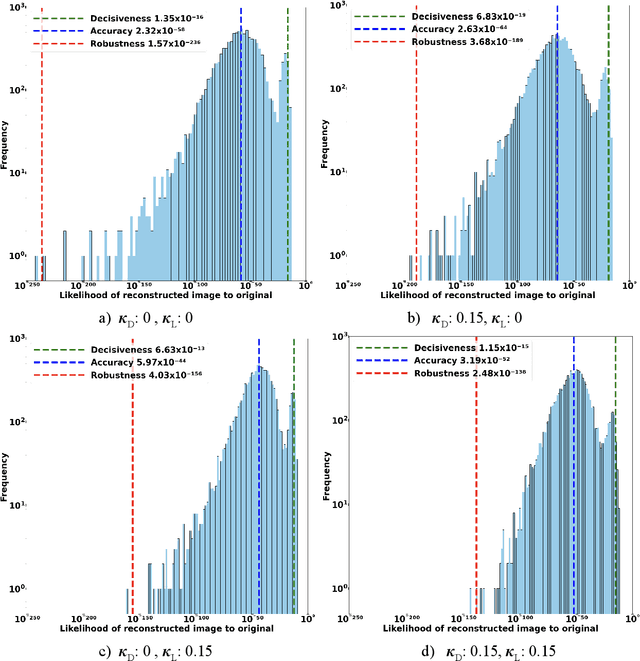

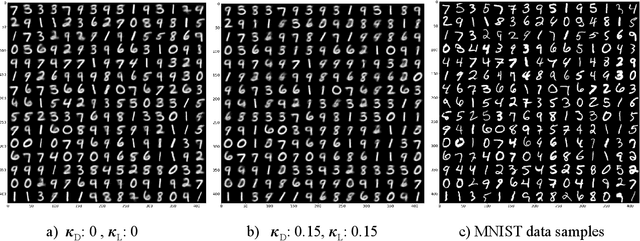

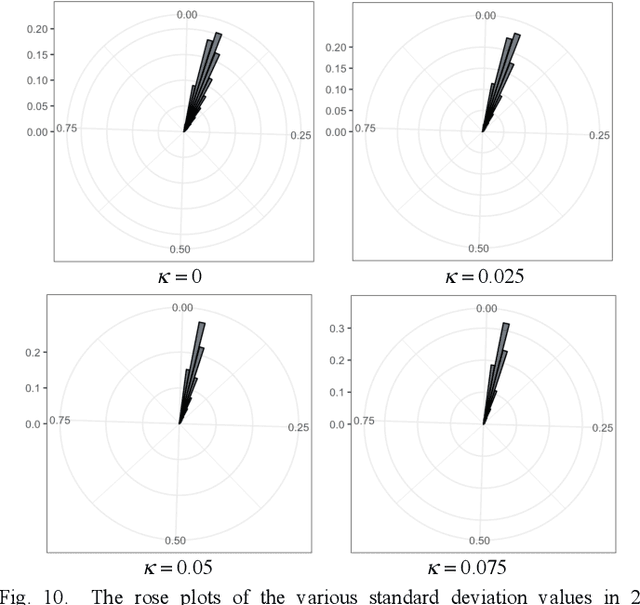

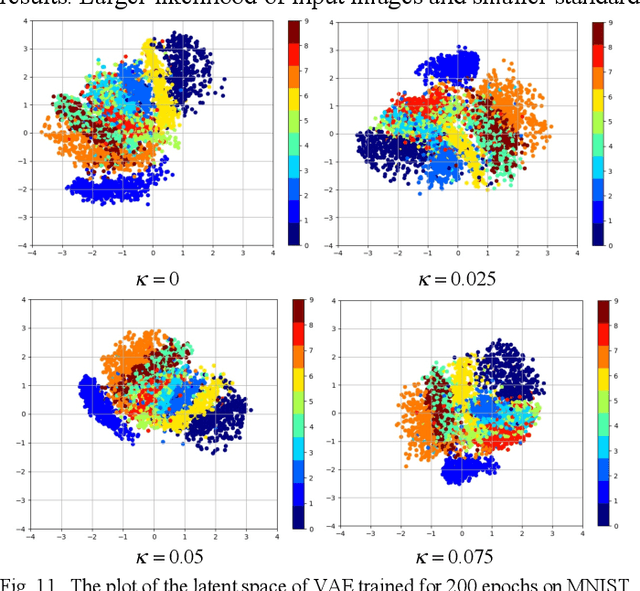

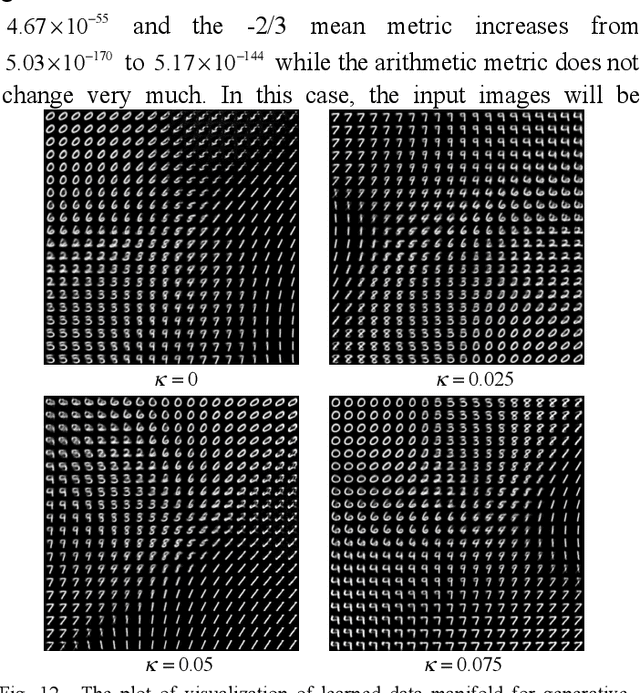

We present a coupled Variational Auto-Encoder (VAE) method that improves the accuracy and robustness of the probabilistic inferences on represented data. The new method models the dependency between input feature vectors (images) and weighs the outliers with a higher penalty by generalizing the original loss function to the coupled entropy function, using the principles of nonlinear statistical coupling. We evaluate the performance of the coupled VAE model using the MNIST dataset. Compared with the traditional VAE algorithm, the output images generated by the coupled VAE method are clearer and less blurry. The visualization of the input images embedded in a 2D latent variable space provides deeper insight into the structure of the new model with the coupled loss function: the latent variable has a smaller deviation and the output values are generated by a more compact latent space. We analyze the histogram of the likelihoods of the input images using the generalized mean, which measures the model's accuracy as a function of the relative risk. The neutral accuracy, which is the geometric mean and is consistent with a measure of the Shannon cross-entropy, is improved. The robust accuracy, measured by the -2/3 generalized mean, is also improved. The decisive accuracy, measured by the arithmetic mean, is unchanged.
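
To make the idea of a higher penalty on outliers concrete, the sketch below compares the ordinary log-loss with a penalty built from a generalized logarithm. It assumes the simplified form $\ln_\kappa(x) = (x^\kappa - 1)/\kappa$, which reduces to $\ln x$ as $\kappa \to 0$; the coupled logarithm and coupled entropy used in the paper may carry additional parameters, so the names `coupled_log` and `coupled_penalty` and the value `kappa = 0.5` are illustrative assumptions only.

```python
import math

def coupled_log(x, kappa):
    """Simplified generalized logarithm (assumed form): (x**kappa - 1) / kappa."""
    if x <= 0:
        raise ValueError("x must be positive")
    if kappa == 0:
        return math.log(x)
    return (x ** kappa - 1.0) / kappa

def coupled_penalty(p, kappa):
    """Penalty on a likelihood p, written as the generalized log of 1/p.

    With kappa = 0 this is the usual negative log-likelihood, -ln(p).
    """
    return coupled_log(1.0 / p, kappa)

kappa = 0.5  # hypothetical coupling value
for p in (0.9, 0.5, 0.01):
    standard = -math.log(p)
    coupled = coupled_penalty(p, kappa)
    print(f"p={p:<5} log-loss={standard:.3f} coupled={coupled:.3f} "
          f"ratio={coupled / standard:.2f}")
```

Under this assumed form with positive `kappa`, the penalty stays close to the log-loss for well-modeled inputs and grows much faster for low-likelihood inputs, which is the qualitative behavior the abstract describes.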

Probabilistic graphs using coupled random variables

Apr 23, 2014 · Kenric P. Nelson, Madalina Barbu, Brian J. Scannell

Neural network design has utilized flexible nonlinear processes which can mimic biological systems, but has suffered from a lack of traceability in the resulting network. Graphical probabilistic models ground network design in probabilistic reasoning, but the restrictions reduce the expressive capability of each node, making network designs complex. The ability to model coupled random variables using the calculus of nonextensive statistical mechanics provides a neural node design incorporating nonlinear coupling between input states while maintaining the rigor of probabilistic reasoning. A generalization of Bayes' rule using the coupled product enables a single node to model correlation between hundreds of random variables. A coupled Markov random field is designed for the inferencing and classification of UCI's MLR 'Multiple Features Data Set' such that thousands of linear correlation parameters can be replaced with a single coupling parameter, with only a 3% reduction in classification performance and a 4% reduction in inference performance.
