WSPAlign: Word Alignment Pre-training via Large-Scale Weakly Supervised Span Prediction

Jun 09, 2023 · Qiyu Wu, Masaaki Nagata, Yoshimasa Tsuruoka

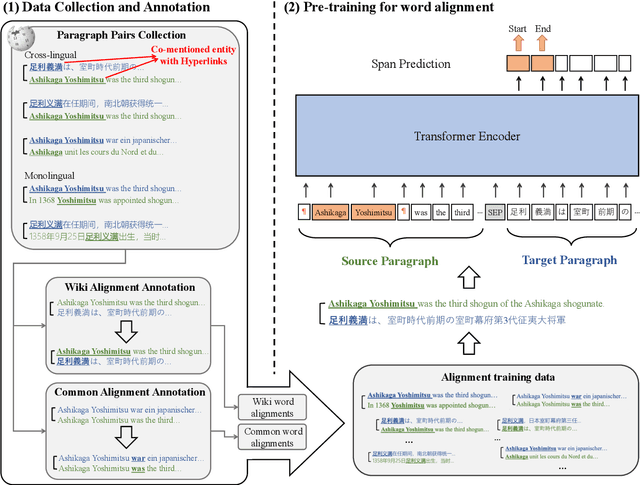

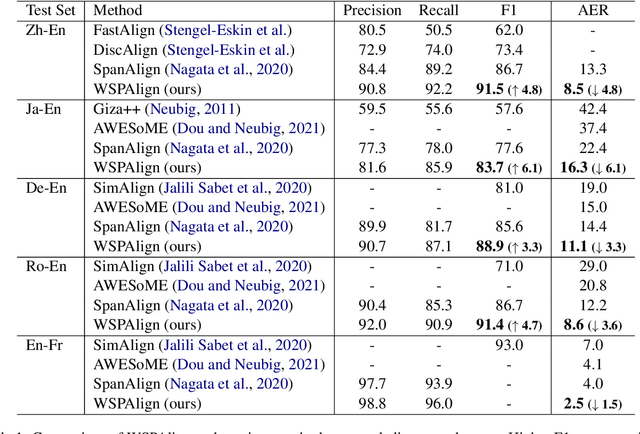

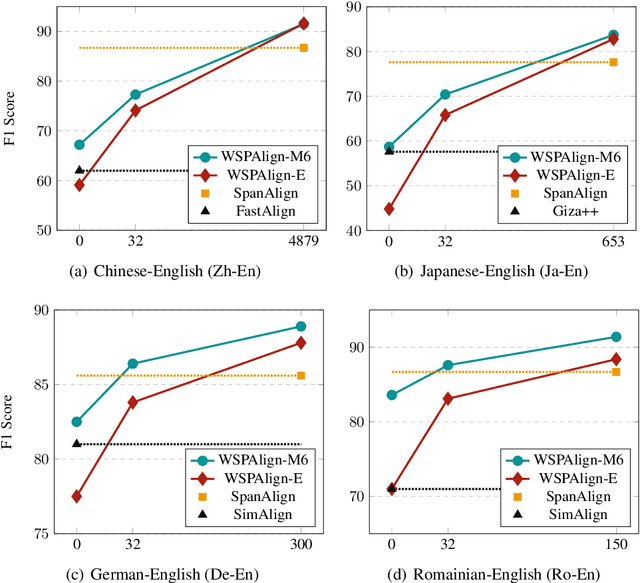

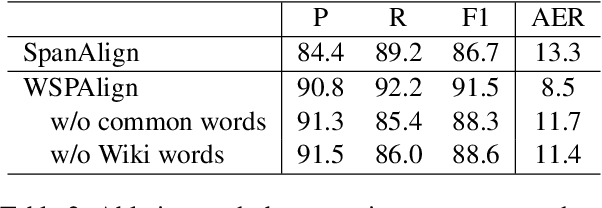

Most existing word alignment methods rely on manual alignment datasets or parallel corpora, which limits their usefulness. Here, to mitigate the dependence on manual data, we broaden the source of supervision by relaxing the requirement for correct, fully aligned, and parallel sentences. Specifically, we build supervision from noisy, partially aligned, and non-parallel paragraphs. We then use this large-scale weakly supervised dataset for word alignment pre-training via span prediction. Extensive experiments in various settings demonstrate that our approach, named WSPAlign, is an effective and scalable way to pre-train word aligners without manual data. When fine-tuned on standard benchmarks, WSPAlign sets a new state of the art, improving upon the best supervised baseline by 3.3 to 6.1 points in F1 and 1.5 to 6.1 points in AER. Furthermore, WSPAlign achieves competitive performance compared with the corresponding baselines in few-shot, zero-shot, and cross-lingual tests, which suggests that WSPAlign is potentially more practical for low-resource languages than existing methods.
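For readers unfamiliar with the two metrics reported above, the following is a minimal sketch of how precision, recall, F1, and alignment error rate (AER) are conventionally computed from sure and possible gold links. The function name `alignment_scores` and the toy link sets are illustrative assumptions, not taken from the paper.

```python
def alignment_scores(predicted, sure, possible):
    """Return (precision, recall, f1, aer) for sets of (src, tgt) index pairs.

    `possible` is expected to be a superset of `sure`.
    """
    possible = possible | sure
    hits_possible = len(predicted & possible)
    hits_sure = len(predicted & sure)
    precision = hits_possible / len(predicted)
    recall = hits_sure / len(sure)
    f1 = 2 * precision * recall / (precision + recall)
    # AER = 1 - (|A & S| + |A & P|) / (|A| + |S|); lower is better.
    aer = 1 - (hits_sure + hits_possible) / (len(predicted) + len(sure))
    return precision, recall, f1, aer


# Toy data (hypothetical): three predicted links, two sure links, one extra possible link.
predicted = {(0, 0), (1, 1), (2, 3)}
sure = {(0, 0), (1, 1)}
possible = sure | {(2, 2)}
precision, recall, f1, aer = alignment_scores(predicted, sure, possible)
```

Note that F1 is higher-is-better while AER is lower-is-better, so an "improvement" in AER means a reduction.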

Domain Adaptation of Machine Translation with Crowdworkers

Oct 28, 2022 · Makoto Morishita, Jun Suzuki, Masaaki Nagata

Although a machine translation model trained with a large in-domain parallel corpus achieves remarkable results, it still works poorly when no in-domain data are available. This situation restricts the applicability of machine translation when the target domain's data are limited. However, there is great demand for high-quality domain-specific machine translation models in many domains. We propose a framework that efficiently and effectively collects parallel sentences in a target domain from the web with the help of crowdworkers. With the collected parallel data, we can quickly adapt a machine translation model to the target domain. Our experiments show that the proposed method can collect target-domain parallel data over a few days at a reasonable cost. We tested it with five domains, and the domain-adapted model improved BLEU scores by up to +19.7 points, and by +7.8 points on average, compared to a general-purpose translation model.

A Simple and Strong Baseline for End-to-End Neural RST-style Discourse Parsing

Oct 15, 2022 · Naoki Kobayashi, Tsutomu Hirao, Hidetaka Kamigaito, Manabu Okumura, Masaaki Nagata

To promote and further develop RST-style discourse parsing models, we need a strong baseline that can be regarded as a reference for reporting reliable experimental results. This paper explores a strong baseline by integrating existing simple parsing strategies, top-down and bottom-up, with various transformer-based pre-trained language models. The experimental results obtained from two benchmark datasets demonstrate that the parsing performance strongly relies on the pre-trained language models rather than the parsing strategies. In particular, the bottom-up parser achieves large performance gains compared to the current best parser when employing DeBERTa. Our analysis of intra- and multi-sentential parsing and of nuclearity prediction further reveals that language models with a span-masking scheme especially boost the parsing performance.

Extending Word-Level Quality Estimation for Post-Editing Assistance

Sep 23, 2022 · Yizhen Wei, Takehito Utsuro, Masaaki Nagata

We define a novel concept called extended word alignment in order to improve post-editing assistance efficiency. Based on extended word alignment, we further propose a novel task called refined word-level QE that outputs refined tags and word-level correspondences. Compared to original word-level QE, the new task is able to directly point out editing operations, thus improving efficiency. To extract extended word alignment, we adopt a supervised method based on mBERT. To solve refined word-level QE, we first predict original QE tags by training a regression model for sequence tagging based on mBERT and XLM-R. Then, we refine the original word tags with extended word alignment. In addition, we extract source-gap correspondences and, at the same time, obtain gap tags. Experiments on two language pairs show the feasibility of our method and suggest directions for further improvement.

JParaCrawl v3.0: A Large-scale English-Japanese Parallel Corpus

Feb 28, 2022 · Makoto Morishita, Katsuki Chousa, Jun Suzuki, Masaaki Nagata

Most current machine translation models are mainly trained with parallel corpora, and their translation accuracy largely depends on the quality and quantity of the corpora. Although there are billions of parallel sentences for a few language pairs, effectively dealing with most language pairs is difficult due to a lack of publicly available parallel corpora. This paper creates a large parallel corpus for English-Japanese, a language pair for which only limited resources are available, compared to such resource-rich languages as English-German. It introduces a new web-based English-Japanese parallel corpus named JParaCrawl v3.0. Our new corpus contains more than 21 million unique parallel sentence pairs, which is more than twice as many as the previous JParaCrawl v2.0 corpus. Through experiments, we empirically show how our new corpus boosts the accuracy of machine translation models on various domains. The JParaCrawl v3.0 corpus will eventually be publicly available online for research purposes.

Bilingual Text Extraction as Reading Comprehension

Apr 29, 2020 · Katsuki Chousa, Masaaki Nagata, Masaaki Nishino

In this paper, we propose a method to extract bilingual texts automatically from noisy parallel corpora by framing the problem as a token-level span prediction, such as SQuAD-style Reading Comprehension. To extract a span of the target document that is a translation of a given source sentence (span), we use either QANet or multilingual BERT. QANet can be trained for a specific parallel corpus from scratch, while multilingual BERT can utilize pre-trained multilingual representations. For the span prediction method using QANet, we introduce a total optimization method using integer linear programming to achieve consistency in the predicted parallel spans. We conduct a parallel sentence extraction experiment using simulated noisy parallel corpora with two language pairs (En-Fr and En-Ja) and find that the proposed method using QANet achieves significantly better accuracy than a baseline method using two bi-directional RNN encoders, particularly for distant language pairs (En-Ja). We also conduct a sentence alignment experiment using En-Ja newspaper articles and find that the proposed method using multilingual BERT achieves significantly better accuracy than a baseline method using a bilingual dictionary and dynamic programming.

A Supervised Word Alignment Method based on Cross-Language Span Prediction using Multilingual BERT

Apr 29, 2020 · Masaaki Nagata, Chousa Katsuki, Masaaki Nishino

We present a novel supervised word alignment method based on cross-language span prediction. We first formalize a word alignment problem as a collection of independent predictions from a token in the source sentence to a span in the target sentence. As this is equivalent to a SQuAD v2.0 style question answering task, we then solve this problem by using multilingual BERT, which is fine-tuned on manually created gold word alignment data. We greatly improved the word alignment accuracy by adding the context of the token to the question. In experiments using five word alignment datasets among Chinese, Japanese, German, Romanian, French, and English, we show that the proposed method significantly outperformed previous supervised and unsupervised word alignment methods without using any bitexts for pretraining. For example, we achieved an F1 score of 86.7 for the Chinese-English data, which is 13.3 points higher than the previous state-of-the-art supervised methods.
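To make the span-prediction framing concrete, here is a minimal sketch of how one source token could be turned into a SQuAD v2.0 style record: the question is the source sentence with the token of interest marked (so its context is included), the context is the target sentence, and the answer is the aligned target span, or no answer for an unaligned token. The function name `make_alignment_example`, the `¶` marker default, and whitespace tokenization are illustrative assumptions, not a description of the authors' exact preprocessing.

```python
def make_alignment_example(src_tokens, tgt_tokens, src_index, tgt_span, marker="¶"):
    """Build one question-answering record for a single source token.

    tgt_span is an inclusive (start, end) pair of target token indices,
    or None when the source token has no alignment.
    """
    # Question: the full source sentence with the queried token marked.
    question_tokens = (
        src_tokens[:src_index]
        + [marker, src_tokens[src_index], marker]
        + src_tokens[src_index + 1:]
    )
    context = " ".join(tgt_tokens)
    if tgt_span is None:
        answers, is_impossible = [], True
    else:
        start, end = tgt_span
        text = " ".join(tgt_tokens[start:end + 1])
        # Character offset: each preceding token plus one joining space.
        answer_start = sum(len(tok) + 1 for tok in tgt_tokens[:start])
        answers, is_impossible = [{"text": text, "answer_start": answer_start}], False
    return {
        "question": " ".join(question_tokens),
        "context": context,
        "answers": answers,
        "is_impossible": is_impossible,
    }


# Toy sentence pair (hypothetical): align "cats" to "Katzen".
example = make_alignment_example(
    ["I", "like", "cats"], ["Ich", "mag", "Katzen"], src_index=2, tgt_span=(2, 2)
)
```

A full sentence pair would yield one such record per source token, each predicted independently.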

JParaCrawl: A Large Scale Web-Based English-Japanese Parallel Corpus

Nov 25, 2019 · Makoto Morishita, Jun Suzuki, Masaaki Nagata

Recent machine translation algorithms mainly rely on parallel corpora. However, since the availability of parallel corpora remains limited, only some resource-rich language pairs can benefit from them. In this paper, we constructed a parallel corpus for English-Japanese, for which the amount of publicly available parallel corpora is still limited. We constructed the corpus by broadly crawling the web and automatically aligning parallel sentences. Our collected corpus, called JParaCrawl, amassed over 8.7 million sentence pairs. We show that it covers broader domains and that an NMT model trained with it works as a good pre-trained model for fine-tuning on specific domains. The pre-training and fine-tuning approach achieved performance comparable to or better than a model trained from the initial state, and largely reduced the training cost. Additionally, we trained the model with an in-domain dataset and JParaCrawl to show how we achieved the best performance with them. JParaCrawl and the pre-trained models are freely available online for research purposes.

NTT's Machine Translation Systems for WMT19 Robustness Task

Jul 09, 2019 · Soichiro Murakami, Makoto Morishita, Tsutomu Hirao, Masaaki Nagata

This paper describes NTT's submission to the WMT19 robustness task. This task mainly focuses on translating noisy text (e.g., posts on Twitter), which presents different difficulties from typical translation tasks such as news. Our submission combined techniques including the use of a synthetic corpus, domain adaptation, and a placeholder mechanism, which significantly improved over the previous baseline. Experimental results revealed that the placeholder mechanism, which temporarily replaces non-standard tokens such as emojis and emoticons with special placeholder tokens during translation, improves translation accuracy even with noisy texts.
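The placeholder mechanism described above can be sketched as a mask, translate, unmask round trip. This is an illustrative sketch only: the regular expression, the `<PH0>` token format, and the function names are assumptions, and the translation step itself is omitted.

```python
import re

# Assumed pattern: a broad emoji/symbol range plus a few ASCII emoticons.
NONSTANDARD = re.compile(r"[\U0001F300-\U0001FAFF\u2600-\u27BF]|:-?[)(DP]")


def mask(text):
    """Replace each non-standard token with an indexed placeholder."""
    originals = []

    def substitute(match):
        originals.append(match.group(0))
        return f"<PH{len(originals) - 1}>"

    return NONSTANDARD.sub(substitute, text), originals


def unmask(text, originals):
    """Restore the original tokens in place of their placeholders."""
    for index, token in enumerate(originals):
        text = text.replace(f"<PH{index}>", token)
    return text


masked, originals = mask("great game :) 😀")
# A translation model would be applied to `masked` here (omitted in this sketch).
restored = unmask(masked, originals)
```

The sketch relies on the translation model copying the placeholder tokens through to its output unchanged, which is the property such a mechanism is designed around.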

Character n-gram Embeddings to Improve RNN Language Models

Jun 13, 2019 · Sho Takase, Jun Suzuki, Masaaki Nagata

This paper proposes a novel Recurrent Neural Network (RNN) language model that takes advantage of character information. We focus on character n-grams based on research in the field of word embedding construction (Wieting et al. 2016). Our proposed method constructs word embeddings from character n-gram embeddings and combines them with ordinary word embeddings. We demonstrate that the proposed method achieves the best perplexities on the language modeling datasets: Penn Treebank, WikiText-2, and WikiText-103. Moreover, we conduct experiments on application tasks: machine translation and headline generation. The experimental results indicate that our proposed method also positively affects these tasks.
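As a rough illustration of building a word representation from character n-grams and combining it with an ordinary word embedding, consider the sketch below. It uses plain summation as a stand-in for the paper's actual composition function, and the boundary markers `^` and `$`, the function names, and the toy vectors are all assumptions made for the example.

```python
def char_ngrams(word, n=3):
    """Return the character n-grams of a word padded with boundary markers."""
    padded = f"^{word}$"
    return [padded[i:i + n] for i in range(len(padded) - n + 1)]


def compose(word, word_vectors, ngram_vectors, n=3):
    """Add the summed n-gram vectors to the ordinary word vector.

    Summation is a simplification chosen for this sketch; n-grams missing
    from `ngram_vectors` are skipped.
    """
    combined = list(word_vectors[word])
    for gram in char_ngrams(word, n):
        vector = ngram_vectors.get(gram)
        if vector is not None:
            combined = [a + b for a, b in zip(combined, vector)]
    return combined


# Toy two-dimensional embeddings (hypothetical values).
word_vectors = {"cat": [1.0, 0.0]}
ngram_vectors = {"^ca": [0.5, 0.5], "cat": [0.0, 1.0], "at$": [0.5, 0.0]}
embedding = compose("cat", word_vectors, ngram_vectors)
```

In a real language model these vectors would be trainable parameters fed to the RNN input layer rather than fixed dictionaries.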
