VLPose: Bridging the Domain Gap in Pose Estimation with Language-Vision Tuning

Feb 22, 2024Jingyao Li, Pengguang Chen, Xuan Ju, Hong Xu, Jiaya Jia

Thanks to advances in deep learning techniques, Human Pose Estimation (HPE) has achieved significant progress in natural scenarios. However, these models perform poorly in artificial scenarios such as painting and sculpture due to the domain gap, constraining the development of virtual reality and augmented reality. With the growth of model size, retraining the whole model on both natural and artificial data is computationally expensive and inefficient. Our research aims to bridge the domain gap between natural and artificial scenarios with efficient tuning strategies. Leveraging the potential of language models, we enhance the adaptability of traditional pose estimation models across diverse scenarios with a novel framework called VLPose. VLPose leverages the synergy between language and vision to extend the generalization and robustness of pose estimation models beyond the traditional domains. Our approach has demonstrated improvements of 2.26% and 3.74% on HumanArt and MSCOCO, respectively, compared to state-of-the-art tuning strategies.

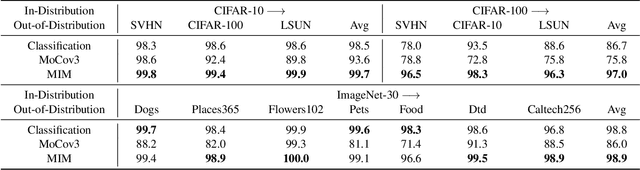

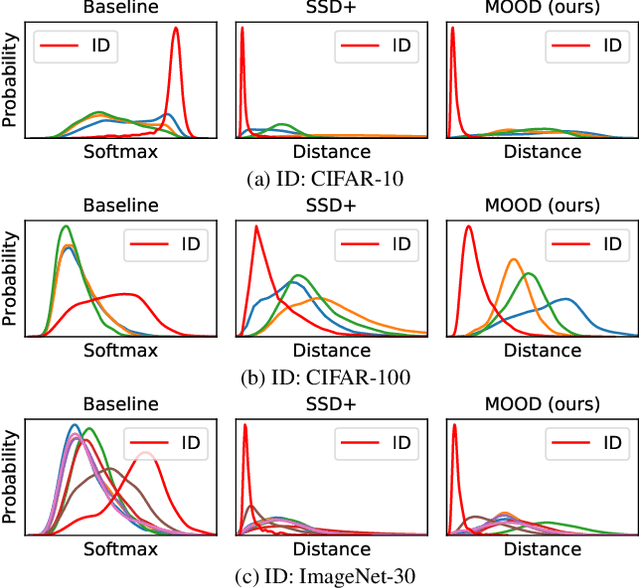

MOODv2: Masked Image Modeling for Out-of-Distribution Detection

Jan 05, 2024Jingyao Li, Pengguang Chen, Shaozuo Yu, Shu Liu, Jiaya Jia

The crux of effective out-of-distribution (OOD) detection lies in acquiring a robust in-distribution (ID) representation, distinct from OOD samples. While previous methods predominantly leaned on recognition-based techniques for this purpose, they often resulted in shortcut learning, lacking comprehensive representations. In our study, we conducted a comprehensive analysis, exploring distinct pretraining tasks and employing various OOD score functions. The results highlight that the feature representations pre-trained through reconstruction yield a notable enhancement and narrow the performance gap among various score functions. This suggests that even simple score functions can rival complex ones when leveraging reconstruction-based pretext tasks. Reconstruction-based pretext tasks adapt well to various score functions. As such, it holds promising potential for further expansion. Our OOD detection framework, MOODv2, employs the masked image modeling pretext task. Without bells and whistles, MOODv2 impressively enhances 14.30% AUROC to 95.68% on ImageNet and achieves 99.98% on CIFAR-10.

MoTCoder: Elevating Large Language Models with Modular of Thought for Challenging Programming Tasks

Jan 05, 2024Jingyao Li, Pengguang Chen, Jiaya Jia

Large Language Models (LLMs) have showcased impressive capabilities in handling straightforward programming tasks. However, their performance tends to falter when confronted with more challenging programming problems. We observe that conventional models often generate solutions as monolithic code blocks, restricting their effectiveness in tackling intricate questions. To overcome this limitation, we present Modular-of-Thought Coder (MoTCoder). We introduce a pioneering framework for MoT instruction tuning, designed to promote the decomposition of tasks into logical sub-tasks and sub-modules. Our investigations reveal that, through the cultivation and utilization of sub-modules, MoTCoder significantly improves both the modularity and correctness of the generated solutions, leading to substantial relative pass@1 improvements of 12.9% on APPS and 9.43% on CodeContests. Our codes are available at https://github.com/dvlab-research/MoTCoder.

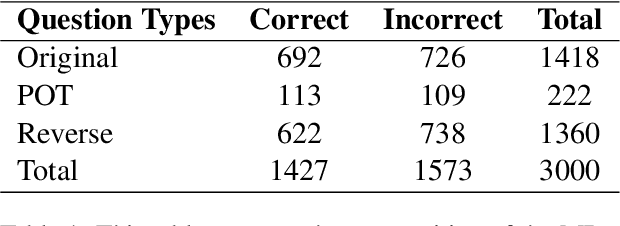

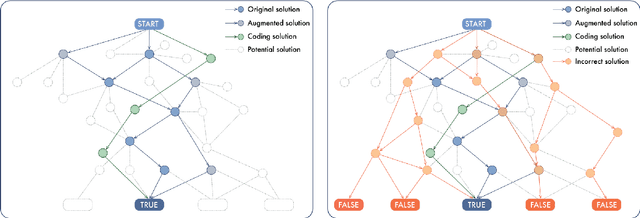

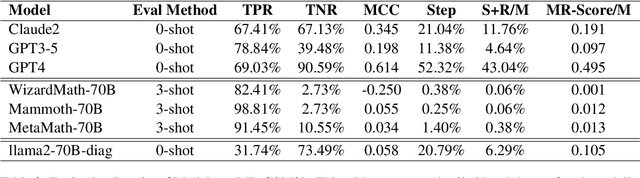

Challenge LLMs to Reason About Reasoning: A Benchmark to Unveil Cognitive Depth in LLMs

Dec 28, 2023Zhongshen Zeng, Pengguang Chen, Haiyun Jiang, Jiaya Jia

In this work, we introduce a novel evaluation paradigm for Large Language Models, one that challenges them to engage in meta-reasoning. This approach addresses critical shortcomings in existing math problem-solving benchmarks, traditionally used to evaluate the cognitive capabilities of agents. Our paradigm shifts the focus from result-oriented assessments, which often overlook the reasoning process, to a more holistic evaluation that effectively differentiates the cognitive capabilities among models. For example, in our benchmark, GPT-4 demonstrates a performance ten times more accurate than GPT3-5. The significance of this new paradigm lies in its ability to reveal potential cognitive deficiencies in LLMs that current benchmarks, such as GSM8K, fail to uncover due to their saturation and lack of effective differentiation among varying reasoning abilities. Our comprehensive analysis includes several state-of-the-art math models from both open-source and closed-source communities, uncovering fundamental deficiencies in their training and evaluation approaches. This paper not only advocates for a paradigm shift in the assessment of LLMs but also contributes to the ongoing discourse on the trajectory towards Artificial General Intelligence (AGI). By promoting the adoption of meta-reasoning evaluation methods similar to ours, we aim to facilitate a more accurate assessment of the true cognitive abilities of LLMs.

BAL: Balancing Diversity and Novelty for Active Learning

Dec 26, 2023Jingyao Li, Pengguang Chen, Shaozuo Yu, Shu Liu, Jiaya Jia

The objective of Active Learning is to strategically label a subset of the dataset to maximize performance within a predetermined labeling budget. In this study, we harness features acquired through self-supervised learning. We introduce a straightforward yet potent metric, Cluster Distance Difference, to identify diverse data. Subsequently, we introduce a novel framework, Balancing Active Learning (BAL), which constructs adaptive sub-pools to balance diverse and uncertain data. Our approach outperforms all established active learning methods on widely recognized benchmarks by 1.20%. Moreover, we assess the efficacy of our proposed framework under extended settings, encompassing both larger and smaller labeling budgets. Experimental results demonstrate that, when labeling 80% of the samples, the performance of the current SOTA method declines by 0.74%, whereas our proposed BAL achieves performance comparable to the full dataset. Codes are available at https://github.com/JulietLJY/BAL.

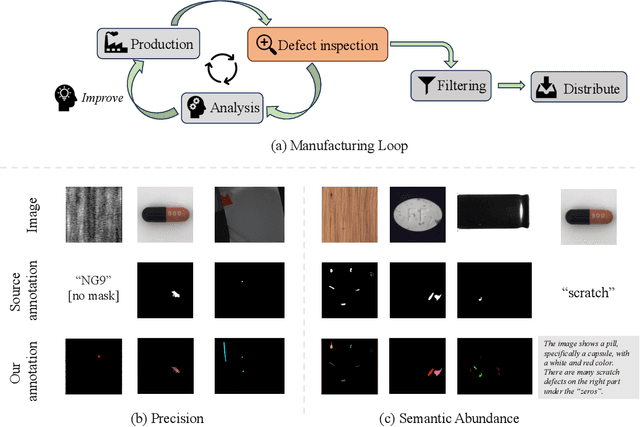

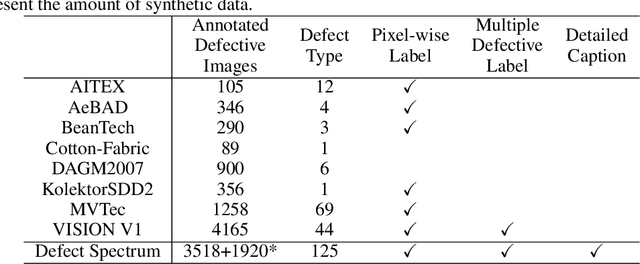

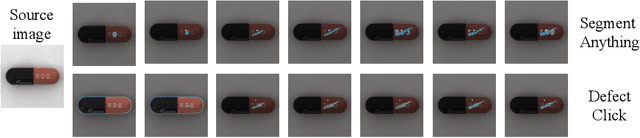

Defect Spectrum: A Granular Look of Large-Scale Defect Datasets with Rich Semantics

Nov 06, 2023Shuai Yang, Zhifei Chen, Pengguang Chen, Xi Fang, Shu Liu, Yingcong Chen

Defect inspection is paramount within the closed-loop manufacturing system. However, existing datasets for defect inspection often lack precision and semantic granularity required for practical applications. In this paper, we introduce the Defect Spectrum, a comprehensive benchmark that offers precise, semantic-abundant, and large-scale annotations for a wide range of industrial defects. Building on four key industrial benchmarks, our dataset refines existing annotations and introduces rich semantic details, distinguishing multiple defect types within a single image. Furthermore, we introduce Defect-Gen, a two-stage diffusion-based generator designed to create high-quality and diverse defective images, even when working with limited datasets. The synthetic images generated by Defect-Gen significantly enhance the efficacy of defect inspection models. Overall, The Defect Spectrum dataset demonstrates its potential in defect inspection research, offering a solid platform for testing and refining advanced models.

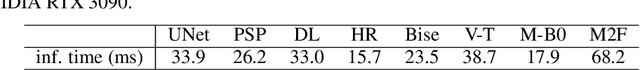

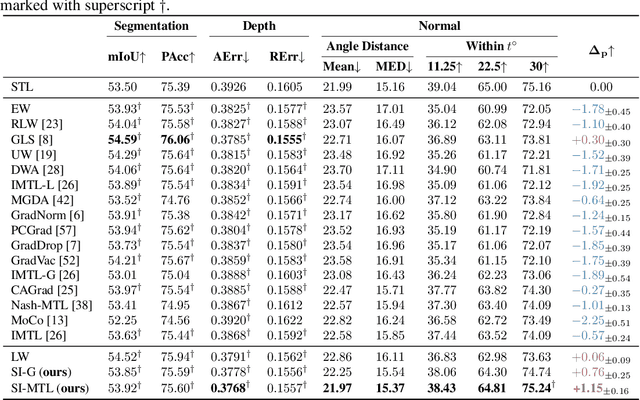

A Scale-Invariant Task Balancing Approach for Multi-Task Learning

Aug 23, 2023Baijiong Lin, Weisen Jiang, Feiyang Ye, Yu Zhang, Pengguang Chen, Ying-Cong Chen, Shu Liu

Multi-task learning (MTL), a learning paradigm to learn multiple related tasks simultaneously, has achieved great success in various fields. However, task-balancing remains a significant challenge in MTL, with the disparity in loss/gradient scales often leading to performance compromises. In this paper, we propose a Scale-Invariant Multi-Task Learning (SI-MTL) method to alleviate the task-balancing problem from both loss and gradient perspectives. Specifically, SI-MTL contains a logarithm transformation which is performed on all task losses to ensure scale-invariant at the loss level, and a gradient balancing method, SI-G, which normalizes all task gradients to the same magnitude as the maximum gradient norm. Extensive experiments conducted on several benchmark datasets consistently demonstrate the effectiveness of SI-G and the state-of-the-art performance of SI-MTL.

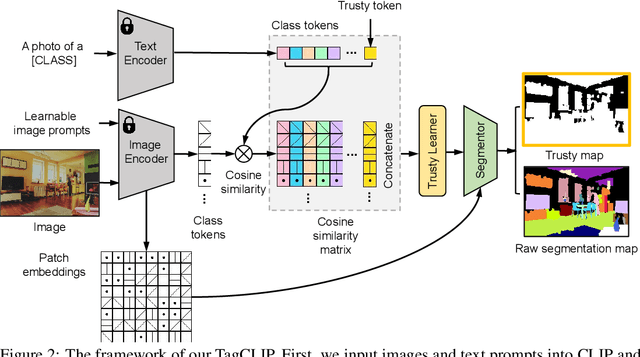

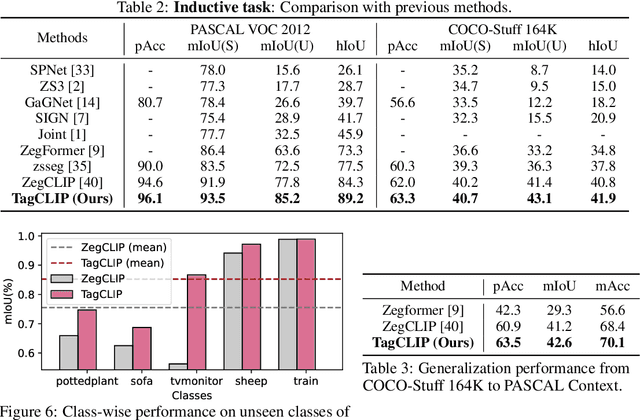

TagCLIP: Improving Discrimination Ability of Open-Vocabulary Semantic Segmentation

Apr 15, 2023Jingyao Li, Pengguang Chen, Shengju Qian, Jiaya Jia

Recent success of Contrastive Language-Image Pre-training~(CLIP) has shown great promise in pixel-level open-vocabulary learning tasks. A general paradigm utilizes CLIP's text and patch embeddings to generate semantic masks. However, existing models easily misidentify input pixels from unseen classes, thus confusing novel classes with semantically-similar ones. In our work, we disentangle the ill-posed optimization problem into two parallel processes: one performs semantic matching individually, and the other judges reliability for improving discrimination ability. Motivated by special tokens in language modeling that represents sentence-level embeddings, we design a trusty token that decouples the known and novel category prediction tendency. With almost no extra overhead, we upgrade the pixel-level generalization capacity of existing models effectively. Our TagCLIP (CLIP adapting with Trusty-guidance) boosts the IoU of unseen classes by 7.4% and 1.7% on PASCAL VOC 2012 and COCO-Stuff 164K.

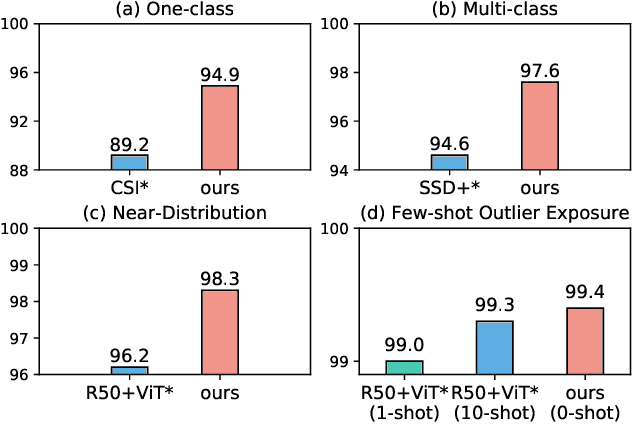

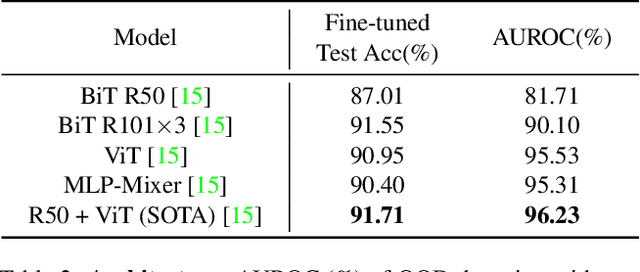

Rethinking Out-of-distribution (OOD) Detection: Masked Image Modeling is All You Need

Feb 06, 2023Jingyao Li, Pengguang Chen, Shaozuo Yu, Zexin He, Shu Liu, Jiaya Jia

The core of out-of-distribution (OOD) detection is to learn the in-distribution (ID) representation, which is distinguishable from OOD samples. Previous work applied recognition-based methods to learn the ID features, which tend to learn shortcuts instead of comprehensive representations. In this work, we find surprisingly that simply using reconstruction-based methods could boost the performance of OOD detection significantly. We deeply explore the main contributors of OOD detection and find that reconstruction-based pretext tasks have the potential to provide a generally applicable and efficacious prior, which benefits the model in learning intrinsic data distributions of the ID dataset. Specifically, we take Masked Image Modeling as a pretext task for our OOD detection framework (MOOD). Without bells and whistles, MOOD outperforms previous SOTA of one-class OOD detection by 5.7%, multi-class OOD detection by 3.0%, and near-distribution OOD detection by 2.1%. It even defeats the 10-shot-per-class outlier exposure OOD detection, although we do not include any OOD samples for our detection

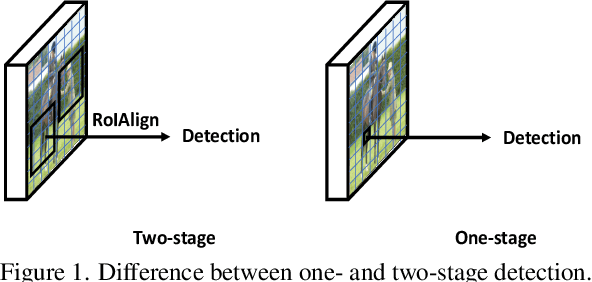

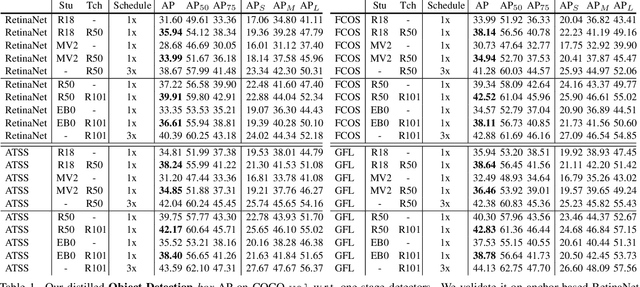

SEA: Bridging the Gap Between One- and Two-stage Detector Distillation via SEmantic-aware Alignment

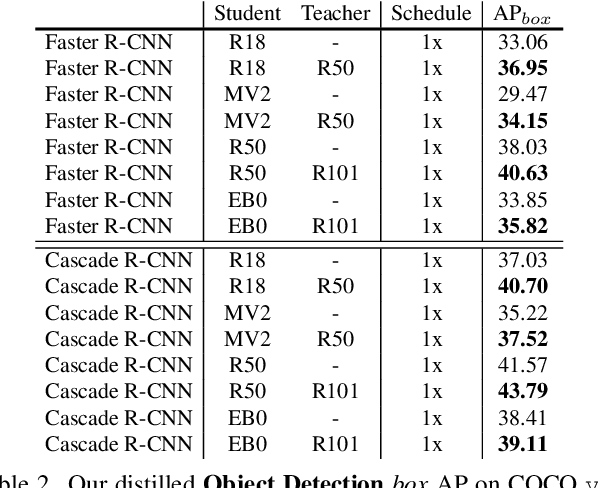

Mar 02, 2022Yixin Chen, Zhuotao Tian, Pengguang Chen, Shu Liu, Jiaya Jia

We revisit the one- and two-stage detector distillation tasks and present a simple and efficient semantic-aware framework to fill the gap between them. We address the pixel-level imbalance problem by designing the category anchor to produce a representative pattern for each category and regularize the topological distance between pixels and category anchors to further tighten their semantic bonds. We name our method SEA (SEmantic-aware Alignment) distillation given the nature of abstracting dense fine-grained information by semantic reliance to well facilitate distillation efficacy. SEA is well adapted to either detection pipeline and achieves new state-of-the-art results on the challenging COCO object detection task on both one- and two-stage detectors. Its superior performance on instance segmentation further manifests the generalization ability. Both 2x-distilled RetinaNet and FCOS with ResNet50-FPN outperform their corresponding 3x ResNet101-FPN teacher, arriving 40.64 and 43.06 AP, respectively. Code will be made publicly available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge