Crossing the Reality Gap: a Short Introduction to the Transferability Approach

Jul 07, 2013 · Jean-Baptiste Mouret, Sylvain Koos, Stéphane Doncieux

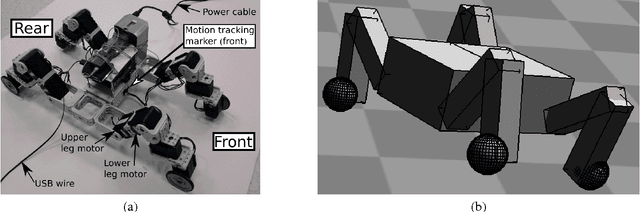

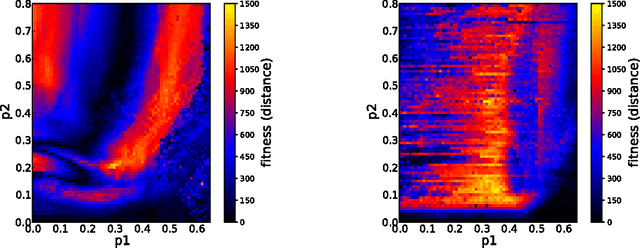

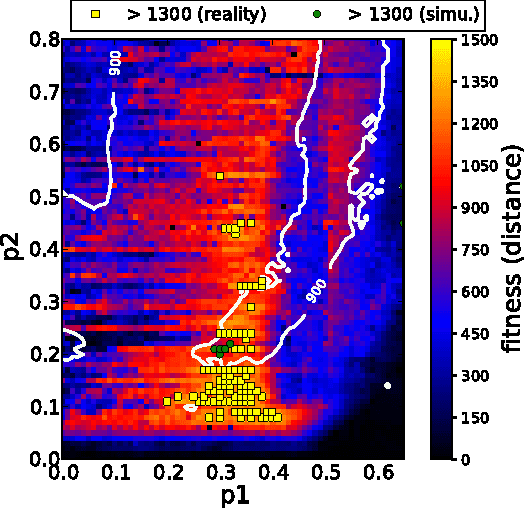

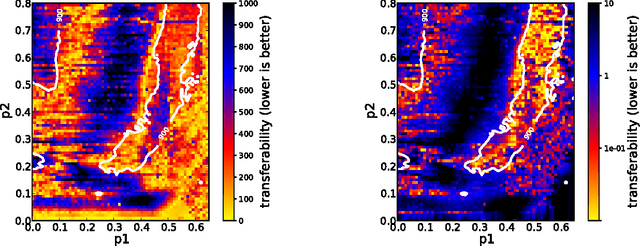

In robotics, gradient-free optimization algorithms (e.g. evolutionary algorithms) are often used only in simulation because they require the evaluation of many candidate solutions. Nevertheless, solutions obtained in simulation often do not work well on the real device. The transferability approach aims to cross this gap between simulation and reality by *making the optimization algorithm aware of the limits of the simulation*. In the present paper, we first describe the transferability function, which maps solution descriptors to a score representing how well the simulator matches reality. We then show that this function can be learned using a regression algorithm and a few experiments on the real device. Our results are supported by an extensive study of the reality gap for a simple quadruped robot whose control parameters are optimized. In particular, we mapped the whole search space in reality and in simulation to understand the differences between the fitness landscapes.
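The abstract does not say which regression model is used or exactly how the transferability score is combined with fitness. The Python sketch below only illustrates the idea on a toy problem, and everything in it is an assumption: the two-parameter "controller", the hypothetical `simulated_fitness` and `real_fitness` functions, the nearest-neighbour regressor, and the rule of keeping only candidates whose predicted transferability clears a threshold. It is not the authors' implementation. A threshold filter is simply the easiest way to combine the two scores; treating transferability as a second optimization objective is another option.

```python
import math
import random


def simulated_fitness(x):
    # Hypothetical simulator: fitness grows with both control parameters.
    return x[0] + x[1]


def real_fitness(x):
    # Hypothetical "reality": controllers with x[1] > 0.5 exploit a simulator
    # artifact and lose 2 units of fitness on the real robot.
    return simulated_fitness(x) - (2.0 if x[1] > 0.5 else 0.0)


def transfer_score(x):
    # Transferability of x: minus the simulation/reality gap (0 = perfect match).
    # Calling real_fitness stands in for one experiment on the real device.
    return -abs(simulated_fitness(x) - real_fitness(x))


def knn_regressor(samples, k=1):
    # samples: list of (descriptor, score) pairs measured on the real device.
    # Returns a predictor that averages the scores of the k nearest descriptors.
    def predict(x):
        nearest = sorted(samples, key=lambda s: math.dist(s[0], x))[:k]
        return sum(score for _, score in nearest) / len(nearest)
    return predict


def optimize(n_candidates=200, threshold=-0.5, seed=0):
    rng = random.Random(seed)
    candidates = [(rng.random(), rng.random()) for _ in range(n_candidates)]

    # A few real experiments (4 here) used to learn the transferability function.
    real_tests = [(a, b) for a in (0.25, 0.75) for b in (0.25, 0.75)]
    predict = knn_regressor([(x, transfer_score(x)) for x in real_tests], k=1)

    # Choice that ignores the reality gap: best simulated fitness.
    naive = max(candidates, key=simulated_fitness)
    # Transferability-aware choice: best simulated fitness among candidates
    # whose predicted transferability clears the threshold.
    feasible = [x for x in candidates if predict(x) >= threshold]
    aware = max(feasible, key=simulated_fitness)
    return naive, aware


naive, aware = optimize()
print("naive:", round(real_fitness(naive), 2), "aware:", round(real_fitness(aware), 2))
```

Only four evaluations of `real_fitness` go into the learned model. The extra `real_fitness` calls in the final `print` exist only so the two choices can be compared after the fact.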

Fast Damage Recovery in Robotics with the T-Resilience Algorithm

Feb 02, 2013 · Sylvain Koos, Antoine Cully, Jean-Baptiste Mouret

Damage recovery is critical for autonomous robots that need to operate for a long time without assistance. Most current methods are complex and costly because they require anticipating each potential damage in order to have a contingency plan ready. As an alternative, we introduce the T-Resilience algorithm, which allows robots to quickly and autonomously discover compensatory behaviors in unanticipated situations. This algorithm equips the robot with a self-model and discovers new behaviors by learning to avoid those that perform differently in the self-model and in reality. Our algorithm thus does not identify the damaged parts; instead, it implicitly searches for efficient behaviors that do not use them. We evaluate the T-Resilience algorithm on a hexapod robot that needs to adapt to leg removal, broken legs and motor failures, and we compare it to stochastic local search, policy gradient and the self-modeling algorithm proposed by Bongard et al. The robot's behavior is assessed on board using an RGB-D sensor and a SLAM algorithm. Using only 25 tests on the robot and an overall running time of 20 minutes, T-Resilience consistently leads to substantially better results than the other approaches.
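The Python sketch below loosely follows the loop described above. It rests on several assumptions: a toy two-parameter behavior space, a hypothetical `self_model_fitness` and damage rule, and a radius-limited nearest-neighbour estimate of the gap between self-model and reality, standing in for whatever learner the authors actually use. The budget of 25 real tests matches the figure quoted in the abstract. All names, the radius and the gap threshold are illustrative.

```python
import math
import random


def self_model_fitness(x):
    # Hypothetical self-model of the intact robot: both parameters help.
    return x[0] + x[1]


def damaged_robot_fitness(x):
    # Hypothetical damaged robot: behaviours with x[1] > 0.5 rely on a broken
    # leg and lose 2 units of fitness. The search never reads this rule;
    # it only observes the outcome of each test.
    return self_model_fitness(x) - (2.0 if x[1] > 0.5 else 0.0)


def predicted_gap(x, tested, radius):
    # Nearest-neighbour estimate of the self-model/reality gap. Behaviours with
    # no tested neighbour within `radius` are optimistically assumed to match.
    near = [(math.dist(x, t), gap) for t, gap in tested]
    near = [pair for pair in near if pair[0] < radius]
    return min(near)[1] if near else 0.0


def recover(budget=25, n_candidates=200, radius=0.25, max_gap=0.5, seed=1):
    rng = random.Random(seed)
    pool = [(rng.random(), rng.random()) for _ in range(n_candidates)]
    tested = []  # (behaviour, observed gap) pairs
    best, best_real = None, -math.inf
    for _ in range(budget):
        # Prefer the behaviour that looks best in the self-model among those
        # not predicted to behave differently on the real robot.
        usable = [x for x in pool if predicted_gap(x, tested, radius) <= max_gap]
        if not usable:
            break
        x = max(usable, key=self_model_fitness)
        pool.remove(x)
        real = damaged_robot_fitness(x)  # one test on the damaged robot
        tested.append((x, abs(self_model_fitness(x) - real)))
        if real > best_real:
            best, best_real = x, real
    return best, best_real, len(tested)


best, best_real, n_tests = recover()
print(best, best_real, n_tests)
```

The sketch never identifies the faulty leg. It only learns, from test outcomes, which regions of the behavior space disagree with the self-model, and it steers the search away from them.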
