D-LGP: Dynamic Logic-Geometric Program for Combined Task and Motion Planning

Dec 05, 2023 · Teng Xue, Amirreza Razmjoo, Sylvain Calinon

Many real-world sequential manipulation tasks involve a combination of discrete symbolic search and continuous motion planning, collectively known as combined task and motion planning (TAMP). However, prevailing methods often struggle with the computational burden and intricate combinatorial challenges stemming from the multitude of action skeletons. To address this, we propose Dynamic Logic-Geometric Program (D-LGP), a novel approach integrating Dynamic Tree Search and global optimization for efficient hybrid planning. Through empirical evaluation on three benchmarks, we demonstrate the efficacy of our approach, showcasing superior performance in comparison to state-of-the-art techniques. We validate our approach through simulation and demonstrate its capability for online replanning under uncertainty and external disturbances in the real world.

Projection-based first-order constrained optimization solver for robotics

Jun 30, 2023 · Hakan Girgin, Tobias Löw, Teng Xue, Sylvain Calinon

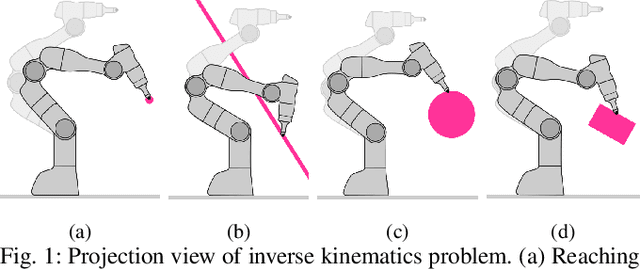

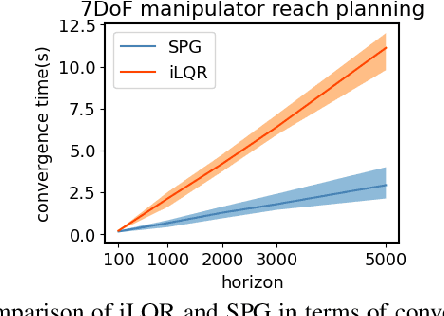

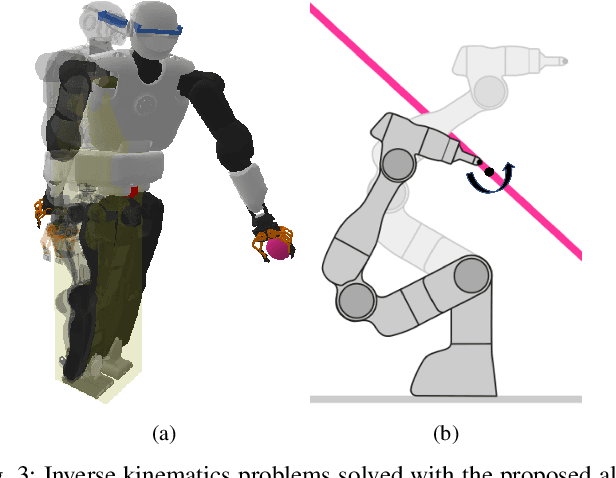

Robot programming tools ranging from inverse kinematics (IK) to model predictive control (MPC) are most often described as constrained optimization problems. Although many second-order solvers are commercially available, the robotics literature has recently focused on efficient implementations of, and improvements over, these solvers for real-time robotic applications. However, these implementations most often remain problem-specific, are not easy to access or implement, or do not exploit the geometric aspects of robotics problems. In this work, we propose to solve these problems using a fast, easy-to-implement first-order method that fully exploits the geometric constraints via Euclidean projections, called Augmented Lagrangian Spectral Projected Gradient Descent (ALSPG). We show that (1) using projections instead of full constraints and gradients improves the performance of the solver and (2) ALSPG stays competitive with standard second-order methods such as iLQR in the unconstrained case. We showcase these results with IK and motion planning problems on simulated examples and with an MPC problem on a 7-axis manipulator experiment.
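The idea of handling constraints through Euclidean projections can be illustrated with a minimal spectral projected gradient loop. This is only a sketch under stated assumptions, not the authors' ALSPG solver: it omits the augmented Lagrangian part, uses a simple monotone backtracking line search, and the names `project_box` and `spg` are hypothetical.

```python
import numpy as np

def project_box(x, lo, hi):
    """Euclidean projection onto the box {x : lo <= x <= hi}."""
    return np.clip(x, lo, hi)

def spg(f, grad, project, x0, iters=100, tol=1e-8):
    """Projected gradient descent with a Barzilai-Borwein (spectral) step
    and a monotone Armijo backtracking line search (illustrative only)."""
    x = project(np.asarray(x0, dtype=float))
    g = grad(x)
    alpha = 1.0  # initial spectral step length
    for _ in range(iters):
        d = project(x - alpha * g) - x  # feasible descent direction
        if np.linalg.norm(d) < tol:
            break
        fx, gd, t = f(x), g @ d, 1.0
        # Backtrack until the Armijo sufficient-decrease condition holds.
        while f(x + t * d) > fx + 1e-4 * t * gd and t > 1e-12:
            t *= 0.5
        x_new = x + t * d
        g_new = grad(x_new)
        s, y = x_new - x, g_new - g
        sy = s @ y
        # Barzilai-Borwein step, safeguarded to a bounded interval.
        alpha = (s @ s) / sy if sy > 1e-12 else 1.0
        alpha = min(max(alpha, 1e-10), 1e10)
        x, g = x_new, g_new
    return x

# Toy problem: closest point to `target` inside the unit box.
target = np.array([2.0, -1.0, 0.5])
x_star = spg(
    f=lambda x: 0.5 * np.sum((x - target) ** 2),
    grad=lambda x: x - target,
    project=lambda x: project_box(x, 0.0, 1.0),
    x0=np.zeros(3),
)
```

The constraint never appears as an explicit inequality with its own gradient; the solver only needs a function that maps a point to the nearest feasible point, which is cheap for simple sets such as boxes.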

Contact Optimization with Learning from Demonstration: Application in Long-term Non-prehensile Planar Manipulation

May 19, 2023 · Teng Xue, Sylvain Calinon

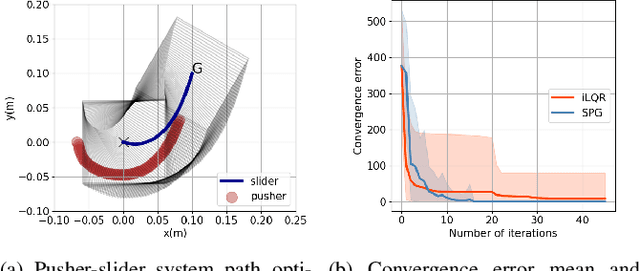

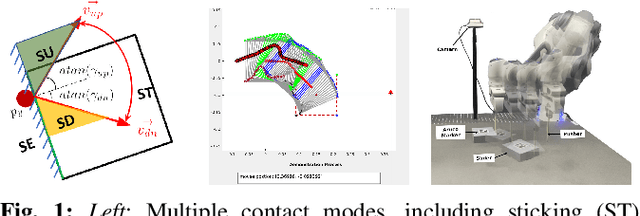

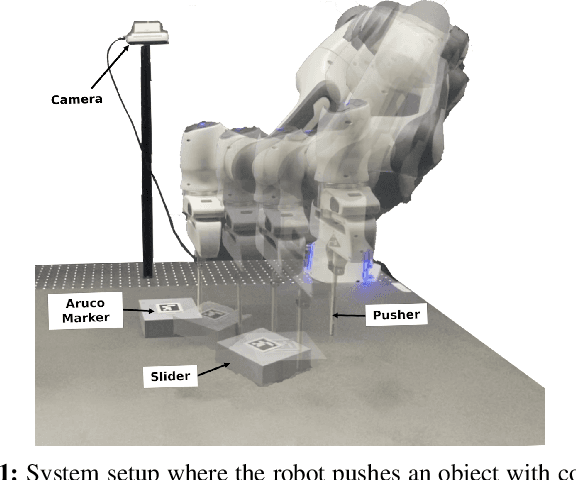

Long-term non-prehensile planar manipulation is a challenging task for planning and control, requiring determination of both continuous and discrete contact configurations, such as contact points and modes. This makes contact optimization non-convex and hybrid. To overcome these difficulties, we propose a novel approach that incorporates human demonstrations into trajectory optimization. We show that our approach effectively handles the hybrid combinatorial nature of the problem, mitigates the issues with local minima present in current state-of-the-art solvers, and requires only a small number of demonstrations while delivering robust generalization performance. We validate our results in simulation and demonstrate the approach's applicability on a pusher-slider system with a real Franka Emika robot.

Demonstration-guided Optimal Control for Long-term Non-prehensile Planar Manipulation

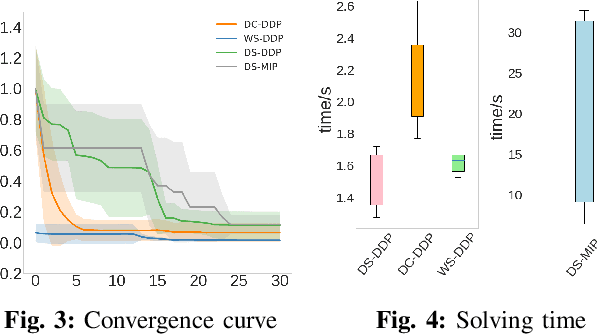

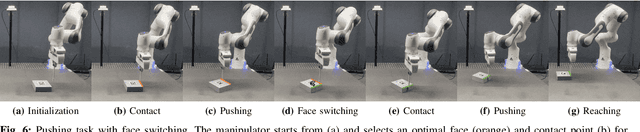

Dec 24, 2022 · Teng Xue, Hakan Girgin, Teguh Santoso Lembono, Sylvain Calinon

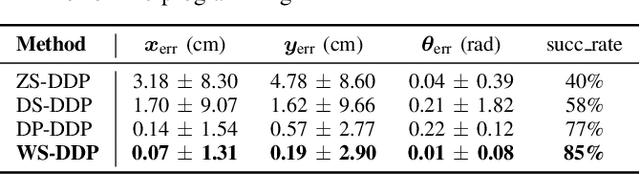

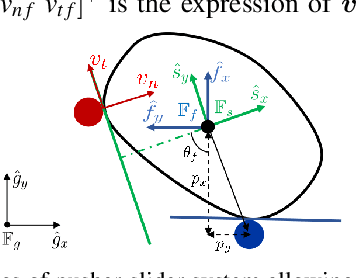

Long-term non-prehensile planar manipulation is a challenging task for robot planning and feedback control. It is characterized by underactuation, hybrid control, and contact uncertainty. One main difficulty is to determine contact points and directions, which involves joint logic and geometrical reasoning in the modes of the dynamics model. To tackle this issue, we propose a demonstration-guided hierarchical optimization framework to achieve offline task and motion planning (TAMP). Our work extends the formulation of the dynamics model of the pusher-slider system to include separation mode with face switching cases, and solves a warm-started TAMP problem by exploiting human demonstrations. We show that our approach copes well with the local minima problems currently present in state-of-the-art solvers and determines a valid solution to the task. We validate our results in simulation and demonstrate the approach's applicability on a pusher-slider system with a real Franka Emika robot in the presence of external disturbances.

Object-Agnostic Suction Grasp Affordance Detection in Dense Cluster Using Self-Supervised Learning

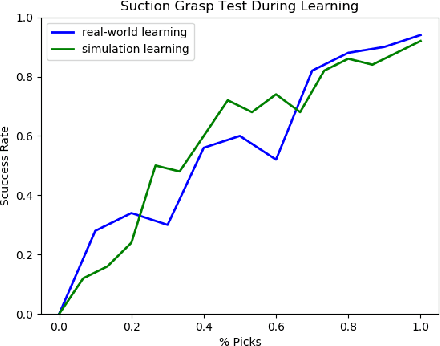

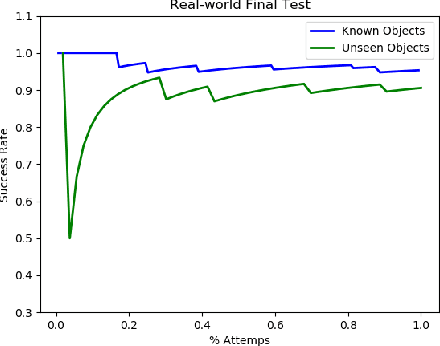

Jun 07, 2019 · Mingshuo Han, Wenhai Liu, Zhenyu Pan, Teng Xue, Quanquan Shao, Jin Ma, Weiming Wang

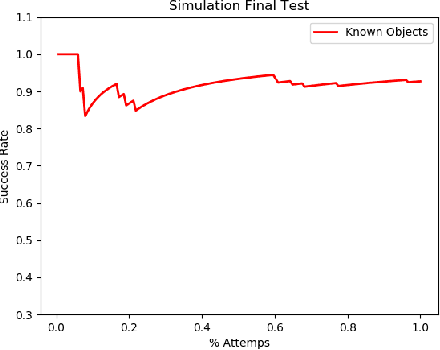

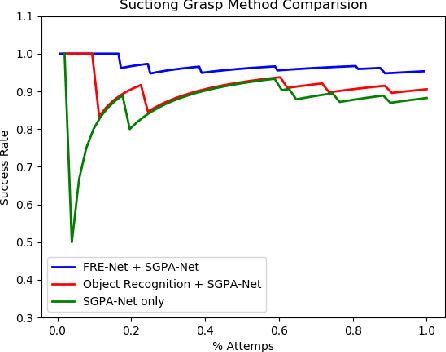

In this paper, we study the grasping problem in dense clusters, a challenging task in warehouse logistics scenarios. By introducing a two-step robust suction affordance detection method, we focus on using a vacuum suction pad to clear a box filled with seen and unseen objects. Two CNN-based neural networks are proposed. A Fast Region Estimation Network (FRE-Net) predicts which region contains pickable objects, and a Suction Grasp Point Affordance network (SGPA-Net) determines which point in that region is pickable. To enable these two networks, we design a self-supervised learning pipeline to accumulate data and to train and test our method. In both virtual and real environments, within 1500 picks (~5 hours), we reach a picking accuracy of 95% for known objects and 90% for unseen objects with similar geometric features.
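The two-step structure described above (first choose a region, then choose a point inside it) can be sketched as a coarse-to-fine selection. This is an illustrative assumption, not the paper's implementation: the two networks are replaced by precomputed score arrays, and the function `pick_point` and its grid layout are hypothetical.

```python
import numpy as np

def pick_point(region_scores, pixel_scores):
    """Two-step selection: best region first, then best pixel inside it.

    region_scores: (R, C) array, one score per grid cell
                   (stand-in for a region-estimation network).
    pixel_scores:  (H, W) array of per-pixel scores
                   (stand-in for a point-affordance network);
                   H and W are assumed to be multiples of R and C.
    Returns (row, col) of the chosen pixel in full-image coordinates.
    """
    R, C = region_scores.shape
    H, W = pixel_scores.shape
    h, w = H // R, W // C  # size of one grid cell in pixels
    # Step 1: pick the grid cell with the highest region score.
    r, c = np.unravel_index(np.argmax(region_scores), region_scores.shape)
    # Step 2: pick the highest-scoring pixel inside that cell only.
    patch = pixel_scores[r * h:(r + 1) * h, c * w:(c + 1) * w]
    pr, pc = np.unravel_index(np.argmax(patch), patch.shape)
    return int(r * h + pr), int(c * w + pc)

# Toy example: a 2x2 region grid over a 4x4 image.
region_scores = np.array([[0.1, 0.9],
                          [0.2, 0.3]])
pixel_scores = np.zeros((4, 4))
pixel_scores[1, 3] = 0.8  # best pixel inside the top-right region
pixel_scores[3, 0] = 1.0  # higher score, but outside the chosen region
point = pick_point(region_scores, pixel_scores)
```

In this toy case the globally highest pixel score is ignored because it lies outside the selected region, which shows how the first stage narrows the search for the second.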

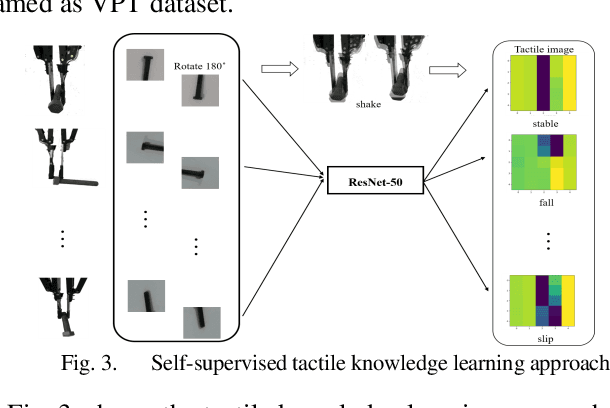

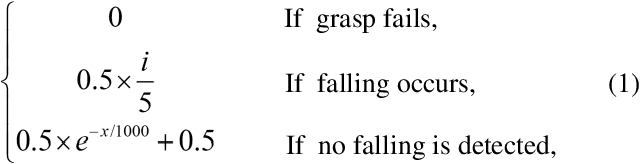

Bayesian Grasp: Robotic visual stable grasp based on prior tactile knowledge

May 30, 2019 · Teng Xue, Wenhai Liu, Mingshuo Han, Zhenyu Pan, Jin Ma, Quanquan Shao, Weiming Wang

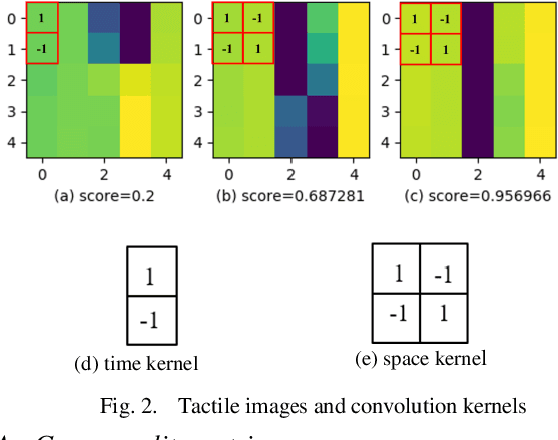

Robotic grasp detection is a fundamental capability for intelligent manipulation in unstructured environments. Previous work mainly employed visual and tactile fusion to achieve stable grasps, but the whole process depends heavily on regrasping, which wastes much time on regulation and evaluation. We propose a novel way to improve robotic grasping: by using learned tactile knowledge, a robot can achieve a stable grasp from an image. First, we construct a prior tactile knowledge learning framework with a novel grasp quality metric, which is determined by measuring the grasp's resistance to external perturbations. Second, we propose a multi-phase Bayesian Grasp architecture to generate stable grasp configurations from a single RGB image based on prior tactile knowledge. Results show that this framework can classify the outcome of grasps with an average accuracy of 86% on known objects and 79% on novel objects. The prior tactile knowledge improves the success rate by 55% over traditional vision-based strategies.
