AutoSAT: Automatically Optimize SAT Solvers via Large Language Models

Feb 16, 2024Yiwen Sun, Xianyin Zhang, Shiyu Huang, Shaowei Cai, Bing-Zhen Zhang, Ke Wei

Heuristics are crucial in SAT solvers, while no heuristic rules are suitable for all problem instances. Therefore, it typically requires to refine specific solvers for specific problem instances. In this context, we present AutoSAT, a novel framework for automatically optimizing heuristics in SAT solvers. AutoSAT is based on Large Large Models (LLMs) which is able to autonomously generate code, conduct evaluation, then utilize the feedback to further optimize heuristics, thereby reducing human intervention and enhancing solver capabilities. AutoSAT operates on a plug-and-play basis, eliminating the need for extensive preliminary setup and model training, and fosters a Chain of Thought collaborative process with fault-tolerance, ensuring robust heuristic optimization. Extensive experiments on a Conflict-Driven Clause Learning (CDCL) solver demonstrates the overall superior performance of AutoSAT, especially in solving some specific SAT problem instances.

OpenRL: A Unified Reinforcement Learning Framework

Dec 20, 2023Shiyu Huang, Wentse Chen, Yiwen Sun, Fuqing Bie, Wei-Wei Tu

We present OpenRL, an advanced reinforcement learning (RL) framework designed to accommodate a diverse array of tasks, from single-agent challenges to complex multi-agent systems. OpenRL's robust support for self-play training empowers agents to develop advanced strategies in competitive settings. Notably, OpenRL integrates Natural Language Processing (NLP) with RL, enabling researchers to address a combination of RL training and language-centric tasks effectively. Leveraging PyTorch's robust capabilities, OpenRL exemplifies modularity and a user-centric approach. It offers a universal interface that simplifies the user experience for beginners while maintaining the flexibility experts require for innovation and algorithm development. This equilibrium enhances the framework's practicality, adaptability, and scalability, establishing a new standard in RL research. To delve into OpenRL's features, we invite researchers and enthusiasts to explore our GitHub repository at https://github.com/OpenRL-Lab/openrl and access our comprehensive documentation at https://openrl-docs.readthedocs.io.

Learning Graph-Enhanced Commander-Executor for Multi-Agent Navigation

Feb 08, 2023Xinyi Yang, Shiyu Huang, Yiwen Sun, Yuxiang Yang, Chao Yu, Wei-Wei Tu, Huazhong Yang, Yu Wang

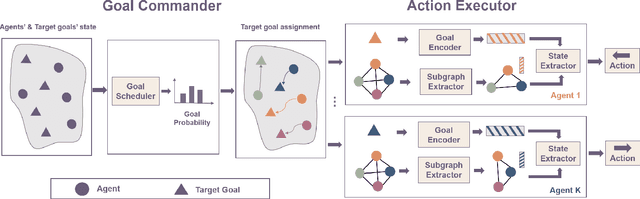

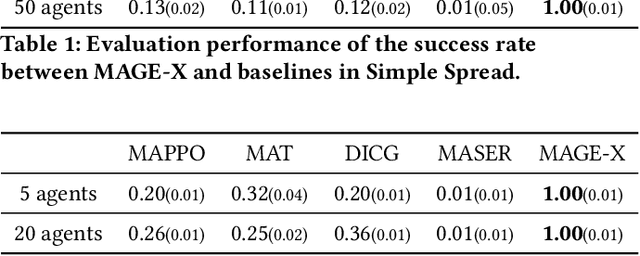

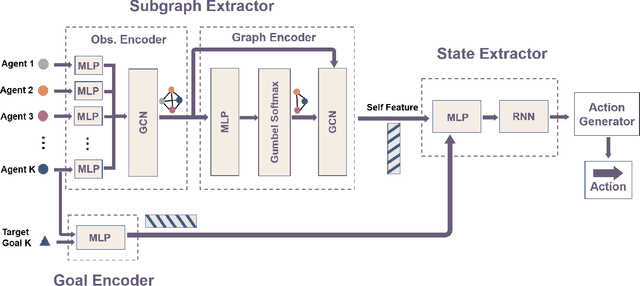

This paper investigates the multi-agent navigation problem, which requires multiple agents to reach the target goals in a limited time. Multi-agent reinforcement learning (MARL) has shown promising results for solving this issue. However, it is inefficient for MARL to directly explore the (nearly) optimal policy in the large search space, which is exacerbated as the agent number increases (e.g., 10+ agents) or the environment is more complex (e.g., 3D simulator). Goal-conditioned hierarchical reinforcement learning (HRL) provides a promising direction to tackle this challenge by introducing a hierarchical structure to decompose the search space, where the low-level policy predicts primitive actions in the guidance of the goals derived from the high-level policy. In this paper, we propose Multi-Agent Graph-Enhanced Commander-Executor (MAGE-X), a graph-based goal-conditioned hierarchical method for multi-agent navigation tasks. MAGE-X comprises a high-level Goal Commander and a low-level Action Executor. The Goal Commander predicts the probability distribution of goals and leverages them to assign each agent the most appropriate final target. The Action Executor utilizes graph neural networks (GNN) to construct a subgraph for each agent that only contains crucial partners to improve cooperation. Additionally, the Goal Encoder in the Action Executor captures the relationship between the agent and the designated goal to encourage the agent to reach the final target. The results show that MAGE-X outperforms the state-of-the-art MARL baselines with a 100% success rate with only 3 million training steps in multi-agent particle environments (MPE) with 50 agents, and at least a 12% higher success rate and 2x higher data efficiency in a more complicated quadrotor 3D navigation task.

A Machine Learning Method for Material Property Prediction: Example Polymer Compatibility

Feb 28, 2022Zhilong Liang, Zhiwei Li, Shuo Zhou, Yiwen Sun, Changshui Zhang, Jinying Yuan

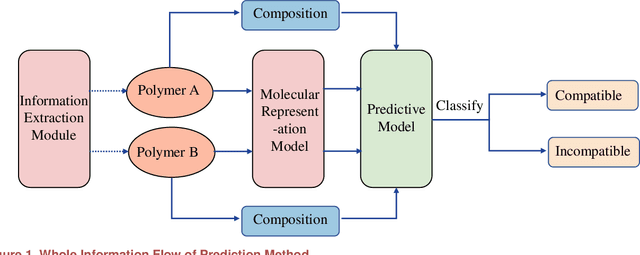

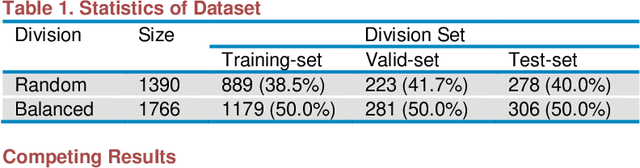

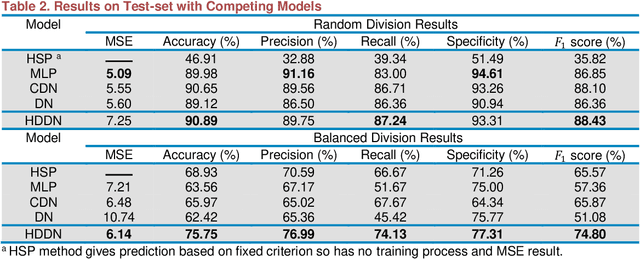

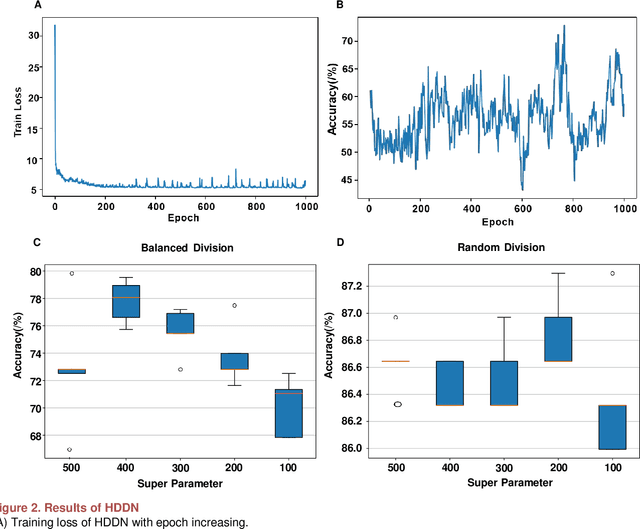

Prediction of material property is a key problem because of its significance to material design and screening. We present a brand-new and general machine learning method for material property prediction. As a representative example, polymer compatibility is chosen to demonstrate the effectiveness of our method. Specifically, we mine data from related literature to build a specific database and give a prediction based on the basic molecular structures of blending polymers and, as auxiliary, the blending composition. Our model obtains at least 75% accuracy on the dataset consisting of thousands of entries. We demonstrate that the relationship between structure and properties can be learned and simulated by machine learning method.

Road Network Metric Learning for Estimated Time of Arrival

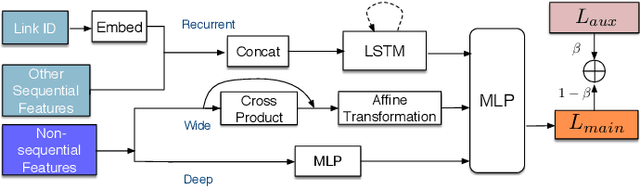

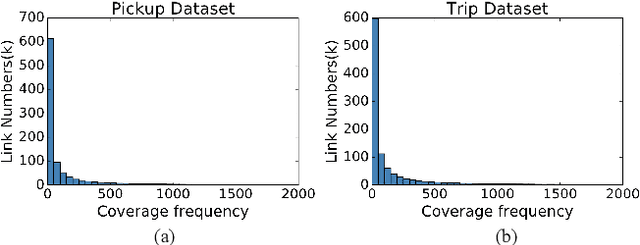

Jun 24, 2020Yiwen Sun, Kun Fu, Zheng Wang, Changshui Zhang, Jieping Ye

Recently, deep learning have achieved promising results in Estimated Time of Arrival (ETA), which is considered as predicting the travel time from the origin to the destination along a given path. One of the key techniques is to use embedding vectors to represent the elements of road network, such as the links (road segments). However, the embedding suffers from the data sparsity problem that many links in the road network are traversed by too few floating cars even in large ride-hailing platforms like Uber and DiDi. Insufficient data makes the embedding vectors in an under-fitting status, which undermines the accuracy of ETA prediction. To address the data sparsity problem, we propose the Road Network Metric Learning framework for ETA (RNML-ETA). It consists of two components: (1) a main regression task to predict the travel time, and (2) an auxiliary metric learning task to improve the quality of link embedding vectors. We further propose the triangle loss, a novel loss function to improve the efficiency of metric learning. We validated the effectiveness of RNML-ETA on large scale real-world datasets, by showing that our method outperforms the state-of-the-art model and the promotion concentrates on the cold links with few data.

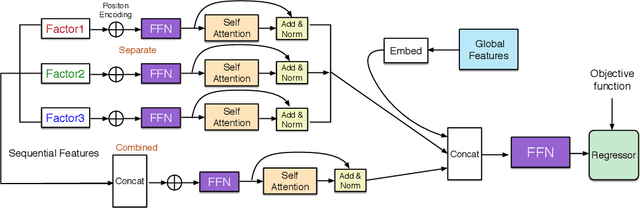

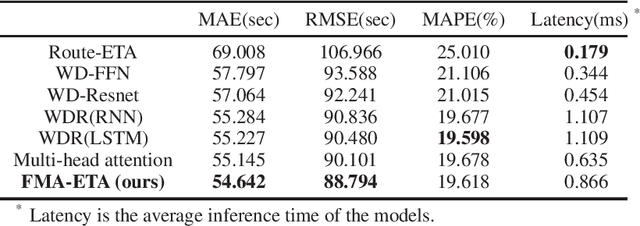

FMA-ETA: Estimating Travel Time Entirely Based on FFN With Attention

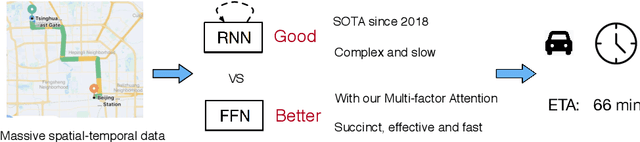

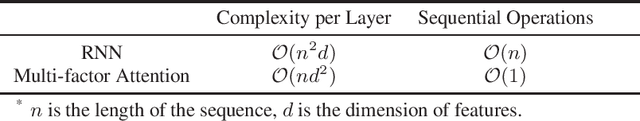

Jun 07, 2020Yiwen Sun, Yulu Wang, Kun Fu, Zheng Wang, Ziang Yan, Changshui Zhang, Jieping Ye

Estimated time of arrival (ETA) is one of the most important services in intelligent transportation systems and becomes a challenging spatial-temporal (ST) data mining task in recent years. Nowadays, deep learning based methods, specifically recurrent neural networks (RNN) based ones are adapted to model the ST patterns from massive data for ETA and become the state-of-the-art. However, RNN is suffering from slow training and inference speed, as its structure is unfriendly to parallel computing. To solve this problem, we propose a novel, brief and effective framework mainly based on feed-forward network (FFN) for ETA, FFN with Multi-factor self-Attention (FMA-ETA). The novel Multi-factor self-attention mechanism is proposed to deal with different category features and aggregate the information purposefully. Extensive experimental results on the real-world vehicle travel dataset show FMA-ETA is competitive with state-of-the-art methods in terms of the prediction accuracy with significantly better inference speed.

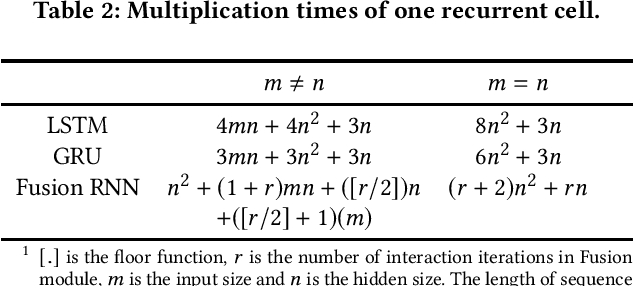

Fusion Recurrent Neural Network

Jun 07, 2020Yiwen Sun, Yulu Wang, Kun Fu, Zheng Wang, Changshui Zhang, Jieping Ye

Considering deep sequence learning for practical application, two representative RNNs - LSTM and GRU may come to mind first. Nevertheless, is there no chance for other RNNs? Will there be a better RNN in the future? In this work, we propose a novel, succinct and promising RNN - Fusion Recurrent Neural Network (Fusion RNN). Fusion RNN is composed of Fusion module and Transport module every time step. Fusion module realizes the multi-round fusion of the input and hidden state vector. Transport module which mainly refers to simple recurrent network calculate the hidden state and prepare to pass it to the next time step. Furthermore, in order to evaluate Fusion RNN's sequence feature extraction capability, we choose a representative data mining task for sequence data, estimated time of arrival (ETA) and present a novel model based on Fusion RNN. We contrast our method and other variants of RNN for ETA under massive vehicle travel data from DiDi Chuxing. The results demonstrate that for ETA, Fusion RNN is comparable to state-of-the-art LSTM and GRU which are more complicated than Fusion RNN.

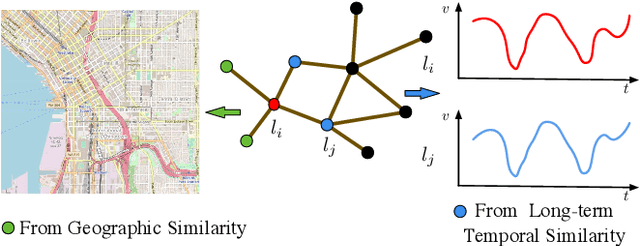

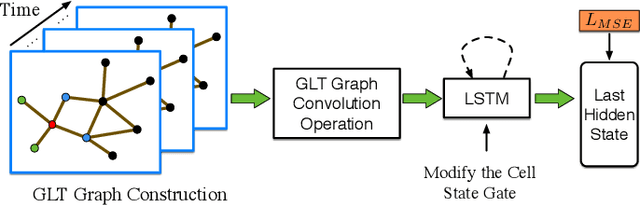

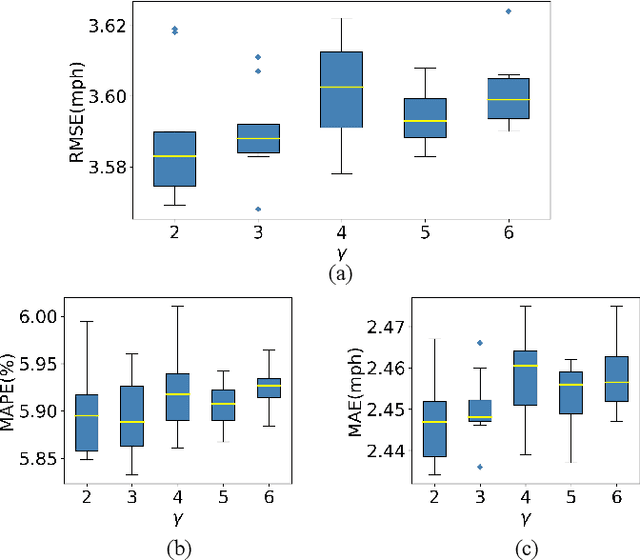

Constructing Geographic and Long-term Temporal Graph for Traffic Forecasting

Apr 23, 2020Yiwen Sun, Yulu Wang, Kun Fu, Zheng Wang, Changshui Zhang, Jieping Ye

Traffic forecasting influences various intelligent transportation system (ITS) services and is of great significance for user experience as well as urban traffic control. It is challenging due to the fact that the road network contains complex and time-varying spatial-temporal dependencies. Recently, deep learning based methods have achieved promising results by adopting graph convolutional network (GCN) to extract the spatial correlations and recurrent neural network (RNN) to capture the temporal dependencies. However, the existing methods often construct the graph only based on road network connectivity, which limits the interaction between roads. In this work, we propose Geographic and Long term Temporal Graph Convolutional Recurrent Neural Network (GLT-GCRNN), a novel framework for traffic forecasting that learns the rich interactions between roads sharing similar geographic or longterm temporal patterns. Extensive experiments on a real-world traffic state dataset validate the effectiveness of our method by showing that GLT-GCRNN outperforms the state-of-the-art methods in terms of different metrics.

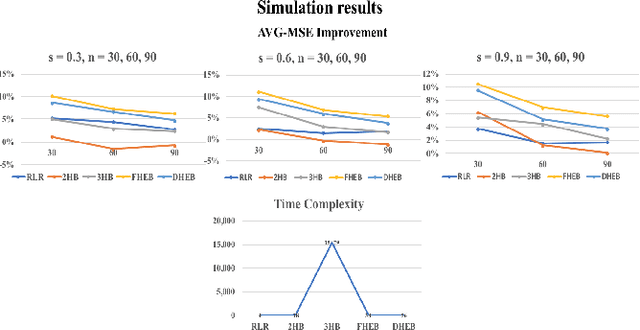

Dynamic Hierarchical Empirical Bayes: A Predictive Model Applied to Online Advertising

Sep 06, 2018Yuan Yuan, Xiaojing Dong, Chen Dong, Yiwen Sun, Zhenyu Yan, Abhishek Pani

Predicting keywords performance, such as number of impressions, click-through rate (CTR), conversion rate (CVR), revenue per click (RPC), and cost per click (CPC), is critical for sponsored search in the online advertising industry. An interesting phenomenon is that, despite the size of the overall data, the data are very sparse at the individual unit level. To overcome the sparsity and leverage hierarchical information across the data structure, we propose a Dynamic Hierarchical Empirical Bayesian (DHEB) model that dynamically determines the hierarchy through a data-driven process and provides shrinkage-based estimations. Our method is also equipped with an efficient empirical approach to derive inferences through the hierarchy. We evaluate the proposed method in both simulated and real-world datasets and compare to several competitive models. The results favor the proposed method among all comparisons in terms of both accuracy and efficiency. In the end, we design a two-phase system to serve prediction in real time.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge