Band-Attention Modulated RetNet for Face Forgery Detection

Apr 09, 2024 Zhida Zhang, Jie Cao, Wenkui Yang, Qihang Fan, Kai Zhou, Ran He

Transformer networks are extensively utilized in face forgery detection due to their scalability across large datasets. Despite their success, transformers face challenges in balancing the capture of global context, which is crucial for unveiling forgery clues, with computational complexity. To mitigate this issue, we introduce Band-Attention modulated RetNet (BAR-Net), a lightweight network designed to efficiently process extensive visual contexts while avoiding catastrophic forgetting. Our approach empowers the target token to perceive global information by assigning differential attention levels to tokens at varying distances. We implement self-attention along both spatial axes, thereby maintaining spatial priors and easing the computational burden. Moreover, we present the adaptive frequency Band-Attention Modulation mechanism, which treats the entire Discrete Cosine Transform spectrogram as a series of frequency bands with learnable weights. Together, these components enable BAR-Net to achieve favorable performance on several face forgery datasets, outperforming current state-of-the-art methods.
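The idea of treating a DCT spectrum as a series of weighted frequency bands can be illustrated with a minimal NumPy sketch. It is not the BAR-Net implementation: the diagonal band partition (by `u + v`), the function names `dct_matrix` and `band_modulate`, and the use of a fixed weight array in place of learnable parameters are all assumptions made for illustration, and the input is assumed to be a square single-channel image.

```python
import numpy as np

def dct_matrix(n: int) -> np.ndarray:
    """Orthonormal DCT-II basis as an (n, n) matrix."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    mat = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    mat[0, :] /= np.sqrt(2.0)
    return mat

def band_modulate(image: np.ndarray, band_weights: np.ndarray) -> np.ndarray:
    """Scale diagonal frequency bands of the 2D DCT spectrum, then invert.

    Assumption: bands are defined by u + v (low to high frequency); in a
    trained model `band_weights` would be learnable parameters.
    """
    n = image.shape[0]
    d = dct_matrix(n)
    spectrum = d @ image @ d.T                      # forward 2D DCT
    u, v = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
    num_bands = len(band_weights)
    band_index = ((u + v) * num_bands) // (2 * n - 1)
    modulated = spectrum * np.asarray(band_weights)[band_index]
    return d.T @ modulated @ d                      # inverse 2D DCT
```

With all weights equal to one the function returns the input unchanged, which is a quick sanity check that the transform pair is consistent.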

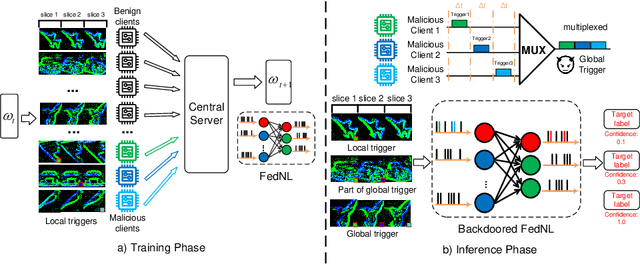

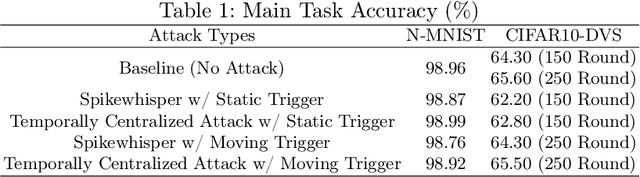

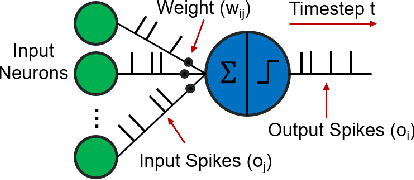

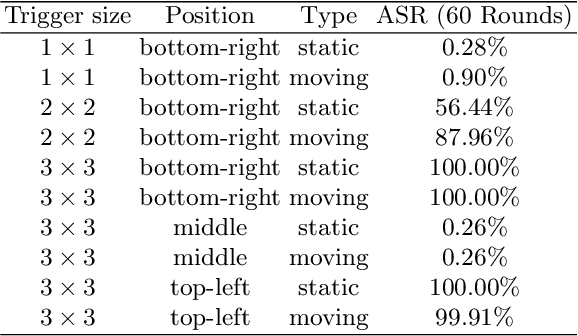

Spikewhisper: Temporal Spike Backdoor Attacks on Federated Neuromorphic Learning over Low-power Devices

Mar 27, 2024 Hanqing Fu, Gaolei Li, Jun Wu, Jianhua Li, Xi Lin, Kai Zhou, Yuchen Liu

Federated neuromorphic learning (FedNL) leverages event-driven spiking neural networks and federated learning frameworks to effectively execute intelligent analysis tasks over large numbers of distributed low-power devices, but it is also vulnerable to poisoning attacks. The threat of backdoor attacks on traditional deep neural networks typically comes from time-invariant data. However, in FedNL, unknown threats may be hidden in time-varying spike signals. In this paper, we explore a novel vulnerability of FedNL-based systems based on the concept of time division multiplexing, termed Spikewhisper, which allows attackers to evade detection as much as possible, since multiple malicious clients can imperceptibly poison with different triggers at different timeslices. In particular, the stealthiness of Spikewhisper derives from the time-domain divisibility of global triggers: each malicious client pastes only one local trigger into a certain timeslice of the neuromorphic sample, and the polarity and motion of each local trigger can be configured by attackers. Extensive experiments on two different neuromorphic datasets demonstrate that the attack success rate of Spikewhisper is higher than that of temporally centralized attacks. In addition, we validate that the effect of Spikewhisper is sensitive to the trigger duration.

Collective Certified Robustness against Graph Injection Attacks

Mar 03, 2024 Yuni Lai, Bailin Pan, Kaihuang Chen, Yancheng Yuan, Kai Zhou

We investigate certified robustness for GNNs under graph injection attacks. Existing research only provides sample-wise certificates by verifying each node independently, leading to very limited certifying performance. In this paper, we present the first collective certificate, which certifies a set of target nodes simultaneously. To achieve this, we formulate the problem as a binary integer quadratic constrained linear programming (BQCLP). We further develop a customized linearization technique that allows us to relax the BQCLP into linear programming (LP) that can be efficiently solved. Through comprehensive experiments, we demonstrate that our collective certification scheme significantly improves certification performance with minimal computational overhead. For instance, by solving the LP within 1 minute on the Citeseer dataset, we achieve a significant increase in the certified ratio from 0.0% to 81.2% when the injected node number is 5% of the graph size. Our work marks a crucial step towards making provable defense more practical.
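For orientation, the standard textbook way to linearize a product of two binary variables is shown below. The abstract describes a customized linearization whose details are not given here, so this is only an illustration of the general idea, with $x$, $y$, $z$ as hypothetical variable names. For $x, y \in \{0, 1\}$, the product $z = xy$ is captured exactly by

$$
z \le x, \qquad z \le y, \qquad z \ge x + y - 1, \qquad z \ge 0 .
$$

Relaxing the integrality constraints to $x, y, z \in [0, 1]$ then yields a linear program whose optimum bounds the optimum of the original binary problem.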

Adversarially Robust Signed Graph Contrastive Learning from Balance Augmentation

Jan 19, 2024 Jialong Zhou, Xing Ai, Yuni Lai, Kai Zhou

Signed graphs consist of edges and signs, which can be separated into structural information and balance-related information, respectively. Existing signed graph neural networks (SGNNs) typically rely on balance-related information to generate embeddings. Nevertheless, recent adversarial attacks have had a detrimental impact on the balance-related information. Similar to how structure learning can restore unsigned graphs, balance learning can be applied to signed graphs by improving the balance degree of the poisoned graph. However, this approach encounters the challenge of "Irreversibility of Balance-related Information": while the balance degree improves, the restored edges may not be the ones originally affected by attacks, resulting in poor defense effectiveness. To address this challenge, we propose a robust SGNN framework called Balance Augmented-Signed Graph Contrastive Learning (BA-SGCL), which combines Graph Contrastive Learning principles with balance augmentation techniques. Experimental results demonstrate that BA-SGCL not only enhances robustness against existing adversarial attacks but also achieves superior performance on the link sign prediction task across various datasets.

Universally Robust Graph Neural Networks by Preserving Neighbor Similarity

Jan 18, 2024 Yulin Zhu, Yuni Lai, Xing Ai, Kai Zhou

Despite the tremendous success of graph neural networks in learning relational data, it is well established that graph neural networks are vulnerable to structural attacks on homophilic graphs. Motivated by this, a surge of robust models has been crafted to enhance the adversarial robustness of graph neural networks on homophilic graphs. However, their vulnerability on heterophilic graphs remains largely unexplored. To bridge this gap, we explore the vulnerability of graph neural networks on heterophilic graphs and theoretically prove that the update of the negative classification loss is negatively correlated with the pairwise similarities based on the powered aggregated neighbor features. This theoretical result explains the empirical observation that the graph attacker tends to connect dissimilar node pairs based on the similarities of neighbor features instead of ego features on both homophilic and heterophilic graphs. Building on this, we introduce a novel robust model termed NSPGNN, which incorporates a dual-kNN graphs pipeline to supervise the neighbor similarity-guided propagation. This propagation utilizes a low-pass filter to smooth the features of node pairs along the positive kNN graphs and a high-pass filter to discriminate the features of node pairs along the negative kNN graphs. Extensive experiments on both homophilic and heterophilic graphs validate the universal robustness of NSPGNN compared to the state-of-the-art methods.
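The dual-kNN propagation described above can be sketched in NumPy as follows. This is a simplified illustration rather than NSPGNN itself: it builds the kNN graphs from raw (ego) features with cosine similarity, whereas the abstract refers to similarities of aggregated neighbor features, and the function names, the symmetric normalization, and the single propagation step are assumptions. Feature rows are assumed to be nonzero and `k` smaller than the number of nodes.

```python
import numpy as np

def knn_adjacency(features: np.ndarray, k: int, most_similar: bool = True) -> np.ndarray:
    """Symmetric kNN graph over cosine similarity (most or least similar pairs)."""
    normed = features / np.linalg.norm(features, axis=1, keepdims=True)
    sim = normed @ normed.T
    score = (sim if most_similar else -sim).copy()
    np.fill_diagonal(score, -np.inf)               # never pick a node as its own neighbor
    idx = np.argsort(-score, axis=1)[:, :k]        # top-k scores per row
    adj = np.zeros_like(sim)
    rows = np.repeat(np.arange(len(features)), k)
    adj[rows, idx.ravel()] = 1.0
    return np.maximum(adj, adj.T)

def sym_normalize(adj: np.ndarray) -> np.ndarray:
    """D^{-1/2} A D^{-1/2}, leaving isolated nodes with zero rows."""
    deg = adj.sum(axis=1)
    inv_sqrt = np.where(deg > 0, 1.0 / np.sqrt(np.maximum(deg, 1e-12)), 0.0)
    return adj * inv_sqrt[:, None] * inv_sqrt[None, :]

def propagate(features: np.ndarray, k: int = 1):
    """One low-pass step on the positive graph and one high-pass step on the negative graph."""
    pos = sym_normalize(knn_adjacency(features, k, most_similar=True))
    neg = sym_normalize(knn_adjacency(features, k, most_similar=False))
    low = pos @ features                 # A_hat X: averages similar neighbors
    high = features - neg @ features     # (I - A_hat) X: contrasts dissimilar neighbors
    return low, high
```

The low-pass output pulls each node toward its most similar neighbors, while the high-pass output emphasizes how a node differs from its least similar neighbors.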

Cost Aware Untargeted Poisoning Attack against Graph Neural Networks

Dec 12, 2023 Yuwei Han, Yuni Lai, Yulin Zhu, Kai Zhou

Graph Neural Networks (GNNs) have become widely used in the field of graph mining. However, these networks are vulnerable to structural perturbations. While many research efforts have focused on analyzing vulnerability through poisoning attacks, we have identified an inefficiency in current attack losses. These losses steer the attack strategy towards modifying edges targeting misclassified nodes or resilient nodes, resulting in a waste of structural adversarial perturbation. To address this issue, we propose a novel attack loss framework called the Cost Aware Poisoning Attack (CA-attack) to improve the allocation of the attack budget by dynamically considering the classification margins of nodes. Specifically, it prioritizes nodes with smaller positive margins while postponing nodes with negative margins. Our experiments demonstrate that the proposed CA-attack significantly enhances existing attack strategies.
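A margin-based prioritization of this kind might look like the sketch below. The exact CA-attack loss is not given in the abstract, so the exponential weighting, the `temperature` parameter, and the choice to assign zero weight to nodes with negative margins are hypothetical stand-ins for "prioritize small positive margins, postpone negative margins".

```python
import numpy as np

def classification_margin(probs: np.ndarray, labels: np.ndarray) -> np.ndarray:
    """Probability of the true class minus the best competing class, per node."""
    n = probs.shape[0]
    true_p = probs[np.arange(n), labels]
    masked = probs.copy()
    masked[np.arange(n), labels] = -np.inf   # exclude the true class from the max
    return true_p - masked.max(axis=1)

def cost_aware_weights(margins: np.ndarray, temperature: float = 0.5) -> np.ndarray:
    """Hypothetical weighting: larger weight for small positive margins,
    zero weight for already misclassified nodes (negative margins)."""
    weights = np.where(margins > 0, np.exp(-margins / temperature), 0.0)
    total = weights.sum()
    return weights / total if total > 0 else weights
```

Under this weighting, a node that is correctly classified by a narrow margin receives most of the attack budget, while confidently classified and already misclassified nodes receive little or none.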

Node-aware Bi-smoothing: Certified Robustness against Graph Injection Attacks

Dec 07, 2023 Yuni Lai, Yulin Zhu, Bailin Pan, Kai Zhou

Deep Graph Learning (DGL) has emerged as a crucial technique across various domains. However, recent studies have exposed vulnerabilities in DGL models, such as susceptibility to evasion and poisoning attacks. While empirical and provable robustness techniques have been developed to defend against graph modification attacks (GMAs), the problem of certified robustness against graph injection attacks (GIAs) remains largely unexplored. To bridge this gap, we introduce the node-aware bi-smoothing framework, which is the first certifiably robust approach for general node classification tasks against GIAs. Notably, the proposed node-aware bi-smoothing scheme is model-agnostic and is applicable for both evasion and poisoning attacks. Through rigorous theoretical analysis, we establish the certifiable conditions of our smoothing scheme. We also explore the practical implications of our node-aware bi-smoothing schemes in two contexts: as an empirical defense approach against real-world GIAs and in the context of recommendation systems. Furthermore, we extend two state-of-the-art certified robustness frameworks to address node injection attacks and compare our approach against them. Extensive evaluations demonstrate the effectiveness of our proposed certificates.

Optimal Power Flow in Highly Renewable Power System Based on Attention Neural Networks

Nov 23, 2023 Chen Li, Alexander Kies, Kai Zhou, Markus Schlott, Omar El Sayed, Mariia Bilousova, Horst Stoecker

The Optimal Power Flow (OPF) problem is pivotal for power system operations, guiding generator output and power distribution to meet demand at minimized costs, while adhering to physical and engineering constraints. The integration of renewable energy sources, like wind and solar, however, poses challenges due to their inherent variability. This variability, driven largely by changing weather conditions, demands frequent recalibrations of power settings, thus necessitating recurrent OPF resolutions. This task is daunting using traditional numerical methods, particularly for extensive power systems. In this work, we present a cutting-edge, physics-informed machine learning methodology, trained using imitation learning and historical European weather datasets. Our approach directly correlates electricity demand and weather patterns with power dispatch and generation, circumventing the iterative requirements of traditional OPF solvers. This offers a more expedient solution apt for real-time applications. Rigorous evaluations on aggregated European power systems validate our method's superiority over existing data-driven techniques in OPF solving. By presenting a quick, robust, and efficient solution, this research sets a new standard in real-time OPF resolution, paving the way for more resilient power systems in the era of renewable energy.

Generative Diffusion Models for Lattice Field Theory

Nov 06, 2023 Lingxiao Wang, Gert Aarts, Kai Zhou

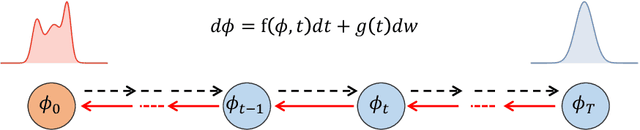

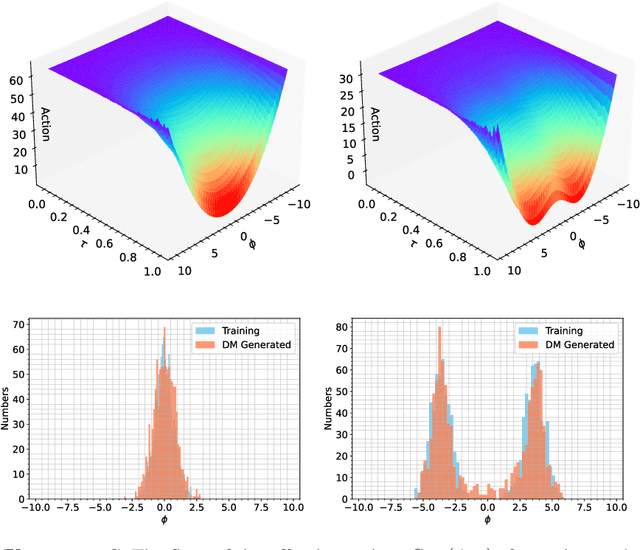

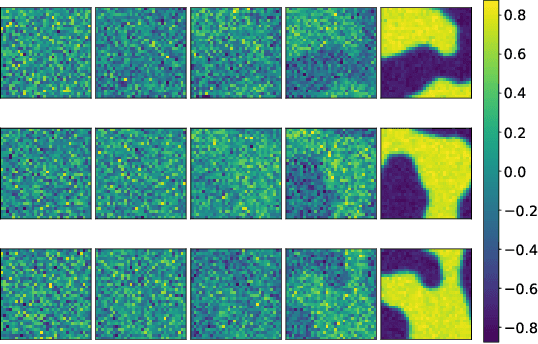

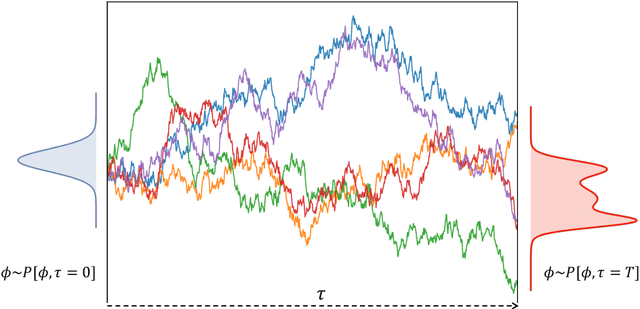

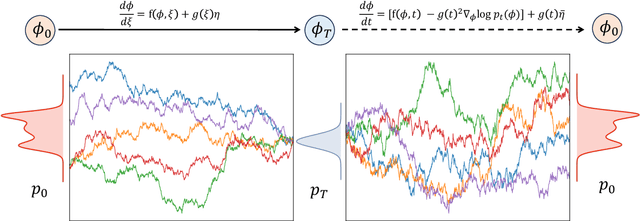

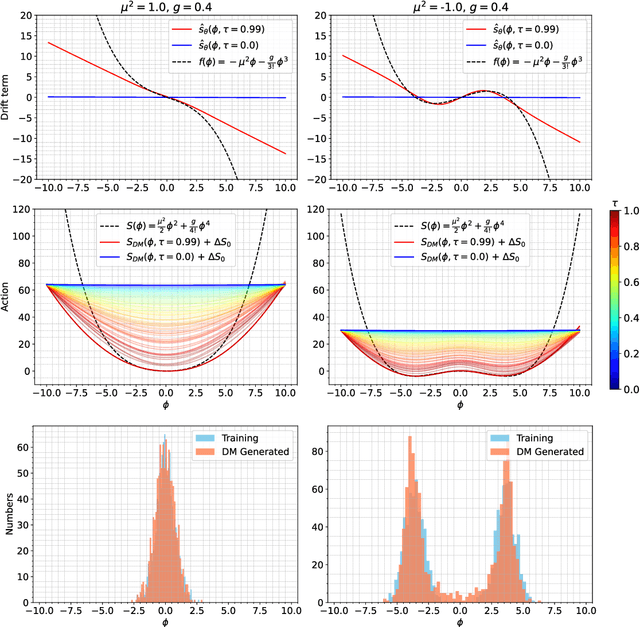

This study delves into the connection between machine learning and lattice field theory by linking generative diffusion models (DMs) with stochastic quantization, from a stochastic differential equation perspective. We show that DMs can be conceptualized by reversing a stochastic process driven by the Langevin equation, which then produces samples from an initial distribution to approximate the target distribution. In a toy model, we highlight the capability of DMs to learn effective actions. Furthermore, we demonstrate their feasibility as a global sampler for generating configurations in the two-dimensional $\phi^4$ quantum lattice field theory.
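For reference, the Langevin equation of stochastic quantization is commonly written in the following form, where $\tau$ is the fictitious time, $S_E$ the Euclidean action, and $\eta$ Gaussian white noise. The notation is a standard convention and is not taken from the abstract itself.

$$
\frac{\partial \phi(x,\tau)}{\partial \tau} = -\frac{\delta S_E[\phi]}{\delta \phi(x,\tau)} + \eta(x,\tau), \qquad \langle \eta(x,\tau)\,\eta(x',\tau') \rangle = 2\,\delta(x-x')\,\delta(\tau-\tau') .
$$

In the limit $\tau \to \infty$ the field configurations are distributed according to $e^{-S_E[\phi]}$, which is the target distribution a sampler aims to reproduce.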

Diffusion Models as Stochastic Quantization in Lattice Field Theory

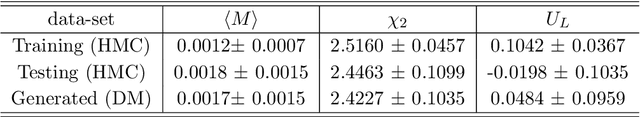

Sep 29, 2023 Lingxiao Wang, Gert Aarts, Kai Zhou

In this work, we establish a direct connection between generative diffusion models (DMs) and stochastic quantization (SQ). The DM is realized by approximating the reversal of a stochastic process dictated by the Langevin equation, generating samples from a prior distribution to effectively mimic the target distribution. Using numerical simulations, we demonstrate that the DM can serve as a global sampler for generating quantum lattice field configurations in two-dimensional $\phi^4$ theory. We demonstrate that DMs can notably reduce autocorrelation times in the Markov chain, especially in the critical region where standard Markov Chain Monte-Carlo (MCMC) algorithms experience critical slowing down. The findings can potentially inspire further advancements in lattice field theory simulations, in particular in cases where it is expensive to generate large ensembles.
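As a concrete picture of the Langevin process mentioned above, the sketch below runs a discretized Langevin update on a zero-dimensional toy action $S(\phi) = \tfrac{1}{2} m^2 \phi^2 + \tfrac{1}{4} \lambda \phi^4$. It only simulates the Langevin dynamics, not a trained diffusion model or a lattice field, and the function names, step size, and chain counts are illustrative assumptions.

```python
import numpy as np

def drift(phi: np.ndarray, mass_sq: float = 1.0, coupling: float = 0.0) -> np.ndarray:
    """Negative gradient of the toy action S = m^2 phi^2 / 2 + lambda phi^4 / 4."""
    return -(mass_sq * phi + coupling * phi**3)

def langevin_sample(n_chains: int = 20000, n_steps: int = 1000,
                    step: float = 0.01, seed: int = 0, **action) -> np.ndarray:
    """Euler-Maruyama discretization of d(phi) = -S'(phi) d(tau) + sqrt(2) dW.

    Runs many independent chains in parallel and returns their final values,
    which approximately follow exp(-S) up to a small step-size bias.
    """
    rng = np.random.default_rng(seed)
    phi = np.zeros(n_chains)
    for _ in range(n_steps):
        noise = rng.normal(size=n_chains)
        phi = phi + step * drift(phi, **action) + np.sqrt(2.0 * step) * noise
    return phi
```

For the free case (`coupling=0`, `mass_sq=1`) the target distribution is a unit Gaussian, so the sample variance should be close to one; a nonzero `coupling` narrows the distribution.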
