Generative AI for Low-Carbon Artificial Intelligence of Things

Apr 28, 2024 · Jinbo Wen, Ruichen Zhang, Dusit Niyato, Jiawen Kang, Hongyang Du, Yang Zhang, Zhu Han

By integrating Artificial Intelligence (AI) with the Internet of Things (IoT), Artificial Intelligence of Things (AIoT) has revolutionized many fields. However, AIoT is facing the challenges of energy consumption and carbon emissions due to the continuous advancement of mobile technology. Fortunately, Generative AI (GAI) holds immense potential to reduce carbon emissions of AIoT due to its excellent reasoning and generation capabilities. In this article, we explore the potential of GAI for carbon emissions reduction and propose a novel GAI-enabled solution for low-carbon AIoT. Specifically, we first study the main impacts that cause carbon emissions in AIoT, and then introduce GAI techniques and their relations to carbon emissions. We then explore the application prospects of GAI in low-carbon AIoT, focusing on how GAI can reduce carbon emissions of network components. Subsequently, we propose a Large Language Model (LLM)-enabled carbon emission optimization framework, in which we design pluggable LLM and Retrieval Augmented Generation (RAG) modules to generate more accurate and reliable optimization problems. Furthermore, we utilize Generative Diffusion Models (GDMs) to identify optimal strategies for carbon emission reduction. Simulation results demonstrate the effectiveness of the proposed framework. Finally, we insightfully provide open research directions for low-carbon AIoT.

Channel Estimation for Optical Intelligent Reflecting Surface-Assisted VLC System: A Joint Space-Time Sampling Approach

Apr 23, 2024 · Shiyuan Sun, Fang Yang, Weidong Mei, Jian Song, Zhu Han, Rui Zhang

Optical intelligent reflecting surface (OIRS) has attracted increasing attention due to its capability of overcoming signal blockages in visible light communication (VLC), an emerging technology for the next-generation advanced transceivers. However, current works on OIRS predominantly assume known channel state information (CSI), which is essential to practical OIRS configuration. To bridge such a gap, this paper proposes a new and customized channel estimation protocol for OIRSs under the alignment-based channel model. Specifically, we first unveil OIRS spatial and temporal coherence characteristics and derive the coherence distance and the coherence time in closed form. Next, to achieve fast beam alignment over different coherence times, we propose to dynamically tune the rotational angles of the OIRS reflecting elements following a geometric optics-based non-uniform codebook. Given the above beam alignment, we propose an efficient joint space-time sampling-based algorithm to estimate the OIRS channel. In particular, we divide the OIRS into multiple subarrays based on the coherence distance and sequentially estimate their associated CSI, followed by a space-time interpolation to retrieve full CSI for other non-aligned transceiver antennas. Numerical results validate our theoretical analyses and demonstrate the efficacy of our proposed OIRS channel estimation scheme as compared to other benchmark schemes.

Channel Estimation for Optical IRS-Assisted VLC System via Spatial Coherence

Apr 23, 2024 · Shiyuan Sun, Fang Yang, Weidong Mei, Jian Song, Zhu Han, Rui Zhang

Optical intelligent reflecting surface (OIRS) has been considered a promising technology for visible light communication (VLC) by constructing visual line-of-sight propagation paths to address the signal blockage issue. However, the existing works on OIRSs are mostly based on perfect channel state information (CSI), whose acquisition appears to be challenging due to the passive nature of the OIRS. To tackle this challenge, this paper proposes a customized channel estimation algorithm for OIRSs. Specifically, we first unveil the OIRS spatial coherence characteristics and derive the coherence distance in closed form. Based on this property, a spatial sampling-based algorithm is proposed to estimate the OIRS-reflected channel, by dividing the OIRS into multiple subarrays based on the coherence distance and sequentially estimating their associated CSI, followed by an interpolation to retrieve the full CSI. Simulation results validate the derived OIRS spatial coherence and demonstrate the efficacy of the proposed OIRS channel estimation algorithm.
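The sample-then-interpolate idea in this abstract can be illustrated with a small sketch. It is not the authors' algorithm: the 1-D surface, the `measure` callback, the toy gain function, and the use of plain linear interpolation are all assumptions made for illustration, and the coherence distance is simply given as a number of elements.

```python
def estimate_by_spatial_sampling(measure, num_elements, coherence_len):
    """Probe one element per subarray, then linearly interpolate the rest.

    measure(i) is a hypothetical callback returning the estimated channel
    gain of element i; coherence_len is the subarray size in elements.
    """
    if num_elements == 1:
        return [measure(0)], 1

    # Anchor indices: first element of every subarray, plus the last element.
    anchors = list(range(0, num_elements, coherence_len))
    if anchors[-1] != num_elements - 1:
        anchors.append(num_elements - 1)

    # Sequentially estimate the anchors only.
    samples = {i: measure(i) for i in anchors}

    # Fill in the remaining elements by linear interpolation between anchors.
    estimate = [0.0] * num_elements
    for left, right in zip(anchors, anchors[1:]):
        for i in range(left, right + 1):
            t = (i - left) / (right - left)
            estimate[i] = (1 - t) * samples[left] + t * samples[right]
    return estimate, len(anchors)


def true_gain(i):
    """Toy channel gain that varies linearly across the surface (assumed)."""
    return 1.0 - 0.01 * i


est, num_probes = estimate_by_spatial_sampling(true_gain, num_elements=21, coherence_len=5)
max_err = max(abs(e - true_gain(i)) for i, e in enumerate(est))
print(num_probes, max_err)
```

In this toy setting only 5 of the 21 elements are probed. The saving in pilot overhead grows with the coherence distance, while interpolation accuracy depends on how smoothly the channel varies within it.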

Generative Artificial Intelligence Assisted Wireless Sensing: Human Flow Detection in Practical Communication Environments

Apr 22, 2024 · Jiacheng Wang, Hongyang Du, Dusit Niyato, Zehui Xiong, Jiawen Kang, Bo Ai, Zhu Han, Dong In Kim

Groundbreaking applications such as ChatGPT have heightened research interest in generative artificial intelligence (GAI). Essentially, GAI excels not only in content generation but also in signal processing, offering support for wireless sensing. Hence, we introduce a novel GAI-assisted human flow detection system (G-HFD). Rigorously, G-HFD first uses channel state information (CSI) to estimate the velocity and acceleration of propagation path length change of the human-induced reflection (HIR). Then, given the strong inference ability of the diffusion model, we propose a unified weighted conditional diffusion model (UW-CDM) to denoise the estimation results, enabling the detection of the number of targets. Next, we use the CSI obtained by a uniform linear array with wavelength spacing to estimate the HIR's time of flight and direction of arrival (DoA). In this process, UW-CDM solves the problem of ambiguous DoA spectrum, ensuring accurate DoA estimation. Finally, through clustering, G-HFD determines the number of subflows and the number of targets in each subflow, i.e., the subflow size. The evaluation based on practical downlink communication signals shows G-HFD's accuracy of subflow size detection can reach 91%. This validates its effectiveness and underscores the significant potential of GAI in the context of wireless sensing.

Integrated Sensing and Communication Under DISCO Physical-Layer Jamming Attacks

Apr 11, 2024 · Huan Huang, Hongliang Zhang, Weidong Mei, Jun Li, Yi Cai, A. Lee Swindlehurst, Zhu Han

Integrated sensing and communication (ISAC) systems traditionally presuppose that sensing and communication (S&C) channels remain approximately constant during their coherence time. However, a "DISCO" reconfigurable intelligent surface (DRIS), i.e., an illegitimate RIS with random, time-varying reflection properties that acts like a "disco ball," introduces a paradigm shift that enables active channel aging more rapidly during the channel coherence time. In this letter, we investigate the impact of DISCO jamming attacks launched by a DRIS-based fully-passive jammer (FPJ) on an ISAC system. Specifically, an ISAC problem formulation and a corresponding waveform optimization are presented in which the ISAC waveform design considers the trade-off between the S&C performance and is formulated as a Pareto optimization problem. Moreover, a theoretical analysis is conducted to quantify the impact of DISCO jamming attacks. Numerical results are presented to evaluate the S&C performance under DISCO jamming attacks and to validate the derived theoretical analysis.

Harnessing the Power of AI-Generated Content for Semantic Communication

Apr 10, 2024 · Yiru Wang, Wanting Yang, Zehui Xiong, Yuping Zhao, Tony Q. S. Quek, Zhu Han

Semantic Communication (SemCom) is envisaged as the next-generation paradigm to address challenges stemming from the conflicts between the increasing volume of transmission data and the scarcity of spectrum resources. However, existing SemCom systems face drawbacks, such as low explainability, modality rigidity, and inadequate reconstruction functionality. Recognizing the transformative capabilities of AI-generated content (AIGC) technologies in content generation, this paper explores a pioneering approach by integrating them into SemCom to address the aforementioned challenges. We employ a three-layer model to illustrate the proposed AIGC-assisted SemCom (AIGC-SCM) architecture, emphasizing its clear deviation from existing SemCom. Grounded in this model, we investigate various AIGC technologies with the potential to augment SemCom's performance. In alignment with SemCom's goal of conveying semantic meanings, we also introduce the new evaluation methods for our AIGC-SCM system. Subsequently, we explore communication scenarios where our proposed AIGC-SCM can realize its potential. For practical implementation, we construct a detailed integration workflow and conduct a case study in a virtual reality image transmission scenario. The results demonstrate our ability to maintain a high degree of alignment between the reconstructed content and the original source information, while substantially minimizing the data volume required for transmission. These findings pave the way for further enhancements in communication efficiency and the improvement of Quality of Service. At last, we present future directions for AIGC-SCM studies.

From Learning to Analytics: Improving Model Efficacy with Goal-Directed Client Selection

Mar 30, 2024 · Jingwen Tong, Zhenzhen Chen, Liqun Fu, Jun Zhang, Zhu Han

Federated learning (FL) is an appealing paradigm for learning a global model among distributed clients while preserving data privacy. Driven by the demand for high-quality user experiences, evaluating the well-trained global model after the FL process is crucial. In this paper, we propose a closed-loop model analytics framework that allows for effective evaluation of the trained global model using clients' local data. To address the challenges posed by system and data heterogeneities in the FL process, we study a goal-directed client selection problem based on the model analytics framework by selecting a subset of clients for the model training. This problem is formulated as a stochastic multi-armed bandit (SMAB) problem. We first put forth a quick initial upper confidence bound (Quick-Init UCB) algorithm to solve this SMAB problem under the federated analytics (FA) framework. Then, we further propose a belief propagation-based UCB (BP-UCB) algorithm under the democratized analytics (DA) framework. Moreover, we derive two regret upper bounds for the proposed algorithms, which increase logarithmically over the time horizon. The numerical results demonstrate that the proposed algorithms achieve nearly optimal performance, with a gap of less than 1.44% and 3.12% under the FA and DA frameworks, respectively.
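For readers unfamiliar with bandit-based selection, the sketch below shows the textbook UCB1 rule applied to picking one client per round. It is not the Quick-Init UCB or BP-UCB algorithm from the paper: the Bernoulli reward model, the reward probabilities, and the one-client-per-round simplification are assumptions made for illustration.

```python
import math
import random


def ucb1_select(counts, means, t):
    """Return the client index with the highest UCB1 score at round t."""
    for k, n in enumerate(counts):
        if n == 0:  # try every client once before using the index
            return k
    return max(
        range(len(counts)),
        key=lambda k: means[k] + math.sqrt(2 * math.log(t) / counts[k]),
    )


def run_ucb1(reward_probs, horizon, seed=0):
    """Simulate UCB1 on Bernoulli clients; return how often each was chosen."""
    rng = random.Random(seed)
    counts = [0] * len(reward_probs)
    means = [0.0] * len(reward_probs)
    for t in range(1, horizon + 1):
        k = ucb1_select(counts, means, t)
        # Hypothetical reward: 1 if the selected client helped the model goal.
        reward = 1.0 if rng.random() < reward_probs[k] else 0.0
        counts[k] += 1
        means[k] += (reward - means[k]) / counts[k]  # running average
    return counts


counts = run_ucb1([0.2, 0.5, 0.8], horizon=2000)
print(counts)
```

The exploration bonus shrinks as a client is selected more often, so selections concentrate on the best client while weaker clients are still sampled occasionally. This is the mechanism behind logarithmic regret bounds of the kind the abstract mentions.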

Wavenumber Domain Sparse Channel Estimation in Holographic MIMO

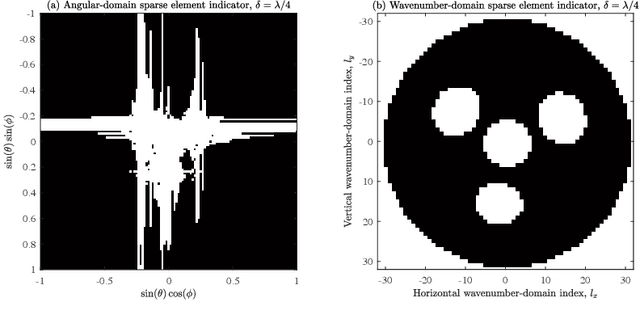

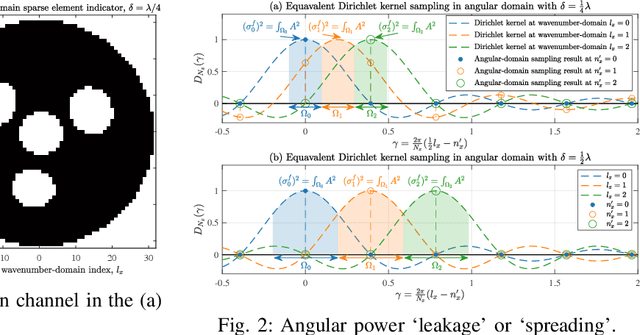

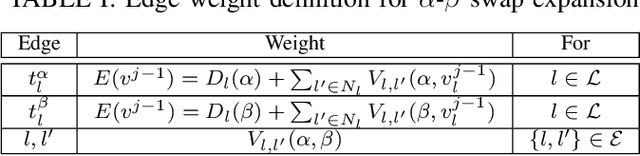

Mar 17, 2024 · Xufeng Guo, Yuanbin Chen, Ying Wang, Zhaocheng Wang, Zhu Han

In this paper, we investigate the sparse channel estimation in holographic multiple-input multiple-output (HMIMO) systems. The conventional angular-domain representation fails to capture the continuous angular power spectrum characterized by the spatially-stationary electromagnetic random field, thus leading to the ambiguous detection of the significant angular power, which is referred to as the power leakage. To tackle this challenge, the HMIMO channel is represented in the wavenumber domain for exploring its cluster-dominated sparsity. Specifically, a finite set of Fourier harmonics acts as a series of sampling probes to encapsulate the integral of the power spectrum over specific angular regions. This technique effectively eliminates power leakage resulting from power mismatches induced by the use of discrete angular-domain probes. Next, the channel estimation problem is recast as a sparse recovery of the significant angular power spectrum over the continuous integration region. We then propose an accompanying graph-cut-based swap expansion (GCSE) algorithm to extract beneficial sparsity inherent in HMIMO channels. Numerical results demonstrate that this wavenumber-domain-based GCSE approach achieves robust performance with rapid convergence.

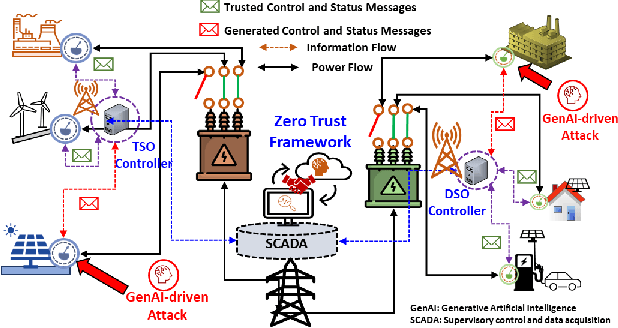

A Zero Trust Framework for Realization and Defense Against Generative AI Attacks in Power Grid

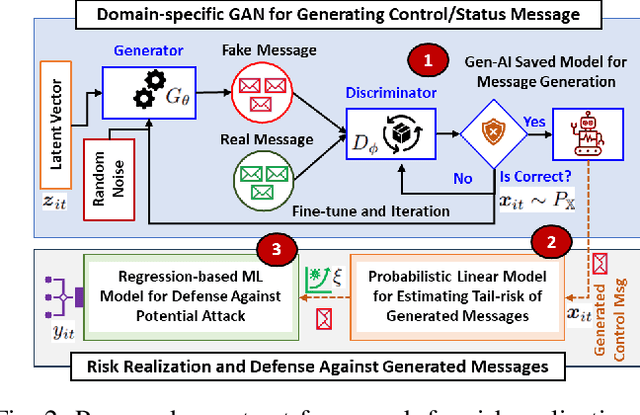

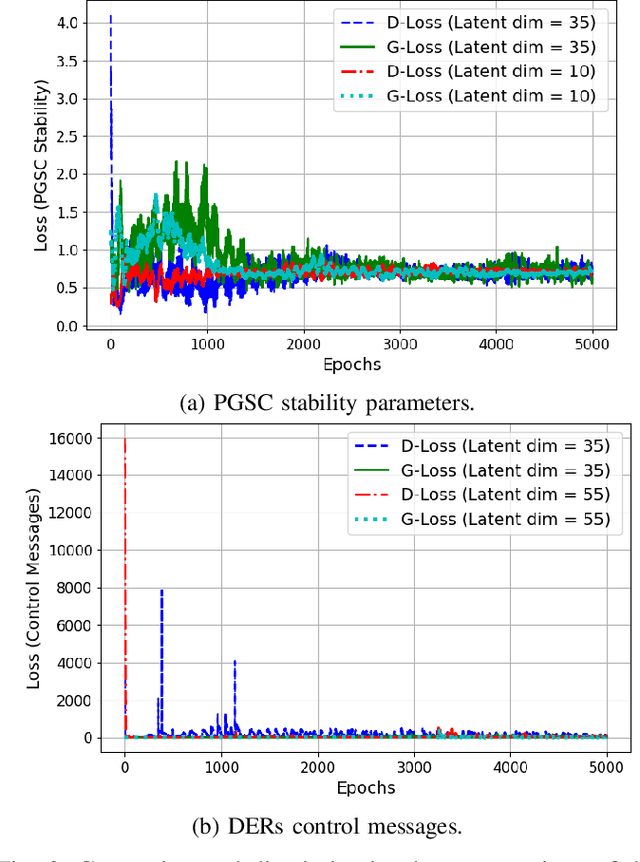

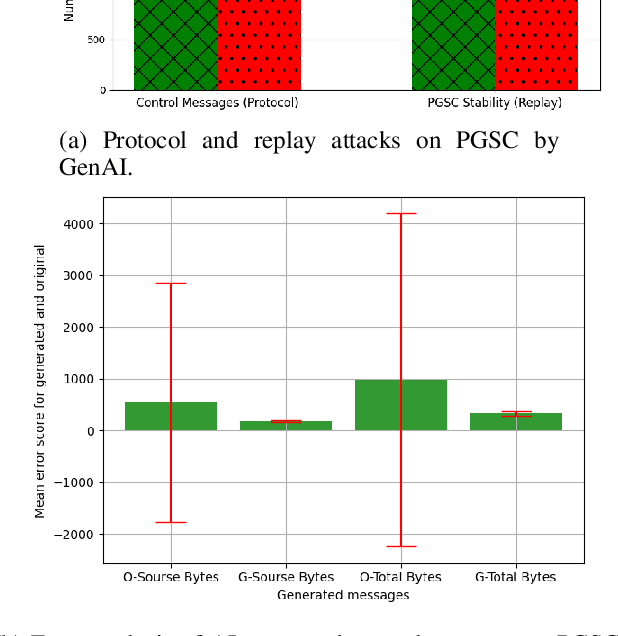

Mar 11, 2024 · Md. Shirajum Munir, Sravanthi Proddatoori, Manjushree Muralidhara, Walid Saad, Zhu Han, Sachin Shetty

Understanding the potential of generative AI (GenAI)-based attacks on the power grid is a fundamental challenge that must be addressed in order to protect the power grid by realizing and validating risk in new attack vectors. In this paper, a novel zero trust framework for a power grid supply chain (PGSC) is proposed. This framework facilitates early detection of potential GenAI-driven attack vectors (e.g., replay and protocol-type attacks), assessment of tail risk-based stability measures, and mitigation of such threats. First, a new zero trust system model of PGSC is designed and formulated as a zero-trust problem that seeks to guarantee a stable PGSC by realizing and defending against GenAI-driven cyber attacks. Second, a domain-specific generative adversarial network (GAN)-based attack generation mechanism is developed to create a new vulnerability cyberspace for further understanding that threat. Third, tail-based risk realization metrics are developed and implemented for quantifying the extreme risk of a potential attack while leveraging a trust measurement approach for continuous validation. Fourth, an ensemble learning-based bootstrap aggregation scheme is devised to detect the attacks that are generating synthetic identities with convincing user and distributed energy resources device profiles. Experimental results show the efficacy of the proposed zero trust framework that achieves an accuracy of 95.7% on attack vector generation, a risk measure of 9.61% for a 95% stable PGSC, and a 99% confidence in defense against GenAI-driven attack.

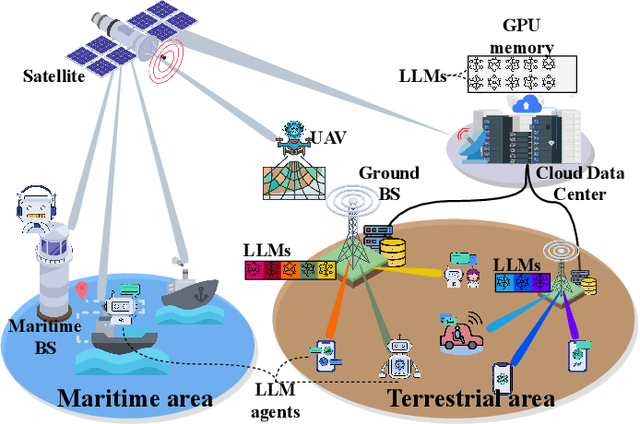

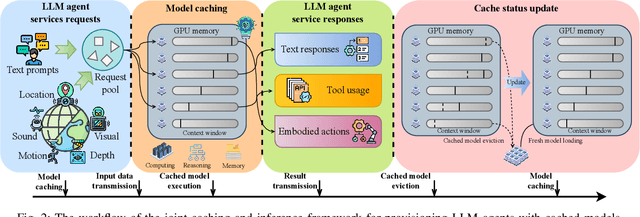

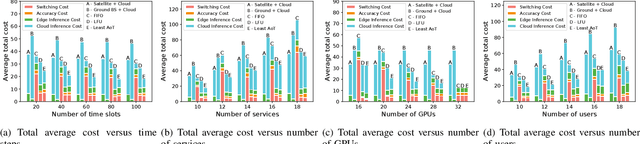

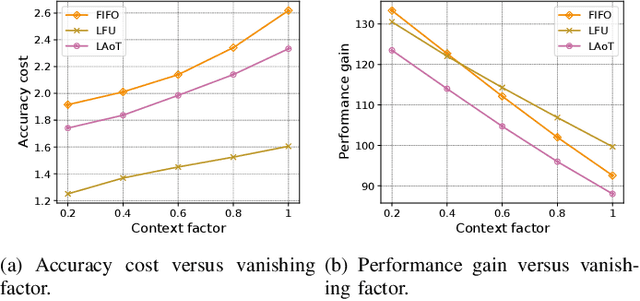

Cached Model-as-a-Resource: Provisioning Large Language Model Agents for Edge Intelligence in Space-air-ground Integrated Networks

Mar 09, 2024 · Minrui Xu, Dusit Niyato, Hongliang Zhang, Jiawen Kang, Zehui Xiong, Shiwen Mao, Zhu Han

Space-air-ground integrated networks (SAGINs) enable worldwide network coverage beyond geographical limitations for users to access ubiquitous intelligence services. Facing global coverage and complex environments in SAGINs, edge intelligence can provision AI agents based on large language models (LLMs) for users via edge servers at ground base stations (BSs) or cloud data centers relayed by satellites. As LLMs with billions of parameters are pre-trained on vast datasets, LLM agents have few-shot learning capabilities, e.g., chain-of-thought (CoT) prompting for complex tasks, which are challenged by limited resources in SAGINs. In this paper, we propose a joint caching and inference framework for edge intelligence to provision sustainable and ubiquitous LLM agents in SAGINs. We introduce "cached model-as-a-resource" for offering LLMs with limited context windows and propose a novel optimization framework, i.e., joint model caching and inference, to utilize cached model resources for provisioning LLM agent services along with communication, computing, and storage resources. We design "age of thought" (AoT) considering the CoT prompting of LLMs, and propose the least AoT cached model replacement algorithm for optimizing the provisioning cost. We propose a deep Q-network-based modified second-bid (DQMSB) auction to incentivize these network operators, which can enhance allocation efficiency while guaranteeing strategy-proofness and freedom from adverse selection.
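As background for the auction component, the sketch below shows the classical sealed-bid second-price (Vickrey) auction, the standard strategy-proof baseline. It is not the DQMSB mechanism itself, whose deep Q-network-based modification is not described in the abstract; the bidder names and bid values are hypothetical.

```python
def second_price_auction(bids):
    """Classical Vickrey auction.

    bids maps a bidder name to its sealed bid. The highest bidder wins
    and pays the second-highest bid.
    """
    if len(bids) < 2:
        raise ValueError("need at least two bidders")
    ranked = sorted(bids.items(), key=lambda kv: kv[1], reverse=True)
    winner = ranked[0][0]
    price = ranked[1][1]  # winner pays the runner-up's bid, not its own
    return winner, price


# Hypothetical operators bidding for a provisioning slot.
winner, price = second_price_auction({"op_a": 7.0, "op_b": 9.5, "op_c": 4.0})
print(winner, price)  # op_b 7.0
```

Because the price the winner pays does not depend on its own bid, bidding one's true valuation is a dominant strategy. This is the strategy-proofness property the abstract refers to.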
