Deep Joint CSI Feedback and Multiuser Precoding for MIMO OFDM Systems

Apr 25, 2024 · Yiran Guo, Wei Chen, Jialong Xu, Lun Li, Bo Ai

The design of precoding plays a crucial role in achieving a high downlink sum-rate in multiuser multiple-input multiple-output (MIMO) orthogonal frequency-division multiplexing (OFDM) systems. In this correspondence, we propose a deep learning based joint CSI feedback and multiuser precoding method in frequency division duplex systems, aiming at maximizing the downlink sum-rate performance in an end-to-end manner. Specifically, the eigenvectors of the CSI matrix are compressed using deep joint source-channel coding techniques. This compression method enhances the resilience of the feedback CSI information against degradation in the feedback channel. A joint multiuser precoding module and a power allocation module are designed to adjust the precoding direction and the precoding power for users based on the feedback CSI information. Experimental results demonstrate that the downlink sum-rate can be significantly improved by using the proposed method, especially in scenarios with low signal-to-noise ratio and low feedback overhead.

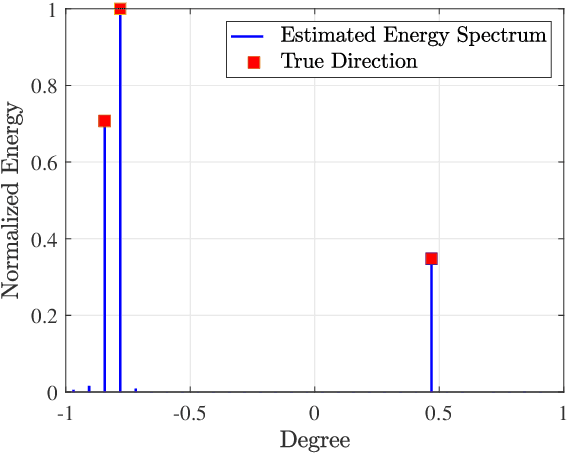

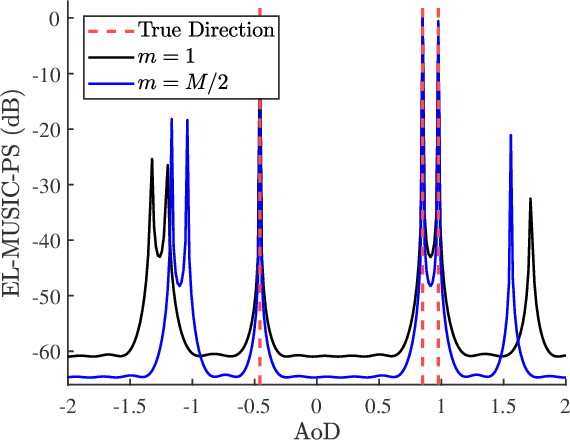

Generative Artificial Intelligence Assisted Wireless Sensing: Human Flow Detection in Practical Communication Environments

Apr 22, 2024 · Jiacheng Wang, Hongyang Du, Dusit Niyato, Zehui Xiong, Jiawen Kang, Bo Ai, Zhu Han, Dong In Kim

Groundbreaking applications such as ChatGPT have heightened research interest in generative artificial intelligence (GAI). Essentially, GAI excels not only in content generation but also in signal processing, offering support for wireless sensing. Hence, we introduce a novel GAI-assisted human flow detection system (G-HFD). Specifically, G-HFD first uses channel state information (CSI) to estimate the velocity and acceleration of propagation path length change of the human-induced reflection (HIR). Then, given the strong inference ability of the diffusion model, we propose a unified weighted conditional diffusion model (UW-CDM) to denoise the estimation results, enabling the detection of the number of targets. Next, we use the CSI obtained by a uniform linear array with wavelength spacing to estimate the HIR's time of flight and direction of arrival (DoA). In this process, UW-CDM solves the problem of ambiguous DoA spectrum, ensuring accurate DoA estimation. Finally, through clustering, G-HFD determines the number of subflows and the number of targets in each subflow, i.e., the subflow size. An evaluation based on practical downlink communication signals shows that G-HFD's subflow size detection accuracy can reach 91%. This validates its effectiveness and underscores the significant potential of GAI in the context of wireless sensing.

Graph Neural Networks for Wireless Networks: Graph Representation, Architecture and Evaluation

Apr 18, 2024 · Yang Lu, Yuhang Li, Ruichen Zhang, Wei Chen, Bo Ai, Dusit Niyato

Graph neural networks (GNNs) have been regarded as the basic model to facilitate deep learning (DL) to revolutionize resource allocation in wireless networks. GNN-based models are shown to be able to learn the structural information about graphs representing the wireless networks to adapt to the time-varying channel state information and dynamics of network topology. This article aims to provide a comprehensive overview of applying GNNs to optimize wireless networks by answering three fundamental questions, i.e., how to input the wireless network data into GNNs, how to improve the performance of GNNs, and how to evaluate GNNs. In particular, two graph representations are given to transform wireless network parameters into graph-structured data. Then, we focus on the architecture design of the GNN-based models by introducing basic message passing as well as model improvement methods, including the multi-head attention mechanism and residual structures. Finally, we give task-oriented evaluation metrics for DL-enabled wireless resource allocation. We also highlight certain challenges and potential research directions for the application of GNNs in wireless networks.

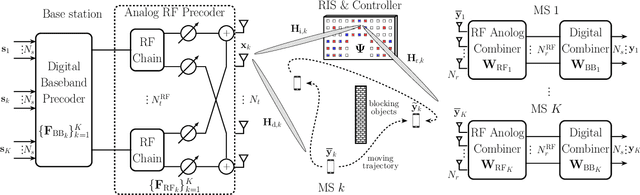

AI-Empowered RIS-Assisted Networks: CV-Enabled RIS Selection and DNN-Enabled Transmission

Apr 18, 2024 · Conggang Hu, Yang Lu, Hongyang Du, Mi Yang, Bo Ai, Dusit Niyato

This paper investigates artificial intelligence (AI) empowered schemes for reconfigurable intelligent surface (RIS) assisted networks from the perspective of fast implementation. We formulate a weighted sum-rate maximization problem for a multi-RIS-assisted network. To avoid the huge channel estimation overhead caused by activating all RISs, we propose a computer vision (CV) enabled RIS selection scheme based on a single shot multi-box detector. To realize real-time resource allocation, a deep neural network (DNN) enabled transmit design is developed to learn the optimal mapping from channel information to transmit beamformers and the phase shift matrix. Numerical results illustrate that the CV module is able to select the RIS with the best propagation condition. The well-trained DNN achieves sum-rate performance similar to that of the existing alternative optimization method but with a much shorter inference time.

Building Semantic Communication System via Molecules: An End-to-End Training Approach

Apr 15, 2024 · Yukun Cheng, Wei Chen, Bo Ai

The concept of semantic communication provides a novel approach for applications in scenarios with limited communication resources. In this paper, we propose an end-to-end (E2E) semantic molecular communication system, aiming to enhance the efficiency of molecular communication systems by reducing the transmitted information. Specifically, following the joint source channel coding paradigm, the network is designed to encode the task-relevant information into the concentration of the information molecules, which is robust to the degradation of the molecular communication channel. Furthermore, we propose a channel network to enable the E2E learning over the non-differentiable molecular channel. Experimental results demonstrate the superior performance of the semantic molecular communication system over the conventional methods in classification tasks.

Generative AI Agent for Next-Generation MIMO Design: Fundamentals, Challenges, and Vision

Apr 13, 2024 · Zhe Wang, Jiayi Zhang, Hongyang Du, Ruichen Zhang, Dusit Niyato, Bo Ai, Khaled B. Letaief

Next-generation multiple input multiple output (MIMO) is expected to be intelligent and scalable. In this paper, we study generative artificial intelligence (AI) agent-enabled next-generation MIMO design. Firstly, we provide an overview of the development, fundamentals, and challenges of next-generation MIMO. Then, we propose the concept of the generative AI agent, which is capable of generating tailored and specialized content with the aid of a large language model (LLM) and retrieval augmented generation (RAG). Next, we comprehensively discuss the features and advantages of the generative AI agent framework. More importantly, to tackle existing challenges of next-generation MIMO, we discuss generative AI agent-enabled next-generation MIMO design from the perspectives of performance analysis, signal processing, and resource allocation. Furthermore, we present two compelling case studies that demonstrate the effectiveness of leveraging the generative AI agent for performance analysis in complex configuration scenarios. These examples highlight how the integration of generative AI agents can significantly enhance the analysis and design of next-generation MIMO systems. Finally, we discuss important potential future research directions.

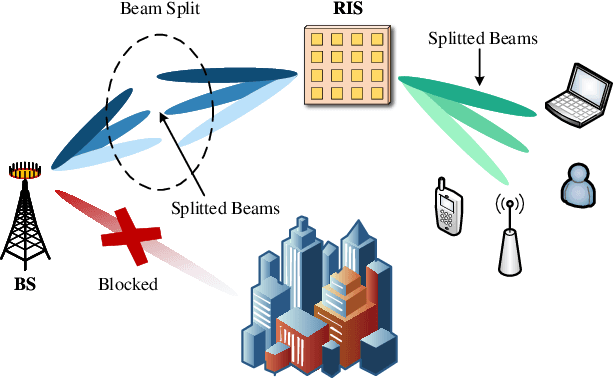

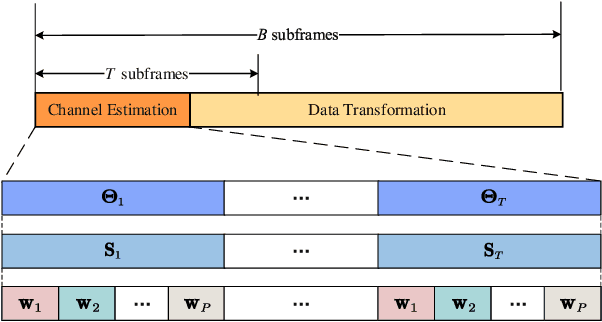

Low Complexity Channel Estimation for RIS-Assisted THz Systems with Beam Split

Mar 05, 2024 · Xin Su, Ruisi He, Peng Zhang, Bo Ai

To support extremely high data rates, reconfigurable intelligent surface (RIS)-assisted terahertz (THz) communication is considered to be a promising technology for future sixth-generation networks. However, obtaining channel state information (CSI) faces significant challenges, because THz systems typically employ a hybrid beamforming architecture and the passive RIS lacks the capability to process pilot signals. To accurately estimate the cascaded channel, we propose a novel low-complexity channel estimation scheme, which includes three steps. Specifically, we first estimate the full CSI within a small subset of subcarriers (SCs). Then, we acquire angular information at the base station and the RIS based on the full CSI. Finally, we derive spatial directions and recover the full CSI for the remaining SCs. Theoretical analysis and simulation results demonstrate that the proposed scheme achieves superior performance in terms of normalized mean-square error and exhibits lower computational complexity compared with existing algorithms.
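As a reading aid, the normalized mean-square error named in this abstract is commonly computed as the squared estimation error divided by the energy of the true channel. The sketch below follows that common definition only; the function names `nmse` and `nmse_db` are hypothetical, and the paper's exact averaging over subcarriers or trials is not stated in the abstract.

```python
import math

def nmse(h_true, h_est):
    """NMSE = sum |h_true - h_est|^2 / sum |h_true|^2 (common definition, assumed here)."""
    err = sum(abs(a - b) ** 2 for a, b in zip(h_true, h_est))
    ref = sum(abs(a) ** 2 for a in h_true)
    return err / ref

def nmse_db(h_true, h_est):
    """NMSE expressed in decibels, as it is usually plotted."""
    return 10.0 * math.log10(nmse(h_true, h_est))

# Illustrative complex channel coefficients (made-up values, not from the paper).
h_true = [2 + 0j, 0 + 2j]
h_est = [1 + 0j, 0 + 2j]
print(nmse(h_true, h_est), nmse_db(h_true, h_est))
```

A lower (more negative in dB) value means the estimate is closer to the true channel.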

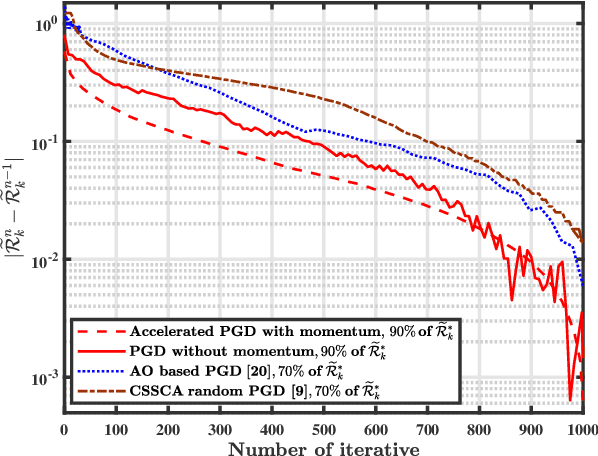

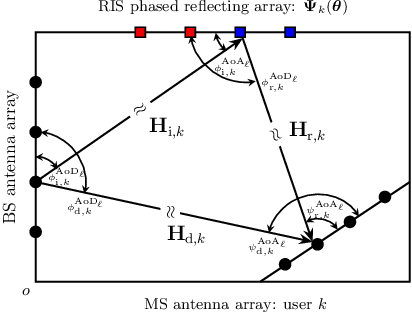

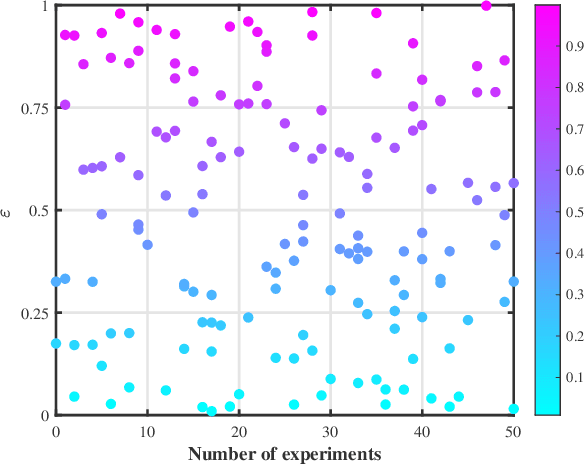

Blockage-Aware Robust Beamforming in RIS-Aided Mobile Millimeter Wave MIMO Systems

Mar 02, 2024 · Yan Yang, Shuping Dang, Miaowen Wen, Bo Ai, Rose Qingyang Hu

Millimeter wave (mmWave) communications are sensitive to blockage over radio propagation paths. The emerging paradigm of reconfigurable intelligent surface (RIS) has the potential to overcome this issue by its ability to arbitrarily reflect the incident signals toward desired directions. This paper proposes a Neyman-Pearson (NP) criterion-based blockage-aware algorithm to improve communication resilience against blockage in mobile mmWave multiple input multiple output (MIMO) systems. By virtue of this pragmatic blockage-aware technique, we further propose an outage-constrained beamforming design for downlink mmWave MIMO transmission to achieve outage probability minimization and achievable rate maximization. To minimize the outage probability, a robust RIS beamformer with variant beamwidth is designed to combat uncertain channel state information (CSI). For the rate maximization problem, an accelerated projected gradient descent (PGD) algorithm is developed to solve the computational challenge of high-dimensional RIS phase-shift matrix (PSM) optimization. Particularly, we leverage a subspace constraint to reduce the scope of the projection operation and formulate a new Nesterov momentum acceleration scheme to speed up the convergence process of PGD. Extensive experiments confirm the effectiveness of the proposed blockage-aware approach, and the proposed accelerated PGD algorithm outperforms a number of representative baseline algorithms in terms of the achievable rate.
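To make the idea of projected gradient descent with Nesterov momentum under a unit-modulus phase-shift constraint concrete, here is a generic toy sketch. It is not the algorithm proposed in the paper: the objective |h^H θ|², the momentum schedule (k−1)/(k+2), the step size, and all function names are assumptions chosen for illustration, and the paper's subspace constraint is not modeled.

```python
def project_unit_modulus(v):
    """Project each entry onto the unit circle (unit-modulus phase-shift constraint)."""
    return [x / abs(x) if abs(x) > 0 else 1 + 0j for x in v]

def objective(h, theta):
    """Toy received power |h^H theta|^2 (assumed objective, not the paper's rate)."""
    c = sum(hi.conjugate() * ti for hi, ti in zip(h, theta))
    return abs(c) ** 2

def accelerated_pgd(h, theta0, step=0.5, iters=200):
    """Projected gradient ascent with a standard Nesterov extrapolation step."""
    theta_prev = list(theta0)
    theta = list(theta0)
    for k in range(1, iters + 1):
        beta = (k - 1) / (k + 2)  # common momentum schedule (assumption)
        # Extrapolated point built from the last two iterates.
        y = [t + beta * (t - tp) for t, tp in zip(theta, theta_prev)]
        # Gradient of |h^H y|^2 with respect to conj(y) is h * (h^H y).
        c = sum(hi.conjugate() * yi for hi, yi in zip(h, y))
        grad = [hi * c for hi in h]
        theta_prev = theta
        # Gradient step at the extrapolated point, then projection.
        theta = project_unit_modulus([yi + step * gi for yi, gi in zip(y, grad)])
    return theta

# Illustrative channel vector and all-ones starting phases (made-up values).
h = [1 + 1j, 0.5 - 2j, -1.5 + 0.3j, 0.2 + 0.9j]
theta0 = [1 + 0j] * len(h)
theta = accelerated_pgd(h, theta0)
print(objective(h, theta0), objective(h, theta))
```

Every iterate stays feasible because the projection is applied after each gradient step; the momentum term only changes the point at which the gradient is evaluated.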

A Cluster-Based Statistical Channel Model for Integrated Sensing and Communication Channels

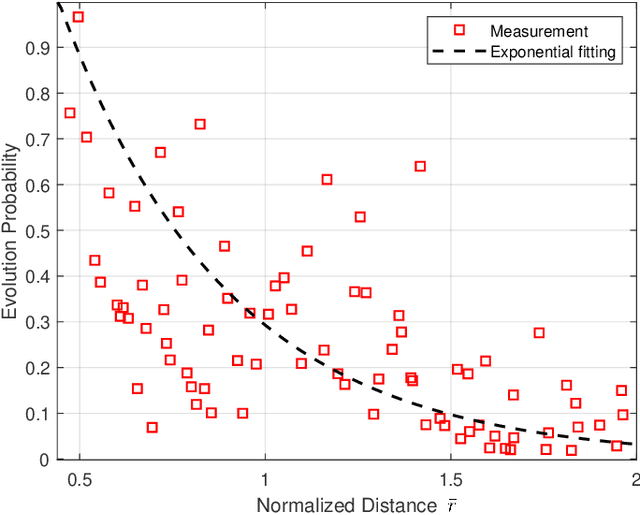

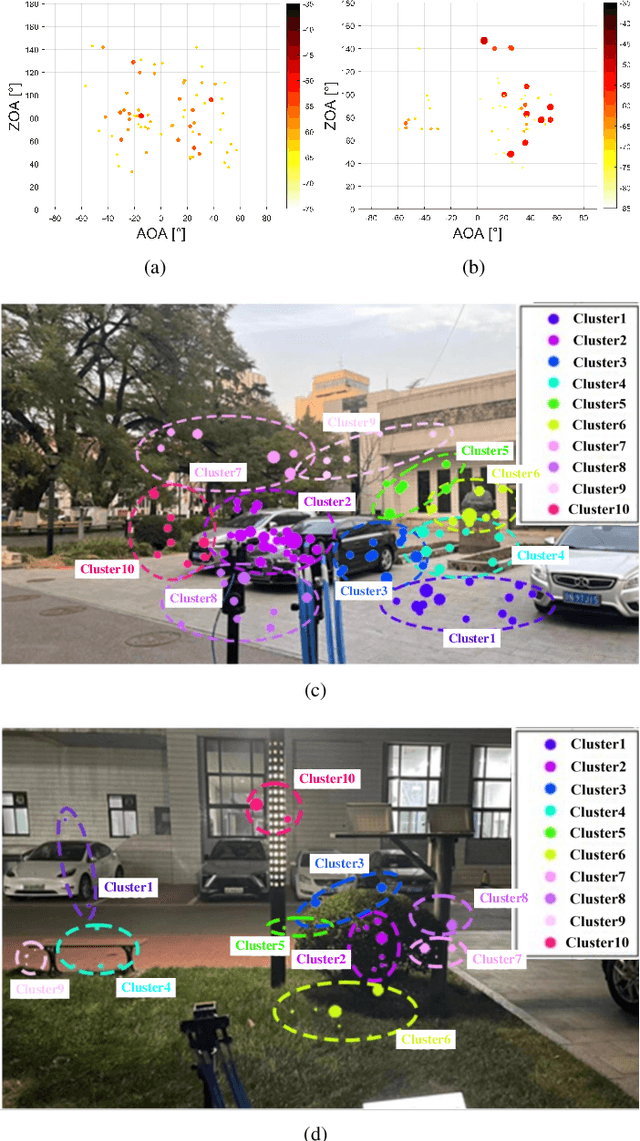

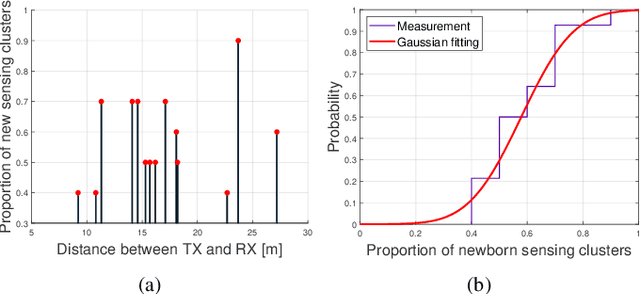

Mar 01, 2024 · Zhengyu Zhang, Ruisi He, Bo Ai, Mi Yang, Yong Niu, Zhangdui Zhong, Yujian Li, Xuejian Zhang, Jing Li

The emerging 6G network envisions integrated sensing and communication (ISAC) as a promising solution to meet the growing demand for native perception ability. To optimize and evaluate ISAC systems and techniques, it is crucial to have an accurate and realistic wireless channel model. However, some important features of ISAC channels have not been well characterized. For example, most existing ISAC channel models consider communication channels and sensing channels independently, ignoring their correlation within the same environment. Moreover, sensing channels have not been well modeled in the existing standard-level channel models. Therefore, to better model ISAC channels, a cluster-based statistical channel model is proposed in this paper, which is based on measurements conducted at 28 GHz. The proposed model introduces a new framework based on the 3GPP standard, which includes communication clusters and sensing clusters. Clustering and tracking algorithms are used to extract and analyze ISAC channel characteristics. Furthermore, some special sensing cluster structures, such as the shared sensing cluster and the newborn sensing cluster, are defined to model the correlation and difference between communication and sensing channels. Finally, the accuracy of the proposed model is validated based on measurements and simulations.

Characterization of Wireless Channel Semantics: A New Paradigm

Mar 01, 2024 · Zhengyu Zhang, Ruisi He, Mi Yang, Xuejian Zhang, Ziyi Qi, Yuan Yuan, Bo Ai

Recently, deep learning enabled semantic communications have been developed to understand transmission content at the semantic level, realizing effective and accurate information transfer. Toward the vision of sixth generation (6G) networks, wireless devices are expected to have native perception and intelligent capabilities, which associate the wireless channel with its surrounding environment, from the physical propagation dimension to the semantic information dimension. Inspired by these, we aim to provide a new paradigm for the wireless channel at the semantic level. A channel semantic model and its characterization framework are proposed in this paper. Specifically, the channel semantic model consists of status semantics, behavior semantics, and event semantics. Based on actual channel measurements at 28 GHz, as well as multi-mode data, example results of channel semantic characterization are provided and analyzed, which exhibit reasonable and interpretable semantic information.
